Create an empty array column of a certain type in a PySpark DataFrame

Solution 1

This is one way to do it:

>>> import pyspark.sql.functions as F

>>> myList = [('Alice', 1)]

>>> df = spark.createDataFrame(myList)

>>> df.schema

StructType(List(StructField(_1,StringType,true),StructField(_2,LongType,true)))

>>> df = df.withColumn('temp', F.array()).withColumn("newCol", F.array("temp")).drop("temp")

>>> df.schema

StructType(List(StructField(_1,StringType,true),StructField(_2,LongType,true),StructField(newCol,ArrayType(ArrayType(StringType,false),false),false)))

>>> df

DataFrame[_1: string, _2: bigint, newCol: array<array<string>>]

>>> df.collect()

[Row(_1=u'Alice', _2=1, newCol=[[]])]

Solution 2

Another way to create an empty array-of-arrays column:

import pyspark.sql.functions as F

df = df.withColumn('newCol', F.array(F.array()))

Because F.array() defaults to an array of strings, the newCol column will have type ArrayType(ArrayType(StringType,false),false). If you need the inner array to hold a type other than string, you can cast the inner F.array() directly, as follows.

import pyspark.sql.functions as F

import pyspark.sql.types as T

int_array_type = T.ArrayType(T.IntegerType()) # "array<integer>" also works

df = df.withColumn('newCol', F.array(F.array().cast(int_array_type)))

In this example, newCol will have type ArrayType(ArrayType(IntegerType,true),false).

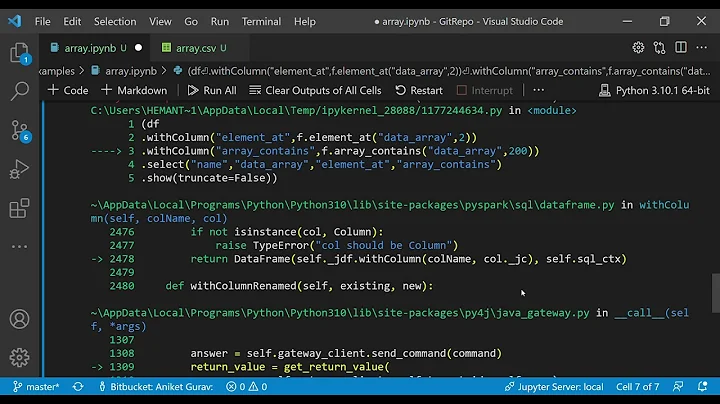

Related videos on Youtube

Comments

David Taub almost 2 years

I am trying to add a column containing an empty array of arrays of strings to a DataFrame, but I end up adding a column of arrays of strings.

I tried this:

import pyspark.sql.functions as F

df = df.withColumn('newCol', F.array([]))

How can I do this in pyspark?