Spark Scala DataFrame: explode a JSON array

Solution 1

You have to parse the JSON string into an array of JSON values and then call `explode` on the result. `explode` only accepts an array (or a map), not a string.

To do that (assuming Spark 2.0.*):

- If you know that all `Payment` values contain a JSON array of the same size (e.g. 2 in this case), you can hard-code extraction of the first and second elements, wrap them in an array and explode:

  ```scala
  import org.apache.spark.sql.functions._
  import spark.implicits._ // enables the $"..." column syntax

  val newDF = dataframe.withColumn("Payment", explode(array(
    get_json_object($"Payment", "$[0]"),
    get_json_object($"Payment", "$[1]")
  )))
  ```

- If you can't guarantee that every record holds a 2-element array, but you can guarantee a maximum length for these arrays, you can use this trick to parse elements up to the maximum size and then filter out the resulting nulls:

  ```scala
  import org.apache.spark.sql.functions._
  import spark.implicits._ // enables the $"..." column syntax

  val maxJsonParts = 3 // whatever that number is...
  // "$$" is a literal "$" in an s-string, so this yields the paths "$[0]", "$[1]", "$[2]"
  val jsonElements = (0 until maxJsonParts)
    .map(i => get_json_object($"Payment", s"$$[$i]"))

  val newDF = dataframe
    .withColumn("Payment", explode(array(jsonElements: _*)))
    .where(!isnull($"Payment")) // out-of-range indices produce nulls
  ```
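For reference, here is the maximum-length trick from the second bullet packaged as a self-contained program. It is a sketch, not part of the original answer: the object name `ExplodeJsonArrayFixedMax`, the helper `explodePayment`, the local `SparkSession` and the hard-coded column name `Payment` are illustrative assumptions, and the sample row is copied from the question.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{array, col, explode, get_json_object, isnull}

object ExplodeJsonArrayFixedMax {
  /** Explodes the JSON-array string in "Payment", assuming no array has more than maxJsonParts elements. */
  def explodePayment(df: DataFrame, maxJsonParts: Int): DataFrame = {
    // One extraction per possible index: "$[0]", "$[1]", ... ("$$" is a literal "$" in an s-string)
    val jsonElements = (0 until maxJsonParts).map(i => get_json_object(col("Payment"), s"$$[$i]"))
    df
      .withColumn("Payment", explode(array(jsonElements: _*)))
      // Indices past the end of a shorter array yield null, so drop those rows
      .where(!isnull(col("Payment")))
  }

  def main(args: Array[String]): Unit = {
    // Local session for illustration only; inside an existing job, reuse your own session
    val spark = SparkSession.builder().master("local[*]").appName("explode-fixed-max").getOrCreate()
    import spark.implicits._

    // Sample row copied from the question: Payment is a JSON array stored as a plain string
    val dataframe = Seq(
      (1, "James", """[ {"@id": 1, "currency":"GBP"},{"@id": 2, "currency": "USD"} ]""")
    ).toDF("id", "Name", "Payment")

    explodePayment(dataframe, maxJsonParts = 3).show(false)
    spark.stop()
  }
}
```

Choosing `maxJsonParts` too small silently drops the trailing elements of longer arrays, so the bound has to be a true upper limit.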

Solution 2

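```scala
import org.apache.spark.sql.functions._
import org.apache.spark.sql.types._
import spark.implicits._ // enables the $"..." column syntax

// Needs a Spark release newer than 2.0: from_json was added in 2.1, and array schemas came later
val newDF = dataframe.withColumn("Payment",
  explode(
    from_json(
      get_json_object($"Payment", "$"), ArrayType(StringType) // "$" selects the whole JSON value
    )))
```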
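On newer Spark versions the `get_json_object` wrapper in Solution 2 is not strictly necessary, because `from_json` can parse the string column directly. The sketch below assumes Spark 3.x, where `from_json` accepts an `ArrayType(StringType)` schema and keeps each JSON object element as its raw JSON text; the object and helper names are hypothetical.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, explode, from_json}
import org.apache.spark.sql.types.{ArrayType, StringType}

object ExplodeJsonArrayFromJson {
  /** Parses "Payment" as array<string> (assumes Spark 3.x) and emits one row per element. */
  def explodePayment(df: DataFrame): DataFrame =
    df.withColumn("Payment", explode(from_json(col("Payment"), ArrayType(StringType))))

  def main(args: Array[String]): Unit = {
    // Local session for illustration only
    val spark = SparkSession.builder().master("local[*]").appName("explode-from-json").getOrCreate()
    import spark.implicits._

    // Sample row copied from the question
    val dataframe = Seq(
      (1, "James", """[ {"@id": 1, "currency":"GBP"},{"@id": 2, "currency": "USD"} ]""")
    ).toDF("id", "Name", "Payment")

    // Unlike the get_json_object trick, no upper bound on the array length is needed
    explodePayment(dataframe).show(false)
    spark.stop()
  }
}
```

`explode` drops rows whose parsed value is null or an empty array; `explode_outer` keeps such rows with a null `Payment` instead.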

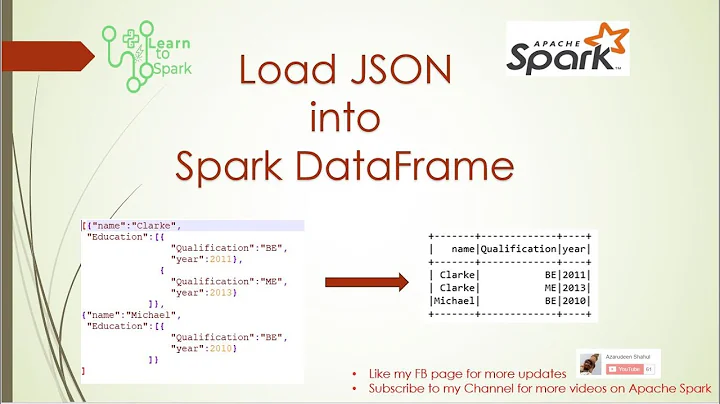

Related videos on Youtube

Richard

Updated on June 04, 2022

Comments

-

Richard almost 2 years

Let's say I have a dataframe which looks like this:

```
+---+-----+--------------------------------------------------------------+
|id |Name |Payment                                                       |
+---+-----+--------------------------------------------------------------+
|1  |James|[ {"@id": 1, "currency":"GBP"},{"@id": 2, "currency": "USD"} ]|
+---+-----+--------------------------------------------------------------+
```

And the schema is:

```
root
 |-- id: integer (nullable = true)
 |-- Name: string (nullable = true)
 |-- Payment: string (nullable = true)
```

How can I explode the above JSON array into the following?

```
+---+-----+---------------------------+
|id |Name |Payment                    |
+---+-----+---------------------------+
|1  |James|{"@id":1, "currency":"GBP"}|
|1  |James|{"@id":2, "currency":"USD"}|
+---+-----+---------------------------+
```

I've tried using `explode` as shown below, but it doesn't work: it fails with an error saying it cannot explode a string column and expects either an array or a map. That makes sense, since the schema says `Payment` is a string rather than an array or map, but I'm not sure how to convert it into an appropriate format.

```scala
val newDF = dataframe.withColumn("nestedPayment", explode(dataframe.col("Payment")))
```

Any help is greatly appreciated!

-

Paul over 6 years

Is there a way to do this with a while loop? It seems like it would be more efficient.

-

Tzach Zohar over 6 years

The supposed performance improvement achieved by a while loop would be so small it's probably unmeasurable. This being a Spark application, one can assume that runtime is dominated by the actual DataFrame operations and not by the driver-side code that builds them. Such "premature optimizations" only make code harder to read.

-

Mantovani over 5 years

Hello, if I don't know the max length of my array, how can I do something like `val jsonElements = (0 until arrayLength).map(i => get_json_object($"Payment", s"$$[$i]"))`?
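One way to handle an unknown maximum is to compute it from the data in a separate pass and then reuse the same trick. This sketch assumes Spark 3.1 or later, where `json_array_length` is available as a SQL built-in (called here through `expr`). The extra aggregation runs as its own Spark job, so it costs an additional scan of the data. `ExplodeJsonArrayUnknownMax`, its helpers and the second sample row are hypothetical.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{array, col, explode, expr, get_json_object, isnull, max}

object ExplodeJsonArrayUnknownMax {
  /** First pass: length of the longest array in "Payment" (json_array_length needs Spark 3.1+). */
  def maxArrayLength(df: DataFrame): Int = {
    val row = df.agg(max(expr("json_array_length(Payment)"))).head()
    // max() returns null when every value is null (e.g. an empty DataFrame)
    if (row.isNullAt(0)) 0 else row.getInt(0)
  }

  /** Second pass: the fixed-maximum trick, with the bound taken from the data. */
  def explodePayment(df: DataFrame): DataFrame = {
    // Keep at least one index so array(...) is never called with no arguments
    val maxJsonParts = math.max(maxArrayLength(df), 1)
    val jsonElements = (0 until maxJsonParts).map(i => get_json_object(col("Payment"), s"$$[$i]"))
    df
      .withColumn("Payment", explode(array(jsonElements: _*)))
      // Shorter arrays produce nulls for the missing indices; drop them
      .where(!isnull(col("Payment")))
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("explode-unknown-max").getOrCreate()
    import spark.implicits._

    // First row from the question; the second row is a hypothetical 3-element example
    val dataframe = Seq(
      (1, "James", """[ {"@id": 1, "currency":"GBP"},{"@id": 2, "currency": "USD"} ]"""),
      (2, "Anna", """[{"@id": 3, "currency":"EUR"},{"@id": 4, "currency":"JPY"},{"@id": 5, "currency":"CHF"}]""")
    ).toDF("id", "Name", "Payment")

    explodePayment(dataframe).show(false)
    spark.stop()
  }
}
```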

-

Kudakwashe Nyatsanza almost 4 years

Instead of just downvoting, please leave a comment so I know what's wrong with my answer. This is my first post and it's quite discouraging when all you want to do is help. Thank you.

-

Sugyan sahu over 2 years

@TzachZohar How can we calculate the size of the JSON array using `get_json_object()`? I tried `get_json_object(col("col_name"), "$.length()")`, but it didn't work and returned null.
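`get_json_object` only supports a small JSONPath subset (keys, indices and wildcards), so a function such as `length()` cannot be used in the path. Two alternatives are sketched below: the SQL built-in `json_array_length` (assumes Spark 3.1+), or parsing with `from_json` into `array<string>` and applying `size` (assumes Spark 3.x). The names `JsonArraySize`, `size_builtin` and `size_parsed` are hypothetical.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, expr, from_json, size}
import org.apache.spark.sql.types.{ArrayType, StringType}

object JsonArraySize {
  /** Adds two per-row element counts for the JSON array stored as text in "Payment". */
  def withPaymentSize(df: DataFrame): DataFrame =
    df
      // Option 1 (Spark 3.1+): SQL built-in counting the elements of the outermost JSON array
      .withColumn("size_builtin", expr("json_array_length(Payment)"))
      // Option 2: parse to array<string>, then take the array's size
      .withColumn("size_parsed", size(from_json(col("Payment"), ArrayType(StringType))))

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("json-array-size").getOrCreate()
    import spark.implicits._

    // Sample row copied from the question
    val dataframe = Seq(
      (1, "James", """[ {"@id": 1, "currency":"GBP"},{"@id": 2, "currency": "USD"} ]""")
    ).toDF("id", "Name", "Payment")

    withPaymentSize(dataframe).show(false)
    spark.stop()
  }
}
```

The per-row size can then feed `max` to obtain the bound needed by the `get_json_object` trick.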