How to find probability distribution and parameters for real data? (Python 3)

Solution 1

To the best of my knowledge, there is no automatic way of obtaining the distribution type and parameters of a sample (as inferring the distribution of a sample is a statistical problem by itself).

In my opinion, the best you can do is:

(for each attribute)

Try to fit each attribute to a reasonably large list of possible distributions (e.g. see Fitting empirical distribution to theoretical ones with Scipy (Python)? for an example with Scipy)

Evaluate all your fits and pick the best one. This can be done by performing a Kolmogorov-Smirnov test between your sample and each of the fitted distributions (Scipy has an implementation of this too), and picking the one that minimises D, the test statistic (i.e. the largest absolute difference between the empirical CDF of the sample and the CDF of the fit).
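The two steps above could be sketched roughly as follows. This is only a minimal illustration, not a definitive implementation: the helper name rank_by_ks, the candidate list and the synthetic gamma sample are all made up for the example. Also keep in mind that the p-values from a KS test run against parameters estimated from the same sample tend to be optimistic, so they are better used for ranking than as a formal test.

```python
import numpy as np
import scipy.stats as st


def rank_by_ks(data, dist_names):
    """Fit each candidate by maximum likelihood and rank by the KS statistic D.

    Hypothetical helper, written for illustration only.
    """
    results = []
    for name in dist_names:
        dist = getattr(st, name)
        params = dist.fit(data)  # shape parameters (if any), then loc, scale
        d_stat, p_value = st.kstest(data, name, args=params)
        results.append((name, d_stat, p_value, params))
    # Smallest D first: the fitted CDF closest to the empirical CDF
    return sorted(results, key=lambda r: r[1])


# Synthetic, right-skewed placeholder data (an assumption, not the real data)
rng = np.random.default_rng(0)
sample = rng.gamma(shape=2.0, scale=3.0, size=500)

ranking = rank_by_ks(sample, ["norm", "gamma", "lognorm", "expon"])
for name, d_stat, p_value, _ in ranking:
    print(f"{name:8s} D={d_stat:.4f} p={p_value:.4f}")
```

The first entry of ranking is the candidate with the smallest D; the candidate list should be adapted to what is plausible for your data.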

Bonus: It would make sense, since you are effectively building a model of each variable when you pick a fit for it. The goodness of your prediction would still depend on the quality of your data and on the distributions you use for fitting. You are building a model, after all.

Solution 2

Use this approach:

    import scipy.stats as st

    def get_best_distribution(data):
        dist_names = ["norm", "exponweib", "weibull_max", "weibull_min", "pareto", "genextreme"]
        dist_results = []
        params = {}
        for dist_name in dist_names:
            dist = getattr(st, dist_name)
            param = dist.fit(data)
            params[dist_name] = param

            # Applying the Kolmogorov-Smirnov test
            D, p = st.kstest(data, dist_name, args=param)
            print("p value for " + dist_name + " = " + str(p))
            dist_results.append((dist_name, p))

        # select the best fitted distribution
        best_dist, best_p = max(dist_results, key=lambda item: item[1])

        # store the name of the best fit and its p value
        print("Best fitting distribution: " + str(best_dist))
        print("Best p value: " + str(best_p))
        print("Parameters for the best fit: " + str(params[best_dist]))

        return best_dist, best_p, params[best_dist]

Solution 3

You can use the following code to fit different distributions to your data by maximum likelihood:

    import matplotlib.pyplot as plt
    import scipy
    import scipy.stats

    dist_names = ['gamma', 'beta', 'rayleigh', 'norm', 'pareto']

    # y is your data (a 1-D array of observations)
    for dist_name in dist_names:
        dist = getattr(scipy.stats, dist_name)
        param = dist.fit(y)
        # param holds the fitted parameters of your distribution:
        # any shape parameters first, then location, then scale

You can see a sample snippet about how to use the parameters obtained here: Fitting empirical distribution to theoretical ones with Scipy (Python)?
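As a rough illustration of what can be done with the fitted parameters, here is a minimal sketch. The data is a synthetic placeholder and the choice of the gamma distribution is an assumption made for the example. It shows why fit returns three values for gamma (one shape parameter plus location and scale), and how to evaluate the fitted density or draw synthetic samples from it.

```python
import numpy as np
import scipy.stats as st

# Placeholder data standing in for your own sample (an assumption)
rng = np.random.default_rng(1)
y = rng.gamma(shape=2.0, scale=3.0, size=1000)

# fit returns the shape parameters (if any), followed by loc and scale
params = st.gamma.fit(y)
*shape_params, loc, scale = params

# "Freeze" the distribution with the fitted parameters
fitted = st.gamma(*shape_params, loc=loc, scale=scale)

# Evaluate the fitted density on a grid, e.g. to overlay it on a histogram
x = np.linspace(y.min(), y.max(), 200)
pdf_values = fitted.pdf(x)

# Draw synthetic data from the fitted distribution
synthetic = fitted.rvs(size=500, random_state=2)

print("fitted parameters:", params)
```

The number of values in params depends on the distribution: norm has no shape parameter (2 values), gamma has one (3 values), beta has two (4 values).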

Then, you can pick the distribution with the highest log-likelihood (there are also other criteria for choosing the "best" distribution, such as Bayesian posterior probability, AIC, BIC or BICc values, ...).
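A minimal sketch of that comparison, under some assumptions: the helper name information_criteria is made up, the sample is synthetic, and the number of free parameters k is taken to be the length of the tuple returned by fit (which is only right if no parameter was held fixed). AIC and BIC are computed from the maximised log-likelihood in the usual way.

```python
import numpy as np
import scipy.stats as st


def information_criteria(data, dist_names):
    """Return (name, log-likelihood, AIC, BIC) tuples sorted by AIC.

    Hypothetical helper, written for illustration only.
    """
    n = len(data)
    rows = []
    for name in dist_names:
        dist = getattr(st, name)
        params = dist.fit(data)
        log_lik = np.sum(dist.logpdf(data, *params))
        k = len(params)  # assumes every fitted parameter was free
        aic = 2 * k - 2 * log_lik
        bic = k * np.log(n) - 2 * log_lik
        rows.append((name, log_lik, aic, bic))
    return sorted(rows, key=lambda r: r[2])  # lowest AIC first


# Synthetic placeholder data (an assumption)
rng = np.random.default_rng(3)
y = rng.gamma(shape=2.0, scale=3.0, size=800)

table = information_criteria(y, ["gamma", "norm", "rayleigh", "lognorm"])
for name, log_lik, aic, bic in table:
    print(f"{name:9s} logL={log_lik:10.2f} AIC={aic:10.2f} BIC={bic:10.2f}")
```

Lower AIC or BIC is better. Unlike the raw log-likelihood, both penalise distributions with more parameters.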

For your bonus question, I think there is no generic answer. If your data set is large enough and was obtained under the same conditions as the real-world data, you can do it.

Solution 4

This code also works:

    import pandas as pd
    import numpy as np
    import scipy
    from scipy import stats

    # List the names of the statistical distributions that you would like to check.
    # Full list is here: https://docs.scipy.org/doc/scipy/reference/stats.html
    dist_names = ['weibull_min', 'norm', 'weibull_max', 'beta',
                  'invgauss', 'uniform', 'gamma', 'expon',
                  'lognorm', 'pearson3', 'triang']

    # Read your data and set y_std to the column that you want to fit.
    y_std = pd.read_csv('my_df.csv')
    y_std = y_std['column_A']

    #-------------------------------------------------
    chi_square_statistics = []
    size = len(y_std)

    # 20 equal-probability bins of the observed data (21 percentile edges)
    percentile_bins = np.linspace(0, 100, 21)
    percentile_cutoffs = np.percentile(y_std, percentile_bins)
    observed_frequency, bins = np.histogram(y_std, bins=percentile_cutoffs)
    cum_observed_frequency = np.cumsum(observed_frequency)

    # Loop through candidate distributions
    for distribution in dist_names:
        # Set up distribution and get fitted distribution parameters
        dist = getattr(scipy.stats, distribution)
        param = dist.fit(y_std)
        print("{}\n{}\n".format(dist, param))

        # Get expected counts in percentile bins:
        # cdf of fitted distribution across bins
        cdf_fitted = dist.cdf(percentile_cutoffs, *param)
        expected_frequency = []
        for i in range(len(percentile_bins) - 1):
            expected_cdf_area = cdf_fitted[i + 1] - cdf_fitted[i]
            expected_frequency.append(expected_cdf_area)

        # Chi-square statistic (computed here on the cumulative counts)
        expected_frequency = np.array(expected_frequency) * size
        cum_expected_frequency = np.cumsum(expected_frequency)
        ss = sum(((cum_expected_frequency - cum_observed_frequency) ** 2) / cum_observed_frequency)
        chi_square_statistics.append(ss)

    # Sort by minimum chi-square statistic
    results = pd.DataFrame()
    results['Distribution'] = dist_names
    results['chi_square'] = chi_square_statistics
    results.sort_values(['chi_square'], inplace=True)

    print('\nDistributions listed by goodness of fit:')
    print('............................................')
    print(results)
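Note that the statistic in the loop above is computed on cumulative counts and divided by the observed values, which is fine for ranking but is not the textbook Pearson statistic. For comparison, here is a minimal sketch of the conventional form, sum((observed - expected)^2 / expected), over the same kind of percentile bins. The helper name pearson_chi_square, the synthetic sample and the treatment of the two outer bins as open-ended are assumptions made for this example. The p-value is only approximate, because the parameters were estimated from the ungrouped data.

```python
import numpy as np
import scipy.stats as st


def pearson_chi_square(data, dist_name, n_bins=20):
    """Pearson chi-square statistic of a fitted distribution on percentile bins.

    Hypothetical helper, written for illustration only.
    """
    dist = getattr(st, dist_name)
    params = dist.fit(data)

    # n_bins equal-probability bins need n_bins + 1 percentile edges
    edges = np.percentile(data, np.linspace(0, 100, n_bins + 1))
    observed, _ = np.histogram(data, bins=edges)

    cdf = dist.cdf(edges, *params)
    # Treat the outer bins as open-ended so the expected counts sum to n
    cdf[0], cdf[-1] = 0.0, 1.0
    expected = len(data) * np.diff(cdf)

    stat = np.sum((observed - expected) ** 2 / expected)
    # Degrees of freedom: bins - 1 - number of fitted parameters (approximate)
    dof = n_bins - 1 - len(params)
    p_value = st.chi2.sf(stat, dof)
    return stat, p_value


# Synthetic placeholder data (an assumption)
rng = np.random.default_rng(4)
sample = rng.gamma(shape=2.0, scale=3.0, size=1000)

stat_gamma, p_gamma = pearson_chi_square(sample, "gamma")
stat_norm, p_norm = pearson_chi_square(sample, "norm")
print("gamma:", stat_gamma, p_gamma)
print("norm: ", stat_norm, p_norm)
```

As with the loop above, a smaller statistic means a closer fit.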

Solution 5

On a similar question (see here), you may be interested in @Michel_Baudin's answer. His code assesses around 40 different distributions available in the OpenTURNS library and chooses the best one according to the BIC criterion. It looks something like this:

    import openturns as ot

    sample = ot.Sample([[x] for x in your_data_list])
    tested_factories = ot.DistributionFactory.GetContinuousUniVariateFactories()
    best_model, best_bic = ot.FittingTest.BestModelBIC(sample, tested_factories)

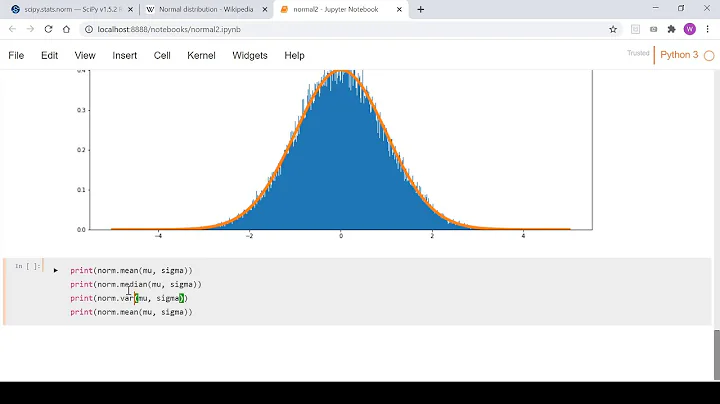


O.rka

I am an academic researcher studying machine-learning and microorganisms

Updated on July 09, 2022

Comments

O.rka almost 2 years

I have a dataset from sklearn and I plotted the distribution of the load_diabetes.target data (i.e. the values of the regression that the load_diabetes.data are used to predict). I used this because it has the fewest number of variables/attributes of the regression sklearn.datasets.

Using Python 3, how can I get the distribution type and parameters of the distribution this most closely resembles?

All I know is that the target values are all positive and skewed (positive skew/right skew)... Is there a way in Python to provide a few distributions and then get the best fit for the target data/vector? Or, to actually suggest a fit based on the data that's given? That would be really useful for people who have theoretical statistical knowledge but little experience with applying it to "real data".

Bonus: Would it make sense to use this type of approach to figure out what your posterior distribution would be with "real data"? If no, why not?

    from sklearn.datasets import load_diabetes
    import matplotlib.pyplot as plt
    import seaborn as sns; sns.set()
    import pandas as pd

    # Get Data
    data = load_diabetes()
    X, y_ = data.data, data.target

    # Organize Data
    SR_y = pd.Series(y_, name="y_ (Target Vector Distribution)")

    # Plot Data
    fig, ax = plt.subplots()
    sns.distplot(SR_y, bins=25, color="g", ax=ax)
    plt.show()

- tmthydvnprt almost 8 years: Look here if you need to view all the scipy.stats distributions.

- shaifali Gupta over 6 years: I implemented the above code and param displayed just 3 values. Shouldn't they be equal to 5?

- someone_somewhere about 5 years: Could you please explain this approach?

- Kivo360 almost 5 years: It is a complete version of the code in the answer above. They created a list of items for all of the possible distributions that could fit the data. Then they create a hypothesis using the p-score to determine how closely that distribution matches the data. Whatever has the highest p-score is considered the most accurate. That's because the higher p-score means the hypothesis is closest to reality.

- Martian over 3 years: But how to differentiate between a single-modal and a multimodal distribution? E.g., here (imgur.com/a/NZKxxRv) I could see 3 modes of normal distribution or just a single one, if one is bold enough.

- Victor Maricato over 3 years: In this approach you are looking for max P as the best one. Shouldn't it be the min(p) to be selected?

- DukeLover about 3 years: Hi, thanks for this approach. After getting the parameters of the distribution, how can I generate synthetic data from the same distribution? Any idea?

- KasperGL almost 3 years: This article outlines how to use the Fitter library to automatically identify which of scipy's distributions best fit the data: towardsdatascience.com/…

- JeeyCi about 2 years: Additionally, the Expectation-Maximization (EM) algorithm is used instead of conventional maximum likelihood estimation (MLE) when the dataset is incomplete, i.e. when some unobserved or hidden variables are present (these are referred to as latent variables).