How to implement sort in hadoop?

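You can probably do this (I'm assuming you are using Java here).

From map(), emit each value as the key and each key as the value, like this:

```java
// Input pairs (1, 24), (3, 4), (4, 12), (5, 23) are emitted swapped as (value, key)
context.write(new LongWritable(24), new LongWritable(1));
context.write(new LongWritable(4), new LongWritable(3));
context.write(new LongWritable(12), new LongWritable(4));
context.write(new LongWritable(23), new LongWritable(5));
```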
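To make the swap concrete, here is a minimal plain-Java sketch of the map step applied to the sample input from the question. It uses no Hadoop classes; `MapSwapSketch` and `mapSwap` are hypothetical names for illustration. Each `{key, value}` record becomes `{value, key}`, which are exactly the pairs written above.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration only (not part of Hadoop):
// simulates the map step in plain Java.
class MapSwapSketch {

    // Each record is {originalKey, originalValue}; emit {originalValue, originalKey}
    // so the number we want to sort on becomes the key.
    static List<int[]> mapSwap(int[][] records) {
        List<int[]> emitted = new ArrayList<>();
        for (int[] record : records) {
            emitted.add(new int[] {record[1], record[0]});
        }
        return emitted;
    }

    public static void main(String[] args) {
        int[][] input = {{1, 24}, {3, 4}, {4, 12}, {5, 23}};
        for (int[] pair : mapSwap(input)) {
            System.out.println(pair[0] + "\t" + pair[1]);
        }
    }
}
```

In a real new-API `Mapper`, the same swap is just `context.write(value, key)` inside `map()`, provided the map output key and value types are declared to match.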

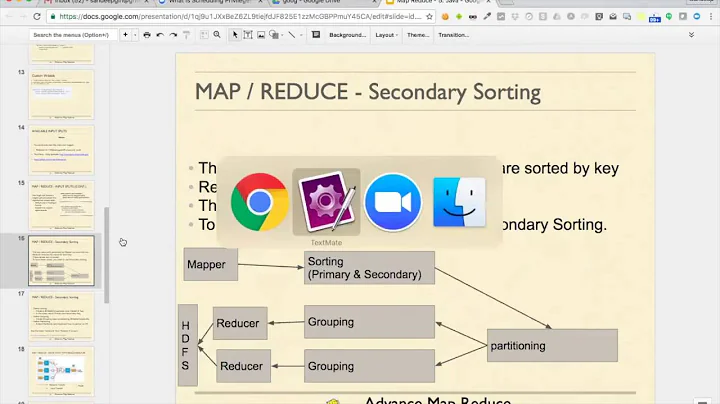

So, all the values that need to be sorted should be the keys in your MapReduce job. By default, Hadoop sorts keys in ascending order.
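A rough plain-Java model of that default ordering, applied to the swapped pairs emitted above: only the key decides the order, and each value travels with its key. `DefaultSortSketch` is a hypothetical name, and this models the result of the sort, not how Hadoop's sort is actually implemented.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical helper for illustration only (not part of Hadoop):
// models the default ascending sort on the map output key.
class DefaultSortSketch {

    // Each pair is {key, value}; sort by key only, ascending.
    static List<int[]> sortByKeyAscending(List<int[]> pairs) {
        List<int[]> sorted = new ArrayList<>(pairs);
        sorted.sort(Comparator.comparingInt(p -> p[0]));
        return sorted;
    }

    public static void main(String[] args) {
        List<int[]> mapped = List.of(
                new int[] {24, 1}, new int[] {4, 3}, new int[] {12, 4}, new int[] {23, 5});
        for (int[] p : sortByKeyAscending(mapped)) {
            System.out.println(p[0] + "\t" + p[1]); // keys come out as 4, 12, 23, 24
        }
    }
}
```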

Hence, to sort in descending order (with LongWritable keys), either do this:

```java
job.setSortComparatorClass(LongWritable.DecreasingComparator.class);
```

Or set a custom descending sort comparator in your job, something like this:

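```java
// Driver file imports:
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.WritableComparable;
import org.apache.hadoop.io.WritableComparator;

// Sorts LongWritable keys in descending order.
// Register it with: job.setSortComparatorClass(DescendingKeyComparator.class);
public static class DescendingKeyComparator extends WritableComparator {

    protected DescendingKeyComparator() {
        // The key class must match the map output key type
        super(LongWritable.class, true);
    }

    @SuppressWarnings("rawtypes")
    @Override
    public int compare(WritableComparable w1, WritableComparable w2) {
        LongWritable key1 = (LongWritable) w1;
        LongWritable key2 = (LongWritable) w2;
        // Reverse the natural (ascending) order
        return -1 * key1.compareTo(key2);
    }
}
```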
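The comparator above simply reverses the natural order. A plain-Java analogue is sketched below (hypothetical `DescendingOrderSketch`, using `java.util.Comparator` rather than Hadoop's classes). It swaps the arguments instead of multiplying by -1, which sidesteps the one case where negation fails: a comparator that returns `Integer.MIN_VALUE`, whose negation is still `Integer.MIN_VALUE`.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical helper for illustration only (not part of Hadoop):
// reverses natural order by swapping arguments instead of negating.
class DescendingOrderSketch {

    // compare(b, a) instead of -compare(a, b): no overflow edge case.
    static final Comparator<Long> DESCENDING = (a, b) -> Long.compare(b, a);

    static List<Long> sortDescending(List<Long> keys) {
        List<Long> sorted = new ArrayList<>(keys);
        sorted.sort(DESCENDING);
        return sorted;
    }

    public static void main(String[] args) {
        System.out.println(sortDescending(List.of(24L, 4L, 12L, 23L))); // [24, 23, 12, 4]
    }
}
```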

The shuffle and sort phase in Hadoop will then sort your keys in descending order: 24, 23, 12, 4.
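Putting the pieces together for the question's data, the sketch below simulates a single-reducer job in plain Java: the map step swaps each pair, the shuffle sorts and groups by the new key in descending order, and the reduce step swaps each pair back so the output shows the original keys next to their sorted values. `SortPipelineSketch` is hypothetical, and the `TreeMap` with a reversed comparator only stands in for Hadoop's sort-and-group; it is not how the framework implements it. Because a reducer receives every original key that shares the same value, duplicate values are kept rather than overwritten.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical helper for illustration only (not part of Hadoop):
// map (swap) -> sort/group descending -> reduce (swap back), one reducer.
class SortPipelineSketch {

    static List<String> run(int[][] records) {
        // Map + shuffle/sort: group original keys under their value, values descending.
        TreeMap<Integer, List<Integer>> grouped = new TreeMap<>(Comparator.reverseOrder());
        for (int[] r : records) {
            grouped.computeIfAbsent(r[1], k -> new ArrayList<>()).add(r[0]);
        }
        // Reduce: for each sorted value, emit every original key next to it.
        List<String> output = new ArrayList<>();
        for (Map.Entry<Integer, List<Integer>> e : grouped.entrySet()) {
            for (int originalKey : e.getValue()) {
                output.add(originalKey + "\t" + e.getKey());
            }
        }
        return output;
    }

    public static void main(String[] args) {
        int[][] input = {{1, 24}, {3, 4}, {4, 12}, {5, 23}};
        run(input).forEach(System.out::println);
    }
}
```

For the sample input this prints the output the question asks for (1 24, 5 23, 4 12, 3 4), tab-separated.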

Update after the comments:

If your keys are IntWritable, you can create a descending IntWritable comparator and use it like this:

```java
job.setSortComparatorClass(DescendingIntWritableComparable.DecreasingComparator.class);
```

If you are using the old API (JobConf), set it like this instead:

```java
jobConfObject.setOutputKeyComparatorClass(DescendingIntWritableComparable.DecreasingComparator.class);
```

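Put the following code below your main() method:

```java
// Driver file imports:
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.WritableComparable;
import org.apache.hadoop.util.ToolRunner;

public static void main(String[] args) throws Exception {
    // YourDriver is your Tool implementation
    int exitCode = ToolRunner.run(new YourDriver(), args);
    System.exit(exitCode);
}

// This class is defined outside of main(), not inside it
public static class DescendingIntWritableComparable extends IntWritable {

    /** A decreasing Comparator optimized for IntWritable. */
    public static class DecreasingComparator extends IntWritable.Comparator {

        @SuppressWarnings("rawtypes")
        @Override
        public int compare(WritableComparable a, WritableComparable b) {
            return -super.compare(a, b);
        }

        // Byte-level comparison on serialized keys; reversed for descending order
        @Override
        public int compare(byte[] b1, int s1, int l1, byte[] b2, int s2, int l2) {
            return -super.compare(b1, s1, l1, b2, s2, l2);
        }
    }
}
```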
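The `byte[]` overload matters because, as far as I know, Hadoop's sort compares serialized keys with it instead of deserializing every key. An IntWritable is written as a 4-byte big-endian int, so a raw comparator can decode both keys in place. The plain-Java sketch below (hypothetical `RawIntCompareSketch`; it mirrors, but does not call, Hadoop's `WritableComparator.readInt`) shows a descending byte-level comparison.

```java
import java.nio.ByteBuffer;

// Hypothetical helper for illustration only (not part of Hadoop):
// descending comparison of ints straight from their serialized bytes.
class RawIntCompareSketch {

    // Decode a big-endian int starting at offset s (the layout DataOutput.writeInt uses).
    static int readInt(byte[] b, int s) {
        return ((b[s] & 0xff) << 24) | ((b[s + 1] & 0xff) << 16)
                | ((b[s + 2] & 0xff) << 8) | (b[s + 3] & 0xff);
    }

    // Descending order: arguments swapped relative to an ascending comparison.
    static int compareDescending(byte[] b1, int s1, byte[] b2, int s2) {
        return Integer.compare(readInt(b2, s2), readInt(b1, s1));
    }

    // ByteBuffer is big-endian by default, matching the serialized form.
    static byte[] serialize(int value) {
        return ByteBuffer.allocate(4).putInt(value).array();
    }

    public static void main(String[] args) {
        byte[] a = serialize(24);
        byte[] b = serialize(4);
        System.out.println(compareDescending(a, 0, b, 0)); // negative: 24 sorts before 4
    }
}
```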


csperson

Updated on February 27, 2020

Comments

-

csperson about 4 years

My problem is sorting the values in a file. Keys and values are integers, and each key must stay with its value after sorting.

Input:

| key | value |
|-----|-------|
| 1 | 24 |
| 3 | 4 |
| 4 | 12 |
| 5 | 23 |

Output:

| key | value |
|-----|-------|
| 1 | 24 |
| 5 | 23 |
| 4 | 12 |
| 3 | 4 |

I am working with massive data and must run the code on a cluster of Hadoop machines. How can I do it with MapReduce?

-

csperson over 10 years: If I have 5 computers running the code, does this code work, and is the final result absolutely correct? How many reducers do I need?

-

SSaikia_JtheRocker over 10 years: Yes, you can have any number of reducers. I'm also assuming you know how to write a MapReduce job. Please give it a shot and tell me if it solves your issue. I think it will with respect to the use case you have mentioned. Thank you.
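One caveat on the number of reducers: each reducer sorts only the keys it receives and writes its own output file. With Hadoop's default hash partitioning, `(key.hashCode() & Integer.MAX_VALUE) % numReduceTasks` (an IntWritable's hash code is its value), the concatenated output files are therefore not globally ordered. One way around this is a single reducer (`job.setNumReduceTasks(1)`); another is a partitioner that assigns key ranges to reducers, such as Hadoop's `TotalOrderPartitioner`. The plain-Java simulation below (hypothetical `PartitionSketch`; the range boundary of 20 is chosen only for this sample data) shows the difference with two reducers.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.function.IntBinaryOperator;

// Hypothetical helper for illustration only (not part of Hadoop):
// simulates partitioning keys across reducers and concatenating their outputs.
class PartitionSketch {

    // Same formula as hash partitioning; Integer.hashCode(key) == key.
    static int hashPartition(int key, int numReducers) {
        return (Integer.hashCode(key) & Integer.MAX_VALUE) % numReducers;
    }

    // Hypothetical range partitioner for a descending sort with two reducers:
    // keys >= 20 go to reducer 0, all others to reducer 1.
    static int rangePartition(int key, int numReducers) {
        return key >= 20 ? 0 : 1;
    }

    // Each reducer sorts its own keys descending; outputs are concatenated 0, 1, ...
    static List<Integer> concatenatedOutput(int[] keys, int numReducers, IntBinaryOperator partitioner) {
        List<List<Integer>> partitions = new ArrayList<>();
        for (int i = 0; i < numReducers; i++) {
            partitions.add(new ArrayList<>());
        }
        for (int key : keys) {
            partitions.get(partitioner.applyAsInt(key, numReducers)).add(key);
        }
        List<Integer> result = new ArrayList<>();
        for (List<Integer> part : partitions) {
            part.sort(Comparator.reverseOrder());
            result.addAll(part);
        }
        return result;
    }

    public static void main(String[] args) {
        int[] keys = {24, 4, 12, 23};
        System.out.println(concatenatedOutput(keys, 2, PartitionSketch::hashPartition));  // [24, 12, 4, 23]
        System.out.println(concatenatedOutput(keys, 2, PartitionSketch::rangePartition)); // [24, 23, 12, 4]
    }
}
```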


csperson over 10 years: I work with JobConf; it doesn't have a setSortComparatorClass method.

-

csperson over 10 years: My keys are IntWritable. How do I use the DescendingKeyComparator class in my code?

-

SSaikia_JtheRocker over 10 years: Try creating one. I have modified my answer; please check and tell me if it helps.


csperson over 10 years: The class is declared static and that shows an error. I changed it to final. Does this change cause a problem?

-

SSaikia_JtheRocker over 10 years: The static class is defined outside main(), not inside it; check the modified answer.


pk10 over 9 years: But what about a Double value? Is there no class to accomplish it?

-

SSaikia_JtheRocker over 9 years: @user2961121: I think there is: hadoop.apache.org/docs/r2.3.0/api/org/apache/hadoop/io/…


Abhi about 8 years: The above process sorts the data by key (24, 23, 12, 4) after the map emits the keys as values and vice versa. Can I take the sorted data as input to my reduce() and transform it back into the original <key, value> pairs, e.g. 1 24, etc.?

-

Kadima over 7 years: I'm trying to use this DescendingIntWritableComparable to implement a descending sort instead of an ascending sort, but job.setSortComparatorClass() does not accept DescendingIntComparable.class as a class that extends RawComparator, so the job doesn't run. Any ideas how to modify this so it works?