Reading a COBOL-generated file

Solution 1

To read the COBOL-genned file, you'll need to know:

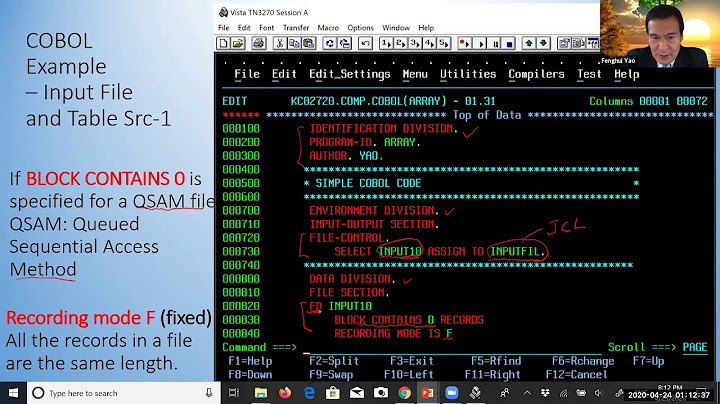

First, you'll need the record layout (copybook) for the file. A COBOL record layout will look something like this:

    01  PATIENT-TREATMENTS.
        05  PATIENT-NAME                PIC X(30).
        05  PATIENT-SS-NUMBER           PIC 9(9).
        05  NUMBER-OF-TREATMENTS        PIC 99 COMP-3.
        05  TREATMENT-HISTORY OCCURS 0 TO 50 TIMES
                DEPENDING ON NUMBER-OF-TREATMENTS
                INDEXED BY TREATMENT-POINTER.
            10  TREATMENT-DATE.
                15  TREATMENT-DAY       PIC 99.
                15  TREATMENT-MONTH     PIC 99.
                15  TREATMENT-YEAR      PIC 9(4).
            10  TREATING-PHYSICIAN      PIC X(30).
            10  TREATMENT-CODE          PIC 99.
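As a rough illustration of how the layout above turns into byte offsets, here is a minimal C# sketch. It assumes each record starts at offset 0 of a byte array, that no padding octets or record-length prefixes are present, and that only the occurrences actually counted by NUMBER-OF-TREATMENTS are written. The class and member names are hypothetical, chosen just for this example.

```csharp
using System;

public static class PatientLayout
{
    // Byte sizes derived from the sample copybook.
    public const int NameLength = 30;               // PATIENT-NAME          PIC X(30)
    public const int SsnLength = 9;                 // PATIENT-SS-NUMBER     PIC 9(9)
    public const int CountLength = 2;               // NUMBER-OF-TREATMENTS  PIC 99 COMP-3
    public const int TreatmentLength = 8 + 30 + 2;  // date + physician + code

    public const int CountOffset = NameLength + SsnLength;              // 39
    public const int FirstTreatmentOffset = CountOffset + CountLength;  // 41

    // Reads the two-octet packed count: three digit nibbles followed by a sign nibble.
    public static int TreatmentCount(byte[] record)
    {
        int first = record[CountOffset];
        int second = record[CountOffset + 1];
        return (first >> 4) * 100 + (first & 0x0F) * 10 + (second >> 4);
    }

    // Total record length in octets under the assumptions stated above.
    public static int RecordLength(byte[] record)
    {
        return FirstTreatmentOffset + TreatmentCount(record) * TreatmentLength;
    }
}
```

With these assumptions a patient with two treatments occupies 41 + 2 * 40 = 121 octets.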

You'll also need a copy of IBM's Principles of Operation (S/360, S/370, z/OS, doesn't really matter for our purposes). The latest is available from IBM at

- http://www-01.ibm.com/support/docview.wss?uid=isg2b9de5f05a9d57819852571c500428f9a (but you'll need an IBM account).

- An older edition is available, gratis, at http://www.hack.org/mc/texts/principles-of-operation.pdf

Chapters 8 (Decimal Instructions) and 9 (Floating Point Overview and Support Instructions) are the interesting bits for our purposes.

Without that, you're pretty much lost.

Then, you need to understand COBOL data types. For instance:

- PIC defines an alphameric formatted field (`PIC 9(4)`, for example, is 4 decimal digits, which might be filled with four space characters if missing). `PIC 999V99` is 5 decimal digits, with an implied decimal point. So on and so forth.

- BINARY is [usually] a signed fixed point binary integer. Usual sizes are halfword (2 octets) and fullword (4 octets).

- COMP-1 is single precision floating point.

- COMP-2 is double precision floating point.

If the data source is an IBM mainframe, COMP-1 and COMP-2 likely won't be IEEE floating point: they will be in IBM's base-16 excess-64 floating point format. You'll need something like the S/370 Principles of Operation to help you understand it.
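To make the base-16 excess-64 format a little more concrete, here is a small C# sketch for the 4-octet (COMP-1) case. It assumes the classic layout of one sign bit, a 7-bit exponent biased by 64, and a 24-bit fraction, stored big-endian. The class and method names are made up for illustration.

```csharp
using System;

public static class IbmHexFloat
{
    // Converts a 4-octet IBM hexadecimal float to a .NET double.
    // value = sign * (fraction / 2^24) * 16^(exponent - 64)
    public static double FromSingle(byte[] buffer, int offset)
    {
        int sign = (buffer[offset] & 0x80) != 0 ? -1 : 1;
        int exponent = (buffer[offset] & 0x7F) - 64;
        int fraction = (buffer[offset + 1] << 16)
                     | (buffer[offset + 2] << 8)
                     | buffer[offset + 3];
        return sign * (fraction / 16777216.0) * Math.Pow(16, exponent);
    }
}
```

For example, the octets C2 76 A0 00 decode to -118.625. The 8-octet (COMP-2) form uses the same sign and exponent with a 56-bit fraction, which is wider than the 53 bits a double can hold exactly.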

- COMP-3 is 'packed decimal', of varying lengths. Packed decimal is a compact way of representing a decimal number. The declaration will look something like this:

`PIC S9999V99 COMP-3`. This says that it is signed and consists of 6 decimal digits with an implied decimal point. Packed decimal represents each decimal digit as a nibble of an octet (hex values 0-9). The high-order digit is the upper nibble of the leftmost octet. The low nibble of the rightmost octet is a hex value A-F representing the sign. So the above `PIC` clause will require `ceil( (6+1)/2 )` or 4 octets. The value -345.67, as represented by the above `PIC` clause, will look like `0x0034567D`. The actual sign value may vary (the default is C/positive, D/negative, but A, C, E and F are treated as positive, while only B and D are treated as negative). Again, see the S/370 Principles of Operation for details on the representation.
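The packed decimal rules above can be sketched in C# roughly as follows. This is only one possible conversion routine: the names are hypothetical, and the `scale` parameter (the number of digits after the implied decimal point) has to come from the copybook, since it is not stored in the data.

```csharp
using System;

public static class PackedDecimal
{
    // Decodes `length` octets of COMP-3 data starting at `offset`.
    // `scale` is the number of digits after the V in the PIC clause.
    public static decimal Decode(byte[] buffer, int offset, int length, int scale)
    {
        decimal value = 0m;
        for (int i = 0; i < length; i++)
        {
            int octet = buffer[offset + i];
            value = value * 10 + Digit(octet >> 4);
            // The low nibble of the last octet is the sign, not a digit.
            if (i < length - 1)
                value = value * 10 + Digit(octet & 0x0F);
        }

        // Apply the implied decimal point.
        for (int i = 0; i < scale; i++)
            value /= 10;

        int sign = buffer[offset + length - 1] & 0x0F;
        if (sign < 0x0A)
            throw new FormatException("Invalid sign nibble.");
        bool negative = sign == 0x0B || sign == 0x0D;
        return negative ? -value : value;
    }

    private static int Digit(int nibble)
    {
        if (nibble > 9)
            throw new FormatException("Invalid digit nibble.");
        return nibble;
    }
}
```

Calling `PackedDecimal.Decode(new byte[] { 0x00, 0x34, 0x56, 0x7D }, 0, 4, 2)` yields -345.67, matching the example value in the text.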

Related to COMP-3 is zoned decimal. This might be declared as `PIC S9999V99` (signed, 6 decimal digits, with an implied decimal point). Decimal digits, in EBCDIC, are the hex values 0xF0 - 0xF9. 'Unpack' (a mainframe machine instruction) takes a packed decimal field and turns it into a character field. The process is:

- Start with the rightmost octet. Invert it, so the sign nibble is on top, and place it into the rightmost octet of the destination field.

- Working from right to left (source and target both), strip off each remaining nibble of the packed decimal field and place it into the low nibble of the next available octet in the destination. Fill the high nibble with a hex F.

- The operation ends when either the source or destination field is exhausted.

- If the destination field is not exhausted, it is left-padded with zeroes by filling the remaining octets with decimal '0' (0xF0).

So our example value, -345.67, if stored with the default sign value (hex D), would get unpacked as 0xF0F0F0F3F4F5F6D7 ('0003456P', in EBCDIC).
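The unpack steps above, and the reverse trip from zoned decimal to a number, can be sketched in C# like this. It is an illustration rather than a faithful emulation of the machine instruction: the names are hypothetical, the inputs are assumed to be non-empty, and the zoned field is assumed to still be in EBCDIC with the sign in the high nibble of the last octet.

```csharp
using System;

public static class ZonedDecimal
{
    // Turns a packed decimal field into a zoned decimal field of the given length.
    public static byte[] Unpack(byte[] packed, int destinationLength)
    {
        var zoned = new byte[destinationLength];
        int d = destinationLength - 1;
        int s = packed.Length - 1;

        // Rightmost octet: swap the nibbles so the sign ends up on top.
        zoned[d--] = (byte)(((packed[s] & 0x0F) << 4) | (packed[s] >> 4));
        s--;

        // Remaining nibbles, right to left, each under a hex F zone.
        bool takeLow = true;
        while (d >= 0 && s >= 0)
        {
            int nibble = takeLow ? packed[s] & 0x0F : packed[s] >> 4;
            zoned[d--] = (byte)(0xF0 | nibble);
            if (!takeLow) s--;
            takeLow = !takeLow;
        }

        // Left-pad whatever is left of the destination with EBCDIC '0'.
        while (d >= 0) zoned[d--] = 0xF0;
        return zoned;
    }

    // Reads a zoned decimal field; `scale` is the number of implied decimal places.
    public static decimal Decode(byte[] zoned, int scale)
    {
        decimal value = 0m;
        foreach (byte b in zoned)
            value = value * 10 + (b & 0x0F);
        for (int i = 0; i < scale; i++)
            value /= 10;

        int sign = zoned[zoned.Length - 1] >> 4;
        return (sign == 0x0B || sign == 0x0D) ? -value : value;
    }
}
```

Unpacking the octets 00 34 56 7D into an 8-octet field gives F0 F0 F0 F3 F4 F5 F6 D7, and decoding that with a scale of 2 gives -345.67.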

[There you go. There's a quiz later]

- If the COBOL app lives on an IBM mainframe, has the file been converted from its native EBCDIC to ASCII? If not, you'll have to do the mapping yourself. (Hint: it's not necessarily as straightforward as that might seem, since this might be a selective process: only character fields get converted. COMP-1, COMP-2, COMP-3 and BINARY get excluded since they are a sequence of binary octets.) Worse, there are multiple flavors of EBCDIC representations, due to the varying national implementations and varying print chains in use on different printers.
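A minimal C# sketch of that selective conversion: only the octets of a character field are run through an EBCDIC decoder, and everything else in the record is left alone. Code page 37 (US EBCDIC) is an assumption here; the real file may use a different EBCDIC flavor. The class name is hypothetical.

```csharp
using System;
using System.Text;

public static class EbcdicText
{
    static EbcdicText()
    {
        // Needed on .NET Core and .NET 5+ to make legacy code pages available.
        // The .NET Framework already ships code page 37, so there this call can be dropped.
        Encoding.RegisterProvider(CodePagesEncodingProvider.Instance);
    }

    // Decodes one character field (PIC X or unsigned display digits) from a record.
    public static string DecodeField(byte[] record, int offset, int length)
    {
        Encoding ebcdic = Encoding.GetEncoding(37); // assumed: US EBCDIC
        return ebcdic.GetString(record, offset, length).TrimEnd(' ');
    }
}
```

For the sample layout, `EbcdicText.DecodeField(record, 0, 30)` would return PATIENT-NAME as a .NET string, while the packed NUMBER-OF-TREATMENTS octets need a numeric decoder instead of a text one.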

Oh...one last thing. The mainframe hardware tends to like different things aligned on halfword, word or doubleword boundaries, so the record layout may not map directly to the octets in the file: when the copybook declares fields SYNCHRONIZED, padding octets are inserted between fields to maintain the needed word alignment.

Good Luck.

Solution 2

It would be useful to know which Cobol Dialect you are dealing with because there is no single Cobol Format. Some Cobol Compilers (Micro Focus) put a "File Description" at the front of files (For Micro Focus VB / Indexed files).


Have a look at the RecordEditor (http://record-editor.sourceforge.net/). It has a File Wizard which could be very useful for you.

- In the File Wizard set the file as Fixed-Width File (most common in Cobol). The program lets you try out different Record Lengths. When you get the correct record length, the Text fields should line up.

- Later on in the Wizard there is a field search which can look for Binary, Comp-3 and Text fields.

- There are some notes on using the RecordEditor's Wizard with an unknown file here: http://record-editor.sourceforge.net/Unkown.htm
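Once a record length has been found, the file can be handled as raw octets rather than text, which keeps every untouched octet identical when the file is written back. A minimal C# sketch, assuming fixed-length records with no line terminators or length prefixes (the class name is hypothetical):

```csharp
using System;
using System.Collections.Generic;
using System.IO;

public static class FixedRecords
{
    // Splits the raw file content into records of `recordLength` octets each.
    public static List<byte[]> Split(byte[] fileBytes, int recordLength)
    {
        if (recordLength <= 0 || fileBytes.Length % recordLength != 0)
            throw new ArgumentException("File size is not a multiple of the record length.");

        var records = new List<byte[]>();
        for (int pos = 0; pos < fileBytes.Length; pos += recordLength)
        {
            var record = new byte[recordLength];
            Array.Copy(fileBytes, pos, record, 0, recordLength);
            records.Add(record);
        }
        return records;
    }

    // Concatenates the records back into one buffer, in order.
    public static byte[] Join(List<byte[]> records)
    {
        using (var stream = new MemoryStream())
        {
            foreach (byte[] record in records)
                stream.Write(record, 0, record.Length);
            return stream.ToArray();
        }
    }
}
```

A typical round trip would read the input with `File.ReadAllBytes`, change only the octets of the fields that need changing, and save the result of `Join` with `File.WriteAllBytes`. No text encoding is involved at any point, so nothing gets translated by accident.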

Unless the file is coming from a Mainframe / AS400, it is unlikely to use EBCDIC (cp037, Code Page 37, is US EBCDIC); any text is most likely in ASCII.

The file probably contains Packed-Decimal (Comp3) and Binary-Integer data. Most Cobols use Big-Endian (for Comp integers) even on Intel (little endian hardware).

One thing to remember with Cobol: `PIC s9(6)V99 comp` is stored as a Binary Integer, with x'0001' representing 0.01. So unless you have the Cobol definition, you cannot tell whether a binary 1 is 1, 0.1, 0.01, etc.
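A short C# sketch of that point: the octets only give an integer, and the scale has to be supplied from the Cobol definition. It assumes a 4-octet big-endian two's-complement field, which is one common size for a `comp` item but not the only one. The names are hypothetical.

```csharp
using System;

public static class BinaryComp
{
    // Reads a 4-octet big-endian signed integer and applies the implied decimal places.
    public static decimal DecodeInt32(byte[] buffer, int offset, int scale)
    {
        int raw = (buffer[offset] << 24)
                | (buffer[offset + 1] << 16)
                | (buffer[offset + 2] << 8)
                | buffer[offset + 3];

        decimal value = raw;
        for (int i = 0; i < scale; i++)
            value /= 10;
        return value;
    }
}
```

The same four octets 00 00 00 01 come back as 1 with a scale of 0 and as 0.01 with a scale of 2.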

Solution 3

I see from comments attached to your question that you are dealing with the “classic” COBOL batch file structure: Header record, detail records and trailer record.

This is probably bad news if you are responsible for creating the trailer record! The typical “trailer” record is used to identify the end-of-file and provides control information such as the number of records that precede it and various check sums and/or grand totals for “detail” records. In other words, you may need to read and summarize the entire file in order to create the trailer. Add to this the possibility that much of the data in the file is in Packed Decimal, Zoned Decimal or other COBOLish numeric data types, and you could be in for a rough time.

You might want to question why you are adding trailer records to these files. Typically the “trailer” is produced by the same program or application that created the “detail” records. The trailer is supposed to act as a verification that the sending application/program wrote all of the data it was supposed to. The summary totals, counts etc. are used by the receiving application to verify that the trailer tallies with the preceding details. This is supposed to serve as another verification that the sending application didn't muff up the data or that it was not corrupted en route (no, that wasn't a joke, but maybe it should be). When a "man in the middle" creates the trailers it kind of defeats the entire purpose of the exercise (no matter how flawed it might have been to begin with).


Rauland

Updated on June 04, 2022

Comments

- Rauland (almost 2 years ago): I'm currently on the task of writing a C# application, which is going to sit between two existing apps. All I know about the second application is that it processes files generated by the first one. The first application is written in Cobol.

Steps: 1) Cobol application, writes some files and copies to a directory. 2) The second application picks these files up and processes them.

My C# app would sit between 1) and 2). It would have to pick up the file generated by 1), read it, modify it and save it, so that application 2) wouldn't know I have even been there.

I have a few problems.

- First of all, if I open a file generated by 1) in Notepad, most of it is unreadable while other parts are readable.

- If I read the file, modify it and save, I must save the file with the same notation used by the Cobol application, so that app 2) doesn't know I've been there.

I've tried reading the file this way, but it's still unreadable:

Code:

    string ss = @"filename";
    using (FileStream fs = new FileStream(ss, FileMode.Open))
    {
        StreamReader sr = new StreamReader(fs);
        string gg = sr.ReadToEnd();
    }

Also, if I find a way of making it readable (using some sort of encoding technique), I'm afraid that when I save the file again, I may change its original format.

Any thoughts? Suggestions?

- John Saunders (about 13 years ago): You need to find out the vendor of the COBOL, then find out what the file format is. There's no single "COBOL" format.

- GilShalit (almost 13 years ago): See some more information [here][1] [1]: stackoverflow.com/questions/5109302/…

- Tim Lloyd (about 13 years ago): I will come back tomorrow and upvote - have run out for today. Excellent answer. Looks like quite a formidable task! :)

- Nicholas Carey (about 13 years ago): It's generally not as bad as it seems. Most COBOL apps write out straight character format records. You seldom see floating point out in the wild, but you might see packed decimal or fixed point binary. Fixed point binary is a straight 1:1 mapping to `short` or `int` (outside of big-/little-endian issues). Packed decimal is a bit of a hassle, but it's not that bad to write a conversion routine to convert to `decimal`.

- Dr. belisarius (about 13 years ago): +1 for reminding me how bad things became when Total fields overflowed in the trailer :)

- Nicholas Carey (about 13 years ago): not my copybook B^). I've been out of the mainframe world for a long, long time, though recently, I did have to go through the process of building a process to periodically import a dump of a COBOL file containing a mixture of text, binary and packed decimal data and bring it into the .Net/SQL Server world. The conversion from EBCDIC is [mostly] pretty easy as the .Net TextInfo supports several EBCDIC code pages (037 and 500, for starters). Had to write a packed-decimal-to-`decimal` conversion routine and convert the fixed point from big-endian to little-endian. Easy!

- Bill Woodger (about 11 years ago): Unless you specify SYNCHRONIZED, the Mainframe does nothing for alignment.