Spark: read wholeTextFiles with non-UTF-8 encoding


Solution 1

It's simple.

Here is the source code:

import java.nio.charset.Charset
import org.apache.hadoop.io.{Text, LongWritable}
import org.apache.hadoop.mapred.TextInputFormat
import org.apache.spark.SparkContext
import org.apache.spark.rdd.RDD

object TextFile {
  val DEFAULT_CHARSET = Charset.forName("UTF-8")

  def withCharset(context: SparkContext, location: String, charset: String): RDD[String] = {
    if (Charset.forName(charset) == DEFAULT_CHARSET) {
      context.textFile(location)
    } else {
      // can't pass a Charset object here because it's not serializable
      // TODO: maybe use mapPartitions instead?
      context.hadoopFile[LongWritable, Text, TextInputFormat](location).map(
        // Text.getBytes may return a larger buffer; decode only the first getLength bytes
        pair => new String(pair._2.getBytes, 0, pair._2.getLength, charset)
      )
    }
  }
}
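The TODO in the code above suggests mapPartitions. Below is a minimal sketch of that variant, assuming Spark 2.x or later and the same old mapred API as the original; the object name `TextFileByPartition` is hypothetical. Only the charset name (a serializable String) is captured in the closure, and the Charset itself is resolved once per partition on the executor. Like the original, it reads line by line through TextInputFormat, which splits on newline bytes, so it is only suitable for ASCII-compatible encodings such as ISO-8859-1 (not UTF-16).

```scala
import java.nio.charset.Charset

import org.apache.hadoop.io.{LongWritable, Text}
import org.apache.hadoop.mapred.TextInputFormat
import org.apache.spark.SparkContext
import org.apache.spark.rdd.RDD

// Hypothetical helper: a mapPartitions variant of TextFile.withCharset.
object TextFileByPartition {
  def withCharset(context: SparkContext, location: String, charset: String): RDD[String] = {
    // Fail fast on the driver if the charset name is not supported.
    require(Charset.isSupported(charset), s"Unsupported charset: $charset")
    context.hadoopFile[LongWritable, Text, TextInputFormat](location).mapPartitions { records =>
      // Charset is not Serializable, so look it up here: once per partition, on the executor.
      val cs = Charset.forName(charset)
      // Text.getBytes returns the backing array, which can be longer than the record,
      // so only the first getLength bytes are decoded.
      records.map { case (_, text) => new String(text.getBytes, 0, text.getLength, cs) }
    }
  }
}
```

It is called the same way as the original, e.g. TextFileByPartition.withCharset(sc, path, "ISO-8859-1").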

It's copied from here.

To use it:

Edit:

If you need wholeTextFiles instead, here is the actual source of its implementation in SparkContext:

def wholeTextFiles(
    path: String,
    minPartitions: Int = defaultMinPartitions): RDD[(String, String)] = withScope {
  assertNotStopped()
  val job = NewHadoopJob.getInstance(hadoopConfiguration)
  // Use setInputPaths so that wholeTextFiles aligns with hadoopFile/textFile in taking
  // comma separated files as input. (see SPARK-7155)
  NewFileInputFormat.setInputPaths(job, path)
  val updateConf = job.getConfiguration
  new WholeTextFileRDD(
    this,
    classOf[WholeTextFileInputFormat],
    classOf[Text],
    classOf[Text],
    updateConf,
    minPartitions).map(record => (record._1.toString, record._2.toString)).setName(path)
}

Try changing:

.map(record => (record._1.toString, record._2.toString))

to (probably):

.map(record => (record._1.toString, new String(record._2.getBytes, 0, record._2.getLength, "myCustomCharset")))
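Why the change should help: Hadoop's Text.toString always decodes the stored bytes as UTF-8, while new String(bytes, 0, length, charset) decodes the same bytes with the charset you name. Note also that WholeTextFileRDD and WholeTextFileInputFormat are declared private[spark] in Spark's source, so a modified copy of wholeTextFiles cannot simply be pasted into user code. The JDK-only sketch below (no Spark needed) shows the decoding difference, assuming ISO-8859-1 input; `CharsetDecodeDemo` and `decodeRecord` are hypothetical names mirroring the suggested map.

```scala
import java.nio.charset.{Charset, StandardCharsets}

object CharsetDecodeDemo {
  // Hypothetical helper mirroring the suggested map: decode only the valid
  // region [0, length) of a possibly larger buffer with an explicit charset.
  def decodeRecord(buffer: Array[Byte], length: Int, charset: String): String =
    new String(buffer, 0, length, Charset.forName(charset))

  def main(args: Array[String]): Unit = {
    val target = "caf\u00e9"
    // Encoded as ISO-8859-1, the accented e becomes the single byte 0xE9.
    val latin1 = target.getBytes(StandardCharsets.ISO_8859_1)
    // Simulate a reused buffer that is larger than the record it holds.
    val buffer = latin1 ++ Array[Byte](0x41, 0x41)

    // Decoding as UTF-8 (as Text.toString does): 0xE9 is malformed here, so it becomes U+FFFD.
    val asUtf8 = new String(latin1, StandardCharsets.UTF_8)
    // Decoding only the first latin1.length bytes as ISO-8859-1 recovers the original text.
    val asLatin1 = decodeRecord(buffer, latin1.length, "ISO-8859-1")

    println(s"UTF-8 decode matches original: ${asUtf8 == target}")
    println(s"ISO-8859-1 decode matches original: ${asLatin1 == target}")
  }
}
```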

Solution 2

You can read the files using SparkContext.binaryFiles() instead and build the String for the contents, specifying the charset you need. For example:

import java.nio.charset.StandardCharsets
import spark.implicits._ // needed for .toDF

val df = spark.sparkContext.binaryFiles(path, 12)
  .mapValues(content => new String(content.toArray(), StandardCharsets.ISO_8859_1))
  .toDF
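A slightly fuller sketch of Solution 2, assuming Spark 2.x or later with a SparkSession. binaryFiles returns (path, PortableDataStream) pairs, so the file path stays as the key and each file becomes one row, as with wholeTextFiles. The object name `WholeFilesWithCharset`, the method `read`, and the column names `path` and `content` are hypothetical choices; the charset is passed by name so no Charset object is captured in the closure.

```scala
import java.nio.charset.Charset

import org.apache.spark.sql.{DataFrame, SparkSession}

// Hypothetical helper object; the name and column names are illustrative.
object WholeFilesWithCharset {
  // One row per file: (path, content decoded with the given charset).
  def read(spark: SparkSession, path: String, charset: String, minPartitions: Int = 12): DataFrame = {
    import spark.implicits._
    // Fail fast on the driver if the charset name is not supported.
    require(Charset.isSupported(charset), s"Unsupported charset: $charset")
    spark.sparkContext
      .binaryFiles(path, minPartitions) // RDD[(String, PortableDataStream)], keyed by file path
      .mapValues { stream =>
        // toArray() reads the whole file into memory, as wholeTextFiles does.
        new String(stream.toArray(), charset)
      }
      .toDF("path", "content")
  }
}
```

For example, WholeFilesWithCharset.read(spark, path, "ISO-8859-1") should give one row per file with the path in the first column and the Latin-1 decoded text in the second.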

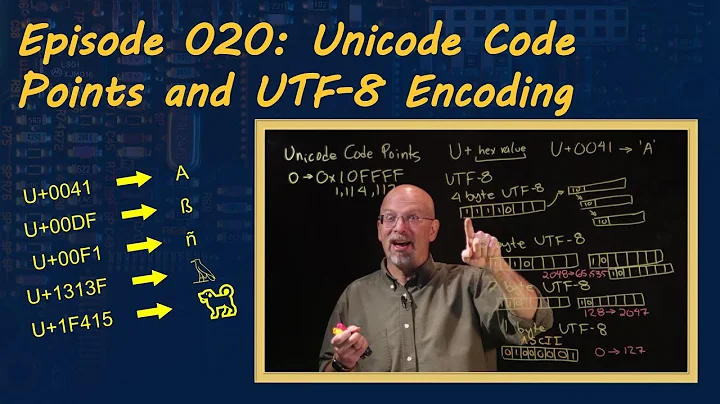

Related videos on Youtube

Author by

Georg Heiler

I am a Ph.D. candidate at the Vienna University of Technology and Complexity Science Hub Vienna as well as a data scientist in the industry.

Updated on June 04, 2022

Comments


Georg Heiler (almost 2 years ago): I want to read whole text files in non-UTF-8 encoding into Spark via

val df = spark.sparkContext.wholeTextFiles(path, 12).toDF

How can I change the encoding? I want to read ISO-8859 encoded text, but it is not CSV; it is something similar to XML: SGML.

Edit:

Maybe a custom Hadoop file input format should be used?


Georg Heiler (about 7 years ago): That does seem to be a good start. But how can I achieve behavior similar to wholeTextFiles, where the file path is the key and a single row is created per file, unlike the spark-csv variant above, where the lines in a file are tokenized into rows in the RDD?

RBanerjee about 7 years


Georg Heiler (about 7 years ago): I think this is nearly there. But

.map(record => (record._1.toString, record._2.toString, "iso-8859-1")).setName(path)

will fail to compile due to:

[error] found   : org.apache.spark.rdd.RDD[(String, String, String)]
[error] required: org.apache.spark.rdd.RDD[(String, String)]

Georg Heiler (about 7 years ago): And I am not sure whether WholeTextFileInputFormat would need to be redefined to use the desired encoding.

Georg Heiler (about 7 years ago): Would I be required to replace RecordReader[Text, Text] with a custom Text implementation that does not use UTF-8?

abhiyenta (about 6 years ago): See now, this is simple!