Avoid Linux out-of-memory application teardown

Solution 1

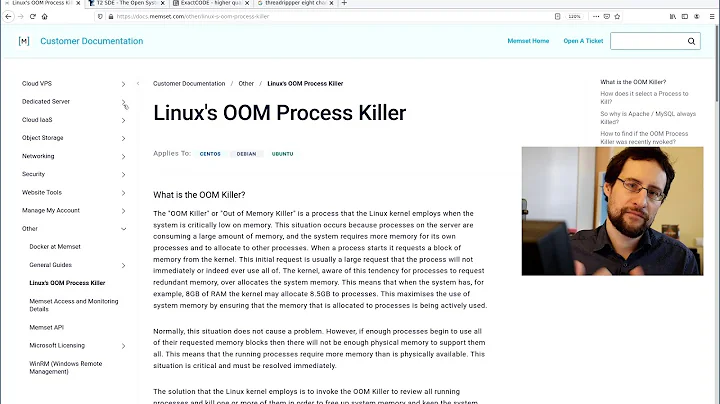

By default Linux has a somewhat brain-damaged concept of memory management: it lets you allocate more memory than your system has, then randomly terminates a process when it gets in trouble. (The actual semantics of what gets killed are more complex than that - Google "Linux OOM Killer" for lots of details and arguments about whether it's a good or bad thing).

To restore some semblance of sanity to your memory management:

- Disable the OOM Killer (put `vm.oom-kill = 0` in /etc/sysctl.conf)
- Disable memory overcommit (put `vm.overcommit_memory = 2` in /etc/sysctl.conf)

Note that this is a trinary value: 0 = "estimate if we have enough RAM", 1 = "always say yes", 2 = "say no if we don't have the memory".

These settings will make Linux behave in the traditional way: if a process requests more memory than is available, malloc() will fail, and the process requesting the memory is expected to cope with that failure.

Reboot your machine to make it reload /etc/sysctl.conf, or use the proc file system to apply the overcommit setting right away, without a reboot:

echo 2 > /proc/sys/vm/overcommit_memory
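To check which mode is currently active before or after making the change, the same proc file can be read back. The following is a minimal Python sketch, not part of the original answer; the function names are made up for illustration, and the descriptions simply restate the three values listed above.

```python
from pathlib import Path

# Meanings of vm.overcommit_memory, restating the three values listed above.
OVERCOMMIT_MODES = {
    0: "heuristic: estimate if we have enough RAM",
    1: "always: always say yes",
    2: "strict: say no if we don't have the memory",
}


def describe_overcommit_mode(raw: str) -> str:
    """Translate the raw contents of the overcommit_memory file into text."""
    mode = int(raw.strip())
    return OVERCOMMIT_MODES.get(mode, f"unknown mode {mode}")


def current_overcommit_mode(path: str = "/proc/sys/vm/overcommit_memory") -> str:
    """Read the live setting from the proc file system (Linux only)."""
    return describe_overcommit_mode(Path(path).read_text())


if __name__ == "__main__":
    proc_file = Path("/proc/sys/vm/overcommit_memory")
    if proc_file.exists():
        print(current_overcommit_mode())
    else:
        print("no overcommit_memory file here; is this a Linux system?")
```

Reading the file needs no special privileges; writing to it, as in the echo command above, requires root.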

Solution 2

The short answer, for a server, is buy and install more RAM.

If a server experiences OOM (Out-Of-Memory) errors routinely, then, apart from the VM (virtual memory) manager's overcommit sysctl option in Linux kernels, this is not a good thing.

Upping the amount of swap (virtual memory that has been paged out to disk by the kernel's memory manager) will help if the current values are low and the usage involves many tasks each using large amounts of memory, rather than one or a few processes each requesting a huge share of the total virtual memory available (RAM + swap).

For many applications, allocating more than two times (2x) the amount of RAM as swap provides diminishing returns. In some large computational simulations, going beyond that may be acceptable if the slow-down is bearable.

With RAM (ECC or not) being quite affordable in modest quantities, e.g. 4-16 GB, I have to admit I haven't experienced this problem myself in a long time.
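As a quick way to see where a machine stands relative to the 2x rule of thumb above, the swap-to-RAM ratio can be computed from /proc/meminfo. This is a small Python sketch under the assumption of the usual Linux `Key:   value kB` layout of that file; the helper names are illustrative only.

```python
from pathlib import Path


def parse_meminfo(text: str) -> dict:
    """Parse /proc/meminfo-style text into {field name: integer value}."""
    values = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        fields = rest.split()
        # Most fields are in kB; a few (e.g. HugePages_Total) are plain counts.
        if fields and fields[0].isdigit():
            values[key.strip()] = int(fields[0])
    return values


def swap_to_ram_ratio(meminfo: dict) -> float:
    """Return configured swap as a multiple of physical RAM."""
    return meminfo["SwapTotal"] / meminfo["MemTotal"]


if __name__ == "__main__":
    meminfo_file = Path("/proc/meminfo")
    if meminfo_file.exists():
        info = parse_meminfo(meminfo_file.read_text())
        print(f"swap is {swap_to_ram_ratio(info):.2f}x RAM")
```

A result well below 2 suggests there is still room to add swap before the diminishing returns mentioned above set in; a result of 0 means no swap is configured at all.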

The basics of looking at memory consumption include using free and top (sorted by memory usage), the two most common quick evaluations of memory usage patterns. Be sure you understand the meaning of each field in the output of those commands at the very least.

With no specifics of the applications (e.g. database, network service server, real-time video processing) and the server's usage (a few power users, 100-1000s of user/client connections), I cannot think of any general recommendations with regard to dealing with the OOM problem.
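For scripted checks, roughly the same view as top sorted by memory usage can be built from the proc file system. The sketch below is an illustration rather than a replacement for those tools: it assumes Linux's /proc/<pid>/status layout with `Name:` and `VmRSS:` lines, and the function names are hypothetical.

```python
import re
from pathlib import Path


def parse_status(status_text: str):
    """Return (name, resident kB) from /proc/<pid>/status text.

    Returns None when there is no VmRSS line, as with kernel threads.
    """
    name = re.search(r"^Name:\s+(.+)$", status_text, re.MULTILINE)
    rss = re.search(r"^VmRSS:\s+(\d+)\s+kB", status_text, re.MULTILINE)
    if not name or not rss:
        return None
    return name.group(1), int(rss.group(1))


def top_memory_processes(count: int = 5, proc_root: str = "/proc"):
    """Return up to `count` (rss_kb, pid, name) tuples, largest first."""
    entries = []
    for status_file in Path(proc_root).glob("[0-9]*/status"):
        try:
            parsed = parse_status(status_file.read_text())
        except OSError:
            # The process exited or we lack permission; skip it.
            continue
        if parsed:
            name, rss_kb = parsed
            entries.append((rss_kb, int(status_file.parent.name), name))
    return sorted(entries, reverse=True)[:count]


if __name__ == "__main__":
    for rss_kb, pid, name in top_memory_processes():
        print(f"{rss_kb / 1024:10.1f} MiB  pid {pid:<8} {name}")
```

Resident size (VmRSS) is only one of the fields top reports, so this is a starting point for spotting heavy consumers, not a full accounting.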

Solution 3

You can disable overcommit, see http://www.mjmwired.net/kernel/Documentation/sysctl/vm.txt#514

Solution 4

You can use ulimit to reduce the amount of memory a process is allowed to claim before it's killed. It's very useful if your problem is one or a few runaway processes that crash your server.
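To illustrate what such a limit does, here is a hedged Python sketch using the standard `resource` module. It assumes a Linux system and uses `RLIMIT_AS` (the address-space limit that `ulimit -v` sets in the shell); the helper name and the sizes are arbitrary choices for the example. The limit is applied in a child process so the calling process stays unrestricted.

```python
import subprocess
import sys

# Code run in a child interpreter: cap its address space, then try to allocate.
CHILD = """
import resource, sys
limit, request = int(sys.argv[1]), int(sys.argv[2])
resource.setrlimit(resource.RLIMIT_AS, (limit, limit))
try:
    block = bytearray(request)
    print("allocated")
except MemoryError:
    print("refused")
"""


def try_allocation(limit_bytes: int, request_bytes: int) -> str:
    """Run the child with the given limit and report what happened."""
    result = subprocess.run(
        [sys.executable, "-c", CHILD, str(limit_bytes), str(request_bytes)],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


if __name__ == "__main__":
    GIB = 1024 ** 3
    # A 1 MiB request under a 1 GiB limit, then a 2 GiB request over it.
    print(try_allocation(1 * GIB, 1024 ** 2))
    print(try_allocation(1 * GIB, 2 * GIB))
```

With this particular limit the oversized request fails inside the process itself, which then has to handle the error; a process that does not handle it will typically crash on its own rather than take the rest of the server with it.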

If your problem is that you simply don't have enough memory to run the services you need, there are only three solutions:

- Reduce the memory used by your services by limiting caches and similar
- Create a larger swap area. It will cost you in performance, but can buy you some time.
- Buy more memory

Solution 5

Increasing the amount of physical memory may not be an effective response in all circumstances.

One way to check this is the 'atop' command, particularly these two lines.

This is our server when it was healthy:

MEM | tot 23.7G | free 10.0G | cache 3.9G | buff 185.4M | slab 207.8M |

SWP | tot 5.7G | free 5.7G | | vmcom 28.1G | vmlim 27.0G |

When it was running poorly (and before we adjusted overcommit_ratio from 50 to 90), we would see vmcom running well over 50G, the oom-killer blowing up processes every few seconds, and the load bouncing radically as NFSd child processes were killed and re-created continually.

We've recently duplicated cases where multi-user Linux terminal servers massively over-commit the virtual memory allocation but very few of the requested pages are actually consumed.

While it's not advised to follow this exact route, we adjusted overcommit_ratio from the default 50 to 90, which alleviated some of the problem. We did end up having to move all the users to another terminal server and restart to see the full benefit.
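The effect of that change on the vmlim figure can be checked with a little arithmetic. The sketch below assumes the setting changed from 50 to 90 is vm.overcommit_ratio and uses the commit limit formula from the kernel's overcommit documentation, swap plus RAM times overcommit_ratio / 100, ignoring huge pages. The function name is made up for illustration, and the inputs are the tot values from the atop lines above.

```python
def commit_limit_gib(ram_gib: float, swap_gib: float, overcommit_ratio: int) -> float:
    """Approximate commit limit: swap + RAM * overcommit_ratio / 100.

    Huge pages, which the kernel subtracts from RAM first, are ignored here.
    """
    return swap_gib + ram_gib * overcommit_ratio / 100


if __name__ == "__main__":
    ram, swap = 23.7, 5.7  # "tot" values from the MEM and SWP lines
    for ratio in (50, 90):
        limit = commit_limit_gib(ram, swap, ratio)
        print(f"overcommit_ratio={ratio}: limit is about {limit:.1f}G")
```

With a ratio of 90 this gives about 27.0G, in line with the vmlim value in the healthy output; with the default of 50 it would be about 17.6G, well below the 28.1G already committed (vmcom) even on the healthy system.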


tasasaki

Updated on September 17, 2022

Comments

- tasasaki, almost 2 years: I'm finding that on occasion my Linux box runs out of memory and it starts tearing down random processes to deal with it.

I'm curious what administrators do to avoid this. Is the only real solution to up the amount of memory (will upping the swap alone help?), or are there better ways to set up the box with software to avoid this (i.e., quotas, or some such)?

- Amaury, over 6 years: I found an answer here: serverfault.com/questions/362589/… Patrick's response is very instructive

- Aleksandar Ivanisevic, about 14 years: It's not Linux that's brain-damaged, but the programmers who allocate memory and never use it. Java VMs are notorious for this. As an admin who manages servers running Java apps, I wouldn't survive one second without overcommit.

- jlliagre, about 14 years: Java programmers don't allocate unused memory; there is no malloc in Java. I think you are confusing this with JVM settings like -Xms. In any case, increasing virtual memory size by adding swap space is a much safer solution than overcommitting.

- pehrs, about 14 years: Note that this solution won't stop your system from running out of memory or killing processes. It will only revert you to traditional Unix behaviour, where if one process eats all your memory the next one that tries to malloc will not get any (and most likely crash). If you are unlucky that next process is init (or something else that is critical), which the OOM Killer generally avoids.

- Rahim, about 14 years: While these settings work in theory, in practice things aren't nearly as clear-cut. A lot of applications rely on being able to overallocate memory. Unless you have extremely tight control over what is running on your systems, it can lead to all sorts of weird problems, including ones like pehrs describes.

- Aleksandar Ivanisevic, about 14 years: jlliagre, I said Java VMs (Virtual Machines), not Java programs, although from an admin perspective it is the same :)

- nickgrim, over 11 years: Perhaps worth mentioning here that adding the above to `/etc/sysctl.conf` will likely only take effect on the next reboot; if you want to make changes now you should use the `sysctl` command with root permissions, e.g. `sudo sysctl vm.overcommit_memory=2`

- Michael Hampton, over 10 years: You have contradicted yourself here. At the top you say "by default vm.overcommit_ratio is 0" and then at the bottom you say "by default linux doesn't overcommit". If the latter were true, vm.overcommit_ratio would be 2 by default!

- c4f4t0r, over 10 years: With vm.overcommit_ratio = 0, malloc doesn't allocate more memory than your physical RAM, so for me that means don't overcommit; overcommit is when you can allocate more virtual memory than your physical RAM.

- Michael Hampton, over 10 years: Yes, you have misunderstood.

- c4f4t0r, over 10 years: You misunderstood: the default, 0, doesn't allow allocating more virtual memory than RAM, and 2 doesn't allow going over vm.overcommit_ratio + swap space, so if I misunderstood, tell me what.

- c4f4t0r, over 10 years: If Linux overcommits by default, why on my server with 8GB of RAM does malloc fail to allocate 9GB of virtual memory? I know you don't like my answer, but the truth is Linux doesn't overcommit by default, and vm.overcommit_ratio = 0 means that. Try for yourself.

- c4f4t0r, over 10 years: Maybe I don't speak very well. This is the @voretaq7 answer: "By default Linux has a somewhat brain-damaged concept of memory management: it lets you allocate more memory than your system has". I don't think so; and as you know, and as you said, "Of course. 'Obvious overcommits' are refused", so for me the answer isn't correct.

- Bastian Voigt, about 7 years: An answer should be more than just a link to some lengthy linux kernel documentation file. -1

- Stephane, almost 7 years: I cannot find the `/etc/sysctl.conf` file in Lubuntu 16.04.

- usr, over 6 years: It definitely is Linux that is brain damaged. The OOM killer by design, and unfixably, introduces the most severe instability into a server. Unfortunately, programs have come to rely on this behavior. This means that fixing it is very hard and Linux has to keep doing this forever.

- Roel Van de Paar, about 4 years: On Ubuntu there is no vm.oom-kill, see superuser.com/questions/1150215/…

- cherouvim, about 4 years: Perfectly explained.

- Per Lundberg, over 2 years: In case anyone is thinking about doing this to "fix" any OOM issues they are running into (like I did), PLEASE RECONSIDER. I set `vm.overcommit_memory = 2` with about 3.8 GiB RAM "free", but it destroyed my desktop session. Unfortunately, I added it to `/etc/sysctl.conf` as well, which meant that after I rebooted and re-logged on, it would get destroyed again. (Using Debian and Cinnamon in my case, but I'm not sure other desktop environments are any better in this regard.) I think the idea is nice, but the reality is that many real-world applications rely on being able to overcommit memory.

- golimar, over 2 years: @PerLundberg Did you get errors like `...traps: chrome[5867] trap invalid opcode...` in your applications? I got this all over the place after trying these settings on Debian 11. Some programs worked fine, others like Chrome, Chromium, games, Steam just crashed a few seconds after startup

- Per Lundberg, over 2 years: @golimar Hmm, unsure. I only know that things like the Cinnamon window manager seemed to die, making the system incredibly hard to use. :) I can't tell exactly what was logged in `/var/log/kern.log` if anything, unfortunately. :/

- Admin, about 2 years: Where is the `vm.oom-kill` option documented? It seems to be named in lots of places on the net, but not to exist in the kernel (try issuing `sysctl vm.oom-kill`) nor in its documentation.