extract the 4th column from a csv file using unix command

Solution 1

UPDATE:

Actually, a much easier way is to set the record separator in gawk:

$ gawk 'BEGIN{RS="\"\n"; FS=","}{print $4}' myFile.csv

"col4

"4th column

"4th column2

However, this will remove the trailing " from the end of each column. To fix that you can print it yourself:

$ gawk 'BEGIN{RS="\"\n"; FS=","}{print $4"\""}' myFile.csv

"col4"

"4th column"

"4th column2"

If you don't want the quotes at all, you can set the field separator to ",":

$ gawk 'BEGIN{RS="\"\n"; FS="\",\""}{print $3}' myFile.csv

col3

4th column

4th column2

The only way I can think of One way of doing this is to first modify the file and then parse it. In your example, the newline that actually separates two records is always following a ":

"col1","col2","col3","col4" <-- here

1,"text1","<p>big <-- no "

If that is the case for the entire file, you can replace all newlines that are not immediately after a " with a placeholder and so have everything in a single line. You can then parse normally with gawk and finally replace the placeholder with the newline again. I will use the string &%& as a placeholder since it is unlikely to exist in your file:

$ perl -pe 's/"\s*\n/"&%&/; s/\n//g; s/&%&/\n/;' myFile.csv | awk -F, '{print $4}'

"col4"

"4th column"

"4th column2"

The -p flag for perl means print each line of the input file after applying the script given by -e. Then there are 3 substitution (s/foo/bar/) commands:

s/"\s*\n/"&%&/: This will find any"which is followed by 0 or more whitespace characters (\s*) and then a newline character (\n). It will replace that with"&%&. The quotes are added to preserve the format and the&%&is just a random placeholder, it could be anything that does not appear in your file.s/\n//g;: since the real newlines have been replaced with the placeholder, we can now safely remove all remaining newlines in this record. This means that all lines of the current record have now been concatenated into the current line.s/&%&/\n/: This turns the placeholder back into a normal new line.

To understand the output of the command run it without gawk:

$ perl -pe 's/"\s*\n/"&%&/; s/\n//g; s/&%&/\n/;' myFile.csv

"col1","col2","col3","col4"

1,"text1","<p>big html text</p>","4th column"

2,"text2","<p>big2 html2 text2</p>","4th column2"

So, you now have your long records on single lines and this is perfect food for gawk.

You can also do it directly in Perl:

perl -ne '$/="\"\n"; chomp;@a=split(/,/);print "$a[3]\"\n"' myFile.csv

"col4"

"4th column"

"4th column2"

This is using a bit more Perl magic. The $/special variable is the input record separator. By setting it to "\n we tell Perl to split lines not at \n but only at "\n" so that each record will be treated as a single line. Once that is done, chomp removes the newline from the end of the line (for printing later) and split splits each record (on ,) and saves it in the array @a. Finally, we print the 4th element of the array (arrays are numbered from 0 so that is $a[3]) which is the 4th column.

And even more magic, turn on auto spitting (-a) and split on commas (F","). This will split each record into the special @F array and you can print the 4th element of the array:

$ perl -F"," -ane '$/="\"\n";chomp;print "$F[3]"' myFile.csv

"col4"

"4th column"

"4th column2"

Solution 2

I would recommend using a battle-tested CSV parsing module. For example:

perl -MText::CSV -E '

$csv = Text::CSV->new({binary=>1});

while ($row = $csv->getline(STDIN)) {say $row->[3]}

' < file.csv

col4

4th column

4th column2

or this produces the same results:

ruby -rcsv -e 'CSV.foreach(ARGV.shift) {|row| puts row[3]}' file.csv

Solution 3

Python :

python -c "import csv,sys; print '\n'.join([ r[3] for r in csv.reader(open(sys.argv[1]))])" myfile.csv

A memory-conservative solution for large files that iterates through the file a line at a time unlike the above approach that loads the contents of the file into memory via a list

#!/usr/bin/env python

import sys

import csv

with open(sys.argv[1]) as f:

for row in csv.reader(f):

print(row[3])

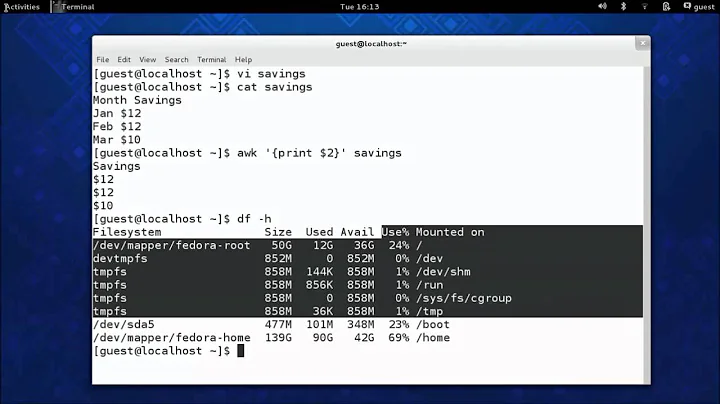

TEST RESULT of all Solutions:

OS: Ubuntu 12.04

Public CSV Data Download from : http://seanlahman.com/baseball-archive/statistics/

Versions details

root@ubuntu:~# python --version

Python 2.7.3

root@ubuntu:~# ruby --version

ruby 1.8.7 (2011-06-30 patchlevel 352) [i686-linux]

root@ubuntu:~# perl --version

This is perl 5, version 14, subversion 2 (v5.14.2) built for i686-linux-gnu-thread-multi-64int

Result with time

root@ubuntu:~# time python -c "import csv,sys; print '\n'.join([ r[3] for r in csv.reader(open(sys.argv[1]))])" Master.csv > /tmp/python

real 0m1.112s

user 0m0.056s

sys 0m0.316s

root@ubuntu:~# time ruby -rcsv -e 'CSV.foreach(ARGV.shift) {|row| puts row[3]}' Master.csv > /tmp/ruby

real 0m24.582s

user 0m23.397s

sys 0m0.448s

root@ubuntu:~# time perl -MText::CSV -E '

> $csv = Text::CSV->new({binary=>1});

> while ($row = $csv->getline(STDIN)) {say $row->[3]}

> ' < Master.csv > /tmp/perl

real 0m7.049s

user 0m5.876s

sys 0m0.468s

Solution 4

if it is unix style "\n" ended lines

tr -d "\n" < myfile.csv | awk 'BEGIN{RS=","} !(NR % 4)'

Some fields are multi-lines

the tr -d "\n"deletes all the newlines character, creating a stream of "," separated values.

the awk is told to use "," as line separator and to print every time (line number modulo 4) is 0.

This only works if the 4th field is the last field (as in your sample). If thats not the case :

tr -d "\n" < myfile.csv | awk 'BEGIN{RS=","; last=12} (++c == 4) (c == last) {c=0}'

It count lines, print the line when count is 4 and reset the count when last field is reached.

Solution 5

Get the 4th elements of your CSV using.

cut -d , -f 4 myFile.csv

Save it to a file with:

cut -d , -f 4 myFile.csv | cat >> my4thEltsFile.csv

Related videos on Youtube

agstudy

CTO - data scientist : beatyourbill I am the author of ggPMX R package and R sqlserver package You can reach me at : Twitter github

Updated on September 18, 2022Comments

-

agstudy over 1 year

I have a csv file formatted as below.

"col1","col2","col3","col4" "1","text1","<p>big html text</p> ","4th column" "2","text2","<p>big2 html2 text2</p> ","4th column2"I want to extract the 4th column using. I think that

awkis the best tool for this ( let me know if I am wrong). I tried thisawk -F, '{print $4}' myFile.csvbut it fails. I think because the 3rd column is multiline one. How can I use

awkor any other unix command to extract the 4th column. I am looking for an efficient solution since my real file is big (> 2GB)-

slm over 10 yearsWith multi-line CSV files using awk becomes even more difficult. You might want to check out using Perl and Text::CSV. stackoverflow.com/questions/3065095/…

slm over 10 yearsWith multi-line CSV files using awk becomes even more difficult. You might want to check out using Perl and Text::CSV. stackoverflow.com/questions/3065095/… -

terdon over 10 yearsWhat does the rest of the file look line? Is that the only record in the file or are there multiple records, each of which can contain a new line?

terdon over 10 yearsWhat does the rest of the file look line? Is that the only record in the file or are there multiple records, each of which can contain a new line? -

terdon over 10 yearsDamn, and the entries are not separated by more than one newline?

terdon over 10 yearsDamn, and the entries are not separated by more than one newline? -

agstudy over 10 years@slm one you say complicated , do you mean that the solution should be similar to this?

-

slm over 10 years@agstudy - perl runs on windows and linux. Also what are you planning on using

slm over 10 years@agstudy - perl runs on windows and linux. Also what are you planning on usingawkon? Also you tagged this Q as linux so you might want to change that if you need this solution on something else! -

Gregg Leventhal over 10 yearsYou just needed to put the comma in single quotes like -F','

-

-

agstudy over 10 yearsThanks for your answer. I am really sorry. I edited my question and if you notes I have

"1","text1","<p>binot1,"text1","<p>bi. Does this solution still work? -

terdon over 10 years@agstudy yes, as long as the newline at the end of the string is still just after a

terdon over 10 years@agstudy yes, as long as the newline at the end of the string is still just after a". I am writing an explanation of how this thing works, will update in a couple of minutes. -

agstudy over 10 yearsshould I install something to get MText?

-

Angel Todorov over 10 yearsYou need to

cpan install Text::CSV -

agstudy over 10 yearsI am under windows . cpan don't work. But thanks for your answer.(+1)

-

Angel Todorov over 10 yearsWhat distribution of Perl do you use in windows? There must be something to install new modules

-

terdon over 10 years@agstudy see updated answer for a (much) simpler way using

terdon over 10 years@agstudy see updated answer for a (much) simpler way usinggawk. -

agstudy over 10 yearsI have

v5.8.8 built for msys -

agstudy over 10 yearsdoes this solution is efficient?

-

agstudy over 10 years+1! can you please explain a little bit the commands here.

-

Rahul Patil over 10 yearsfile like XML,CSV should be parse using languages like Python,Perl,Ruby..

-

agstudy over 10 yearsI am an R/python developer. So If I should get a good reason to ask about efficiency here, isn't it? Also here we have some good solutions using unix command.

-

Emmanuel over 10 years@agstudy while editing and simplifying the script I added an error (forgot the "!" in the awk) .

-

Rahul Patil over 10 yearsSorry sir, I don't have good ans..

-

agstudy over 10 years:) come on! you have a python good answer( I already upvote). I am just asking if you solution is efficient to deal with a 2GB file.(personally I prefer to use python also, but here is not as efficient as I want).

-

Mathias Begert over 10 years@RahulPatil,do I have your permission to edit this and provide a better solution for large files?

Mathias Begert over 10 years@RahulPatil,do I have your permission to edit this and provide a better solution for large files? -

Rahul Patil over 10 years@1_CR Sure.. but what should I do for the same ?

-

Rahul Patil over 10 years@agstudy I have tested 3M public data csv data seanlahman.com/baseball-archive/statistics, see the test result : paste.ubuntu.com/6606611 but gawk giving on one raw

-

agstudy over 10 years@RahulPatil excellent! thank you for the benchmarking. Unfortunately I can't test in my real file ( i don't have a linux machine right now) maybe you can add the benchmarking to your answer. Thanks again for your effort.

-

Mathias Begert over 10 yearsRahulPatil, @agstudy, please see the edited version

Mathias Begert over 10 yearsRahulPatil, @agstudy, please see the edited version -

Rahul Patil over 10 years@agstudy the data I've in CSV is simple(comma separated) not complex one, so it's not accurate benchmark result, I hope you will test update in your question :)

-

Johannes Jarolim over 9 yearsBest solution here by far. Have an up vote.