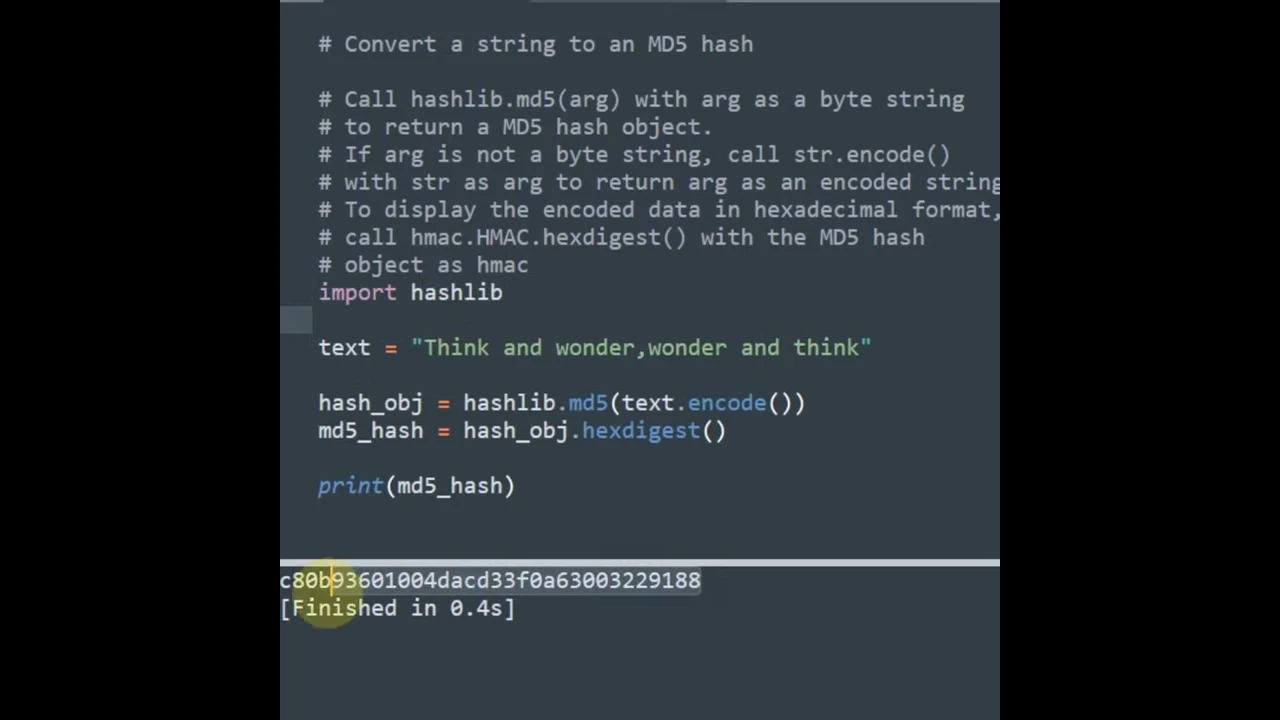

Get MD5 hash of big files in Python

Solution 1

Break the file into 8192-byte chunks (or some other multiple of 128 bytes) and feed them to MD5 consecutively using update().

This takes advantage of the fact that MD5 has 128-byte digest blocks (8192 is 128×64). Since you're not reading the entire file into memory, this won't use much more than 8192 bytes of memory.

In Python 3.8+ you can do

import hashlib

with open("your_filename.txt", "rb") as f:

file_hash = hashlib.md5()

while chunk := f.read(8192):

file_hash.update(chunk)

print(file_hash.digest())

print(file_hash.hexdigest()) # to get a printable str instead of bytes

Solution 2

You need to read the file in chunks of suitable size:

def md5_for_file(f, block_size=2**20):

md5 = hashlib.md5()

while True:

data = f.read(block_size)

if not data:

break

md5.update(data)

return md5.digest()

NOTE: Make sure you open your file with the 'rb' to the open - otherwise you will get the wrong result.

So to do the whole lot in one method - use something like:

def generate_file_md5(rootdir, filename, blocksize=2**20):

m = hashlib.md5()

with open( os.path.join(rootdir, filename) , "rb" ) as f:

while True:

buf = f.read(blocksize)

if not buf:

break

m.update( buf )

return m.hexdigest()

The update above was based on the comments provided by Frerich Raabe - and I tested this and found it to be correct on my Python 2.7.2 windows installation

I cross-checked the results using the 'jacksum' tool.

jacksum -a md5 <filename>

http://www.jonelo.de/java/jacksum/

Solution 3

Below I've incorporated suggestion from comments. Thank you all!

Python < 3.7

import hashlib

def checksum(filename, hash_factory=hashlib.md5, chunk_num_blocks=128):

h = hash_factory()

with open(filename,'rb') as f:

for chunk in iter(lambda: f.read(chunk_num_blocks*h.block_size), b''):

h.update(chunk)

return h.digest()

Python 3.8 and above

import hashlib

def checksum(filename, hash_factory=hashlib.md5, chunk_num_blocks=128):

h = hash_factory()

with open(filename,'rb') as f:

while chunk := f.read(chunk_num_blocks*h.block_size):

h.update(chunk)

return h.digest()

Original post

If you want a more Pythonic (no while True) way of reading the file check this code:

import hashlib

def checksum_md5(filename):

md5 = hashlib.md5()

with open(filename,'rb') as f:

for chunk in iter(lambda: f.read(8192), b''):

md5.update(chunk)

return md5.digest()

Note that the iter() function needs an empty byte string for the returned iterator to halt at EOF, since read() returns b'' (not just '').

Solution 4

Here's my version of @Piotr Czapla's method:

def md5sum(filename):

md5 = hashlib.md5()

with open(filename, 'rb') as f:

for chunk in iter(lambda: f.read(128 * md5.block_size), b''):

md5.update(chunk)

return md5.hexdigest()

Solution 5

Using multiple comment/answers in this thread, here is my solution :

import hashlib

def md5_for_file(path, block_size=256*128, hr=False):

'''

Block size directly depends on the block size of your filesystem

to avoid performances issues

Here I have blocks of 4096 octets (Default NTFS)

'''

md5 = hashlib.md5()

with open(path,'rb') as f:

for chunk in iter(lambda: f.read(block_size), b''):

md5.update(chunk)

if hr:

return md5.hexdigest()

return md5.digest()

- This is "pythonic"

- This is a function

- It avoids implicit values: always prefer explicit ones.

- It allows (very important) performances optimizations

And finally,

- This has been built by a community, thanks all for your advices/ideas.

Related videos on Youtube

JustRegisterMe

Updated on September 17, 2021Comments

-

JustRegisterMe over 2 years

I have used hashlib (which replaces md5 in Python 2.6/3.0) and it worked fine if I opened a file and put its content in

hashlib.md5()function.The problem is with very big files that their sizes could exceed RAM size.

How to get the MD5 hash of a file without loading the whole file to memory?

-

XTL about 12 yearsI would rephrase: "How to get the MD5 has of a file without loading the whole file to memory?"

XTL about 12 yearsI would rephrase: "How to get the MD5 has of a file without loading the whole file to memory?"

-

-

Oliver Turner almost 15 yearsYou can just as effectively use a block size of any multiple of 128 (say 8192, 32768, etc.) and that will be much faster than reading 128 bytes at a time.

-

JustRegisterMe almost 15 yearsThanks jmanning2k for this important note, a test on 184MB file takes (0m9.230s, 0m2.547s, 0m2.429s) using (128, 8192, 32768), I will use 8192 as the higher value gives non-noticeable affect.

-

mrkj over 13 yearsBetter still, use something like

128*md5.block_sizeinstead of8192. -

Frerich Raabe almost 13 yearsWhat's important to notice is that the file which is passed to this function must be opened in binary mode, i.e. by passing

rbto theopenfunction. -

tchaymore over 12 yearsThis is a simple addition, but using

hexdigestinstead ofdigestwill produce a hexadecimal hash that "looks" like most examples of hashes. -

Erik Kaplun over 11 yearsShouldn't it be

if len(data) < block_size: break? -

Admin over 11 yearsErik, no, why would it be? The goal is to feed all bytes to MD5, until the end of the file. Getting a partial block does not mean all the bytes should not be fed to the checksum.

Admin over 11 yearsErik, no, why would it be? The goal is to feed all bytes to MD5, until the end of the file. Getting a partial block does not mean all the bytes should not be fed to the checksum. -

Harvey about 11 years@FrerichRaabe: Thanks. I always forget that and then my code blows up on Windows machines.

-

Harvey about 11 yearsmrkj: I think it's more important to pick your read block size based on your disk and then to ensure that it's a multiple of

md5.block_size. -

Hawkwing almost 11 yearsOne suggestion: make your md5 object an optional parameter of the function to allow alternate hashing functions, such as sha256 to easily replace MD5. I'll propose this as an edit, as well.

-

Hawkwing almost 11 yearsalso: digest is not human-readable. hexdigest() allows a more understandable, commonly recogonizable output as well as easier exchange of the hash

-

Bastien Semene over 10 yearsOthers hash formats are out of the scope of the question, but the suggestion is relevant for a more generic function. I added a "human readable" option according to your 2nd suggestion.

Bastien Semene over 10 yearsOthers hash formats are out of the scope of the question, but the suggestion is relevant for a more generic function. I added a "human readable" option according to your 2nd suggestion. -

cod3monk3y about 10 yearsthe

b''syntax was new to me. Explained here. -

ThorSummoner about 9 years@Harvey Is there any rule of thumb for common disk block sizes? Or otherwise any recommendations for determining optimal block size?

-

Harvey about 9 years@ThorSummoner: Not really, but from my working finding optimum block sizes for flash memory, I'd suggest just picking a number like 32k or something easily divisible by 4, 8, or 16k. For example, if your block size is 8k, reading 32k will be 4 reads at the correct block size. If it's 16, then 2. But in each case, we're good because we happen to be reading an integer multiple number of blocks.

-

Chris about 9 yearsThis is not answering the question and 20 MB is hardly considered a very big file that may not fit into RAM as discussed here.

-

user2084795 almost 9 yearsMandatory to get the right hashsum: Reset the position where to read from! I.e. by adding

f.seek(0)to the first line of the first algorithm. Otherwise the start location is unknown and may be in the middle of the file. -

Jürgen A. Erhard over 8 years"while True" is quite pythonic.

-

Farside almost 8 yearsplease, format the code in the answer, and read this section before giving answers: stackoverflow.com/help/how-to-answer

Farside almost 8 yearsplease, format the code in the answer, and read this section before giving answers: stackoverflow.com/help/how-to-answer -

Steve Barnes almost 7 years@user2084795

Steve Barnes almost 7 years@user2084795openalways opens a fresh file handle with the position set to the start of the file, (unless you open a file for append). -

Steve Barnes almost 7 yearsThis will not work correctly as it is reading the file in text mode line by line then messing with it and printing the md5 of each stripped, encoded, line!

Steve Barnes almost 7 yearsThis will not work correctly as it is reading the file in text mode line by line then messing with it and printing the md5 of each stripped, encoded, line! -

EnemyBagJones about 6 yearsCan you elaborate on how 'hr' is functioning here?

-

Bastien Semene about 6 years@EnemyBagJones 'hr' stands for human readable. It returns a string of 32 char length hexadecimal digits: docs.python.org/2/library/md5.html#md5.md5.hexdigest

Bastien Semene about 6 years@EnemyBagJones 'hr' stands for human readable. It returns a string of 32 char length hexadecimal digits: docs.python.org/2/library/md5.html#md5.md5.hexdigest -

Naltharial about 5 yearsWhat possible reason is there to replace a simple and clear loop with a functools.reduce abberation containing multiple lambdas? I'm not sure if there's any convention on programming this hasn't broken.

-

Sebastian Wagner about 5 yearsMy main problem was that

hashlibs API doesn't really play well with the rest of Python. For example let's takeshutil.copyfileobjwhich closely fails to work. My next idea wasfold(akareduce) which folds iterables together into single objects. Like e.g. a hash.hashlibdoesn't provide operators which makes this a bit cumbersome. Nevertheless were folding an iterables here. -

Boris Verkhovskiy over 4 yearsIn Python 3.8+ you can just do

while chunk := f.read(8192): -

Boris Verkhovskiy over 4 yearsIf you can, you should use

hashlib.blake2binstead ofmd5. Unlike MD5, BLAKE2 is secure, and it's even faster. -

Ami about 4 years@Boris, you can't actually say that BLAKE2 is secure. All you can say is that it hasn't been broken yet.

-

Boris Verkhovskiy about 4 years@vy32 you can't say it's definitely going to be broken either. We'll see in 100 years, but it's at least better than MD5 which is definitely insecure.

-

Ami about 4 years@Boris, I didn't mean to imply to you that it's going to be broken. All we know is that it hasn't been broken yet. MD5 being "broken" is a funny thing. It's still not susceptible to the second pre-image attack, which is why it's still widely used in digital forensics.

-

Soumya almost 4 yearsis there a better way we can read the small chunks in parallel and get the hash, for better processing time/ performance if the file size is too big?

-

Tom de Geus over 3 yearsIs there an automatic way to set the block-size for ones disk?

Tom de Geus over 3 yearsIs there an automatic way to set the block-size for ones disk?