How do NULL values affect performance in a database search?

Solution 1

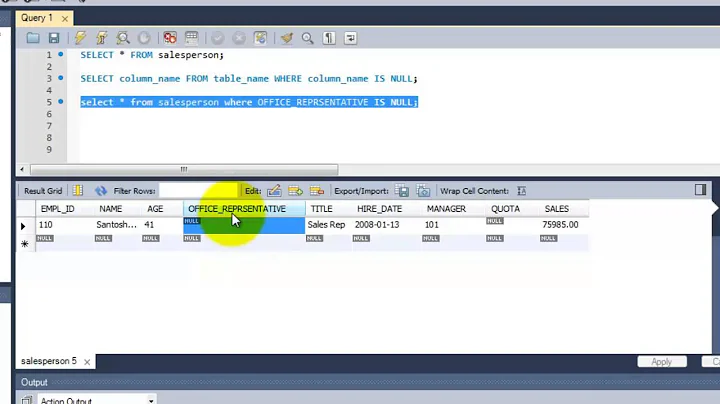

In Oracle, NULL values are not indexed, i.e. this query:

```sql
SELECT *
FROM table
WHERE column IS NULL
```

will always use a full table scan, since the index doesn't contain the values you need.

Moreover, this query:

```sql
SELECT column
FROM table
ORDER BY column
```

will also use a full table scan and a sort, for the same reason.

If your values don't intrinsically allow NULLs, then mark the column as NOT NULL.
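A minimal sketch of that advice, using Python's built-in `sqlite3` module as a stand-in for Oracle or SQL Server (the `customer` table and its columns are hypothetical): the column is declared `NOT NULL`, the catalog records the constraint, and the engine then rejects a NULL on insert instead of silently storing it.

```python
import sqlite3

# In-memory SQLite database as a stand-in; "customer", "name", "nickname" are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (name TEXT NOT NULL, nickname TEXT)")

# PRAGMA table_info returns (cid, name, type, notnull, dflt_value, pk) for each column.
not_null = {row[1]: bool(row[3]) for row in conn.execute("PRAGMA table_info(customer)")}
print(not_null)  # {'name': True, 'nickname': False}

# The declared constraint is enforced: a NULL in "name" is refused.
try:
    conn.execute("INSERT INTO customer (name, nickname) VALUES (NULL, 'x')")
    rejected = False
except sqlite3.IntegrityError:
    rejected = True
print(rejected)  # True
```

On an existing Oracle table the equivalent change is `ALTER TABLE t MODIFY (col NOT NULL)`; on SQL Server it is `ALTER TABLE t ALTER COLUMN col <type> NOT NULL`. Both fail if the column already contains NULLs, so those rows have to be cleaned up first.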

Solution 2

Short answer: yes, conditionally!

The main issue with null values and performance has to do with forward lookups.

If you insert a row with null values into a table, it's placed in the page it naturally belongs to. Any query looking for that record will find it in the appropriate place. Easy so far...

...but let's say the page fills up, and now that row is cuddled in amongst the other rows. Still going well...

...until the row is updated, and the null value now contains something. The row's size has increased beyond the space available to it, so the DB engine has to do something about it.

The fastest thing for the server to do is to move the row off that page into another, and to replace the row's entry with a forward pointer. Unfortunately, this requires an extra lookup when a query is performed: one to find the natural location of the row, and one to find its current location.
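The mechanism can be sketched as a toy heap in Python. Everything here is an assumption for illustration: the 100-byte page, the 8-byte pointer stub and the append-to-last-page placement policy are hypothetical, and real engines differ in detail (SQL Server leaves forwarding pointers in heaps, while Oracle calls the same effect row migration). The only point is the read count: once a row has been moved, fetching it by its original address costs two page reads instead of one.

```python
PAGE_CAPACITY = 100  # hypothetical page size in bytes; real pages are several KB
POINTER_SIZE = 8     # hypothetical size of the forwarding stub left behind


class Heap:
    """Toy heap: each page maps slot -> row bytes or a stub ("fwd", page_no, slot)."""

    def __init__(self):
        self.pages = [{}]

    @staticmethod
    def _used(page):
        return sum(POINTER_SIZE if isinstance(v, tuple) else len(v) for v in page.values())

    def insert(self, data):
        # Append to the last page, opening a new page when the row does not fit.
        if self._used(self.pages[-1]) + len(data) > PAGE_CAPACITY:
            self.pages.append({})
        page_no = len(self.pages) - 1
        slot = len(self.pages[page_no])
        self.pages[page_no][slot] = data
        return (page_no, slot)

    def update(self, rid, data):
        # Simplification: assumes rid still holds the row itself, not a stub.
        page_no, slot = rid
        page = self.pages[page_no]
        growth = len(data) - len(page[slot])
        if self._used(page) + growth <= PAGE_CAPACITY:
            page[slot] = data  # still fits: update in place
        else:
            new_rid = self.insert(data)     # move the row to another page...
            page[slot] = ("fwd", *new_rid)  # ...and leave a pointer at the old address

    def fetch(self, rid):
        """Return (row, pages_read); each forwarding hop costs one more page read."""
        page_no, slot = rid
        value, reads = self.pages[page_no][slot], 1
        while isinstance(value, tuple):
            _, page_no, slot = value
            value, reads = self.pages[page_no][slot], reads + 1
        return value, reads


heap = Heap()
rids = [heap.insert(b"x" * 20) for _ in range(5)]  # 5 x 20 bytes: page 0 is now full
print(heap.fetch(rids[2])[1])                      # 1
heap.update(rids[2], b"x" * 50)                    # e.g. a NULL column gets filled in
print(heap.fetch(rids[2])[1])                      # 2 (natural page, then new page)
```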

So, the short answer to your question is yes: making those fields non-nullable will help search performance. This is especially true if the null fields in the records you search are often updated to non-null values.

Of course, there are other penalties (notably I/O, although to a tiny extent index depth) associated with larger datasets, and then you have application issues with disallowing nulls in fields that conceptually require them, but hey, that's another problem :)

Solution 3

An extra answer to draw some extra attention to David Aldridge's comment on Quassnoi's accepted answer.

The statement:

> this query:
>
> `SELECT * FROM table WHERE column IS NULL`
>
> will always use full table scan

is not true. Here is a counterexample using an index with a literal value:

```
SQL> create table mytable (mycolumn)
  2  as
  3  select nullif(level,10000)
  4  from dual
  5  connect by level <= 10000
  6  /

Table created.

SQL> create index i1 on mytable(mycolumn,1)
  2  /

Index created.

SQL> exec dbms_stats.gather_table_stats(user,'mytable',cascade=>true)

PL/SQL procedure successfully completed.

SQL> set serveroutput off
SQL> select /*+ gather_plan_statistics */ *
  2  from mytable
  3  where mycolumn is null
  4  /

  MYCOLUMN
----------


1 row selected.

SQL> select * from table(dbms_xplan.display_cursor(null,null,'allstats last'))
  2  /

PLAN_TABLE_OUTPUT
-----------------------------------------------------------------------------------------
SQL_ID  daxdqjwaww1gr, child number 0
-------------------------------------
select /*+ gather_plan_statistics */ * from mytable where mycolumn
is null

Plan hash value: 1816312439

-----------------------------------------------------------------------------------
| Id  | Operation        | Name | Starts | E-Rows | A-Rows |   A-Time   | Buffers |
-----------------------------------------------------------------------------------
|   0 | SELECT STATEMENT |      |      1 |        |      1 |00:00:00.01 |       2 |
|*  1 |  INDEX RANGE SCAN| I1   |      1 |      1 |      1 |00:00:00.01 |       2 |
-----------------------------------------------------------------------------------

Predicate Information (identified by operation id):
---------------------------------------------------

   1 - access("MYCOLUMN" IS NULL)

19 rows selected.
```

As you can see, the index is being used.

Regards, Rob.
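For contrast, a sketch of the same query shape in SQLite via Python's `sqlite3` module. SQLite, like SQL Server according to Quassnoi's comment below, keeps NULL keys in ordinary B-tree indexes, so a plain single-column index should already serve `IS NULL` without the literal-column trick. This says nothing about Oracle, and the exact plan wording differs between SQLite versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE mytable (mycolumn INTEGER)")
# Same data as the Oracle example: 1..9999 plus a single NULL.
conn.executemany(
    "INSERT INTO mytable VALUES (?)",
    [(None if n == 10000 else n,) for n in range(1, 10001)],
)
conn.execute("CREATE INDEX i1 ON mytable (mycolumn)")  # no literal column added
conn.execute("ANALYZE")

# The 4th column of each EXPLAIN QUERY PLAN row is the human-readable detail.
plan = [row[3] for row in conn.execute(
    "EXPLAIN QUERY PLAN SELECT * FROM mytable WHERE mycolumn IS NULL")]
nulls = conn.execute("SELECT COUNT(*) FROM mytable WHERE mycolumn IS NULL").fetchone()[0]
print(plan)   # wording varies by version; the detail should name index i1
print(nulls)  # 1
```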

Solution 4

I would say that testing is required, but it is nice to know other people's experiences. In my experience on MS SQL Server, nulls can and do cause massive performance issues (differences). In a very simple test I just ran, a query returned in 45 seconds when NOT NULL was set on the relevant columns in the CREATE TABLE statement, and ran for over 25 minutes when it wasn't (I gave up waiting and just took a peek at the estimated query plan).

The test data is 1 million rows x 20 columns, each value built from 62 random lowercase alphabetic characters, on an i5-3320 with a normal HD and 8 GB RAM (SQL Server using 2 GB), running SQL Server 2012 Enterprise Edition on Windows 8.1. It's important to use random, irregular data to make the test a realistic "worst" case. In both cases the table was recreated and reloaded with random data, which took about 30 seconds, on database files that already had a suitable amount of free space.

```sql
select count(field0) from myTable where field0
not in (select field1 from myTable)
-- returns 1000000
```

```sql
CREATE TABLE [dbo].[myTable]([Field0] [nvarchar](64) , ...
```

vs

```sql
CREATE TABLE [dbo].[myTable]([Field0] [nvarchar](64) not null,
```

For performance reasons, both tables had the table option `data_compression = page` set, and everything else was left at its default. No indexes.

```sql
alter table myTable rebuild partition = all with (data_compression = page);
```

Not having nulls is a requirement for in-memory optimized tables, which I am not using here; however, SQL Server will obviously do whatever is fastest, which in this specific case appears to be massively in favor of having no nulls in the data and declaring NOT NULL in the CREATE TABLE statement.

Any subsequent queries of the same form on this table return in two seconds, so I assume the default statistics, and possibly the (1.3 GB) table fitting into memory, are working well, e.g.:

```sql
select count(field19) from myTable where field19
not in (select field18 from myTable)
-- returns 1000000
```

As an aside, not having nulls, and not having to deal with null cases, also makes queries much simpler, shorter, less error-prone and usually faster. If at all possible, it's best to avoid nulls generally, on MS SQL Server at least, unless they are explicitly required and cannot reasonably be worked out of the solution.

Starting with a new table and scaling it up to 10M rows / 13 GB, the same query takes 12 minutes, which is very respectable considering the hardware and that no indexes are in use. For info, the query was completely I/O bound, with I/O hovering between 20 MB/s and 60 MB/s. A repeat of the same query took 9 minutes.
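The timing query in this answer uses `NOT IN` against a nullable column, which also changes its meaning. Under SQL's three-valued logic, `x NOT IN (subquery)` can never be TRUE once the subquery returns a NULL, so an engine has to allow for that case whenever the column is nullable. That is one plausible contributor to the plan difference described above, not something the answer verified. A small sketch with SQLite through Python's `sqlite3` module (the three sample rows are made up):

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE myTable (field0 TEXT, field1 TEXT)")  # both columns nullable
conn.executemany("INSERT INTO myTable VALUES (?, ?)",
                 [("a", "b"), ("c", "d"), ("e", None)])  # one NULL in field1


def scalar(sql):
    return conn.execute(sql).fetchone()[0]


# x NOT IN (..., NULL) is UNKNOWN or FALSE, never TRUE, so every row is filtered out.
not_in = scalar("SELECT COUNT(field0) FROM myTable "
                "WHERE field0 NOT IN (SELECT field1 FROM myTable)")

# NOT EXISTS treats NULL = 'e' as "no match", so rows without a match survive.
not_exists = scalar("SELECT COUNT(field0) FROM myTable AS t "
                    "WHERE NOT EXISTS (SELECT 1 FROM myTable AS s WHERE s.field1 = t.field0)")

print(not_in, not_exists)  # 0 3
```

Rewriting as `NOT EXISTS`, or declaring both columns `NOT NULL`, removes the trap; it is a concrete case of null handling making queries more error-prone.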

Solution 5

If your column doesn't contain NULLs, it is best to declare it NOT NULL; the optimizer may then be able to take a more efficient path.

However, if you have NULLs in your column you don't have much choice (a non-null default value may create more problems than it solves).

As Quassnoi mentioned, NULLs are not indexed in Oracle; or, to be more precise, a row won't be indexed if all the indexed columns are NULL. This means:

- NULLs can potentially speed up your searches, because the index will have fewer rows

- you can still index the NULL rows if you add another NOT NULL column to the index or even a constant.

The following script demonstrates a way to index NULL values:

```sql
CREATE TABLE TEST AS
SELECT CASE
         WHEN MOD(ROWNUM, 100) != 0 THEN
           object_id
         ELSE
           NULL
       END object_id
FROM all_objects;

CREATE INDEX idx_null ON test(object_id, 1);

SET AUTOTRACE ON EXPLAIN

SELECT COUNT(*) FROM TEST WHERE object_id IS NULL;
```
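The indexing rule described in this answer can be modelled in a few lines of Python. This is a toy, not Oracle: a plain list of `(key, rowid)` pairs stands in for the B-tree, the 1,000-row table is hypothetical, and the only behaviour modelled is that a row gets no index entry when all of its indexed columns are NULL. It illustrates both bullet points: the plain index is smaller, but it cannot locate the NULL rows (nor, since it is missing rows, return the whole table in order), while adding the constant column indexes every row.

```python
# Hypothetical 1,000-row table; like the TEST script, every 100th object_id is NULL.
rows = [None if n % 100 == 0 else n for n in range(1, 1001)]


def build_index(values, constant_column):
    """Toy Oracle B-tree as a plain list of (key, rowid); all-NULL keys get no entry."""
    index = []
    for rowid, value in enumerate(values):
        key = (value, 1) if constant_column else (value,)
        if all(part is None for part in key):
            continue  # the modelled rule: nothing is stored for this row
        index.append((key, rowid))
    return index


def null_rowids(index):
    # What "WHERE object_id IS NULL" could find by reading only the index.
    return [rowid for key, rowid in index if key[0] is None]


plain = build_index(rows, constant_column=False)  # like an index on (object_id)
padded = build_index(rows, constant_column=True)  # like an index on (object_id, 1)
print(len(plain), len(null_rowids(plain)))    # 990 0
print(len(padded), len(null_rowids(padded)))  # 1000 10
```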


Jakob Ojvind Nielsen

Updated on April 15, 2020

Comments

-

Jakob Ojvind Nielsen, about 4 years ago:

In our product we have a generic search engine, and we are trying to optimize search performance. A lot of the tables used in the queries allow null values. Should we redesign our tables to disallow null values for optimization or not?

Our product runs on both Oracle and MS SQL Server.

-

Vincent Malgrat, about 15 years ago: Jakob, what kind of performance problems have you encountered with NULLs?

-

Jakob Ojvind Nielsen, about 15 years ago: Well, no problems so far. But I remember reading an article about slower performance when using null values. So a discussion started in our team about whether we should allow null values or not, and we have not come to any conclusion yet. We have some very large tables with millions of rows and a lot of customers, so it would be quite a big change for the project. But the customers have raised an issue about the performance of the search engine.

-

dburges, about 15 years ago: If you have problems with performance in the search engine, I would look in many, many other places before eliminating nulls. Start with the indexing. Look at the execution plans to see what is actually happening. Look at your WHERE clauses to see if they are sargable. Look at what you are returning: did you use SELECT * (bad for performance if you have a join, as at least one field is repeated, thus wasting network resources)? Did you use subqueries instead of joins? Did you use a cursor? Is the WHERE clause sufficiently selective? Did you use a wildcard for the first character? And on and on and on.
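As one illustration of the sargability point, a sketch using SQLite through Python's `sqlite3` module (the `people` table and its data are hypothetical): an equality on the bare indexed column can be answered with an index seek, while wrapping the column in a function such as `upper()` forces a scan, because the index stores `name`, not `upper(name)`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE people (name TEXT, city TEXT)")  # hypothetical table
conn.executemany("INSERT INTO people VALUES (?, ?)",
                 [(f"user{n}", "somewhere") for n in range(1000)])
conn.execute("CREATE INDEX idx_name ON people (name)")


def plan(sql):
    # The 4th column of each EXPLAIN QUERY PLAN row is the readable detail text.
    return " | ".join(row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql))


sargable = plan("SELECT * FROM people WHERE name = 'user42'")
wrapped = plan("SELECT * FROM people WHERE upper(name) = 'USER42'")
print(sargable)  # a SEARCH using idx_name
print(wrapped)   # a SCAN: the function hides the column from the index
```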

-

Vincent Malgrat, about 15 years ago: Setting those columns NOT NULL won't solve the "row migration" problem: if the information is not known at insert time, some other default value will be entered (like '.'), and rows will still migrate when real data replaces the default value. In Oracle you would set PCTFREE appropriately to prevent row migration.

-

Jakob Ojvind Nielsen, about 15 years ago: How will the same queries affect MS SQL Server?

-

Quassnoi, about 15 years ago: SQL Server does index NULLs.

-

David Aldridge, about 15 years ago: You can get around this limitation with a function-based index in which you include a literal value, such as `CREATE INDEX MY_INDEX ON MY_TABLE (MY_NULLABLE_COLUMN, 0)`.

-

Steve Broberg, about 15 years ago: I only use null to express a nonexistent foreign key (e.g., a "Discount Coupon" foreign key on an invoice item table might not exist). However, I don't use nulls in non-foreign-key columns; as you say, it "usually" means "don't know". The problem with nulls is that they can mean several things: "unknown", "not applicable", "does not exist" (my case), etc. In non-key cases, you will always have to map a name to the NULL field when you finally get around to using it. Better to have that mapped value defined in the column itself as a real value rather than duplicating the mapping everywhere.

-

Marcello Miorelli, over 8 years ago: Hey folks, this is not always true; see the answers below.

-

Soy César Mora, over 2 years ago: Can you add a benchmark or documentation to empirically support this claim? The problem you reference occurs when a value of length x increases to x + x; is it really a null problem or a data-update problem?