How much data can R handle?

Solution 1

If you look at the High-Performance Computing Task View on CRAN, you will get a good idea of what R can do in terms of high performance.

Solution 2

You can in principle store as much data as you have RAM, with the exception that, at the time of writing (R 2.x), vectors and matrices are restricted to 2^31 - 1 elements because R uses 32-bit indexes on vectors. General vectors (lists, and their derivative data frames) are restricted to 2^31 - 1 components, and each of those components has the same restrictions as vectors/matrices/lists/data.frames etc. (Since R 3.0.0, 64-bit builds support "long vectors" of up to 2^52 elements, although each dimension of a matrix or array is still limited to 2^31 - 1.)
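A quick way to see where the 2^31 - 1 figure comes from, and what it means in RAM terms, is to check R's largest integer and do the arithmetic for a numeric (double) vector. This is a minimal sketch assuming a 64-bit build; the 8 bytes per element ignores the small per-object header.

```r
# Largest value an R integer (32-bit signed) can hold: the classic index limit
max_index <- .Machine$integer.max
print(max_index == 2^31 - 1)  # TRUE

# Rough RAM needed for a numeric (double) vector at that limit:
# 8 bytes per element, ignoring the small object header
bytes_needed <- (2^31 - 1) * 8
cat(sprintf("%.1f GiB\n", bytes_needed / 1024^3))  # 16.0 GiB

# Measure a smaller vector directly to compare with the estimate
print(object.size(numeric(1e6)), units = "Mb")
```

In other words, a single double vector at the old limit already needs about 16 GiB, so on many machines RAM runs out well before the index limit does.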

Of course, these are theoretical limits. If you want to do anything with data in R, you will inevitably need space to hold at least a couple of copies, as R will often copy data passed in to functions (a copy is made when the function modifies its argument).
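A small illustration of why copies add up: R uses copy-on-modify semantics, so a function that changes its argument works on a copy and the caller's object stays intact. The function name `double_first` is hypothetical, chosen only for this sketch.

```r
set.seed(1)
x <- runif(1e6)

# Modifying the argument inside the function triggers a copy (copy-on-modify),
# so the caller's x is left untouched
double_first <- function(v) {
  v[1] <- v[1] * 2
  v
}

y <- double_first(x)
print(x[1] == y[1])  # FALSE: y is a modified copy, x is unchanged

# While both objects are alive, memory use is roughly two full vectors
cat("bytes held by x and y:",
    as.numeric(object.size(x)) + as.numeric(object.size(y)), "\n")
```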

There are efforts to allow on-disk storage (rather than in RAM), but even with those, whatever is in use in R at any one time is still subject to the 2^31 - 1 restrictions mentioned above. See the Large memory and out-of-memory data section of the High Performance Computing Task View linked to in @Roman's post.
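The Task View lists dedicated packages for on-disk storage. As a simpler stand-in that needs only base R (a different technique: streaming, not on-disk objects), here is a sketch that reads a file in chunks so the whole data set never sits in RAM at once. `chunked_column_sum` is a hypothetical helper, and it assumes a plain comma-separated file with no quoted fields.

```r
# Hypothetical helper: sum one numeric column of a CSV, chunk_size lines at a time
chunked_column_sum <- function(path, col, chunk_size = 10000L) {
  con <- file(path, open = "r")
  on.exit(close(con))
  header <- strsplit(readLines(con, n = 1L), ",", fixed = TRUE)[[1]]
  idx <- match(col, header)
  if (is.na(idx)) stop("column not found: ", col)
  total <- 0
  repeat {
    lines <- readLines(con, n = chunk_size)  # next chunk; character(0) at EOF
    if (length(lines) == 0L) break
    fields <- strsplit(lines, ",", fixed = TRUE)
    total <- total + sum(as.numeric(vapply(fields, `[`, character(1), idx)))
  }
  total
}

# Usage: write a small example file, then process it 1,000 rows at a time
path <- tempfile(fileext = ".csv")
df <- data.frame(id = 1:5000, value = rep(c(1, 2), 2500))
write.csv(df, path, row.names = FALSE, quote = FALSE)
result <- chunked_column_sum(path, "value", chunk_size = 1000L)
print(result)  # 7500
```

Only one chunk is held in memory at a time, so the same loop works on files far larger than RAM; the trade-off is that each statistic has to be computable incrementally.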

Solution 3

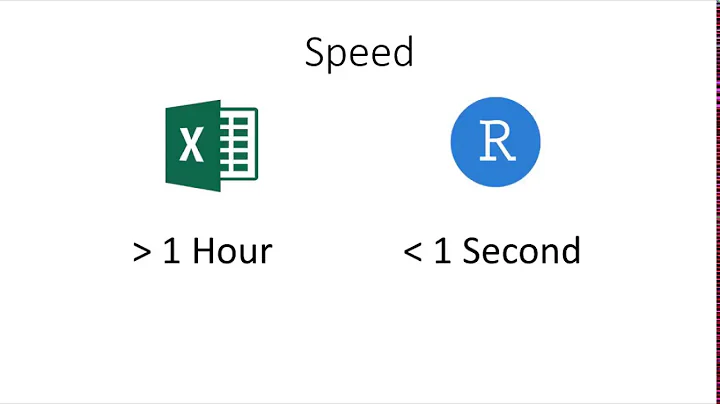

Perhaps a good indication of its suitability for "big data" is the fact that R has emerged as the platform of choice for developers competing in Kaggle.com data modeling competitions. See the article on the Revolution Analytics website: R beats out SAS and SPSS by a healthy margin. What R lacks in out-of-the-box number-crunching power, it apparently makes up for in flexibility.

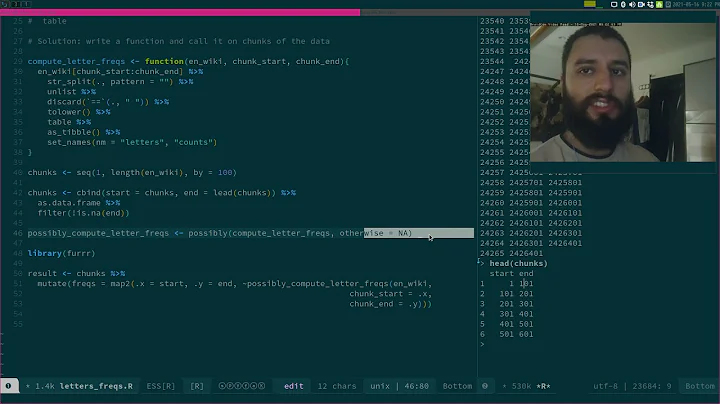

In addition to what's available on the web, there are several new books on how to hot-rod R for tackling big data. The Art of R Programming (Matloff 2011; No Starch Press) provides introductions to writing optimized R code, parallel computing, and using R in conjunction with C. The entire book is well-written, with great code samples and walk-throughs. Parallel R (McCallum & Weston 2011; O'Reilly) looks good too.
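As a minimal illustration of one common optimization technique, vectorization (not taken from either book), here is an explicit R loop compared with the equivalent vectorized call. The function names are hypothetical, and timings vary by machine, so none are quoted.

```r
set.seed(123)
x <- runif(1e6)

# Loop version: every iteration is interpreted by R
loop_sum_sq <- function(v) {
  total <- 0
  for (xi in v) total <- total + xi^2
  total
}

# Vectorized version: the squaring and summing run in compiled code
vec_sum_sq <- function(v) sum(v^2)

t_loop <- system.time(a <- loop_sum_sq(x))
t_vec  <- system.time(b <- vec_sum_sq(x))

print(all.equal(a, b))  # same result, up to floating-point tolerance
print(rbind(loop = t_loop[["elapsed"]], vectorized = t_vec[["elapsed"]]))
```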


AME

Updated on July 09, 2022

Comments

-

AME almost 2 years

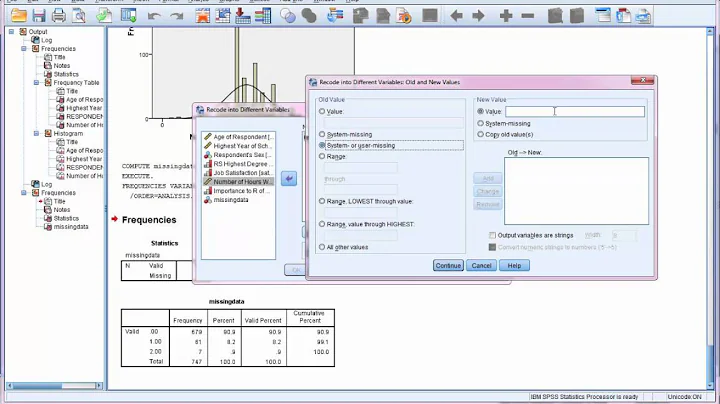

By "handle" I mean manipulate multi-columnar rows of data. How does R stack up against tools like Excel, SPSS, SAS, and others? Is R a viable tool for looking at "BIG DATA" (hundreds of millions to billions of rows)? If not, which statistical programming tools are best suited for analyzing large data sets?

-

Blender about 13 years

As long as you don't load it all into RAM, you can churn through virtually endless data using any language (e.g. Python).

-

Dirk Eddelbuettel about 13 years

Stick with Excel; it is web-scale. Oh, wait, am I two days late?

-

bua about 13 years

Wow... guys who gave '-', could you please explain why?

-

Eduardo Leoni about 13 years

Try adding a reproducible example, perhaps using SQLite instead of an RDBMS. Or create a random vector with 200k rows and see if it chokes. Or tell us how many columns your dataset has, etc.
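Following the suggestion to generate random data and see if it chokes, here is a minimal sketch: it builds a 200,000-row data frame with hypothetical columns `id`, `group` and `value`, reports its size, and times a grouped mean. The column layout is an assumption, not the asker's actual data.

```r
set.seed(42)
n_rows <- 200000L

# Hypothetical test data: an id, a 5-level grouping column, and a numeric value
df <- data.frame(
  id = seq_len(n_rows),
  group = sample(letters[1:5], n_rows, replace = TRUE),
  value = rnorm(n_rows)
)

print(object.size(df), units = "Mb")

# Time a simple grouped aggregation
elapsed <- system.time(group_means <- tapply(df$value, df$group, mean))[["elapsed"]]
print(group_means)
cat("aggregation took", elapsed, "seconds\n")
```

Scaling `n_rows` up (and adding columns to match the real data) gives a rough feel for where a given machine starts to struggle.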