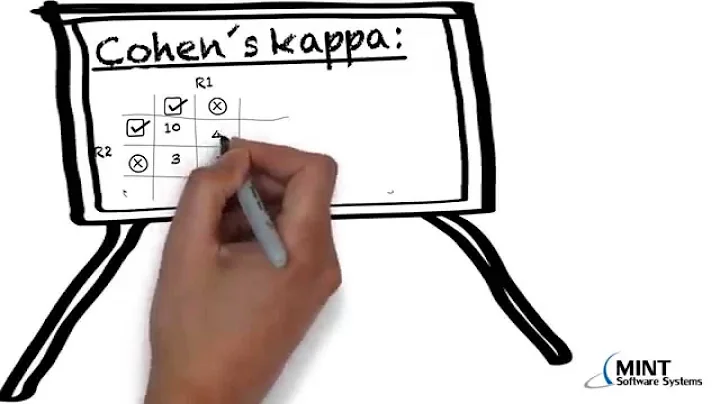

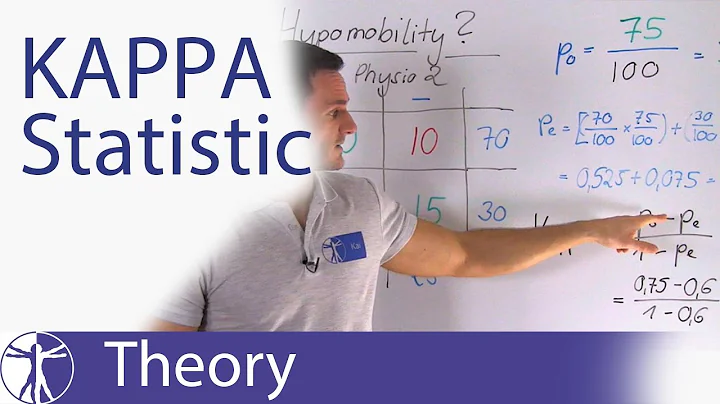

How to calculate Cohen's kappa coefficient that measures inter-rater agreement ? ( movie review )

As stated in the documentation of cohen_kappa_score:

The kappa statistic is symmetric, so swapping y1 and y2 doesn’t change the value.

There is no y_pred, y_true in this metric. The signature as you mentioned in the post is

sklearn.metrics.cohen_kappa_score(y1, y2, labels=None, weights=None)

There is no thing like the correct and predicted values in this case. Its just the labels by two different persons. So it may have differences because of their perceptions and understanding about the topic.

You just need to provide two lists (or arrays) with the labels annotated by different annotators. The ordering doesnt matter.

EDIT 1

You said that you have the text reviews. In this case, you need to apply some feature extraction process to identify the labels.

This metric is used to find the agreement between two humans labelling the data. Like assigning a class to some data samples. This cannot be used directly on raw text.

EDIT 2:

Assuming that your y contains only integers (maybe reviews from 1 to 10), this becomes a multiclass classification problem. Its supported by the scikit implementation of cohen_kappa_score.

And if I understand correctly the sentiment analysis link you posted, then you should do:

Y_pred = new_model.predict(X_test_dtm)

cohen_score = cohen_kappa_score(Y_test, Y_pred)

Related videos on Youtube

Comments

-

GuidoKaz almost 2 years

GuidoKaz almost 2 yearsI am using scikit learn and tackle the exercise of predicting movie review rating. I have read on Cohen's kappa ( i Frankly to not understand it fully ), and it's usefulness as a metric of comparison between Observed and Expected accuracy. I have proceeded as usual in applying machine learning algorithm on my corpus, using a bag of words model. I read the Cohen's Kappa is a good way to measure the performance of a classifier. How do i adapt this concept to my prediction problem using sklearn ?

Sklearn's documentation is not really explicit on how to proceed on this matter with a document term matrix ( if it's even the right way to do it )

sklearn.metrics.cohen_kappa_score(y1, y2, labels=None, weights=None)

this is the example found on the sklearn website:from sklearn.metrics import cohen_kappa_score y_true = [2, 0, 2, 2, 0, 1] y_pred = [0, 0, 2, 2, 0, 2] cohen_kappa_score(y_true, y_pred)Is the Kappa scoring calculation applicable here ? among the people who annotated the reviews in my corpus ? How to write it ? Since all the movie reviews are from different annotators, are they still two annotators to consider in evaluating Cohen's Kappa ? What should I do ? Here's the example I am trying :

import pandas as pd from sklearn.naive_bayes import MultinomialNB from sklearn.model_selection import cross_val_score from sklearn.model_selection import KFold from sklearn.feature_extraction.text import TfidfVectorizer from sklearn.model_selection import StratifiedShuffleSplit xlsx1 = pd.ExcelFile('App-Music/reviews.xlsx') ''' review are stored in two columns, one for the review, one for the rating ''' X = pd.read_excel(xlsx1,'Sheet1').Review Y = pd.read_excel(xlsx1,'Sheet1').Rating X_train, X_test, Y_train, Y_test = train_test_split(X_documents, Y, stratify=Y) new_vect= TfidfVectorizer(ngram_range=(1, 2), stop_words='english') X_train_dtm = new_vect.fit_transform(X_train.values.astype('U')) X_test_dtm = new_vect.fit_transform(X_test.values.astype('U')) new_model.fit(X_train_dtm,Y_train) new_model.score(X_test_dtm,Y_test) ''' this is the part where I want to calculate cohen kappa score for comparison '''I might completely wrong on the idea but i read it this page concerning sentiment analysis "Ultimately, a tool’s accuracy is merely the percentage of times that human judgment agrees with the tool’s judgment. This degree of agreement among humans is also known as human concordance. There have been various studies run by various people and companies, and they concluded that the rate of human concordance is between 70% and 79%." I hope this is suffient enough information. :)

-

GuidoKaz about 7 yearsI edited my question with more code and details. I get a poor 50% of accuracy. My point of interest is however on the cohen kappa score. Since every review comes from a different writer. Can we still consider there are only 2 humans ?

GuidoKaz about 7 yearsI edited my question with more code and details. I get a poor 50% of accuracy. My point of interest is however on the cohen kappa score. Since every review comes from a different writer. Can we still consider there are only 2 humans ? -

Vivek Kumar about 7 years@GuidoKaz Edited the answer to add details concerning the edited question

Vivek Kumar about 7 years@GuidoKaz Edited the answer to add details concerning the edited question -

Parth Tamane over 4 yearsI am getting this error:

Parth Tamane over 4 yearsI am getting this error:if len(y_type) > 1: raise ValueError("Classification metrics can't handle a mix of {0} and {1} targets".format(type_true, type_pred)). My predictions from linear regression:[[ 3.8941539 ] [13.59865976] [13.56027829] ... [36.70557292] [28.65117951] [30.44536662]]and my ground truth labels =[[10] [ 8] [10] ... [40] [44] [40]] -

Vivek Kumar over 4 years@ParthTamane Why are your labels a list of list instead of simple list?

Vivek Kumar over 4 years@ParthTamane Why are your labels a list of list instead of simple list?