How to Copy Files Fast

Solution 1

The fastest version I got, without over-optimizing the code, is the following:

    import os

    class CTError(Exception):
        def __init__(self, errors):
            self.errors = errors

    # os.O_BINARY exists only on Windows; fall back to 0 elsewhere.
    O_BINARY = getattr(os, "O_BINARY", 0)
    READ_FLAGS = os.O_RDONLY | O_BINARY
    WRITE_FLAGS = os.O_WRONLY | os.O_CREAT | os.O_TRUNC | O_BINARY
    BUFFER_SIZE = 128 * 1024

    def copyfile(src, dst):
        fin = fout = None
        try:
            fin = os.open(src, READ_FLAGS)
            stat = os.fstat(fin)
            fout = os.open(dst, WRITE_FLAGS, stat.st_mode)
            # os.read returns bytes, so the end-of-file sentinel must be b"".
            # With "" the loop never terminates on Python 3.
            for chunk in iter(lambda: os.read(fin, BUFFER_SIZE), b""):
                os.write(fout, chunk)
        finally:
            # Close whichever descriptors were actually opened.
            for fd in (fin, fout):
                if fd is not None:
                    try:
                        os.close(fd)
                    except OSError:
                        pass

    def copytree(src, dst, symlinks=False, ignore=()):
        names = os.listdir(src)
        if not os.path.exists(dst):
            os.makedirs(dst)
        errors = []
        for name in names:
            if name in ignore:
                continue
            srcname = os.path.join(src, name)
            dstname = os.path.join(dst, name)
            try:
                if symlinks and os.path.islink(srcname):
                    linkto = os.readlink(srcname)
                    os.symlink(linkto, dstname)
                elif os.path.isdir(srcname):
                    copytree(srcname, dstname, symlinks, ignore)
                else:
                    copyfile(srcname, dstname)
                # XXX What about devices, sockets etc.?
            except OSError as why:
                errors.append((srcname, dstname, str(why)))
            except CTError as err:
                # Errors collected by a recursive copytree call.
                errors.extend(err.errors)
        if errors:
            raise CTError(errors)

This code runs slightly slower than the native Linux "cp -rf".

Compared to shutil, the gain is around 2x-3x when copying from local storage to tmpfs, and around 6x when copying from NFS to local storage.

After profiling, I noticed that shutil.copy makes lots of fstat syscalls, which are pretty heavyweight. To optimize further, I would suggest doing a single fstat for src and reusing the values. Honestly, I didn't go further, as I got almost the same figures as the native Linux copy tool, and shaving off a few hundred milliseconds wasn't my goal.
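The speedups quoted above depend heavily on the storage involved, so it is worth measuring on your own setup. The following is a minimal timing sketch, not the author's benchmark: chunked_copy, time_copy and run_benchmark are hypothetical helper names, the test file is random data in a temporary directory, and the 8 MiB size is an arbitrary assumption.

```python
import os
import shutil
import tempfile
import time

BUFFER_SIZE = 128 * 1024  # same chunk size as the answer above


def chunked_copy(src, dst, buffer_size=BUFFER_SIZE):
    """Copy src to dst with plain binary reads and writes (hypothetical helper)."""
    with open(src, "rb") as fin, open(dst, "wb") as fout:
        for chunk in iter(lambda: fin.read(buffer_size), b""):
            fout.write(chunk)


def time_copy(copy_func, src, dst, repeats=3):
    """Return the best wall-clock time in seconds over several runs."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        copy_func(src, dst)
        best = min(best, time.perf_counter() - start)
    return best


def run_benchmark(size_bytes=8 * 1024 * 1024):
    """Time shutil.copyfile and chunked_copy on a temporary file."""
    with tempfile.TemporaryDirectory() as tmp:
        src = os.path.join(tmp, "src.bin")
        with open(src, "wb") as f:
            f.write(os.urandom(size_bytes))
        results = {}
        for name, func in (("shutil.copyfile", shutil.copyfile),
                           ("chunked_copy", chunked_copy)):
            dst = os.path.join(tmp, name + ".bin")
            results[name] = time_copy(func, src, dst)
            # Sanity check: the copy must have the same size as the source.
            assert os.path.getsize(dst) == size_bytes
        return results


if __name__ == "__main__":
    for name, seconds in run_benchmark().items():
        print(f"{name}: {seconds:.4f} s")
```

A file this small mostly measures the page cache; for numbers comparable to the ones above you would point src and dst at the real source and destination (for example an NFS mount) and use files of realistic size.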

Solution 2

You could simply use the copy command of the OS you are copying on. For Windows:

from subprocess import call

call(["xcopy", "c:\\file.txt", "n:\\folder\\", "/K/O/X"])

/K - Copies attributes. Typically, Xcopy resets read-only attributes.

/O - Copies file ownership and ACL information.

/X - Copies file audit settings (implies /O).
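subprocess.call returns the exit code of the command, which the snippet above discards, so a failed copy would go unnoticed. As a hedged sketch, the helper below (run_copy_command is a hypothetical name) captures the exit code and the error output. The paths are the same placeholder paths as in the example above, and only /K is passed here to keep the illustration minimal.

```python
import subprocess
import sys


def run_copy_command(cmd):
    """Run a copy command and return (exit_code, stderr_text)."""
    completed = subprocess.run(cmd, capture_output=True, text=True)
    return completed.returncode, completed.stderr.strip()


if __name__ == "__main__" and sys.platform.startswith("win"):
    # Placeholder paths reused from the example above.
    code, err = run_copy_command(["xcopy", "c:\\file.txt", "n:\\folder\\", "/K"])
    if code != 0:
        print(f"xcopy failed with exit code {code}: {err}")
```

A non-zero exit code means the command reported a failure; what each code means is specific to the tool being called.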

Solution 3

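    import subprocess
    import sys

    def copyWithSubprocess(cmd):
        # Run the native copy command and wait for it to finish;
        # communicate() also drains the pipes so the child cannot block.
        proc = subprocess.Popen(cmd, stdout=subprocess.PIPE, stderr=subprocess.PIPE)
        return proc.communicate()

    # source and dest are the paths to copy; define them before this point.
    cmd = None
    if sys.platform.startswith("darwin"):
        cmd = ['cp', source, dest]
    elif sys.platform.startswith("win"):
        cmd = ['xcopy', source, dest, '/K/O/X']

    if cmd:
        copyWithSubprocess(cmd)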
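The snippet above does nothing on Linux or on any other platform it does not recognize. One possible extension, sketched here under assumptions, adds a Linux branch and falls back to shutil.copyfile otherwise. build_copy_command and copy_file are hypothetical names, the xcopy switches are passed as separate arguments, and on Windows dest is assumed to be an existing folder as in Solution 2.

```python
import shutil
import subprocess
import sys


def build_copy_command(source, dest, platform=sys.platform):
    """Return the native copy command for a platform, or None if unknown."""
    if platform.startswith(("darwin", "linux")):
        return ["cp", source, dest]
    if platform.startswith("win"):
        # Assumption: dest is an existing folder, as in Solution 2.
        return ["xcopy", source, dest, "/K", "/O", "/X"]
    return None


def copy_file(source, dest):
    """Copy with the native tool when one is known, else with shutil."""
    cmd = build_copy_command(source, dest)
    if cmd is None:
        shutil.copyfile(source, dest)
        return
    # check=True raises CalledProcessError if the command fails.
    subprocess.run(cmd, check=True)
```

Keeping the command construction separate from the call makes the platform logic easy to check without actually copying anything.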

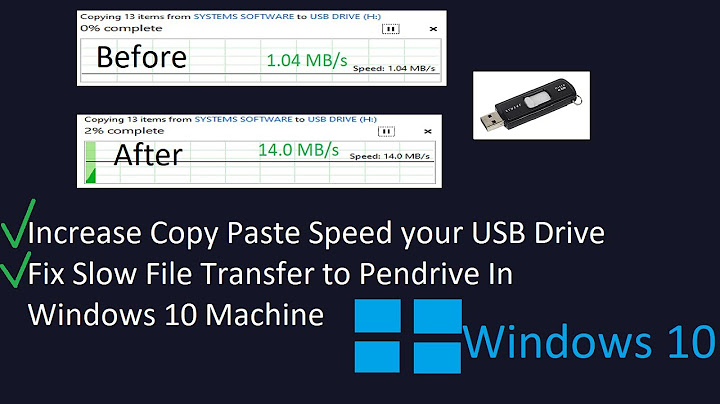

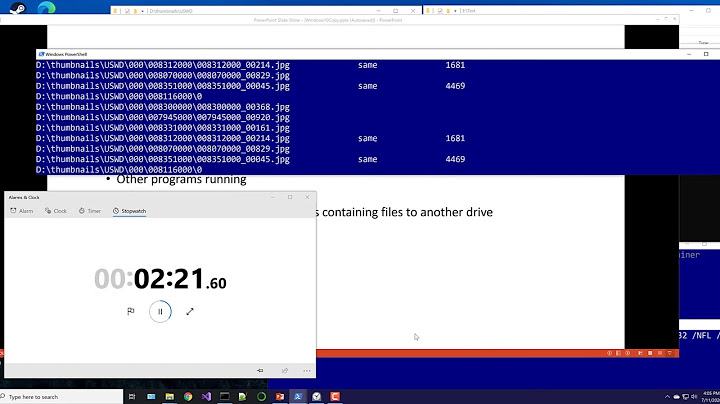

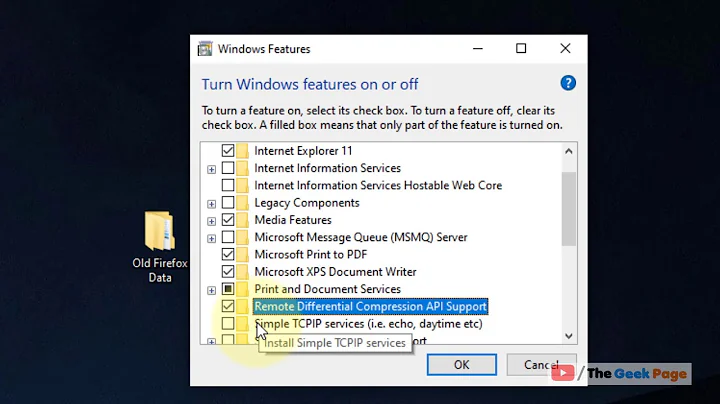

Related videos on Youtube

alphanumeric

Updated on February 28, 2021

Comments

-

alphanumeric over 3 years

It takes at least 3 times longer to copy files with shutil.copyfile() than with a regular right-click-copy > right-click-paste in Windows File Explorer or Mac's Finder. Is there any faster alternative to shutil.copyfile() in Python? What could be done to speed up the file copying process? (The destination is on a network drive, if that makes any difference.)

EDITED LATER:

Here is what I have ended up with:

    def copyWithSubprocess(cmd):
        proc = subprocess.Popen(cmd, stdout=subprocess.PIPE, stderr=subprocess.PIPE)

    win = mac = False
    if sys.platform.startswith("darwin"):
        mac = True
    elif sys.platform.startswith("win"):
        win = True

    cmd = None
    if mac:
        cmd = ['cp', source, dest]
    elif win:
        cmd = ['xcopy', source, dest, '/K/O/X']

    if cmd:
        copyWithSubprocess(cmd)

-

Ecno92 over 10 years

You can use native command-line tools such as cp for Linux & Mac and COPY for Windows. They should be as fast as when you use the GUI.

-

David Heffernan over 10 years

On Windows, SHFileOperation gives you the native shell file copy.

-

moooeeeep over 10 years

Depending on some factors not stated in the question, it could be beneficial to pack the files into a compressed archive before transmission... Have you considered using something like rsync?

-

Michael Burns over 10 years

If you are concerned with ownership and ACLs, don't use shutil for that reason alone: 'On Windows, file owners, ACLs and alternate data streams are not copied.'

-

alphanumeric over 10 years

If I use the native operating system's commands (such as OS X cp), should I then be using subprocess? Is there any Python module to call cp directly, without the need for a subprocess, on Mac?

-

Morwenn almost 6 years

It's worth noting that in Python 3.8, functions that copy files and directories have been optimized to work faster on several major OSes.

-

Sharif Mamun over 10 years

I believe you would be a good instructor, nice!

-

alphanumeric over 10 years

Good point. I should be more specific. Instead of right-click-copy and then paste, this scheme: 1. Select files; 2. Drag files; 3. Drop files onto a destination folder.

-

Joran Beasley over 10 years

That's a move then... which is much different... try shutil.move instead.

-

alphanumeric over 10 years

Would "xcopy" on Windows work with a "regular" subprocess, such as: cmd = ['xcopy', source, dest, "/K/O/X"]; subprocess.Popen(cmd, stdout=subprocess.PIPE, stderr=subprocess.PIPE)

-

Michael Burns over 10 years

That will work as well.

-

alphanumeric over 10 years

Great! Thanks for the help!

-

alphanumeric over 10 years

On Windows it could be a move. On OS X it could be a copy.

-

searchengine27 over 7 years

This solution does not scale. As the files get large, this becomes a less usable solution. You would need to make multiple system calls to the OS to still read portions of the file into memory as files get large.

-

Joran Beasley over 7 years

This was a question about timings... and it was basically pointing out that his operations were not equivalent.

-

muppetjones over 7 years

Not sure if this is specific to later versions of Python (3.5+), but the sentinel in iter needs to be b'' in order to stop. (At least on OS X.)

-

Robert Kelly about 7 years

I find it hard to believe that if you CTRL + C a 100 gigabyte file in Windows it attempts to load that in memory right then and there...

-

Joran Beasley about 7 years

There's more than a grain of truth to that, I'm sure... perhaps I oversimplified it.

-

Admin almost 7 years

Invalid number of parameters error

-

Vasily over 6 years

Doesn't work with Python 3.6, even after fixing the 'except' syntax.

-

Dmytro over 6 years

This is a Python 2 version. I haven't tested this with Python 3. Probably the Python 3 native file tree copy is fast enough; one should do a benchmark.

-

Soumyajit over 6 years

This is way faster than anything else I tried.

-

Spencer almost 6 years

FYI, I would suggest adding shutil.copystat(src, dst) after the file is written out to bring over metadata.

-

Spencer over 5 years

@Dmytro Just tested in py3.6, still 3x faster than shutil.copy2()!

-

Varlor over 5 years

@Spencer What did you change in the code to make it work with Python 3.5+? Best regards!

-

Spencer over 5 years

@Varlor From what I remember, I just changed this one line to the following: for x in iter(lambda: os.read(fin, BUFFER_SIZE), b""): (made the string into bytes by adding 'b')

-

Varlor over 5 years

Ah, because I also get a syntax error with the 'why' in the except line 'except (IOError, os.error), why:'

-

Vahid Pazirandeh over 5 years

To boost performance, also see the pyfastcopy module, which uses the system call sendfile(). This module works for Python 2 and 3. All you have to do is literally "import pyfastcopy" and shutil will automatically perform better. Like @Morwenn mentioned above, Python 3.8 will have sendfile() built into its implementation.
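For readers curious what a sendfile()-based copy looks like, here is a rough sketch using os.sendfile from the standard library. It is not how pyfastcopy or shutil is implemented; sendfile_copy is a hypothetical name, and the fallback to shutil.copyfile covers platforms where os.sendfile is missing or cannot write to a regular file.

```python
import os
import shutil


def sendfile_copy(src, dst):
    """Copy src to dst with os.sendfile, falling back to shutil.copyfile."""
    if not hasattr(os, "sendfile"):
        shutil.copyfile(src, dst)  # e.g. Windows has no sendfile
        return
    with open(src, "rb") as fin, open(dst, "wb") as fout:
        size = os.fstat(fin.fileno()).st_size
        offset = 0
        try:
            while offset < size:
                # The kernel moves the data without a round trip through Python.
                sent = os.sendfile(fout.fileno(), fin.fileno(), offset, size - offset)
                if sent == 0:
                    break
                offset += sent
        except OSError:
            # Some platforms only allow sendfile to a socket.
            offset = -1
    if offset != size:
        # Incomplete or unsupported: redo the copy the portable way.
        shutil.copyfile(src, dst)
```

The loop is needed because a single sendfile call may transfer fewer bytes than requested.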

-

Andry over 4 years

I tried to replace shutil.copyfile with the copyfile from the example under Python 3.8.0, but it seems to hang in the for x in iter loop.

-

Andry over 4 years

@VahidPazirandeh pyfastcopy does not work on Windows: the sendfile system call does not exist on Windows, so importing this module will have no effect.

-

Gustavo Gonçalves about 4 years

Economic on explanations, but this is a great answer.

-

Rexovas over 3 years

Note that the /O and /X flags require an elevated subprocess, otherwise the result is "Access denied".

-

Spencer about 2 years

For those trying to get the best performance, buffer size is VERY important: zabkat.com/blog/buffered-disk-access.htm

-

Spencer about 2 years

This is a very fast option for single-file copies, but for anyone out there trying to thread large numbers of files, it can run far slower (9x in a recent test of 4000 files). I was able to get better results by modifying the copy2 buffer size, as in the other answer.
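The answer referred to in the last comment is not included here, so the following is only a guess at the idea: a copy2-like helper with an explicit buffer size, built from shutil.copyfileobj and the shutil.copystat call suggested earlier in the comments. copy2_with_buffer is a hypothetical name and the 1 MiB default is an arbitrary assumption; the best value depends on the storage and should be measured.

```python
import os
import shutil
import tempfile


def copy2_with_buffer(src, dst, buffer_size=1024 * 1024):
    """Copy file data in buffer_size chunks, then copy the metadata."""
    with open(src, "rb") as fin, open(dst, "wb") as fout:
        shutil.copyfileobj(fin, fout, buffer_size)
    shutil.copystat(src, dst)  # permission bits, timestamps, flags
    return dst


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        src = os.path.join(tmp, "src.bin")
        with open(src, "wb") as f:
            f.write(os.urandom(3 * 1024 * 1024))
        dst = copy2_with_buffer(src, os.path.join(tmp, "dst.bin"))
        print(os.path.getsize(dst), "bytes copied")
```

Because it uses only ordinary file objects, this variant is portable and can be called from worker threads, at the cost of moving every chunk through Python.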