How to delete files from a folder which have more than 60 files in unix?

Solution 1

No, for one thing it will break on filenames containing newlines. It is also more complex than necessary and has all the dangers of parsing ls.

A better version would be (using GNU tools):

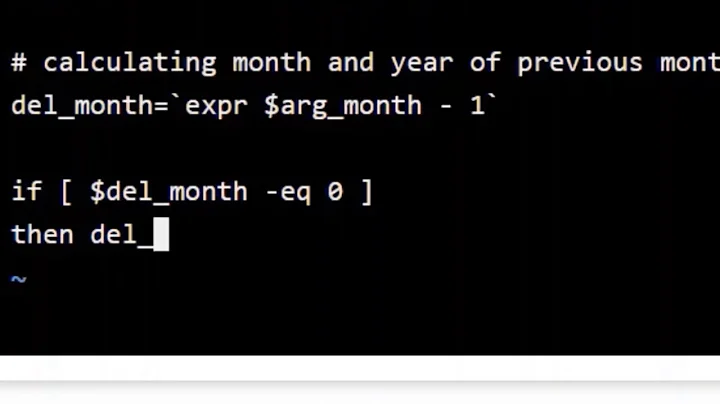

    #!/bin/ksh

    for dir in /home/DABA_BACKUP/*
    do
        ## Collect the entries of this directory so they can be counted
        files=( "$dir"/* );

        ## Are there more than 60?
        extras=$(( ${#files[@]} - 60 ))
        if [ "$extras" -gt 0 ]
        then
            ## If there are more than 60, remove the first
            ## files until only 60 are left. We use ls to sort
            ## by modification date and get the inodes only and
            ## pass the inodes to GNU find which deletes them
            find "$dir" -maxdepth 1 \( -inum 0 $(\ls -1iqtr "$dir" | grep -o '^ *[0-9]*' |
                head -n "$extras" | sed 's/^/-o -inum /;' ) \) -delete
        fi
    done

Note that this assumes that all files are on the same filesystem and can give unexpected results (such as deleting wrong files) if they are not. It also won't work well if there are multiple hardlinks pointing to the same inode.
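One of the comments on this answer suggests that, if GNU tools are assumed anyway, find -printf %T... combined with sort -z would sidestep both the inode lookup and the parsing of ls. The following is only a minimal sketch of that idea, not the answer's own method. The function name prune_oldest and its two arguments are hypothetical, and it assumes bash plus GNU find, sort, tail, cut and xargs (the -z options of tail and cut need a reasonably recent coreutils).

```shell
#!/bin/bash
# Sketch only: keep the newest KEEP regular files directly inside DIR.
# prune_oldest, DIR and KEEP are illustrative names, not from the answer.
prune_oldest() {
    local dir=$1 keep=$2
    # Emit "mtime path" records terminated by NUL, so any file name is safe.
    find "$dir" -maxdepth 1 -type f -printf '%T@ %p\0' |
        LC_ALL=C sort -z -n -r |       # newest first
        tail -z -n +"$((keep + 1))" |  # everything after the KEEP newest
        cut -z -d ' ' -f 2- |          # strip the timestamp, keep the path
        xargs -0 -r rm -f --
}

# Hypothetical usage, mirroring the loop in the answer:
#   for dir in /home/DABA_BACKUP/*; do prune_oldest "$dir" 60; done
```

Because each file is identified by its path rather than its inode number, this variant does not depend on all files being on the same filesystem.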

Solution 2

#! /bin/zsh -

for dir (/home/DABA_BACKUP/*) rm -f $dir/*(Nom[61,-1])

For the zsh-ignorant ;-):

- for var (list) cmd: short version of the "for var in list; do cmd; done" loop (reminiscent of perl syntax).
- $dir: zsh variables don't need to be quoted like they do in other shells, as zsh has explicit split and glob operators and so doesn't do implicit split+glob upon parameter expansion.
- *(...): glob with glob qualifiers:
  - N: nullglob: the glob expands to nothing instead of raising an error when it doesn't match.
  - om: order the generated files on modification time (youngest first).
  - [61,-1]: from that ordered list pick the 61st to last ones.

So basically it removes all but the 60 youngest files.

Solution 3

To obtain a list of the oldest entries to delete (thus keeping the 60 latest entries):

ls -t | tail -n +61

Note that the principal problem of your approach still has to be addressed here as well: how to handle file names containing newlines, in case that matters. Otherwise you can just use the following (replacing your quite complex program):

cd /home/DABA_BACKUP || exit 1

ls -t | tail -n +61 | xargs rm -rf
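A comment on this answer points out that xargs mangles names containing blanks, quotes or backslashes, and that rm should be given a -- so that names starting with - are not taken as options. As a hedged sketch (the function name keep_newest is made up for illustration), the same ls -t | tail pipeline can feed a read loop instead. It still breaks on newlines in file names, which is the limitation already noted for this approach.

```shell
#!/bin/sh
# Sketch only: keep the newest KEEP entries of DIR, delete the rest.
# Copes with spaces, quotes, backslashes and leading dashes; NOT newlines.
keep_newest() (
    cd "$1" || exit 1
    ls -t | tail -n +"$(( $2 + 1 ))" |
    while IFS= read -r name; do
        rm -rf -- "$name"
    done
)

# Hypothetical usage: keep_newest /home/DABA_BACKUP 60
```

The body runs in a subshell, so the cd does not change the caller's working directory.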

Note:

As it seems that you have daily backups you could maybe also use an approach based on the file dates and find; as in:

find /home/DABA_BACKUP -mtime +60 -exec ls {} +

(where the ls command would - after careful examination of the correct operation! - be replaced by the appropriate rm command).
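The two stages described here could be sketched as a pair of small functions. The names list_older_than and purge_older_than are hypothetical, and the -type f restriction is an added assumption so that directories are left alone. Keep in mind that this selects by age rather than by count, so it only matches the 60-file goal when exactly one backup is written per day.

```shell
#!/bin/sh
# Sketch only. DIR is $1, DAYS is $2; -mtime +DAYS matches files whose
# age, counted in whole days, is greater than DAYS.

# Dry run: show what would be removed.
list_older_than()  { find "$1" -type f -mtime +"$2" -exec ls -ld {} + ; }

# Actual removal, to be used only after checking the dry run.
purge_older_than() { find "$1" -type f -mtime +"$2" -exec rm -f -- {} + ; }

# Hypothetical usage:
#   list_older_than  /home/DABA_BACKUP 60
#   purge_older_than /home/DABA_BACKUP 60
```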

Solution 4

    rm60()( IFS=/; set -f; set $(
            set +f; \ls -1drt ./*)
            while shift &&
                  [ $# -gt 60 ]
            do    [ -d "${1%?.}" ] ||
                  rm "./${1%?.}" || exit
            done
    )

This will work for you. It will delete the oldest files in the current directory until only 60 entries remain. It does this by parsing ls robustly and without making any assumptions about your filenames: they might be named anything and need not be named by dates. It only works for a listing of the current directory, and only if you have a POSIX ls installed (and not masked by some evil shell function, though aliases are covered).

The above solution just applies some very basic shell splitting to some very basic Unix pathnames. It ensures ls lists all not-dot files in the current directory one per line like:

    ./oldestfile
    ./second-oldestfile

Now, any one of those names might contain newlines as well, but that would not be an issue, because in that case they would be listed like:

    ./oldest
    file
    ./s
    econd
    old
    est
    file
    ./third

...and so on. And the newlines don't bother us anyway - because we don't split on them. Why would we? We're working w/ pathnames, we should split on the path delimiter, and so that is what we do: IFS=/.

Now that works out a little bit strange. We end up with an argument list that looks like this:

<.> <file1\n.> <file2\n.> ... <filelast>

...but that's actually very good for us, because we can delay our arguments being treated by the shell as files (or, in the case we want to avoid, symlinks) until we're quite ready to rm them.

So once we've got our file list, all we have to do is shift away our first argument, check that we currently have more than 60 arguments, probably decline to rm a child directory (though, of course, that's completely up to you), and otherwise rm our first argument less its last two characters (the newline and the period that begin the next listed path). We don't have to worry about the very last argument - which hasn't got the appended period - because we never get there, and instead quit at 60. If we've made it this far for an iteration then we just try again and loop over the arg list in this fashion until we have pruned it to our satisfaction.

How does this break? It doesn't, to my knowledge, but I've allowed for it - if at any time an unexpected error occurs the loop breaks and the function returns other than 0.

And so ls can do your listing for you in the current directory without any issue at all. You can robustly allow it to sort your arguments for you, as long as you can reliably delimit them. It is for that reason that this will not work as written for anything but the current directory - more than one delimiter in a pathstring would require another level of delimiting, which could be done by factoring it out doubly for all but the last into NUL fields, but I don't care to do that now.
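To see the splitting described above in isolation, here is a small sketch that only counts the fields and deletes nothing. The function name count_entries is invented for this illustration. It assumes a POSIX-style sh, a directory with at least one non-dot entry, and an ls that prints names unmodified when writing to a pipe.

```shell
#!/bin/sh
# Sketch only: count the non-dot entries of DIR by splitting the output
# of ls on "/" instead of on newlines, the way the rm60 function does.
count_entries() (
    cd "$1" || exit 1
    IFS=/; set -f                      # split on "/" only; no globbing of the results
    set -- $(set +f; \ls -1drt ./*)    # fields: <.> <name1\n.> ... <namelast>
    shift                              # drop the leading <.>
    echo "$#"
)

# Hypothetical usage: count_entries /home/DABA_BACKUP
```

For a directory holding one ordinary name and one name that contains a newline, this reports 2 entries, since the newline never acts as a separator.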

Nainita

Updated on September 18, 2022

Comments

- Nainita (over 1 year): I want to put a script in a cron job which will run at a particular time and, if the file count is more than 60, will delete the oldest files from that folder. Last In First Out. I have tried:

      #!/bin/ksh
      for dir in /home/DABA_BACKUP
      do
          cd $dir
          count_files=`ls -lrt | wc -l`
          if [ $count_files -gt 60 ];
          then
              todelete=$(($count_files-60))
              for part in `ls -1rt`
              do
                  if [ $todelete -gt 0 ]
                  then
                      rm -rf $part
                      todelete=$(($todelete-1))
                  fi
              done
          fi
      done

  These are all backup files which are saved daily and named backup_$date. Is this ok?

- Admin (almost 9 years): Notes: To just count files you don't need the ls options -lrt, and to build a list in the for loop you don't need the ls option -1. Free variable expansions ("$dir" and "$part") should be quoted. Instead of the backticks use $(ls | wc -l).
- Admin (almost 9 years): @Janis that will still fail if the file names contain newlines.
- Admin (almost 9 years): @Yes, I know. There's just too much worth fixing there.
- Admin (almost 9 years): My script is ok... I just edited according to the last answer. It's now deleting files from the folder where the file count is greater than 60. Last file entered and the first one removed from folder. That's what I wanted, Last In First Out.
- Admin (almost 9 years): It's not OK. It will break if your file names contain spaces or newlines. It is also far more complex than necessary. What format are your names in? You said backup_$date but what is $date? Is it 114-06-2015? Or Sun Jun 14 15:06:53 EEST 2015? If you tell us exactly what it is, we can give you a more robust and efficient way of doing this.
- Admin (almost 9 years): I have a folder which is /home/DABA_BACKUP, where daily backups are saved (ex. backup_130615, backup_140615). My disk is also getting filled due to this huge backup file size. So my intention is to delete the oldest one from the folder to keep only 60 files in my folder.

- Nainita (almost 9 years): Many thanks @terdon. I have just modified my script as per your solution. It's working smoothly. Thanks everyone for your valuable efforts. Could you please do me a favor? If possible, then kindly share some links for writing shell scripts.
- terdon (almost 9 years): @Nainita I don't really have any. I've learned a LOT by answering questions here and just googling for things I don't know.

- Erathiel (almost 9 years): It's generally advised to avoid parsing of ls output in scripts. You could use find instead.
- Lambert (almost 9 years): I can hardly believe that the date format Nainita mentioned (130615, 140615) is automatically sorted well as you assume... Try with dates 140615 and 130715. The default output will be 130715 followed by 140615.

- mikeserv (almost 9 years): @Erathiel - exactly what does find offer here that should be preferred to ls? One time somebody wrote a pretty error-strewn blog post about parsing ls and for some reason the entire linux community treat it like the Pentateuch. Look, the few valid points made in the blog post apply equally as well to find in this case.
- terdon (almost 9 years): @mikeserv you raise two valid points. Was the snarky sarcasm really needed to make them? Why must you turn everything into a fight? All you had to do is point out my mistakes and I would have happily admitted them yet you chose to attack instead of teach.

- terdon (almost 9 years): Could you explain that to the zsh ignorant? I assume you are somehow sorting by date so you won't have the issues my answer does, right? Is that what the NOm does?
- Stéphane Chazelas (almost 9 years): @terdon, see edit. I actually had the logic wrong (reversed). Should be om to sort with youngest first (like in ls -t).

- terdon (almost 9 years): @mikeserv you were snarky now and it was uncalled for. Surely by now you know I have absolutely no problem admitting I was wrong. And I was very wrong here. All you had to do was point it out. Anyway, see updated answer, you'll like it, it parses ls.
- terdon (almost 9 years): @Lambert Back at my computer. Thanks for pointing out the issue, it should be fixed now.
- terdon (almost 9 years): Very nice, thanks! Could you have a look at my updated answer. I think it should i) work now and ii) be robust with any file name. I'd appreciate it if you could point out any file names that would break it.

- Stéphane Chazelas (almost 9 years): Relying on inum implies all files are from the same filesystem and hard links will break some of your assumptions. Note that split+glob will as happily split on newline as it will on space, no need for the tr. find will recurse, which doesn't seem to be desired here. If you're at using GNUisms, best would probably be to use find -printf %T... and sort -z.
- Stéphane Chazelas (almost 9 years): Note that using xargs also assumes file names don't contain space, tab, newline (or other forms of blank characters depending on the locale and xargs implementation), single quote, double quote or backslash. You may want to add a -- to the rm cmdline to avoid problems with files whose name starts with -. (Probably not a problem to the OP but worth noting here for anyone coming here with a similar need.)

- mikeserv (almost 9 years): @terdon - and, in fairness, I know whereof I speak when discussing Rube Goldberg-ish solutions - it's only because I am so well experienced w/ writing them that I can recognize them so easily. Honestly, this is a bad answer - it always was - and this doesn't help. You should fold, man. The ^samefs assumptions are especially dangerous on Solaris systems, where ZFS reigns supreme.
- terdon (almost 9 years): @mikeserv I'm not using -l. I also have no idea why you mention Solaris. I'm as familiar with your opinion of that post as you must be with mine. Let's not rehash it. I am using find because that's the best way I know of to delete files by inodes. I'd be happy to hear of a better one (and that would make an actually constructive comment). And yes, this is not a good answer and I'd rather not see it accepted (and I wrote this before seeing your last comment). Since it is accepted, however, I have at least tried to make it i) work, unlike the previous version and ii) robust.
- mikeserv (almost 9 years): Well... Do you know about touch's -r? Conceivably, a very robust solution involving find might be one wherein you recursively touch (sounds naughty) files in the current directory against some -referenced oldest mod-time. Basically, if you can cycle through 60 files which are newer than your touch -reference file, all others are older and ought to be disposed of. Of course, a solution like that wouldn't really need find - or ls - if the shell supported the -[on]t test argument anyway. Oh, -1!
- mikeserv (almost 9 years): Regarding Solaris, the asker's history might show that out. Even on the questions which do not specifically mention Solaris in the title, the asker seems to indicate somewhere in the comments or question body that the intended system is a Solaris one.