How to incrementally sample without replacement?

Solution 1

Note to readers from OP: Please consider looking at the originally accepted answer to understand the logic, and then understand this answer.

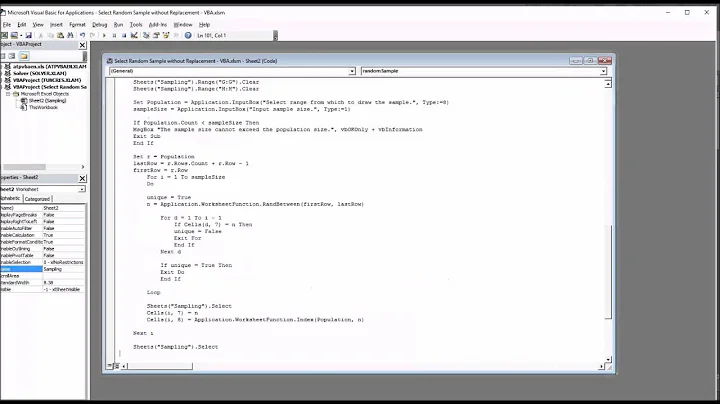

Aaaaaand for completeness sake: This is the concept of necromancer’s answer, but adapted so it takes a list of forbidden numbers as input. This is just the same code as in my previous answer, but we build a state from forbid, before we generate numbers.

- This is time

O(f+k)and memoryO(f+k). Obviously this is the fastest thing possible without requirements towards the format offorbid(sorted/set). I think this makes this a winner in some way ^^. - If

forbidis a set, the repeated guessing method is faster withO(k⋅n/(n-(f+k))), which is very close toO(k)forf+knot very close ton. - If

forbidis sorted, my ridiculous algorithm is faster with:

import random

def sample_gen(n, forbid):

state = dict()

track = dict()

for (i, o) in enumerate(forbid):

x = track.get(o, o)

t = state.get(n-i-1, n-i-1)

state[x] = t

track[t] = x

state.pop(n-i-1, None)

track.pop(o, None)

del track

for remaining in xrange(n-len(forbid), 0, -1):

i = random.randrange(remaining)

yield state.get(i, i)

state[i] = state.get(remaining - 1, remaining - 1)

state.pop(remaining - 1, None)

usage:

gen = sample_gen(10, [1, 2, 4, 8])

print gen.next()

print gen.next()

print gen.next()

print gen.next()

Solution 2

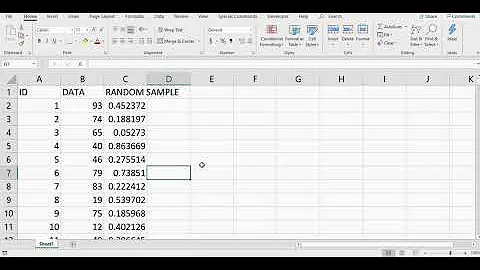

If you know in advance that you're going to want to multiple samples without overlaps, easiest is to do random.shuffle() on list(range(100)) (Python 3 - can skip the list() in Python 2), then peel off slices as needed.

s = list(range(100))

random.shuffle(s)

first_sample = s[-10:]

del s[-10:]

second_sample = s[-10:]

del s[-10:]

# etc

Else @Chronial's answer is reasonably efficient.

Solution 3

Ok, here we go. This should be the fastest possible non-probabilistic algorithm. It has runtime of O(k⋅log²(s) + f⋅log(f)) ⊂ O(k⋅log²(f+k) + f⋅log(f))) and space O(k+f). f is the amount of forbidden numbers, s is the length of the longest streak of forbidden numbers. The expectation for that is more complicated, but obviously bound by f. If you assume that s^log₂(s) is bigger than f or are just unhappy about the fact that s is once again probabilistic, you can change the log part to a bisection search in forbidden[pos:] to get O(k⋅log(f+k) + f⋅log(f)).

The actual implementation here is O(k⋅(k+f)+f⋅log(f)), as insertion in the list forbid is O(n). This is easy to fix by replacing that list with a blist sortedlist.

I also added some comments, because this algorithm is ridiculously complex. The lin part does the same as the log part, but needs s instead of log²(s) time.

import bisect

import random

def sample(k, end, forbid):

forbidden = sorted(forbid)

out = []

# remove the last block from forbidden if it touches end

for end in reversed(xrange(end+1)):

if len(forbidden) > 0 and forbidden[-1] == end:

del forbidden[-1]

else:

break

for i in xrange(k):

v = random.randrange(end - len(forbidden) + 1)

# increase v by the number of values < v

pos = bisect.bisect(forbidden, v)

v += pos

# this number might also be already taken, find the

# first free spot

##### linear

#while pos < len(forbidden) and forbidden[pos] <=v:

# pos += 1

# v += 1

##### log

while pos < len(forbidden) and forbidden[pos] <= v:

step = 2

# when this is finished, we know that:

# • forbidden[pos + step/2] <= v + step/2

# • forbidden[pos + step] > v + step

# so repeat until (checked by outer loop):

# forbidden[pos + step/2] == v + step/2

while (pos + step <= len(forbidden)) and \

(forbidden[pos + step - 1] <= v + step - 1):

step = step << 1

pos += step >> 1

v += step >> 1

if v == end:

end -= 1

else:

bisect.insort(forbidden, v)

out.append(v)

return out

Now to compare that to the “hack” (and the default implementation in python) that Veedrac proposed, which has space O(f+k) and (n/(n-(f+k)) is the expected number of “guesses”) time:

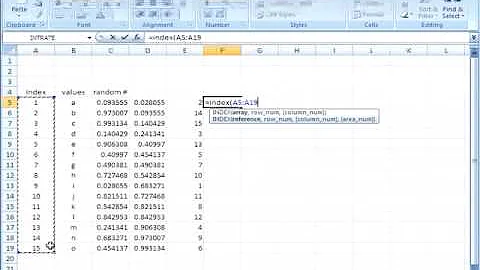

I just plotted this for k=10 and a reasonably big n=10000 (it only gets more extreme for bigger n). And I have to say: I only implemented this because it seemed like a fun challenge, but even I am surprised by how extreme this is:

Let’s zoom in to see what’s going on:

Yes – the guesses are even faster for the 9998th number you generate. Note that, as you can see in the first plot, even my one-liner is probably faster for bigger f/n (but still has rather horrible space requirements for big n).

To drive the point home: The only thing you are spending time on here is generating the set, as that’s the f factor in Veedrac’s method.

So I hope my time here was not wasted and I managed to convince you that Veedrac’s method is simply the way to go. I can kind of understand why that probabilistic part troubles you, but maybe think of the fact that hashmaps (= python dicts) and tons of other algorithms work with similar methods and they seem to be doing just fine.

You might be afraid of the variance in the number of repetitions. As noted above, this follows a geometric distribution with p=n-f/n. So the standard deviation (=the amount you “should expect” the result to deviate from the expected average) is

Which is basically the same as the mean (√f⋅n < √n² = n).

****edit**:

I just realized that s is actually also n/(n-(f+k)). So a more exact runtime for my algorithm is O(k⋅log²(n/(n-(f+k))) + f⋅log(f)). Which is nice since given the graphs above, it proves my intuition that that is quite a bit faster than O(k⋅log(f+k) + f⋅log(f)). But rest assured that that also does not change anything about the results above, as the f⋅log(f) is the absolutely dominant part in the runtime.

Solution 4

The short way

If the number sampled is much less than the population, just sample, check if it's been chosen and repeat while so. This might sound silly, but you've got an exponentially decaying possibility of choosing the same number, so it's much faster than O(n) if you've got even a small percentage unchosen.

The long way

Python uses a Mersenne Twister as its PRNG, which is goodadequate. We can use something else entirely to be able to generate non-overlapping numbers in a predictable manner.

Quadratic residues,

x² mod p, are unique when2x < pandpis a prime.If you "flip" the residue,

p - (x² % p), given this time also thatp = 3 mod 4, the results will be the remaining spaces.This isn't a very convincing numeric spread, so you can increase the power, add some fudge constants and then the distribution is pretty good.

First we need to generate primes:

from itertools import count

from math import ceil

from random import randrange

def modprime_at_least(number):

if number <= 2:

return 2

number = (number // 4 * 4) + 3

for number in count(number, 4):

if all(number % factor for factor in range(3, ceil(number ** 0.5)+1, 2)):

return number

You might worry about the cost of generating the primes. For 10⁶ elements this takes a tenth of a millisecond. Running [None] * 10**6 takes longer than that, and since it's only calculated once, this isn't a real problem.

Further, the algorithm doesn't need an exact value for the prime; is only needs something that is at most a constant factor larger than the input number. This is possible by saving a list of values and searching them. If you do a linear scan, that is O(log number) and if you do a binary search it is O(log number of cached primes). In fact, if you use galloping you can bring this down to O(log log number), which is basically constant (log log googol = 2).

Then we implement the generator

def sample_generator(up_to):

prime = modprime_at_least(up_to+1)

# Fudge to make it less predictable

fudge_power = 2**randrange(7, 11)

fudge_constant = randrange(prime//2, prime)

fudge_factor = randrange(prime//2, prime)

def permute(x):

permuted = pow(x, fudge_power, prime)

return permuted if 2*x <= prime else prime - permuted

for x in range(prime):

res = (permute(x) + fudge_constant) % prime

res = permute((res * fudge_factor) % prime)

if res < up_to:

yield res

And check that it works:

set(sample_generator(10000)) ^ set(range(10000))

#>>> set()

Now, the lovely thing about this is that if you ignore the primacy test, which is approximately O(√n) where n is the number of elements, this algorithm has time complexity O(k), where k is the sample sizeit's and O(1) memory usage! Technically this is O(√n + k), but practically it is O(k).

Requirements:

You do not require a proven PRNG. This PRNG is far better then linear congruential generator (which is popular; Java uses it) but it's not as proven as a Mersenne Twister.

You do not first generate any items with a different function. This avoids duplicates through mathematics, not checks. Next section I show how to remove this restriction.

The short method must be insufficient (

kmust approachn). Ifkis only halfn, just go with my original suggestion.

Advantages:

Extreme memory savings. This takes constant memory... not even

O(k)!Constant time to generate the next item. This is actually rather fast in constant terms, too: it's not as fast as the built-in Mersenne Twister but it's within a factor of 2.

Coolness.

To remove this requirement:

You do not first generate any items with a different function. This avoids duplicates through mathematics, not checks.

I have made the best possible algorithm in time and space complexity, which is a simple extension of my previous generator.

Here's the rundown (n is the length of the pool of numbers, k is the number of "foreign" keys):

Initialisation time O(√n); O(log log n) for all reasonable inputs

This is the only factor of my algorithm that technically isn't perfect with regards to algorithmic complexity, thanks to the O(√n) cost. In reality this won't be problematic because precalculation brings it down to O(log log n) which is immeasurably close to constant time.

The cost is amortized free if you exhaust the iterable by any fixed percentage.

This is not a practical problem.

Amortized O(1) key generation time

Obviously this cannot be improved upon.

Worst-case O(k) key generation time

If you have keys generated from the outside, with only the requirement that it must not be a key that this generator has already produced, these are to be called "foreign keys". Foreign keys are assumed to be totally random. As such, any function that is able to select items from the pool can do so.

Because there can be any number of foreign keys and they can be totally random, the worst case for a perfect algorithm is O(k).

Worst-case space complexity O(k)

If the foreign keys are assumed totally independent, each represents a distinct item of information. Hence all keys must be stored. The algorithm happens to discard keys whenever it sees one, so the memory cost will clear over the lifetime of the generator.

The algorithm

Well, it's both of my algorithms. It's actually quite simple:

def sample_generator(up_to, previously_chosen=set(), *, prune=True):

prime = modprime_at_least(up_to+1)

# Fudge to make it less predictable

fudge_power = 2**randrange(7, 11)

fudge_constant = randrange(prime//2, prime)

fudge_factor = randrange(prime//2, prime)

def permute(x):

permuted = pow(x, fudge_power, prime)

return permuted if 2*x <= prime else prime - permuted

for x in range(prime):

res = (permute(x) + fudge_constant) % prime

res = permute((res * fudge_factor) % prime)

if res in previously_chosen:

if prune:

previously_chosen.remove(res)

elif res < up_to:

yield res

The change is as simple as adding:

if res in previously_chosen:

previously_chosen.remove(res)

You can add to previously_chosen at any time by adding to the set that you passed in. In fact, you can also remove from the set in order to add back to the potential pool, although this will only work if sample_generator has not yet yielded it or skipped it with prune=False.

So there is is. It's easy to see that it fulfils all of the requirements, and it's easy to see that the requirements are absolute. Note that if you don't have a set, it still meets its worst cases by converting the input to a set, although it increases overhead.

Testing the RNG's quality

I became curious how good this PRNG actually is, statistically speaking.

Some quick searches lead me to create these three tests, which all seem to show good results!

Firstly, some random numbers:

N = 1000000

my_gen = list(sample_generator(N))

target = list(range(N))

random.shuffle(target)

control = list(range(N))

random.shuffle(control)

These are "shuffled" lists of 10⁶ numbers from 0 to 10⁶-1, one using our fun fudged PRNG, the other using a Mersenne Twister as a baseline. The third is the control.

Here's a test which looks at the average distance between two random numbers along the line. The differences are compared with the control:

from collections import Counter

def birthdat_calc(randoms):

return Counter(abs(r1-r2)//10000 for r1, r2 in zip(randoms, randoms[1:]))

def birthday_compare(randoms_1, randoms_2):

birthday_1 = sorted(birthdat_calc(randoms_1).items())

birthday_2 = sorted(birthdat_calc(randoms_2).items())

return sum(abs(n1 - n2) for (i1, n1), (i2, n2) in zip(birthday_1, birthday_2))

print(birthday_compare(my_gen, target), birthday_compare(control, target))

#>>> 9514 10136

This is less than the variance of each.

Here's a test which takes 5 numbers in turn and sees what order the elements are in. They should be evenly distributed between all 120 possible orders.

def permutations_calc(randoms):

permutations = Counter()

for items in zip(*[iter(randoms)]*5):

sorteditems = sorted(items)

permutations[tuple(sorteditems.index(item) for item in items)] += 1

return permutations

def permutations_compare(randoms_1, randoms_2):

permutations_1 = permutations_calc(randoms_1)

permutations_2 = permutations_calc(randoms_2)

keys = sorted(permutations_1.keys() | permutations_2.keys())

return sum(abs(permutations_1[key] - permutations_2[key]) for key in keys)

print(permutations_compare(my_gen, target), permutations_compare(control, target))

#>>> 5324 5368

This is again less than the variance of each.

Here's a test that sees how long "runs" are, aka. sections of consecutive increases or decreases.

def runs_calc(randoms):

runs = Counter()

run = 0

for item in randoms:

if run == 0:

run = 1

elif run == 1:

run = 2

increasing = item > last

else:

if (item > last) == increasing:

run += 1

else:

runs[run] += 1

run = 0

last = item

return runs

def runs_compare(randoms_1, randoms_2):

runs_1 = runs_calc(randoms_1)

runs_2 = runs_calc(randoms_2)

keys = sorted(runs_1.keys() | runs_2.keys())

return sum(abs(runs_1[key] - runs_2[key]) for key in keys)

print(runs_compare(my_gen, target), runs_compare(control, target))

#>>> 1270 975

The variance here is very large, and over several executions I have seems an even-ish spread of both. As such, this test is passed.

A Linear Congruential Generator was mentioned to me, as possibly "more fruitful". I have made a badly implemented LCG of my own, to see whether this is an accurate statement.

LCGs, AFAICT, are like normal generators in that they're not made to be cyclic. Therefore most references I looked at, aka. Wikipedia, covered only what defines the period, not how to make a strong LCG of a specific period. This may have affected results.

Here goes:

from operator import mul

from functools import reduce

# Credit http://stackoverflow.com/a/16996439/1763356

# Meta: Also Tobias Kienzler seems to have credit for my

# edit to the post, what's up with that?

def factors(n):

d = 2

while d**2 <= n:

while not n % d:

yield d

n //= d

d += 1

if n > 1:

yield n

def sample_generator3(up_to):

for modulier in count(up_to):

modulier_factors = set(factors(modulier))

multiplier = reduce(mul, modulier_factors)

if not modulier % 4:

multiplier *= 2

if multiplier < modulier - 1:

multiplier += 1

break

x = randrange(0, up_to)

fudge_constant = random.randrange(0, modulier)

for modfact in modulier_factors:

while not fudge_constant % modfact:

fudge_constant //= modfact

for _ in range(modulier):

if x < up_to:

yield x

x = (x * multiplier + fudge_constant) % modulier

We no longer check for primes, but we do need to do some odd things with factors.

modulier ≥ up_to > multiplier, fudge_constant > 0-

a - 1must be divisible by every factor inmodulier... - ...whereas

fudge_constantmust be coprime withmodulier

Note that these aren't rules for a LCG but a LCG with full period, which is obviously equal to the modulier.

I did it as such:

- Try every

modulierat leastup_to, stopping when the conditions are satisfied- Make a set of its factors,

𝐅 - Let

multiplierbe the product of𝐅with duplicates removed - If

multiplieris not less thanmodulier, continue with the nextmodulier - Let

fudge_constantbe a number less thatmodulier, chosen randomly - Remove the factors from

fudge_constantthat are in𝐅

- Make a set of its factors,

This is not a very good way of generating it, but I don't see why it would ever impinge the quality of the numbers, aside from the fact that low fudge_constants and multiplier are more common than a perfect generator for these might make.

Anyhow, the results are appalling:

print(birthday_compare(lcg, target), birthday_compare(control, target))

#>>> 22532 10650

print(permutations_compare(lcg, target), permutations_compare(control, target))

#>>> 17968 5820

print(runs_compare(lcg, target), runs_compare(control, target))

#>>> 8320 662

In summary, my RNG is good and a linear congruential generator is not. Considering that Java gets away with a linear congruential generator (although it only uses the lower bits), I would expect my version to be more than sufficient.

Solution 5

OK, one last try ;-) At the cost of mutating the base sequence, this takes no additional space, and requires time proportional to n for each sample(n) call:

class Sampler(object):

def __init__(self, base):

self.base = base

self.navail = len(base)

def sample(self, n):

from random import randrange

if n < 0:

raise ValueError("n must be >= 0")

if n > self.navail:

raise ValueError("fewer than %s unused remain" % n)

base = self.base

for _ in range(n):

i = randrange(self.navail)

self.navail -= 1

base[i], base[self.navail] = base[self.navail], base[i]

return base[self.navail : self.navail + n]

Little driver:

s = Sampler(list(range(100)))

for i in range(9):

print s.sample(10)

print s.sample(1)

print s.sample(1)

In effect, this implements a resumable random.shuffle(), pausing after n elements have been selected. base is not destroyed, but is permuted.

Related videos on Youtube

necromancer

Updated on February 21, 2020Comments

-

necromancer about 4 years

Python has

my_sample = random.sample(range(100), 10)to randomly sample without replacement from[0, 100).Suppose I have sampled

nsuch numbers and now I want to sample one more without replacement (without including any of the previously sampledn), how to do so super efficiently?update: changed from "reasonably efficiently" to "super efficiently" (but ignoring constant factors)

-

Chronial over 10 yearsDo you only want to sample integers in a

[0, x)range? What is your expectedx? -

necromancer over 10 years[0, n) works for me. i can make any problem fit into it

-

Chronial over 10 yearsIs that what you need or not? Making another problem fit into that costs a severe amount of time and is very relevant considering the tight bounds you are asking for.

-

Eric over 10 yearsYou might want to look at the source for

random.sample -

necromancer over 10 yearsWhat a thread! A simple question end up needing a 300 pt bounty as sheer gratitude for amazing answers. 4 answers from 1 person. 3 answers from another. 1 answer from OP that served as basis for correct answer. 1 amazing thesis-like answer which actually includes multiple sub-answers. Happy conclusions I hope. Thank you everybody. :-)

-

-

necromancer over 10 years+1 the problem is that if the n is large, say 10,000,000 then explicitly constructing a set will blow up

-

necromancer over 10 years+1 thanks, still looking for perfect solution where i can pass in the list of previously sampled objects and it

smartly(@Chronial's answer uses brute force) to sample the next one. -

Chronial over 10 yearsThat’s right, but know that constructing a set is still

O(n)so even for 10,000,00 it takes less then a second. -

Chronial over 10 years@no_answer_not_upvoted I think you’ve seen all possibilities. If your range() is small, use my answer, if your sample size is small, use Veedrac’s answer. If both of them are massive, state that in your question and hope someone gives you a more complicated algorithm. But note that that would be slower in the first two cases.

-

Chronial over 10 yearsNice solution, but I would recommend storing the current index instead of deleting, as that is quite slow. Or use queue.

-

Tim Peters over 10 years@no_answer_not_upvoted, good luck on that ;-) It will need to look at every "forbidden" value you pass in, and will need to look at every value in the base list to ensure that each is not in the forbidden list. Without smarter data structures to start with, it has to take time at least proportional to the sum of the sizes of both lists (base list and forbidden list).

-

necromancer over 10 years@Chronial thanks, there is the possibility of doing a "virtual set difference" without instantiating the entire resultant set difference and hopefully there is an implementation of that somewhere.

-

Tim Peters over 10 years@Chronial, no, deleting is very fast here: that's why it deletes from the end of the list. No items need to be moved. CPython just decrements the length of the list, and decref's the pointers in the tail.

-

Chronial over 10 years@TimPeters Ahhh, nice – that’s so clever I didn’t even notice :). I just assumed you’d take and delete from the start of the list without even looking.

-

necromancer over 10 years@Chronial i was talking about the set difference your answer. this answer is fine where it applies.

-

necromancer over 10 years+1 thank you, if i understand this correctly, this is

O(size(base))? comparing with @Chronialis's answer, your space complexity is lower but the time complexity is on average the same? (ps: sampling just 1 would be ok to simplify the logic). -

Tim Peters over 10 yearsYes,

O(len(base))time per call. But if you exhaust the base set (as my sample driver did),len(forbidden) == len(base)at the end of all the calls - you still end up with a set of the same size asbase. Sorry, I don't understand what "sampling just 1" means. If you only want a sample of size 1, pass1fornto mysample()function. If you hard-coded1into it, the code would get a little simpler, but not a lot. The "hard part" remains skipping over all the elements returned on previous calls. -

Tim Peters over 10 yearsBTW, you should think about my

shuffleanswer again. It's by far the most efficient way to do this if you're going to take multiple samples:O(len(base))time on the first call, andO(n)time for each subsequent call asking for a sample of sizen. And it takes no extra space if you're willing to letbaseget destroyed. -

necromancer over 10 yearsno doubt, but if the range is huge and I am selecting a small number of samples it is overkill. It is also an

O(range)solution which I am trying to avoid. -

Tim Peters over 10 yearsYou really need to be more precise about what you want ;-) For example, you told @Veedrac "the number sampled gets close enough to hit my failsafe on this logic", implying that you're going to eventually use up almost all the elements of

base. But here you're telling me that's not the case. I can't guess what you really need. As precisely as possible, what are your time and space requirements? How large can "huge" be? Are you willing to mutate the base sequence? -

necromancer over 10 yearssorry for the confusion. what i am really looking for probably is not available as a library. suppose the range is 10,000,000 and i have sampled 10, and i want to sample one single 11th. this could be done by choosing a random integer in

[0, 9,999,990)and then incrementing it by 1 for each of my previously chosen 10 that is below my chosen number. this is highly efficient, even if you sample all (but not as efficient as shuffle for sample all). i tried to implement this myself and was wondering if python had some library that did this incremental sample. -

necromancer over 10 years(i absolutely detest the hack described in @Veedrac's answer. my remark was in the context of my prototype with limited data which uses that hack. i am now trying to replace that hack with the right solution.)

-

necromancer over 10 yearsthanks so much :-) at the very least a +1 until i understand it. i do see that it uses

O(range)memory but still if i don't write a better one myself i will accept it for effort. thank you again! -

Chronial over 10 years@no_answer_not_upvoted: If you detest that “hack”, I would strongly advice against using the python

sample()function -

necromancer over 10 years@Chronial thanks for that tip, i didn't know that's what the python sample did!!

-

Tim Peters over 10 yearsPython's

random.sample()uses 2 methods internally, "accept/reject" if the sample size is small compared to the population size, and more akin torandom.shuffle()otherwise. -

Tim Peters over 10 yearsLOL - you still haven't defined your problem ;-) If the size of your range is much larger than the total sizes of the samples you expect to extract, then @Veedrac's is an excellent approach. At least in Python 3, it will use a small amount of memory even if the

ninrange(n)is huge (in Python 2, much the same if you usexrange(n)instead). -

necromancer over 10 yearsyou guys have me caught between a rock and a hard place. that's how i feel about the two solutions. sigh..

-

necromancer over 10 yearswoops did i forget the +1 originally? well +1-ed. feel free to triple dip too ;-)

-

Chronial over 10 yearsBut note that they are not doing that because of time-complexity (which would also be a stupid idea as you can see in plots I posted below), but for space-complexity. As you would expect, python is once again crazy optimized ^^. Btw: it uses the shuffle method around k > n/3.

-

Chronial over 10 years@TimPeters I have looked at that for quite some time – at first I just ran the code and checked for mean and std.dev., then I thought about the math. It all checkout out, but I still can’t believe that this returns the proper distribution. But it just does ^^.

-

necromancer over 10 yearswow... +1 for now .. i promise to fully understand this ASAP!! thank you so much!

-

Tim Peters over 10 yearsYa, practical or not, it's pretty :-) It's just an elaboration of Knuth's algorithm S in section 3.4.2 - see his book for a correctness proof.

-

Chronial over 10 yearsFeel free to ask questions if you any problems.

-

Tim Peters over 10 years@Chronial, I don't know whether to laugh, cry, or applaud. So some of each: LOL, mwah, bravo! ;-) It is indeed an heroic solution to a problem nobody has ;-)

-

Chronial over 10 years@TimPeters yep, obviously I don’t have any useful algorithms to write ^^.

-

necromancer over 10 yearsi don't yet understand it, but hey, it is worth pushing you over to 10k whenever SO lets me do it (or if somebody else gives an even better answer, :D)

-

necromancer over 10 years@TimPeters it's on! it's funny how this question has yielded multiple single-author answers from multiple authors. note the change in the stakes ;-)

-

necromancer over 10 yearsps: so much for instant gratification. i thought i could instantly push you over 10k, but SO makes me wait. actually, it kinda makes it exciting .. enough time for @TimPeters to add a few more answers ;-)

-

Tim Peters over 10 yearsEric, this is basically the same as one of my earlier answers. Note that second argument to

xrange()here should be 0, not 1 (e.g.list(xrange(4, 1, -1)is[4, 3, 2]-range/xrangealways quit before thestopargument. -

Eric over 10 years@TimPeters: Had that before and decided to change it for some reason... Fixed now. Yes, this is algorithmically the same, but I think it's more cleanly implemented as an iterator.

-

necromancer over 10 yearsI would appreciate if somebody could point out any inefficiencies relative to other answers.

-

necromancer over 10 years@Eric I just added my own answer. Any thoughts?

-

necromancer over 10 years@Chronial the bounty will almost surely be yours for the effort, but I am not sure if all that complexity is needed or helpful. See my own answer. It does the incremental sampling without any loops. Your critique would be appreciated.

-

necromancer over 10 yearsI have added my own answer. A critique would be appreciated. Thanks!

-

necromancer over 10 years@Chronial as promised, bounty is yours. enjoy the 10+k :-) I still feel your answer is not the correct one compared to the rather simple solution I have just posted. I will probably accept my own after factoring in any critique. Your thoughts will be appreciated.

-

Veedrac over 10 yearsWhat's the advantage over the Veedrac Hack? Keeping track of a few auxiliary variables is less than keeping a whole dictionary so I don't see where you could ever use this but not mine.

Veedrac over 10 yearsWhat's the advantage over the Veedrac Hack? Keeping track of a few auxiliary variables is less than keeping a whole dictionary so I don't see where you could ever use this but not mine. -

Veedrac over 10 yearsAlso, I request a walkthrough of the algorithm. It looks cool but I'm finding it difficult to decipher. I want to check for the edge-cases but I can't until I'm confident I know how it works. // EDIT: Is this perchance basically a

Veedrac over 10 yearsAlso, I request a walkthrough of the algorithm. It looks cool but I'm finding it difficult to decipher. I want to check for the edge-cases but I can't until I'm confident I know how it works. // EDIT: Is this perchance basically adiff'd version of Eric's? 'Cause it's seriously cool if it is. -

Tim Peters over 10 yearsSeriously cool! I'm going to post a rewrite that's easier to use, and perhaps to understand - I don't want to "enter" it, I just want to post it for posterity ;-) About the time complexity claim, doesn't make sense: this is

O(1)per element extraction, soO(k)for getting a sample of sizek. -

necromancer over 10 years@TimPeters Thank you so much. I am pressed for time hence the succinct version. It is not quite

O(1)because the dictionary needs to be accessed, which is anO(log(k))operation. -

Tim Peters over 10 yearsNo, dict access is

O(1)time in CPython. That's expected time. Worst-case time isO(len(dict))time, but that's never seen. But, to believe theO(1)claim, you need to have faith in probability ;-) -

necromancer over 10 years@TimPeters It would be rather hypocritical of me to develop a belated faith in it given that I wrote this in protest of Veedrack's probabilistic solution :D Either way, it is good to know.

-

Tim Peters over 10 yearsActually, because ints are their own hash code in CPython, dict access when the keys are taken from a contiguous range of integers is guaranteed

O(1)time (best, expected, and worst cases). No love of probability required in this case ;-) -

necromancer over 10 years@Veedrac The essence of the algorithm is this: suppose you instantiated an array

[0, 1, 2, 3, 4, 5, 6, 7, 8, 9]and sampled3rd position, then you move the last element into its place[0, 1, 2, 9, 4, 5, 6, 7, 8], then sample say5th positionand the array becomes[0, 1, 2, 9, 4, 8, 6, 7]and so forth... Now, keeping the array would space expensive, so I only keep a dict for elements that were moved .. sodict(3)=9, dict(5)=8.. to samplekth position ifkexists indict, use that element else the element iskitself. no array needed. -

Chronial over 10 yearsThis is indeed a very nice variation on Tim's solution. My only problem with that is that like Tim's solution, it does not solve your stated problem: “Still looking for perfect solution where i can pass in the list of previously sampled objects”.

-

Chronial over 10 years@TimPeters The integers here are not from a continuous range, so it is definitely possible to hit the worst case somewhere during the loop. But if you assume that, then the algorithm is

O(number_previously_sampled)and notO(log(number_previously_sampled)). -

Tim Peters over 10 yearsPoint taken! The expected access time remains

O(1); CPython keeps the load factor of the hash table under 2/3, so truly bad cases are astronomically unlikely. -

Chronial over 10 years@TimPeters Astronomically unlikely – yes, but that also doesn’t stop no_answer_not_upvoted from disliking Veedrac’s answer ;). Btw: I think I win the pretty contest :P.

-

necromancer over 10 years@Chronial I don't see the

O(number_previously_sampled). The worst case is when you have previously sampled[0, n/2)so that the dict hasn/2keys. At this point if you incrementally sample one more number then you have to do just a couple of dict operations which should beO(log(n)).. right? -

necromancer over 10 years+1 Thank you for the elegant rewrite. I am new to Python so this helps me learn too.

-

Chronial over 10 yearsNo, the worst case on dict access with

nentries is O(n) – see [the python wiki]{wiki.python.org/moin/TimeComplexity). If all of your occupied indexes get the same hash, the dict degenerates into an (unsorted!) linked list. -

necromancer over 10 years@Chronial gotcha. It doesn't bother me because the hash dict could be substituted out with a tree implementation, probably a custom impleentation. Algorithm fundamentals > language. You're right re the deficiency “Still looking for perfect solution where i can pass in the list of previously sampled objects”. I think that safely cannot be satisfied because just to construct the dict or just to sort the previously sampled objects would be

O(n.log(n))or just to read themO(n). So that was an unreasonable demand on my part for an incremental sampler. Your brave attempt cost me 300 rep! ;-) -

Chronial over 10 yearsThanks for those sweet 300 rep :). I think your concept here is even a good base for a stateless algorithm: given the forbidden numbers, you can generate a matching

stateinO(f). So samplingknumbers isO(f+k), which is slightly faster than my algorithm. -

necromancer over 10 yearsTimPeters you get to pick the correct answer (hopefully between this one and Chronial's rewrite which I almost prefer but since he already has 300 rep and you have contributed hugely). Thanks so much for your contributions -- what started out as a simple question turned out to be a somewhat legendary thread. Multiple single-author answers, self-answer, and @Veedrac's semi-thesis which ended up in the community wiki.

-

Tim Peters over 10 years@Chronial, because ints are their own hash code in CPython, it's impossible for all the hash codes to be equal - or even for any two of them to be equal (except for hash(-1) == -2 == hash(-2) - don't ask ;-)), unless these are longs (unbounded integers) larger than the 32 or 64 bit hashes used on the platform. There's no linked list in any case. I wrote most of Python's current dict implementation, so I can go on about this for a very long time ;-)

-

Tim Peters over 10 yearsNo contest - @Chronial's is prettiest! This was a lot of fun, but the cleanest code wins :-) Having said that, I wouldn't use his version - or mine. I want an interface that lets me specify how many samples to take "in one gulp". Messing with (e.g.)

itertools.slice()to get that complicates life for the user, and obfuscates the intent for the code reader. Code like this would remain the core of it, though. Thanks to all for playing! :-) -

Chronial over 10 years@TimPeters the hash used for integer key

ion dict sizesisi%s, thus if all your keys arex*s, they all get the same hash 0. Just checked on the implementation and it seems the higher order bits will also have to fulfill certain properties for the collision resolution to go properly wrong, but since we are talking random, that’s of course possible (even though massively unlikely, but no_answer_not_upvoted seems to just have that irrational fear of uncertainties :). Or am I missing something? Btw: Your are the timsort Tim? *hail* -

Chronial over 10 years@TimPeters is there any reason why you did not use a generator here? It seems to just perfectly fit the situation. Or are there some drawbacks I’m missing?

-

Tim Peters over 10 yearsYup, that's me ;-) All the bits of the full hash code eventually come into play - as the comments say, "smart" collision resolution is necessary here to avoid easily provoked bad behavior. Especially since your

sis always a power of 2: the starting index is just some of the last bits of the full hash code (that's why there are no starting-index collisions if the keys are a contiguous range of integers). Bad cases can still be contrived, but they're really contrived ;-) -

necromancer over 10 years@TimPeters and Chronial since you both love hashing, here's a link that might be interesting state-of-the-art: en.wikipedia.org/wiki/Cuckoo_hashing "with worst-case constant lookup time", cool huh?

-

necromancer over 10 years@TimPeters I think Chronial's "if-less" version is masterful indeed so I accepted it. I wanted to add another bounty for your contributions but the way StackOverflow bounties work is that the minimum amount I can give in a second bounty for this question is 500 points, and it would be too big a hit to my rep. Good to have your answers, esp. from THE Tim Peters!! It is a privilege :-) Will look forward to following your other answers. PS: Somebody made almost a 1000 points of rep just by asking a question based on something you wrote: stackoverflow.com/questions/228181/zen-of-python :)

-

Tim Peters over 10 yearsIt's all good - I view "reputation" here like "leveling up" in FarmVille - a curious artifact of the rules ;-) I didn't use a generator because it's simply a wrong approach for most plausible uses of this: a one-at-a-time implementation is a poor fit for a function that's almost always going to ask for

k-at-a-time. And people who care about random sampling usually care a lot about wall-clock speed, not justO()behavior. So in any real deployment of this, I'd have a loop gathering - and returning -ksamples per call. Faster and a better fit. -

Tim Peters over 10 yearsBy the way, this code is waaaay too wordy ;-) You can get rid of the final line (

state.pop(...)), and in the penultimate line replacegetwithpop. Then it's a good answer - LOL ;-) -

Chronial over 10 years@TimPeters hehe, no. The reason why there is a

getand and apopis that it is possible thati=remaining-1. With just apopwe remove the item and just re-add it again. I would say thatremainingis either large and this event very unlikely orremainingis small and we will be finished soon anyways, so the “leak” is not too problematic. But I wanted to be thorough :). -

Tim Peters over 10 yearsAh! Got it. My apologies for attempting to gild the lily ;-)