How to properly pickle sklearn pipeline when using custom transformer

Solution 1

I found a pretty straightforward solution. Assuming you are using Jupyter notebooks for training:

- Create a `.py` file where the custom transformer is defined, and import it into the Jupyter notebook.

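This is the file `custom_transformer.py`:

```python
# custom_transformer.py
from sklearn.base import BaseEstimator, TransformerMixin


class FilterOutBigValuesTransformer(TransformerMixin, BaseEstimator):
    """Drops rows whose `c1` value exceeds the largest `c1` seen during fit."""

    def __init__(self):
        # No hyperparameters; BaseEstimator provides get_params/set_params.
        pass

    def fit(self, X, y=None):
        # X is expected to be a pandas DataFrame with a numeric column `c1`.
        self.biggest_value = X.c1.max()
        return self

    def transform(self, X):
        # Keep only rows whose `c1` does not exceed the value learned in fit.
        return X.loc[X.c1 <= self.biggest_value]
```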

- Train your model, importing this class from the `.py` file, and save it using `joblib`.

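```python
import joblib  # sklearn.externals.joblib was removed in scikit-learn 0.23
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import MinMaxScaler

from custom_transformer import FilterOutBigValuesTransformer

pipeline = Pipeline([
    ('filter', FilterOutBigValuesTransformer()),
    ('encode', MinMaxScaler()),
])

X = load_some_pandas_dataframe()  # placeholder for your own data loading
pipeline.fit(X)

# The pickle records the class as custom_transformer.FilterOutBigValuesTransformer
joblib.dump(pipeline, 'pipeline.pkl')
```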

- When loading the `.pkl` file in a different Python script, you will have to make the `.py` file importable, under the same module name it had during training, in order to make it work:

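```python
import joblib

# Must be importable under the module name recorded at dump time
# (`custom_transformer`); e.g. add `utils` to sys.path if the file lives there.
import custom_transformer  # noqa: F401

pipeline = joblib.load('pipeline.pkl')
```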
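Before shipping `pipeline.pkl`, it can help to check that it loads in a fresh interpreter, which has none of the notebook's imports. A minimal sketch, assuming `joblib` is installed for the same Python executable; the helper name `loads_in_fresh_interpreter` is hypothetical:

```python
import subprocess
import sys


def loads_in_fresh_interpreter(path, cwd=None):
    """Return True if a brand-new Python process can joblib.load `path`.

    The child process starts without any of the current session's imports,
    so it hits the same ModuleNotFoundError/AttributeError another project
    would if a custom class is not importable. `cwd` lets you run the check
    from a directory that mimics the target project (for `python -c`, the
    child's sys.path normally starts with its working directory).
    """
    result = subprocess.run(
        [sys.executable, "-c", "import sys, joblib; joblib.load(sys.argv[1])", path],
        cwd=cwd,
        capture_output=True,
        text=True,
    )
    if result.returncode != 0:
        # Show the child's traceback to see which module or class was missing.
        print(result.stderr, file=sys.stderr)
    return result.returncode == 0
```

Pass an absolute path when also passing `cwd`, e.g. `loads_in_fresh_interpreter('/abs/path/pipeline.pkl', cwd='/path/to/use_project')` (hypothetical paths). A class defined directly in the notebook is recorded under `__main__`, so this check fails for it, which is exactly the situation the separate `.py` file avoids.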

Solution 2

I have created a workaround. I do not consider it a complete answer to my question, but it nonetheless let me move on from my problem.

Conditions for the workaround to work:

I. The pipeline may contain only two kinds of transformers:

- sklearn transformers

- custom transformers, but only with attributes of these types:

- number

- string

- list

- dict

or any combination of those, e.g. a list of dicts containing strings and numbers. What matters is that all attributes are JSON-serializable.

II. Pipeline step names must be unique (even when pipelines are nested).

In short, the model is stored as a directory of joblib-dumped files, plus a JSON file for the custom transformers and a JSON file with other information about the model.

I have created a function that goes through the steps of a pipeline and checks the `__module__` attribute of each transformer.

If `__module__` contains `sklearn`, it calls `joblib.dump` on the transformer, saving it under the name given in the steps (the first element of the step tuple) in the selected model directory.

Otherwise (no `sklearn` in `__module__`), it adds the transformer's `__dict__` to `result_dict` under the step name. At the end, I `json.dump` `result_dict` into the model directory as `result_dict.json`.

If you need to descend into a transformer, e.g. because there is a Pipeline inside a pipeline, you can probably run this function recursively by adding some rules to the beginning of the function, but then it becomes important that step/transformer names are always unique, even between the main pipeline and its sub-pipelines.
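The serialization function described above might look roughly like this. It is a sketch under the stated conditions (unique step names, JSON-serializable custom attributes); the name `save_pipeline_state`, the `.joblib` file extension, and the recursion into nested `Pipeline` objects (the extra rule at the start of the loop) are assumptions, not the author's code:

```python
import json
import os

import joblib
from sklearn.pipeline import Pipeline


def save_pipeline_state(pipeline, model_dir, result_dict=None):
    """Store the fitted state of every step of `pipeline` in `model_dir`.

    sklearn steps are joblib-dumped to <step name>.joblib; the __dict__ of
    custom steps is collected into result_dict.json. Assumes unique step
    names and JSON-serializable attributes on custom steps.
    """
    is_top_level = result_dict is None
    if is_top_level:
        result_dict = {}
        os.makedirs(model_dir, exist_ok=True)

    for name, step in pipeline.steps:
        if step is None or isinstance(step, str):
            continue  # 'passthrough' placeholders have no fitted state
        if isinstance(step, Pipeline):
            # Extra rule: descend into nested pipelines instead of dumping
            # them whole (they may contain custom transformers).
            save_pipeline_state(step, model_dir, result_dict)
        elif "sklearn" in type(step).__module__:
            joblib.dump(step, os.path.join(model_dir, f"{name}.joblib"))
        else:
            result_dict[name] = step.__dict__

    if is_top_level:
        with open(os.path.join(model_dir, "result_dict.json"), "w") as f:
            json.dump(result_dict, f)
    return result_dict
```

The `isinstance(step, Pipeline)` check has to come first: a nested `Pipeline` has `sklearn` in its `__module__`, so it would otherwise be dumped whole with joblib, custom steps included, which brings back the original import problem.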

If other information is needed to create the model pipeline, save it in `model_info.json`.

Then, to load the model for use, you need to create (without fitting) the same pipeline in the target project. If pipeline creation is somewhat dynamic and you need information from the source project, load it from `model_info.json`.

You can copy the function used for serialization and:

- replace all `joblib.dump` calls with `joblib.load`, and assign the `__dict__` of each loaded object to the `__dict__` of the corresponding object already in the pipeline

- replace every place where you added `__dict__` to `result_dict` with an assignment of the corresponding value from `result_dict` to the object's `__dict__` (remember to load `result_dict` from the file beforehand)

After running this modified function, the previously unfitted pipeline should have all the attributes that resulted from fitting loaded, and the pipeline as a whole should be ready to predict.
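A matching loader, again only a sketch: `load_pipeline_state` is a hypothetical name, and it assumes the files were written as `<step name>.joblib` plus `result_dict.json` in `model_dir`, with the same recursion into nested pipelines. Keep in mind that a JSON round trip turns tuples into lists and non-string dict keys into strings, so custom transformers should not rely on those types.

```python
import json
import os

import joblib
from sklearn.pipeline import Pipeline


def load_pipeline_state(pipeline, model_dir, result_dict=None):
    """Copy fitted state from `model_dir` into an unfitted, identically built pipeline.

    Mirror image of the serialization function: joblib.dump becomes
    joblib.load, and result_dict entries are copied back onto custom steps.
    """
    if result_dict is None:
        # Load result_dict.json once, before walking the steps.
        with open(os.path.join(model_dir, "result_dict.json")) as f:
            result_dict = json.load(f)

    for name, step in pipeline.steps:
        if step is None or isinstance(step, str):
            continue  # 'passthrough' placeholders have no fitted state
        if isinstance(step, Pipeline):
            load_pipeline_state(step, model_dir, result_dict)
        elif "sklearn" in type(step).__module__:
            fitted = joblib.load(os.path.join(model_dir, f"{name}.joblib"))
            # Give the object already in the pipeline the fitted attributes.
            step.__dict__.update(fitted.__dict__)
        else:
            step.__dict__.update(result_dict[name])
    return pipeline
```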

The main things I do not like about this solution are that it needs the pipeline code inside the target project and that it requires all attributes of custom transformers to be JSON-serializable. I leave it here for other people who stumble on a similar problem; maybe somebody comes up with something better.

Solution 3

Based on my research, it seems that the best solution is to create a Python package that includes your trained pipeline and all related files.

Then you can `pip install` it in the project where you want to use it and import the pipeline with `from <package name> import <pipeline name>`.
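One possible way to bundle the pickle is to ship the `.pkl` file as package data and read it through `importlib.resources`. Because the package is installed, its transformer module (e.g. `<package name>.transformers`) is importable from any project, so unpickling can resolve classes recorded under that path, provided training also imported them from the installed package. A minimal sketch; `load_bundled_pipeline` and the default file name are hypothetical, and the `.pkl` file must actually be included in the built package (for example via setuptools' `package_data`):

```python
from importlib import resources

import joblib


def load_bundled_pipeline(package, filename="pipeline.pkl"):
    """Load a joblib-pickled pipeline that ships inside the installed `package`."""
    # resources.files() imports `package` and returns a Traversable for its
    # directory, which works whether the package is installed or on sys.path.
    with resources.files(package).joinpath(filename).open("rb") as f:
        # joblib.load accepts an open binary file object as well as a path.
        return joblib.load(f)
```

The package's `__init__.py` could then expose the loaded pipeline, so that `from <package name> import <pipeline name>` works as described.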

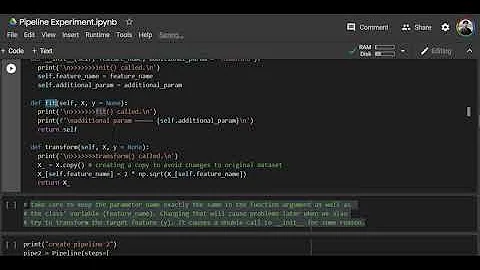

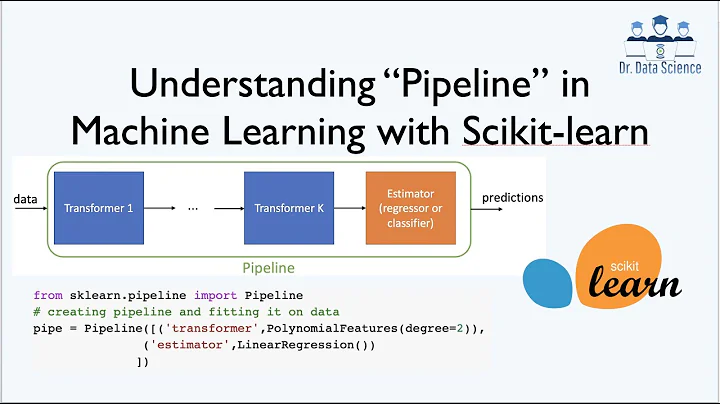


spiral

Updated on September 15, 2022


I am trying to pickle a scikit-learn machine-learning model and load it in another project. The model is wrapped in a pipeline that does feature encoding, scaling, etc. The problem starts when I want to use self-written transformers in the pipeline for more advanced tasks.

Let's say I have 2 projects:

- train_project: it has the custom transformers in src.feature_extraction.transformers.py

- use_project: it has other things in `src`, or has no `src` directory at all

If I save the pipeline with `joblib.dump()` in "train_project" and then load it with `joblib.load()` in "use_project", it cannot find a module such as `src.feature_extraction.transformers` and throws an exception:

ModuleNotFoundError: No module named 'src.feature_extraction'

I should also add that my intention from the beginning was to simplify usage of the model, so that a programmer can load it like any other model, pass very simple, human-readable features, and have all the "magic" preprocessing of features for the actual model (e.g. gradient boosting) happen inside.

I thought of creating a `/dependencies/xxx_model/` directory in the root of both projects and storing all needed classes and functions there (copying code from "train_project" to "use_project"), so that the project structures match and the transformers can be loaded. I find this solution extremely inelegant, because it would dictate the structure of any project where the model is used.

I also thought of just recreating the pipeline and all transformers inside "use_project" and somehow loading the fitted values of the transformers from "train_project".

The best possible solution would be if the dumped file contained all the needed information and required no dependencies, and I am honestly shocked that sklearn Pipelines seem not to offer that. What's the point of fitting a pipeline if I cannot load the fitted object later? Yes, it would work if I used only sklearn classes and did not create custom ones, but the built-in ones do not provide all the functionality I need.

Example code:

train_project

src.feature_extraction.transformers.py

```python
from sklearn.base import TransformerMixin


class FilterOutBigValuesTransformer(TransformerMixin):
    def __init__(self):
        pass

    def fit(self, X, y=None):
        self.biggest_value = X.c1.max()
        return self

    def transform(self, X):
        return X.loc[X.c1 <= self.biggest_value]
```

train_project

main.py

```python
import joblib  # sklearn.externals.joblib was removed in scikit-learn 0.23
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import MinMaxScaler

from src.feature_extraction.transformers import FilterOutBigValuesTransformer

pipeline = Pipeline([
    ('filter', FilterOutBigValuesTransformer()),
    ('encode', MinMaxScaler()),
])
X = load_some_pandas_dataframe()  # placeholder for the actual data loading
pipeline.fit(X)
joblib.dump(pipeline, 'path.x')
```

use_project

main.py

```python
import joblib

# Fails with: ModuleNotFoundError: No module named 'src.feature_extraction'
pipeline = joblib.load('path.x')
```

The expected result is that the pipeline loads correctly and its `transform` method can be used.

The actual result is an exception when loading the file.
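The exception follows from how pickle (and therefore joblib) stores custom classes: by reference, as module path plus class name, not by value. A small stdlib-only sketch of that behaviour, using a runtime-created module with the hypothetical name `demo_transformers` in place of `src.feature_extraction.transformers`:

```python
import pickle
import sys
import types

# Stand-in for train_project's src.feature_extraction.transformers: a module
# created at runtime under the hypothetical name "demo_transformers".
demo_module = types.ModuleType("demo_transformers")
sys.modules["demo_transformers"] = demo_module


class FilterOutBigValues:
    """Dummy class; only its import path matters here."""


# Pretend the class was defined inside demo_transformers, as in train_project.
FilterOutBigValues.__module__ = "demo_transformers"
demo_module.FilterOutBigValues = FilterOutBigValues

# pickle stores the reference "demo_transformers.FilterOutBigValues" plus the
# instance's attributes, not the class's code.
payload = pickle.dumps(FilterOutBigValues())

# Simulate use_project, where that module does not exist.
del sys.modules["demo_transformers"]
try:
    pickle.loads(payload)
    load_error = None
except ModuleNotFoundError as exc:
    load_error = exc
print(load_error)  # No module named 'demo_transformers'

# Once the module is importable again under the same name, loading works.
sys.modules["demo_transformers"] = demo_module
restored = pickle.loads(payload)
print(type(restored).__name__)  # FilterOutBigValues
```

This is why each answer above works around the import path rather than the file format: Solution 1 keeps the module importable under the same name, Solution 3 makes it importable by installing it, and Solution 2 avoids pickling the custom classes altogether.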

iratzhash: I have the same question, so I will share what I've tried so far: interchanging joblib and pickle, and re-importing my custom FeatureUnion subclass. Please post here if you figure a way out.