How to use AWS S3 CLI to dump files to stdout in BASH?

Solution 1

The goal is to dump the contents of all of the file objects to stdout.

You can accomplish this by passing - as the destination of the aws s3 cp command.

For example: $ aws s3 cp s3://mybucket/stream.txt -

Is this something like what you're trying to do?

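#!/bin/bash

BUCKET=YOUR-BUCKET-NAME

# List every key under the prefix, one per line, so keys containing spaces survive.

aws s3api list-objects --bucket "$BUCKET" --prefix bucket/path/to/files/ | jq -r '.Contents[].Key' | while IFS= read -r key

do

echo "$key"

# Stream the object to stdout and hash it; stdin is redirected so the key list is not consumed.

aws s3 cp "s3://$BUCKET/$key" - </dev/null | md5sum

done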
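To get closer to the s3cat command the question asks for, the loop above can be wrapped in a function. This is only a rough sketch: s3cat is a hypothetical name, it assumes an AWS CLI version that supports - as the destination, and it assumes the prefix matches at least one object (an empty listing is not handled). It lists keys with the CLI's own --query option instead of jq, printing one key per line.

```bash
#!/bin/bash

# s3cat: hypothetical helper that prints every object under an S3 prefix to stdout.
# Usage: s3cat s3://bucket/path/to/files/
s3cat() {
    local uri=${1#s3://}          # strip the scheme, leaving bucket/path/to/files/
    local bucket=${uri%%/*}       # everything before the first slash
    local prefix=""
    if [[ $uri == */* ]]; then
        prefix=${uri#*/}          # everything after the first slash
    fi

    # 'Contents[].[Key]' with text output prints one key per line.
    aws s3api list-objects-v2 --bucket "$bucket" --prefix "$prefix" \
        --query 'Contents[].[Key]' --output text |
    while IFS= read -r key; do
        # Stream each object; stdin is redirected so the key list is not consumed.
        aws s3 cp "s3://$bucket/$key" - </dev/null
    done
}
```

You would then call it like s3cat s3://YOUR-BUCKET-NAME/path/to/files/ | grep something. Note that this matches by prefix, not by shell glob, so the trailing * from the question is not needed.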

Solution 2

If you are using a version of the AWS CLI that doesn't support copying to "-" you can also use /dev/stdout:

$ aws s3 cp --quiet s3://mybucket/stream.txt /dev/stdout

You also may want the --quiet flag to prevent a summary line like the following from being appended to your output:

download: s3://mybucket/stream.txt to ../../dev/stdout

Solution 3

You can try s3streamcat, which also supports the bzip, gzip and xz formats.

Install with

sudo pip install s3streamcat

Usage:

s3streamcat s3://bucketname/dir/file_path

s3streamcat s3://bucketname/dir/file_path | more

s3streamcat s3://bucketname/dir/file_path | grep something
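If you would rather not install an extra tool, something similar can be approximated with the plain AWS CLI by piping the streamed object through a decompressor. The sketch below is not how s3streamcat works internally; s3zcat is a hypothetical name, and picking the decompressor from the file extension is an assumption made here for illustration. It also assumes an AWS CLI version that accepts - as the destination.

```bash
#!/bin/bash

# s3zcat: hypothetical helper that streams one S3 object to stdout,
# decompressing it based on the file extension (an assumption of this sketch).
# Usage: s3zcat s3://bucketname/dir/file_path.gz
s3zcat() {
    local uri=$1
    local decompress=(cat)        # default: pass the bytes through unchanged

    case $uri in
        *.gz)  decompress=(gzip -dc) ;;
        *.bz2) decompress=(bzip2 -dc) ;;
        *.xz)  decompress=(xz -dc) ;;
    esac

    # Stream the object and decompress on the fly; nothing is written to disk.
    aws s3 cp "$uri" - | "${decompress[@]}"
}
```

It pipes like any other command, e.g. s3zcat s3://bucketname/dir/file_path.gz | grep something.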

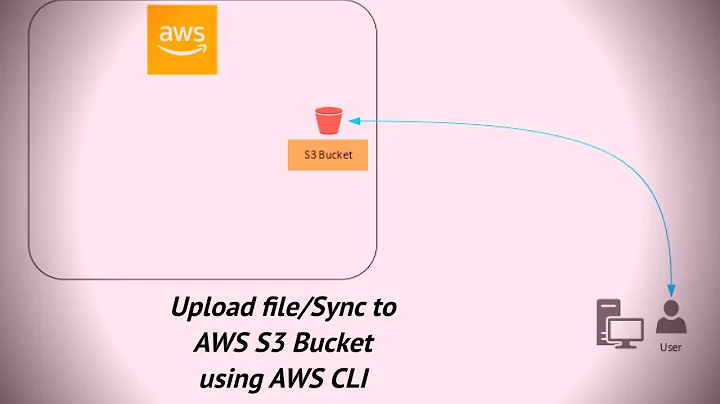

Related videos on Youtube

Comments

-

Neil C. Obremski over 3 years

I'm starting a bash script which will take a path in S3 (as specified to the ls command) and dump the contents of all of the file objects to stdout. Essentially I'd like to replicate cat /path/to/files/* except for S3, e.g. s3cat '/bucket/path/to/files/*'. My first inclination looking at the options is to use the cp command to a temporary file and then cat that. Has anyone tried this or similar, or is there already a command I'm not finding which does it?

-

Misunderstood about 9 years

I use PHP and the Services_Amazon_S3 class to do similar things.

-

Antonio Barbuzzi almost 9 years

Note, however, that '-' as a placeholder for stdout does not work in all versions of awscli. For example, version 1.2.9, which comes with Ubuntu LTS 14.04.2, doesn't support it.

-

Kode Charlie over 8 years

Ditto that. I'm on Ubuntu 12.x, and it does not work in my instance of bash.

-

Mahendar Patel over 8 years

The above answer says that you can use 'cp' with '-' as the second file argument to make it output the file to stdout.

-

Eamorr almost 8 years

The problem with this is that you can't get a specific version of the file.

-

MichaelChirico over 5 years

Not working on macOS High Sierra 10.13.6 either (aws --version: aws-cli/1.15.40 Python/3.6.5 Darwin/17.7.0 botocore/1.10.40)

-

Khoa over 5 years

This answer also has the advantage that the file content is streamed to your terminal rather than copied as a whole. See more at loige.co/aws-command-line-s3-content-from-stdin-or-to-stdout/…