Incredibly low KVM disk performance (qcow2 disk files + virtio)

Solution 1

Well, yeah, qcow2 files aren't designed for blazingly fast performance. You'll have much better luck with raw partitions (or, preferably, LVs).

Solution 2

How to achieve top performance with QCOW2:

qemu-img create -f qcow2 -o preallocation=metadata,compat=1.1,lazy_refcounts=on imageXYZ <size>

The most important one is preallocation, which gives a nice boost, according to the qcow2 developers. It is almost on par with LVM now! Note that this is usually enabled in modern (Fedora 25+) Linux distros.
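As a rough sketch of how the command above might be used: the helper below only prints the command line instead of running it, so it can be inspected first. The function name qcow2_create_cmd, the image path and the 20G size are placeholders made up for illustration; they do not come from the answer.

```shell
#!/bin/sh
# qcow2_create_cmd IMAGE SIZE
# Hypothetical dry-run helper: prints (does not execute) a qemu-img command
# line that uses the creation options quoted in the answer above.
qcow2_create_cmd() {
    image=$1
    size=$2
    printf 'qemu-img create -f qcow2 -o %s %s %s\n' \
        'preallocation=metadata,compat=1.1,lazy_refcounts=on' "$image" "$size"
}

# Placeholder path and size; adjust before running the printed command.
qcow2_create_cmd /tmp/example.qcow2 20G

# After actually creating the image, its format details can be inspected with:
#   qemu-img info /tmp/example.qcow2
```

Printing first and running by hand keeps the sketch harmless on a host that already has images in place.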

Also, you can use the unsafe cache mode if this is not a production instance (this is dangerous and not recommended; it is only suitable for testing):

<driver name='qemu' cache='unsafe' />

Some users report that this configuration beats the LVM/unsafe configuration in some tests.

All of these parameters require QEMU 1.5 or later. Again, most modern distros ship it.

Solution 3

I achieved great results for a qcow2 image with this setting:

<driver name='qemu' type='raw' cache='none' io='native'/>

which bypasses the host page cache (cache='none') and enables native AIO (asynchronous I/O). Running your dd command gave me 177MB/s on the host and 155MB/s on the guest. The image is placed on the same LVM volume where the host's test was done.

My qemu-kvm version is 1.0+noroms-0ubuntu14.8 and kernel 3.2.0-41-generic from stock Ubuntu 12.04.2 LTS.

Solution 4

I experienced exactly the same issue. Inside a RHEL 7 virtual machine I run the LIO iSCSI target software, to which other machines connect. As the underlying storage (backstore) for my iSCSI LUNs I initially used LVM, but then switched to file-based images.

Long story short: when the backing storage was attached through the virtio_blk storage controller (vda, vdb, etc.), the performance seen by an iSCSI client connecting to the iSCSI target was, in my environment, about 20 IOPS, with throughput (depending on I/O size) of about 2-3 MiB/s. After I changed the virtual disk controller in the virtual machine to SCSI, my iSCSI clients get 1000+ IOPS and 100+ MiB/s of throughput.

<disk type='file' device='disk'>
  <driver name='qemu' type='qcow2' cache='none' io='native'/>
  <source file='/var/lib/libvirt/images/station1/station1-iscsi1-lun.img'/>
  <target dev='sda' bus='scsi'/>
  <address type='drive' controller='0' bus='0' target='0' unit='0'/>
</disk>

Solution 5

If you're running your VMs with a single command, you can pass arguments like these:

kvm -drive file=/path_to.qcow2,if=virtio,cache=off <...>

It got me from 3MB/s to 70MB/s

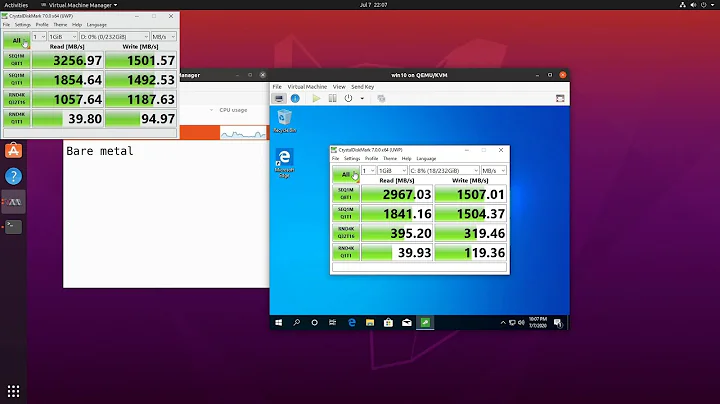

Related videos on Youtube

El Yobo

Updated on September 18, 2022

-

El Yobo almost 2 years

I'm having some serious disk performance problems while setting up a KVM guest. Using a simple dd test, the partition on the host that the qcow2 images reside on (a mirrored RAID array) writes at over 120MB/s, while my guest gets writes ranging from 0.5 to 3MB/s.

- The guest is configured with a couple of CPUs and 4G of RAM and isn't currently running anything else; it's a completely minimal install at the moment.

- Performance is tested using: time dd if=/dev/zero of=/tmp/test oflag=direct bs=64k count=16000

- The guest is configured to use virtio, but this doesn't appear to make a difference to the performance.

- The host partitions are 4kb aligned (and performance is fine on the host, anyway).

- Using writeback caching on the disks increases the reported performance massively, but I'd prefer not to use it; even without it performance should be far better than this.

- Host and guest are both running Ubuntu 12.04 LTS, which comes with qemu-kvm 1.0+noroms-0ubuntu13 and libvirt 0.9.8-2ubuntu17.1.

- Host has the deadline IO scheduler enabled and the guest has noop.
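For checking the scheduler settings mentioned in the last point, here is a small sketch. The kernel lists the available schedulers in /sys/block/<device>/queue/scheduler and marks the active one with square brackets. The helper name active_scheduler and the device name sda are assumptions for illustration only.

```shell
#!/bin/sh
# active_scheduler LINE
# Hypothetical helper: extracts the bracketed (active) entry from a
# scheduler listing such as "noop [deadline] cfq".
active_scheduler() {
    printf '%s\n' "$1" | sed -n 's/.*\[\([^]]*\)].*/\1/p'
}

# Sample input in the same shape as the sysfs file.
active_scheduler "noop [deadline] cfq"

# On a live system (assuming the disk is sda):
#   active_scheduler "$(cat /sys/block/sda/queue/scheduler)"
```

Running the same check on both host and guest shows whether each side is using the scheduler you intended.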

There seem to be plenty of guides out there tweaking kvm performance, and I'll get there eventually, but it seems like I should be getting vastly better performance than this at this point in time so it seems like something is already very wrong.

Update 1

And suddenly when I go back and test now, it's 26.6 MB/s; this is more like what I expected w/qcow2. I'll leave the question up in case anyone has any ideas as to what might have been the problem (and in case it mysteriously returns again).

Update 2

I stopped worrying about qcow2 performance and just cut over to LVM on top of RAID1 with raw images, still using virtio but setting cache='none' and io='native' on the disk drive. Write performance is now appx. 135MB/s using the same basic test as above, so there doesn't seem to be much point in figuring out what the problem was when it can be so easily worked around entirely.
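To put the throughput figures in this question in perspective, here is a rough sketch of the arithmetic behind the dd test. It assumes the rates are decimal megabytes per second (1 MB = 1,000,000 bytes), which is how GNU dd reports them; the helper name dd_seconds is made up for illustration.

```shell
#!/bin/sh
# dd_seconds BS_KIB COUNT MB_PER_S
# Hypothetical helper: estimates how long a dd run of COUNT blocks of
# BS_KIB KiB each takes at a sustained rate of MB_PER_S (decimal MB/s).
dd_seconds() {
    awk -v bs="$1" -v count="$2" -v rate="$3" \
        'BEGIN { printf "%.1f\n", (bs * 1024 * count) / (rate * 1000000) }'
}

# bs=64k count=16000 writes 64 * 1024 * 16000 = 1048576000 bytes (1000 MiB).
dd_seconds 64 16000 3      # slowest guest figure quoted in the question
dd_seconds 64 16000 135    # raw image on LVM, from Update 2
```

At 3MB/s the test needs almost six minutes, while at 135MB/s it finishes in under eight seconds.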

-

David Corsalini almost 12 years

You didn't mention the distribution and software versions in use.

-

El Yobo almost 12 years

Added some info on versions.

-

David Corsalini almost 12 years

Ah, as expected, Ubuntu... any chance you can reproduce this on Fedora?

-

El Yobo almost 12 years

The server is in Germany and I'm currently in Mexico, so that could be a little tricky. And if it did suddenly work... I still wouldn't want to have to deal with a Fedora server ;) I have seen a few comments suggesting that Debian/Ubuntu systems did have more issues than Fedora/CentOS for KVM as much of the development work was done there.

-

David Corsalini almost 12 years

My point exactly. And in any case, if you are after a server-grade OS you need RHEL, not Ubuntu.

-

El Yobo almost 12 years

It's probably a whole new question and an invitation to a flame war, but I'd be interested to know why you think that. My experience with RHEL and CentOS in the past has been negative, but mainly due to their limited repositories and out of date packages. Having used Debian for more than 10 years, I'd generally been happy with it as a server OS, but I thought I'd play around with Ubuntu this time.

-

Michael Hampton almost 12 years

And Debian doesn't have out of date packages?! Yep, you've invited a flame war all right. :)

-

El Yobo almost 12 years

Oh, Debian has definitely had their problems (three years between stable releases at one time, or thereabouts), so no flame war on that front :) But at least you could use the official backports to get reasonably recent versions on a largely stable system, and they've picked up their game since then. But that is one reason to use Ubuntu over Debian, more packages, more up to date.

-

El Yobo almost 12 years

Anyhow, it's a massive digression, I shouldn't have asked. If anyone cares to comment on serverfault.com/questions/91917/… then perhaps that would be a more appropriate place to discuss.

-

David Corsalini almost 12 years

No flame war meant, really. I'm just seeing lots of these very hard to nail issues with the way virtualization works on Ubuntu, compared to RHEL and Fedora. Usually, it's simple enough to reinstall another distro and see if the issue reproduces.

-

El Yobo almost 12 years

Yeah, I know :) Added new notes anyway, switched to raw on lvm as suggested and performance is where it should be.

-

gertas about 11 years

Your Update 2 should be added as an answer. I just did that because the accepted answer is not the best for those who want to keep image files.

-

El Yobo almost 11 years

I'm not sure what you mean, sorry; my Update 2 is to do what was suggested in the accepted answer (use raw partitions with LVM).

-

U.Z.A.I.R over 5 years

Can you also provide data around write latency for small writes? ioping -W /

-

El Yobo almost 12 years

Obviously, but they're also not meant to be quite as crap as the numbers I'm getting either.

-

womble almost 12 years

[citation needed], as the wikipedians say. Who says they're not meant to be that crap?

-

El Yobo almost 12 years

Most examples out there show similar performance with qcow2, which seems to be a significant improvement over the older version. The KVM site itself has some numbers up at linux-kvm.org/page/Qcow2 which show comparable times for a range of cases.

-

womble almost 12 years

18:35 (qcow2) vs 8:48 (raw) is "comparable times"?

-

El Yobo almost 12 years

That's the worst rate on the page, but even if you take that as the example and say that raw is appx. 2.5 times faster than qcow2... multiplying my 3MB/s by 2.5 gets you 7.5MB/s with raw and again, that's not exactly something you'd be happy with.

-

El Yobo almost 12 years

I've switched them over to LVM backed raw images on top of RAID1, set the io scheduler to noop on the guest and deadline on the host and it now writes at 138 MB/s. I still don't know what it was that caused the qcow2 to have the 3MB/s speeds, but clearly it can be sidestepped by using raw, so thanks for pushing me in that direction.

-

Alex almost 11 years

You set a qcow2 image type to "raw"?

-

gertas almost 11 years

I guess I copied an older entry. I suppose the speed benefits should be the same for type='qcow2'; could you check that before I edit? I no longer have access to such a configuration: I migrated to LXC with mount bind directories to achieve real native speeds in guests.

-

lzap over 10 years

That is not quite true: the latest patches in qemu speed up qcow2 a lot! We are almost on par.

-

shodanshok over 9 years

It is not a good idea to use cache=unsafe: an unexpected host shutdown can wreak havoc on the entire guest filesystem. It is much better to use cache=writeback: similar performance, but much better reliability.

-

lzap about 9 years

As I've said: if this is not a production instance (good for testing).

-

shodanshok about 9 years

Fair enough. I missed it ;)

-

U.Z.A.I.R over 5 years

This answer is not correct. The qcow2 format does not lead to this level of inefficiency! There are other issues.

-

Joachim Wagner over 2 years

@Federico The question is from July 2012. While Ubuntu 12.04 LTS was released 6 days after the patches for support of qcow2 version 3 had been committed to the qemu repository, it is highly unlikely that these changes made it into the release. Version 2 was known to have poor performance: wiki.qemu.org/Features/Qcow3
