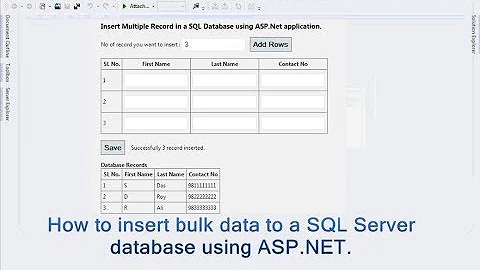

Insert 2 million rows into SQL Server quickly

Solution 1

You can try the SqlBulkCopy class.

It lets you efficiently bulk load a SQL Server table with data from another source.

There is a cool blog post about how you can use it.

Solution 2

I think it's better to first read the data from the text file into a DataSet (step 1).

Then try SqlBulkCopy - Bulk Insert into SQL from C# App:

```csharp
// connect to SQL
using (SqlConnection connection = new SqlConnection(connString))
{
    // make sure to enable triggers
    // more on triggers in next post
    SqlBulkCopy bulkCopy = new SqlBulkCopy(
        connection,
        SqlBulkCopyOptions.TableLock |
        SqlBulkCopyOptions.FireTriggers |
        SqlBulkCopyOptions.UseInternalTransaction,
        null
    );

    // set the destination table name
    bulkCopy.DestinationTableName = this.tableName;
    connection.Open();

    // write the data in the "dataTable"
    bulkCopy.WriteToServer(dataTable);
    connection.Close();
}

// reset
this.dataTable.Clear();
```

or

after doing step 1 above:

- Create XML from the DataSet

- Pass the XML to the database and do a bulk insert

You can check this article for details: Bulk Insertion of Data Using C# DataTable and SQL server OpenXML function

But this has not been tested with 2 million records. It will work, but it will consume memory on the machine, since you have to load all 2 million records before inserting them.
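One way to reduce the memory concern above is to load the file in bounded batches instead of all at once. The following is only a minimal sketch under stated assumptions: `BatchedLoader` and `ReadBatches` are hypothetical names, every column is treated as a string, and each line is assumed to contain exactly one field per column.

```csharp
using System;
using System.Collections.Generic;
using System.Data;

public static class BatchedLoader
{
    // Hypothetical helper: lazily turns delimited text lines into DataTables
    // holding at most batchSize rows each, so only one batch is in memory at a time.
    // Assumption: all columns are strings and each line has columnNames.Length fields.
    public static IEnumerable<DataTable> ReadBatches(
        IEnumerable<string> lines, char delimiter, string[] columnNames, int batchSize)
    {
        if (batchSize <= 0)
            throw new ArgumentOutOfRangeException(nameof(batchSize));

        // Build the schema once; Clone() copies the columns but not the rows.
        var schema = new DataTable();
        foreach (string name in columnNames)
            schema.Columns.Add(name, typeof(string));

        DataTable batch = schema.Clone();
        foreach (string line in lines)
        {
            string[] fields = line.Split(delimiter);
            DataRow row = batch.NewRow();
            for (int i = 0; i < columnNames.Length; i++)
                row[i] = fields[i];
            batch.Rows.Add(row);

            if (batch.Rows.Count == batchSize)
            {
                yield return batch;      // hand the full batch to the caller
                batch = schema.Clone();  // start a fresh, empty batch
            }
        }

        // Emit the final, partially filled batch, if any.
        if (batch.Rows.Count > 0)
            yield return batch;
    }
}
```

If the lines come from `File.ReadLines(path)`, which reads lazily, each yielded table could be passed to `bulkCopy.WriteToServer(batch)` before the next one is built, so the whole file never has to sit in a single DataSet.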

Solution 3

Re the solution for SqlBulkCopy:

I used a StreamReader to read and process the text file. The result was a list of my objects.

I created a class that takes a DataTable or a List<T> and a buffer size (CommitBatchSize). It converts the list to a DataTable using an extension method (in the second class).

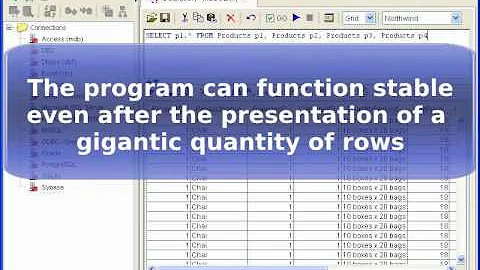

It works very fast. On my PC, I am able to insert more than 10 million complicated records in less than 10 seconds.

Here is the class:

```csharp
using System;
using System.Collections;
using System.Collections.Generic;
using System.ComponentModel;
using System.Data;
using System.Data.SqlClient;
using System.Linq;
using System.Text;
using System.Threading.Tasks;

namespace DAL
{
    public class BulkUploadToSql<T>
    {
        public IList<T> InternalStore { get; set; }
        public string TableName { get; set; }
        public int CommitBatchSize { get; set; } = 1000;
        public string ConnectionString { get; set; }

        public void Commit()
        {
            if (InternalStore.Count > 0)
            {
                DataTable dt;
                int numberOfPages = (InternalStore.Count / CommitBatchSize) + (InternalStore.Count % CommitBatchSize == 0 ? 0 : 1);
                for (int pageIndex = 0; pageIndex < numberOfPages; pageIndex++)
                {
                    dt = InternalStore.Skip(pageIndex * CommitBatchSize).Take(CommitBatchSize).ToDataTable();
                    BulkInsert(dt);
                }
            }
        }

        public void BulkInsert(DataTable dt)
        {
            using (SqlConnection connection = new SqlConnection(ConnectionString))
            {
                // make sure to enable triggers
                // more on triggers in next post
                SqlBulkCopy bulkCopy =
                    new SqlBulkCopy
                    (
                        connection,
                        SqlBulkCopyOptions.TableLock |
                        SqlBulkCopyOptions.FireTriggers |
                        SqlBulkCopyOptions.UseInternalTransaction,
                        null
                    );

                // set the destination table name
                bulkCopy.DestinationTableName = TableName;
                connection.Open();

                // write the data in the "dataTable"
                bulkCopy.WriteToServer(dt);
                connection.Close();
            }
            // reset
            //this.dataTable.Clear();
        }
    }

    public static class BulkUploadToSqlHelper
    {
        public static DataTable ToDataTable<T>(this IEnumerable<T> data)
        {
            PropertyDescriptorCollection properties =
                TypeDescriptor.GetProperties(typeof(T));
            DataTable table = new DataTable();
            foreach (PropertyDescriptor prop in properties)
                table.Columns.Add(prop.Name, Nullable.GetUnderlyingType(prop.PropertyType) ?? prop.PropertyType);
            foreach (T item in data)
            {
                DataRow row = table.NewRow();
                foreach (PropertyDescriptor prop in properties)
                    row[prop.Name] = prop.GetValue(item) ?? DBNull.Value;
                table.Rows.Add(row);
            }
            return table;
        }
    }
}
```

Here is an example when I want to insert a List of my custom object List<PuckDetection> (ListDetections):

```csharp
var objBulk = new BulkUploadToSql<PuckDetection>()
{
    InternalStore = ListDetections,
    TableName = "PuckDetections",
    CommitBatchSize = 1000,
    ConnectionString = "ENTER YOUR CONNECTION STRING"
};
objBulk.Commit();
```

The BulkInsert method can be modified to add column mappings if required, for example when you have an identity key as the first column. (This assumes that the column names in the DataTable are the same as in the database.)

```csharp
// ADD COLUMN MAPPING
foreach (DataColumn col in dt.Columns)
{
    bulkCopy.ColumnMappings.Add(col.ColumnName, col.ColumnName);
}
```
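If some columns should be left out of the mapping, for instance an identity key that the database is meant to generate itself, the loop above could be fed a filtered list of names. This is a rough sketch only: `ColumnMappingHelper` and `ColumnsToMap` are hypothetical names, and it keeps the assumption that DataTable and database column names match.

```csharp
using System;
using System.Collections.Generic;
using System.Data;
using System.Linq;

public static class ColumnMappingHelper
{
    // Hypothetical helper: returns the DataTable column names that should be mapped,
    // skipping any names listed in excludedColumns (compared case-insensitively).
    public static List<string> ColumnsToMap(DataTable dt, params string[] excludedColumns)
    {
        var excluded = new HashSet<string>(excludedColumns, StringComparer.OrdinalIgnoreCase);
        return dt.Columns.Cast<DataColumn>()
                 .Select(c => c.ColumnName)
                 .Where(name => !excluded.Contains(name))
                 .ToList();
    }
}
```

Each returned name would then be passed to `bulkCopy.ColumnMappings.Add(name, name)` in place of iterating over every column in `dt.Columns`.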

Solution 4

I use the bcp (Bulk Copy Program) utility. I load about 1.5 million text records each month. Each text record is 800 characters wide. On my server, it takes about 30 seconds to add the 1.5 million text records to a SQL Server table.

The instructions for bcp are at http://msdn.microsoft.com/en-us/library/ms162802.aspx

Solution 5

I ran into this scenario recently (well over 7 million rows) and ended up using sqlcmd via PowerShell, after parsing the raw data into SQL INSERT statements, in segments of 5,000 at a time. In my experience SQL Server couldn't handle 7 million lines in one lump job, or even 500,000 lines for that matter, unless the script was broken down into smaller 5K pieces; you can then run each 5K script one after the other. I took this route because I needed to leverage the new SEQUENCE feature in SQL Server 2012 Enterprise, and I couldn't find a programmatic way to insert seven million rows of data quickly and efficiently with it.

Secondly, one of the things to look out for when inserting a million rows or more in one sitting is the CPU and memory consumption (mostly memory) during the insert process. SQL Server will eat up memory and CPU on a job of this magnitude without releasing them. Needless to say, if you don't have enough processing power or memory on your server, you can crash it pretty easily in a short time (which I found out the hard way). If you get to the point where your memory consumption is over 70-75%, just reboot the server and the resources will be released back to normal.

I had to run a bunch of trial-and-error tests to see what the limits of my server were (given the limited CPU and memory resources I had to work with) before I could settle on a final execution plan. I would suggest you do the same in a test environment before rolling this out into production.

Wadhawan Vishal

15 years of experience in Microsoft technology, team handling, costing, budgeting, Scrum Master

Updated on May 08, 2022

Comments

-

Wadhawan Vishal almost 2 years: I have to insert about 2 million rows from a text file.

While inserting, I also have to create some master tables.

What is the best and fastest way to insert such a large set of data into SQL Server?

-

David Carvalho over 11 years: How long did 7M rows take? I have about 30M rows to insert. Right now I'm pushing them through a stored procedure and a DataTable.

-

Techie Joe over 11 years: It took a good five to six hours running the small batches concurrently. Keep in mind that I used plain T-SQL INSERT statements, as I was leveraging the new SEQUENCE feature in SQL Server 2012 and couldn't find information on how to automate this process outside of T-SQL.

-

Razort4x about 9 years: I know this is quite late, but for about 2 million rows (or more), if there are enough columns (25+), it is almost inevitable that the code will throw an OutOfMemoryException at some point when filling the DataSet/DataTable.

-

Jason Foglia over 8 years: You can set up a buffer to avoid out-of-memory exceptions. For a text file I used File.ReadLines(file).Skip(X).Take(100000).ToList(). After every 100k I reset and move on to the next 100k. It works well and is very quick.

-

Morzel about 7 years: I would recommend the bcp utility too. I know of nothing faster.

-

nam about 5 years: Please also note (as mentioned in the SqlBulkCopy link as well) that if the source and destination tables are in the same SQL Server instance, it is easier and faster to use a Transact-SQL INSERT … SELECT statement to copy the data.

-

Andre Soares almost 5 years: Be careful! This can be exploited with SQL injection.

-

Madhav Shenoy almost 5 years: What if the source data is from a SQL Server table? Let's say the table has 30 million rows; can we still use bulk copy? Wouldn't a simple INSERT INTO table1 SELECT * FROM table2 be faster?

-

Andrew Hill over 3 years: Downvoted for 1) not avoiding SQL injection attacks (see stackoverflow.com/questions/15537368/… )

-

eug100 almost 2 years: When I try to use your code I get the error: IEnumerable<T> does not contain a definition for ToDataTable.