QEMU with GPU pass through won't start

You're close.

Using both pci-stub and vfio-pci

It's okay to use pci-stub to reserve a PCI device (like your GPU) so that the host graphics driver (like nouveau or fglrx) doesn't grab it first. Once such a driver has claimed the device, it will not let go of it.

In fact, in my test, I needed to claim the PCI graphics card with pci-stub first because vfio-pci wouldn't do so on boot, which is one of the problems you experienced. While unbinding the device from one driver (pci-stub) and binding it to another (vfio-pci) may seem ugly to some, pci-stub and vfio-pci are a reliable tag team that gave me a working virtual machine with GPU passthrough. In my testing, neither one worked on its own.

Now, you need to release the PCI device from pci-stub and hand it to vfio-pci. This part of your script should be doing that already:

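```bash
configfile=/etc/vfio-pci1.cfg

# Move one PCI device (e.g. 0000:01:00.0) from its current driver to vfio-pci.
vfiobind() {
    dev="$1"
    vendor=$(cat /sys/bus/pci/devices/$dev/vendor)
    device=$(cat /sys/bus/pci/devices/$dev/device)
    # Release the device from whichever driver holds it (here: pci-stub).
    if [ -e /sys/bus/pci/devices/$dev/driver ]; then
        echo $dev > /sys/bus/pci/devices/$dev/driver/unbind
    fi
    # Register the vendor:device ID with vfio-pci, which then claims the
    # now-unbound device.
    echo $vendor $device > /sys/bus/pci/drivers/vfio-pci/new_id
}

modprobe vfio-pci

# One PCI address per line; lines starting with # are skipped.
cat $configfile | while read line; do
    echo $line | grep ^# >/dev/null 2>&1 && continue
    vfiobind $line
done
```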
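An alternative that the script above does not use: since Linux 3.16, each PCI device has a driver_override attribute in sysfs. Setting it to vfio-pci and asking the PCI core to re-probe the device binds only that one device, whereas new_id lets vfio-pci claim any unbound device with the same vendor:device ID. This is a minimal sketch, assuming vfio-pci is already loaded (modprobe vfio-pci) and the script runs as root; vfio_override_bind and SYSFS_ROOT are hypothetical names chosen for illustration.

```bash
#!/usr/bin/env bash
# Hypothetical helper. SYSFS_ROOT defaults to /sys and exists only so the
# function can be pointed at a fake tree for testing.
# Requires a kernel with driver_override support (3.16 or later).
vfio_override_bind() {
    local dev="$1"
    local sys="${SYSFS_ROOT:-/sys}"
    local devdir="$sys/bus/pci/devices/$dev"

    [ -d "$devdir" ] || { echo "no such device: $dev" >&2; return 1; }

    # From now on, only vfio-pci may bind to this particular device.
    echo vfio-pci > "$devdir/driver_override"

    # Release the device from its current driver (e.g. pci-stub), if any.
    if [ -e "$devdir/driver" ]; then
        echo "$dev" > "$devdir/driver/unbind"
    fi

    # Re-probe the device; the override makes the PCI core pick vfio-pci.
    echo "$dev" > "$sys/bus/pci/drivers_probe"
}

# Usage: vfio_override_bind 0000:01:00.0
```

The design trade-off is scope: new_id is keyed on the ID pair, so a second identical card would also be grabbed, while driver_override is keyed on the PCI address.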

Caveat: "vfiobind" is only needed once

As noted in this comment, the switchover from pci-stub to vfio-pci only needs to be done once after booting. However, it is harmless to run the "vfiobind" function multiple times, unless a virtual machine is currently using the affected PCI device.

If a virtual machine is using the device, the unbind operation will block, leaving the process stuck in uninterruptible sleep ("D state"). Shutting down or killing the virtual machine should let the unbind complete.
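To avoid the blocked unbind, a script can first check whether anything still holds the device open. A minimal sketch, assuming the VM process (e.g. QEMU) keeps the device's VFIO group open as /dev/vfio/<group number>, and that the script runs as root so it can read other processes' file descriptors. vfio_group_of, vfio_group_in_use, SYSFS_ROOT and PROC_ROOT are hypothetical names.

```bash
#!/usr/bin/env bash
# Hypothetical helpers. SYSFS_ROOT and PROC_ROOT default to /sys and /proc
# and exist only so the functions can be pointed at a fake tree for testing.

# Print the IOMMU group number of a PCI device such as 0000:01:00.0.
vfio_group_of() {
    local link
    link=$(readlink "${SYSFS_ROOT:-/sys}/bus/pci/devices/$1/iommu_group") || return 1
    echo "${link##*/}"
}

# Return 0 if some process has /dev/vfio/<group> open, 1 if none does,
# and 2 if the device's group cannot be determined.
vfio_group_in_use() {
    local group fd
    group=$(vfio_group_of "$1") || return 2
    for fd in "${PROC_ROOT:-/proc}"/[0-9]*/fd/*; do
        # An unmatched glob stays literal; readlink then fails and prints nothing.
        if [ "$(readlink "$fd" 2>/dev/null)" = "/dev/vfio/$group" ]; then
            return 0
        fi
    done
    return 1
}

# Usage inside vfiobind, before the unbind step:
#   vfio_group_in_use "$dev" && { echo "$dev is in use, skipping" >&2; return 1; }
```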

You can avoid this unnecessary extra unbinding and rebinding by changing your vfiobind() function to read as follows:

```bash
vfiobind() {
    dev="$1"
    vendor=$(cat /sys/bus/pci/devices/$dev/vendor)
    device=$(cat /sys/bus/pci/devices/$dev/device)
    # Already bound to vfio-pci: skip this device. "return" (rather than
    # "continue") is what exits a function.
    if [ -e /sys/bus/pci/devices/$dev/driver/module/drivers/pci\:vfio-pci ]; then
        echo "Skipping $dev because it is already using the vfio-pci driver"
        return 0
    fi
    # Release the device from its current driver, if it has one.
    if [ -e /sys/bus/pci/devices/$dev/driver ]; then
        echo "Unbinding $dev"
        echo $dev > /sys/bus/pci/devices/$dev/driver/unbind
        echo "Unbound $dev"
    fi
    echo "Plugging $dev into vfio-pci"
    echo $vendor $device > /sys/bus/pci/drivers/vfio-pci/new_id
    echo "Plugged $dev into vfio-pci"
}
```
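The skip test in that function looks for vfio-pci inside the bound driver's module directory. A different check, sketched here as an assumption rather than a drop-in replacement, reads the basename of the device's own driver symlink, so it does not depend on the module directory layout. current_driver, already_vfio and SYSFS_ROOT are hypothetical names.

```bash
#!/usr/bin/env bash
# Hypothetical helpers; SYSFS_ROOT (default /sys) exists only for testing.

# Print the name of the driver bound to a PCI device, e.g. "pci-stub" or
# "vfio-pci". Returns 1 (and prints nothing) if no driver is bound.
current_driver() {
    local link
    link=$(readlink "${SYSFS_ROOT:-/sys}/bus/pci/devices/$1/driver") || return 1
    echo "${link##*/}"
}

# Return 0 if the device is already bound to vfio-pci.
already_vfio() {
    [ "$(current_driver "$1")" = vfio-pci ]
}

# Usage inside vfiobind:
#   if already_vfio "$dev"; then echo "Skipping $dev"; return 0; fi
```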

Check lspci -k for successful vfio-pci driver attachment

Verify that vfio-pci has taken over using lspci -k. This example is from my equivalent setup:

```
01:00.0 VGA compatible controller: NVIDIA Corporation GK104 [GeForce GTX 760] (rev a1)
    Subsystem: eVga.com. Corp. Device 3768
    Kernel driver in use: vfio-pci
01:00.1 Audio device: NVIDIA Corporation GK104 HDMI Audio Controller (rev a1)
    Subsystem: eVga.com. Corp. Device 3768
    Kernel driver in use: vfio-pci
```

If you don't see Kernel driver in use: vfio-pci, something went wrong with the part of your script that I pasted above.
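If you want the script itself to verify this, one option is to parse the lspci -k output. A minimal sketch: lspci_drivers reads lspci -k output on stdin and prints "<slot> <driver>" per device ("none" if there is no "Kernel driver in use:" line), and all_vfio fails if any of the given slots is not on vfio-pci. Both function names are hypothetical.

```bash
#!/usr/bin/env bash
# Device lines in lspci -k output start at column 0 with the slot;
# detail lines (Subsystem, Kernel driver in use, ...) are indented.
lspci_drivers() {
    awk '
        /^[0-9a-f]/ { if (slot != "") print slot, drv; slot = $1; drv = "none"; next }
        /Kernel driver in use:/ { drv = $NF }
        END { if (slot != "") print slot, drv }
    '
}

# Reads lspci -k output on stdin; returns 0 only if every slot given as an
# argument is driven by vfio-pci.
all_vfio() {
    local drivers slot
    drivers=$(lspci_drivers)
    for slot in "$@"; do
        if ! grep -qxF "$slot vfio-pci" <<<"$drivers"; then
            echo "$slot is not using vfio-pci" >&2
            return 1
        fi
    done
}

# Usage: lspci -k | all_vfio 01:00.0 01:00.1 || exit 1
```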

QEMU passthrough configuration

I struggled a bit with the black display.

In an earlier revision of your script, you specified:

```
-device vfio-pci,host=01:00.0,bus=root.1,addr=00.0,multifunction=on,x-vga=on \
-device vfio-pci,host=01:00.1,bus=root.1,addr=00.1 \
```

Try letting QEMU decide what virtual bus and address to use:

```
-device vfio-pci,host=01:00.0,multifunction=on,x-vga=on \
-device vfio-pci,host=01:00.1 \
```

You should also pass the -nographic and -vga none flags to qemu-system-x86_64. By default, QEMU exposes an emulated graphics card to the virtual machine, and the guest may send its display output to that emulated device instead of your intended physical NVIDIA card.

If you're still getting a blank display, try adding the -nodefaults flag as well, which excludes the default serial port, parallel port, virtual console, monitor device, VGA adapter, floppy device, and CD-ROM device.
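These flags can also be collected in a bash array, which removes the need for a trailing backslash on every line. This is a sketch, not a complete VM definition: qemu_passthrough_args and QEMU_ARGS are hypothetical names, 4096 MB of memory is a placeholder, cpu host,kvm=off is taken from the sample configuration below, and you still need to add your own disk and network options.

```bash
#!/usr/bin/env bash
# Hypothetical helper: fills the global array QEMU_ARGS with the display and
# passthrough options discussed above. Slot defaults match the question.
qemu_passthrough_args() {
    local gpu="${1:-01:00.0}" audio="${2:-01:00.1}"
    QEMU_ARGS=(-enable-kvm -m 4096 -cpu host,kvm=off)
    # No emulated display and no other default devices, so the passed-through
    # GPU is the only graphics adapter the guest sees.
    QEMU_ARGS+=(-nographic -vga none -nodefaults)
    # No bus=/addr=: let QEMU choose where the devices appear in the guest.
    QEMU_ARGS+=(-device "vfio-pci,host=$gpu,multifunction=on,x-vga=on")
    QEMU_ARGS+=(-device "vfio-pci,host=$audio")
}

# Usage (the disk path is a placeholder):
#   qemu_passthrough_args 01:00.0 01:00.1
#   qemu-system-x86_64 "${QEMU_ARGS[@]}" -drive file=/path/to/disk.img,format=raw
```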

Now, your qemu-system-x86_64 command should start your virtual machine with PCI devices 01:00.0 and 01:00.1 passed through, and the display connected to 01:00.0 should show something.

Reference / Sample Configuration

My test isn't identical to yours, but I was able to get working graphics passthrough and USB passthrough with this qemu-system-x86_64 command, after claiming all the relevant PCI devices from pci-stub with vfio-pci:

```bash
qemu-system-x86_64 \
    -enable-kvm \
    -name node51-Win10 \
    -S \
    -machine pc-i440fx-2.1,accel=kvm,usb=off \
    -cpu host,kvm=off \
    -m 16384 \
    -realtime mlock=off \
    -smp 8,sockets=8,cores=1,threads=1 \
    -uuid 5c4a3e8a-6e8e-449f-9361-29fcdc35358d \
    -nographic \
    -no-user-config \
    -nodefaults \
    -chardev socket,id=charmonitor,path=/var/lib/libvirt/qemu/node51-Win10.monitor,server,nowait \
    -mon chardev=charmonitor,id=monitor,mode=control \
    -rtc base=localtime,driftfix=slew \
    -global kvm-pit.lost_tick_policy=discard \
    -no-hpet \
    -no-shutdown \
    -global PIIX4_PM.disable_s3=0 \
    -global PIIX4_PM.disable_s4=0 \
    -boot strict=on \
    -device ich9-usb-ehci1,id=usb,bus=pci.0,addr=0x5.0x7 \
    -device ich9-usb-uhci1,masterbus=usb.0,firstport=0,bus=pci.0,multifunction=on,addr=0x5 \
    -device ich9-usb-uhci2,masterbus=usb.0,firstport=2,bus=pci.0,addr=0x5.0x1 \
    -device ich9-usb-uhci3,masterbus=usb.0,firstport=4,bus=pci.0,addr=0x5.0x2 \
    -device virtio-serial-pci,id=virtio-serial0,bus=pci.0,addr=0x6 \
    -drive file=/dev/zd16,if=none,id=drive-virtio-disk0,format=raw,cache=none,aio=native \
    -device virtio-blk-pci,scsi=off,bus=pci.0,addr=0x2,drive=drive-virtio-disk0,id=virtio-disk0,bootindex=1 \
    -drive file=/media/isos/Win10_English_x64.iso,if=none,id=drive-ide0-1-0,readonly=on,format=raw \
    -device ide-cd,bus=ide.1,unit=0,drive=drive-ide0-1-0,id=ide0-1-0 \
    -device ide-cd,bus=ide.1,unit=1,drive=drive-ide0-1-1,id=ide0-1-1 \
    -netdev tap,fd=24,id=hostnet0,vhost=on,vhostfd=25 \
    -device virtio-net-pci,netdev=hostnet0,id=net0,mac=52:54:00:11:bf:dd,bus=pci.0,addr=0x3 \
    -chardev pty,id=charserial0 \
    -device isa-serial,chardev=charserial0,id=serial0 \
    -device usb-tablet,id=input0 \
    -device intel-hda,id=sound0,bus=pci.0,addr=0x4 \
    -device hda-duplex,id=sound0-codec0,bus=sound0.0,cad=0 \
    -device vfio-pci,host=01:00.1,id=hostdev0,bus=pci.0,addr=0x9 \
    -device vfio-pci,host=00:12.0,id=hostdev1,bus=pci.0,addr=0x8 \
    -device vfio-pci,host=00:12.2,id=hostdev2,bus=pci.0,addr=0xa \
    -device virtio-balloon-pci,id=balloon0,bus=pci.0,addr=0x7 \
    -device vfio-pci,host=01:00.0,x-vga=on \
    -vga none \
    -msg timestamp=on
```

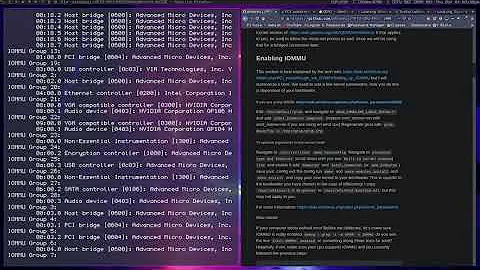

Relevant items from lspci -k:

```
00:12.0 USB controller: Advanced Micro Devices, Inc. [AMD/ATI] SB7x0/SB8x0/SB9x0 USB OHCI0 Controller
    Subsystem: Gigabyte Technology Co., Ltd Device 5004
    Kernel driver in use: vfio-pci
00:12.2 USB controller: Advanced Micro Devices, Inc. [AMD/ATI] SB7x0/SB8x0/SB9x0 USB EHCI Controller
    Subsystem: Gigabyte Technology Co., Ltd Device 5004
    Kernel driver in use: vfio-pci
01:00.0 VGA compatible controller: NVIDIA Corporation GK104 [GeForce GTX 760] (rev a1)
    Subsystem: eVga.com. Corp. Device 3768
    Kernel driver in use: vfio-pci
01:00.1 Audio device: NVIDIA Corporation GK104 HDMI Audio Controller (rev a1)
    Subsystem: eVga.com. Corp. Device 3768
    Kernel driver in use: vfio-pci
```

Observed result:


Victor Marchuk

Updated on September 18, 2022

Comments


Victor Marchuk over 1 year

I'm running Ubuntu and trying to configure QEMU with GPU passthrough. I was following these guides:

https://www.youtube.com/watch?v=w-hOr44oBAI

http://www.howtogeek.com/117635/how-to-install-kvm-and-create-virtual-machines-on-ubuntu/

My /etc/modules:

```
lp
rtc
pci_stub
vfio
vfio_iommu_type1
vfio_pci
kvm
kvm_intel
```

My /etc/default/grub:

```
GRUB_DEFAULT=0
GRUB_HIDDEN_TIMEOUT=0
GRUB_HIDDEN_TIMEOUT_QUIET=true
GRUB_TIMEOUT=10
GRUB_DISTRIBUTOR=`lsb_release -i -s 2> /dev/null || echo Debian`
GRUB_CMDLINE_LINUX_DEFAULT="quiet splash intel_iommu=on vfio_iommu_type1.allow_unsafe_interrupts=1"
GRUB_CMDLINE_LINUX=""
```

My GPU:

```
$ lspci -nn | grep NVIDIA
01:00.0 VGA compatible controller [0300]: NVIDIA Corporation GK106 [GeForce GTX 650 Ti] [10de:11c6] (rev a1)
01:00.1 Audio device [0403]: NVIDIA Corporation GK106 HDMI Audio Controller [10de:0e0b] (rev a1)
$ lspci -nn | grep -i graphic
00:02.0 VGA compatible controller [0300]: Intel Corporation Xeon E3-1200 v2/3rd Gen Core processor Graphics Controller [8086:0152] (rev 09)
```

My /etc/initramfs-tools/modules:

```
pci_stub ids=10de:11c6,10de:0e0b
```

pci_stub seems to be working:

```
$ dmesg | grep pci-stub
[ 0.541737] pci-stub: add 10DE:11C6 sub=FFFFFFFF:FFFFFFFF cls=00000000/00000000
[ 0.541750] pci-stub 0000:01:00.0: claimed by stub
[ 0.541755] pci-stub: add 10DE:0E0B sub=FFFFFFFF:FFFFFFFF cls=00000000/00000000
[ 0.541760] pci-stub 0000:01:00.1: claimed by stub
```

My /etc/vfio-pci1.cfg:

```
0000:01:00.0
0000:01:00.1
```

My ~/windows_start.bash: http://pastebin.com/F7fq2Szt

After I run the bash script, vfio-pci is being used as a driver:

```
$ lspci -k | grep -C 3 -i nvidia
    Kernel driver in use: ahci
00:1f.3 SMBus: Intel Corporation 7 Series/C210 Series Chipset Family SMBus Controller (rev 04)
    Subsystem: ASRock Incorporation Motherboard
01:00.0 VGA compatible controller: NVIDIA Corporation GK106 [GeForce GTX 650 Ti] (rev a1)
    Subsystem: Gigabyte Technology Co., Ltd Device 3557
    Kernel driver in use: vfio-pci
01:00.1 Audio device: NVIDIA Corporation GK106 HDMI Audio Controller (rev a1)
    Subsystem: Gigabyte Technology Co., Ltd Device 3557
    Kernel driver in use: vfio-pci
03:00.0 PCI bridge: ASMedia Technology Inc. ASM1083/1085 PCIe to PCI Bridge (rev 03)
```

Software versions:

```
$ kvm --version
QEMU emulator version 2.5.0, Copyright (c) 2003-2008 Fabrice Bellard
$ lsb_release -a
No LSB modules are available.
Distributor ID: Ubuntu
Description: Ubuntu 14.04.3 LTS
Release: 14.04
Codename: trusty
```

The problem is that when I run windows_start.bash, the QEMU terminal starts, but nothing happens. The monitor connected to the NVIDIA GPU stays black; QEMU is supposed to turn it on, but it doesn't. What am I doing wrong? How can I debug it? What else can I try to achieve GPU passthrough?

I checked using this guide and it seems that my GPU doesn't support UEFI, so maybe that's the reason it fails? It's still weird: a lot of people from … have had success using even older GPUs, so there must be a way.

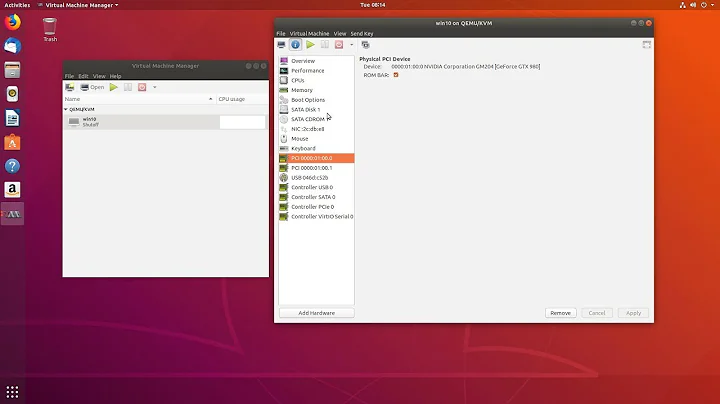

EDIT: I've just tried to run the VM with libvirt using virt-manager, as suggested by @Deltik. Here's what my config looks like: http://pastebin.com/W46kNcrh

The result was pretty much the same as before: it started, showed a black screen in virt-manager's window, and nothing else happened. There were no errors in the debug console (which I started by running virt-manager --debug). I've also tried the same approach on Arch Linux and on a newer version of Ubuntu; it made no difference whatsoever.

I've given the bounty to @Deltik, because he gave me some good advice, but I still wasn't able to make it work. It seems that this task is impossible to complete, at least with my current hardware.


Tom Yan about 8 years: One thing (although it may not fix your issue): don't involve pci-stub and vfio-pci at the same time. They are two kernel modules that are basically for the same thing: PCI(e) passthrough. EACH of them corresponds to a DIFFERENT QEMU "device": you should involve vfio-pci only if you use the newer -device vfio-pci, OR pci-stub only if you use the older -device pci-assign.

Tom Yan about 8 years: So basically you can remove this whole part from your start script: gist.github.com/anonymous/039bd3a033210f401d0b, as long as you change pci-stub to vfio-pci in /etc/initramfs-tools/modules (yes, they take the same ids= parameter the same way).

Tom Yan about 8 years: These should probably be removed from /etc/modules as well: gist.github.com/anonymous/5d19ae3c22653a282e90, especially pci-stub.

Tom Yan about 8 years: BTW, I faintly remember that you need OVMF (UEFI) instead of SeaBIOS to make GPU/VGA passthrough work.


Victor Marchuk about 8 years: @Tom Yan Thanks for your input. I've tried everything you mentioned, but unfortunately I'm getting some errors now. Do you have any ideas what else I could try?

Deltik about 8 years: After running your script ~/windows_start.bash, do you see Kernel driver in use: vfio-pci for the devices 01:00.0 and 01:00.1 in lspci -k? If not, vfio-pci isn't the driver in use on those devices.

Tom Yan about 8 years: @VictorMarchuk Apparently your kernel is too old to include the ids parameter: git.kernel.org/cgit/linux/kernel/git/torvalds/linux.git/tree/… git.kernel.org/cgit/linux/kernel/git/torvalds/linux.git/tree/… In that case, you will need to bind it manually after boot the echo/sysfs way. I still discourage binding it with pci-stub first; just blacklist nouveau/nvidia instead, unless you have NVIDIA card(s) that you want to use on the host side.

Tom Yan about 8 years: @VictorMarchuk Is /sys/kernel/iommu_groups/ empty? If so, make sure you've enabled the IOMMU in your BIOS/UEFI settings.
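That check can be scripted. A minimal sketch that lists every IOMMU group under /sys/kernel/iommu_groups with the PCI addresses it contains, and fails if there are none; list_iommu_groups and the SYSFS_ROOT override are hypothetical names. Replacing the inner echo with lspci -nns on the address would add device names.

```bash
#!/usr/bin/env bash
# Hypothetical helper; SYSFS_ROOT (default /sys) exists only for testing.
# Prints each IOMMU group and its device addresses. Returns 1 if no group
# exists, which usually means the IOMMU is not enabled.
list_iommu_groups() {
    local root="${SYSFS_ROOT:-/sys}/kernel/iommu_groups" group dev found=0
    for group in "$root"/*; do
        [ -d "$group" ] || continue    # unmatched glob or stray file
        found=1
        echo "IOMMU group ${group##*/}:"
        for dev in "$group"/devices/*; do
            [ -e "$dev" ] || continue
            echo "  ${dev##*/}"
        done
    done
    if [ "$found" -eq 0 ]; then
        echo "no IOMMU groups under $root" >&2
        return 1
    fi
}

# Usage: list_iommu_groups
```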

Deltik about 8 years: In revision #6, you included this image. QEMU should not be showing that to you; you should be seeing it on your physical display. I've updated my answer with how to disable QEMU's emulated display.


Deltik about 8 years: If you're available now, I've created a chatroom where we can discuss this further.


Tom Yan about 8 years: It's only silly and self-confusing to bind pci-stub to the card with initramfs first and then rebind it with vfio-pci, EVEN IF it would still work. Not to mention that it makes the script include steps that only need to run once, so they will be run unnecessarily and repeatedly if you perform "power cycles" on the VM.

Deltik about 8 years: @TomYan: Both the OP and I were unable to bind with vfio-pci on boot. Reserving with pci-stub and then switching over to vfio-pci was successful in my test.


Victor Marchuk about 8 years: @Deltik: Thank you for your detailed answer. I've made the changes you suggested and updated my question accordingly. My GPU now uses vfio-pci after running the script, which is good, but nothing else has changed. The screen connected to the NVIDIA card is still black and there are no errors in the terminal. Is there anything else I could try to debug the problem? My biggest issue is that I don't even understand what is wrong right now. I will try the other settings from your sample configuration; maybe something will work.

Deltik about 8 years: @VictorMarchuk: Can you try adding the -nographic flag and/or -nodefaults flag to qemu-system-x86_64? These flags disable the emulated graphics device that QEMU provides by default, which hopefully would force your GeForce GTX 650 Ti to be the only graphics option.


Victor Marchuk about 8 years: @Deltik: I've added -nographic and removed the trailing backslashes from the main command, which resulted in some output being printed to the QEMU terminal. See my original question for the screenshot (I can't copy text from that terminal). It seems that the error is related to my drives now.

Deltik about 8 years: @VictorMarchuk: Your command needs the trailing `\` on each line except the last line. It tells the shell that there are still more parameters in the command.



Victor Marchuk about 8 years: @Deltik: Oh, right. That was silly of me. I've updated my config to include -nographic and -nodefaults: pastebin.com/F7fq2Szt. Now the QEMU terminal doesn't appear at all, but the monitor connected to the NVIDIA card is still black, unfortunately. I've tried -nographic and -nodefaults separately as well and got the same results.

lilezek about 7 years: I have this same issue. The screen is still black after those steps, though my card is a Radeon. The monitor turns on, but the whole screen is black. If I choose the default VGA, QEMU creates a window where I can see the VM correctly.