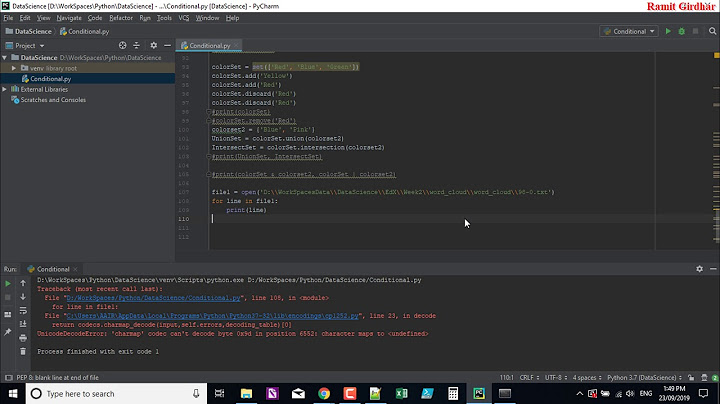

"for line in..." results in UnicodeDecodeError: 'utf-8' codec can't decode byte

Solution 1

As suggested by Mark Ransom, I found the right encoding for this file: "ISO-8859-1". Replacing open("u.item", encoding="utf-8") with open("u.item", encoding="ISO-8859-1") solves the problem.

Solution 2

The following also worked for me. ISO-8859-1 saves a lot of trouble, mainly when using speech recognition APIs.

Example:

file = open('../Resources/' + filename, 'r', encoding="ISO-8859-1")

Solution 3

Your file doesn't actually contain UTF-8 encoded data; it contains some other encoding. Figure out what that encoding is and use it in the open call.

In Windows-1252 encoding, for example, the 0xe9 would be the character é.
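To make the byte-level reasoning concrete, here is a minimal sketch. The sample bytes are an assumption chosen for illustration (they are not taken from u.item); only the byte 0xe9 comes from the error message.

```python
# Bytes as they might appear in the file: 0xe9 followed by plain ASCII.
# (Hypothetical sample data, not the real contents of u.item.)
raw = b"caf\xe9 au lait"

# Windows-1252 and ISO-8859-1 both map the single byte 0xe9 to "é".
as_cp1252 = raw.decode("cp1252")
as_latin1 = raw.decode("iso-8859-1")
print(as_cp1252)

# In UTF-8, 0xe9 (binary 11101001) announces a three-byte sequence, so the
# next bytes must be continuation bytes (10xxxxxx). A space (0x20) is not.
utf8_error = None
try:
    raw.decode("utf-8")
except UnicodeDecodeError as exc:
    utf8_error = exc
    print(exc)
```

The exception carries the offending position in its start attribute, which can help locate the byte in the file when deciding which encoding is plausible.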

Solution 4

Try this to read using Pandas:

pd.read_csv('u.item', sep='|', names=m_cols, encoding='latin-1')

Solution 5

This works:

open('filename', encoding='latin-1')

Or:

open('filename', encoding="ISO-8859-1")
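The two spellings are interchangeable because Python treats them as aliases of one codec. A small check, using only the standard codecs module, might look like this:

```python
import codecs

# Spellings of the same encoding that appear on this page.
names = ["latin-1", "latin1", "ISO-8859-1"]

# codecs.lookup() resolves an alias to its codec; .name is the canonical name.
canonical = {codecs.lookup(name).name for name in names}
print(canonical)

# Decoding every possible byte value gives identical text under each spelling.
sample = bytes(range(256))
decoded = {sample.decode(name) for name in names}
```

Both sets end up with a single element, so the choice between the spellings is purely a matter of taste.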


SujitS

Updated on March 17, 2022

Comments

-

SujitS about 2 years

Here is my code:

for line in open('u.item'): # Read each line

Whenever I run this code it gives the following error:

UnicodeDecodeError: 'utf-8' codec can't decode byte 0xe9 in position 2892: invalid continuation byte

I tried to solve this by adding an extra parameter to open(). The code looks like:

for line in open('u.item', encoding='utf-8'): # Read each line

But again it gives the same error. What should I do then?

-

Andreas Jung over 10 years: Badly encoded data, I would assume.

-

Mark Tolonen over 10 years: Or just not UTF-8 data.

-

tripleee over 5 years: Possible duplicate of Python 3 UnicodeDecodeError - How do I debug UnicodeDecodeError?

-

Jesse W. Collins almost 5 years: We had this error with msgpack when using Python 3 instead of Python 2.7. For us, the course of action was to work with Python 2.7.

-

-

SujitS over 10 years: So, how can I find out what encoding it is? I am using Linux.

-

RemcoGerlich over 10 years: There is no way to do that that always works, but see the answer to this question: stackoverflow.com/questions/436220/…

-

0 _ almost 8 years: Explicit is better than implicit (PEP 20).

-

SujitS about 7 years: But this is version 3.

-

Jeril about 7 years: Yeah, I know. I thought it might be helpful for the people using Python 2.

-

fenkerbb over 6 years: Worked for me in Python 3 as well.

-

RolfBly over 6 years: You may be correct that the OP is reading ISO 8859-1, as can be deduced from the 0xe9 (é) in the error message, but you should explain why your solution works. The reference to speech recognition APIs does not help.

-

Max Candocia over 6 years: In case you want something easier to remember, 'ISO-8859-1' is also known as 'latin-1' or 'latin1'.

-

Kjeld Flarup about 6 years: The trick is that ISO-8859-1 (Latin-1) is an 8-bit character set, so every byte, even garbage, has a valid value. The result may not be usable, but it works if you just want to ignore the errors.

-

Евгений Коптюбенко over 5 years: I had the same issue: UnicodeDecodeError: 'utf-8' codec can't decode byte 0xd0 in position 32: invalid continuation byte. I used Python 3.6.5 to install the AWS CLI, and when I tried aws --version it failed with this error. So I had to edit /Library/Frameworks/Python.framework/Versions/3.6/lib/python3.6/configparser.py and change the code to the following: def read(self, filenames, encoding="ISO-8859-1"):

-

JoseOrtiz3 over 5 years: Is there an automatic way of detecting encoding?

-

VertigoRay about 5 years: @OrangeSherbet I implemented detection using chardet. Here's the one-liner (after import chardet): chardet.detect(open(in_file, 'rb').read())['encoding']. Check out this answer for details: stackoverflow.com/a/3323810/615422

-

JohnAndrews over 4 years: How do you get the encoding of a file?

-

Alastair McCormack over 4 years: Not sure why you're suggesting Pandas. The solution is setting the correct encoding, which you've chanced upon here.

-

Mark Ransom almost 4 years: Note that 'ISO-8859-1' will always work even if it's not the right encoding, because each of the 256 byte values maps to a Unicode character. I believe it's the only encoding which does this.

-
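A short sketch of what "always works" means in practice: decoding as Latin-1 never raises, but it silently produces wrong characters when the bytes were really written in another encoding. The UTF-8 sample below is an assumption for illustration.

```python
# Every one of the 256 byte values decodes under Latin-1 without an exception.
every_byte = bytes(range(256))
text = every_byte.decode("latin-1")

# The mapping is lossless: encoding the text again gives back the same bytes.
round_trip = text.encode("latin-1") == every_byte

# No exception does not mean correct text. Here the bytes are really UTF-8
# (a hypothetical sample), and Latin-1 turns one character into two.
utf8_bytes = "é".encode("utf-8")
mojibake = utf8_bytes.decode("latin-1")
print(repr(mojibake))
```

So Latin-1 is a safe way to avoid the exception, but the decoded text is only right if the file really was written in that encoding.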

MartenCatcher almost 4 years: This does not provide an answer to the question. To critique or request clarification from an author, leave a comment below their post. - From Review

-

Billy over 3 years: I like @VertigoRay's suggestion of chardet in a script, but for something really quick to diagnose what's going on, a simple file helped me:

% file list.log
list.log: ISO-8859 text

vs.

% file playlist.txt
playlist.txt: UTF-8 Unicode text, with CRLF, LF line terminators

-

Silidrone over 3 years: @MartenCatcher Yeah, but it helps future visitors to the question. Although more explanation would make the answer much better, I believe it serves a better purpose as an answer than as a comment.

-

Peter Mortensen over 3 years: 'latin-1' is the same as 'ISO-8859-1'?

-

Peter Mortensen over 3 years: What is the intent? Ignoring errors? What are the consequences?

-


Peter Mortensen over 3 years: What is "Encodage"? What language?

-

Mark Ransom about 3 years: @PeterMortensen Yes it is, Wikipedia confirms it. They both produce the same output when used with decode in Python as well.

-

Mark Ransom about 3 years: Depends on what you mean by "works". If you mean it avoids exceptions, that's true, because it's the only encoding that doesn't have invalid bytes or sequences. That doesn't mean you'll get the proper characters, though.

-

Thierry Lathuille over 2 years: Please note that questions and answers on SO must be in English only, even if the problem you encountered may bite mainly programmers using the Cyrillic alphabet.

-

Nikita Axenov over 2 years: @ThierryLathuille, is it a real problem? Could you please give me a link/reference to the community rule on that issue?

-

Thierry Lathuille over 2 years: This is considered a real problem, and is probably what caused your answer to get downvoted. Non-English content is not allowed on SO (see for example meta.stackoverflow.com/questions/297673/… ), and the rule is really strictly respected. For questions in Russian, you have ru.stackoverflow.com , though ;)

-

Anonymous over 2 years: @ThierryLathuille This applies to the English content, not problems with non-English symbols. And this doesn't necessarily have to be about other languages, it could be a different UTF-8 character (for example, a checkmark).

-

Pooya Estakhri over 2 years: I was looking for an answer and, interestingly, you answered 7 hours ago a question asked 8 years ago. Interesting coincidence.

-

Fred Zimmerman over 2 years: @vertigoray's answer should be the accepted one IMHO. Answers without encoding detection cannot reliably solve the question.

-

Mark Ransom over 2 years: I don't get it, why would you use a 33-line program to avoid typing one line in the shell?

-

Mark Ransom over 2 years: How would opening a file that doesn't exist generate a UnicodeDecodeError? And in Python it's customary to use the EAFP principle over the LBYL that you're endorsing here.

-

Mark Ransom over 2 years: @OrangeSherbet There's no sure way unless you can find out from whoever produced the file. But it's possible to guess based on the file contents, and some guessing methods are better than others. By coincidence I chanced on a new way to do it in Python the other day: charset-normalizer.
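For readers who want a guess without installing anything, one crude alternative is to try a short list of candidate encodings in order. This is not what chardet or charset-normalizer do; the function name decode_with_fallback and the candidate list are assumptions made up for this sketch.

```python
def decode_with_fallback(data, candidates=("utf-8", "cp1252", "latin-1")):
    """Return (text, encoding) for the first candidate that decodes data.

    Hypothetical helper: the candidate list and its order are assumptions.
    UTF-8 goes first because it is strict and rarely accepts non-UTF-8 input;
    latin-1 goes last because it accepts any byte sequence.
    """
    for encoding in candidates:
        try:
            return data.decode(encoding), encoding
        except UnicodeDecodeError:
            continue
    raise ValueError("none of the candidate encodings could decode the data")


# Sample bytes (made up for illustration): "café" stored as a single 0xe9 byte.
text, used = decode_with_fallback(b"caf\xe9")
print(text, used)
```

With a real file, the bytes would come from reading it in binary mode, for example open('u.item', 'rb').read(). A successful decode only shows that the bytes are valid in that encoding, not that the encoding is the one the author used.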

-

Arpad Horvath -- Слава Україні about 2 years: Nobody said that the file in the question is a CSV file.

-

JGaber about 2 years: "Encodage" is "Encoding" if the menu is in French.
