Running multiple scp threads simultaneously

Solution 1

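I would do it like this. Run tar from inside the source directory so the archived paths are relative; otherwise GNU tar strips the leading "/" and the files end up nested as /manyfiles/manyfiles on the destination:

tar -C /manyfiles -cf - . | ssh dest.server 'tar -xf - -C /manyfiles'

Depending on the files you are transferring, it can make sense to enable compression in the tar commands:

tar -C /manyfiles -czf - . | ssh dest.server 'tar -xzf - -C /manyfiles'

It may also make sense to choose a less CPU-intensive cipher for the ssh command. The classic suggestion was arcfour, but current OpenSSH releases no longer support it; on CPUs with AES acceleration, AES-GCM is usually a cheap choice:

tar -C /manyfiles -cf - . | ssh -c aes128-gcm@openssh.com dest.server 'tar -xf - -C /manyfiles'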

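Or combine both, but which one helps really depends on your bottleneck: compression pays off on a slow link with compressible data, a cheaper cipher when ssh encryption is eating the CPU.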

Obviously rsync will be a lot faster if you are doing incremental syncs.

Solution 2

Use rsync instead of scp. You can use rsync over ssh as easily as scp, and it supports "pipelining of file transfers to minimize latency costs".
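A back-of-the-envelope estimate of why per-file latency, rather than bandwidth, dominates when copying small files one by one. The numbers are assumptions for illustration only: 10,000 files of 2 KB each (inside the question's 1-3 KB range), the question's 1 Gbps link, a hypothetical 50 ms round-trip time, and at least one round trip per file when files are copied sequentially:

```latex
\begin{aligned}
t_{\text{data}} &= \frac{10^{4} \times 2000\,\text{B} \times 8\,\text{bit/B}}{10^{9}\,\text{bit/s}} = 0.16\,\text{s} \\
t_{\text{latency}} &\ge 10^{4} \times 0.05\,\text{s} = 500\,\text{s} \\
\frac{t_{\text{latency}}}{6} &\approx 83\,\text{s}
\end{aligned}
```

Under these assumptions the per-file round trips outweigh the raw data time by more than three orders of magnitude. Pipelining the transfers attacks that term directly, while running six copies in parallel only divides it by six.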

One tip: If the data is compressible, enable compression. If it's not, disable it.
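A minimal sketch of the compression tip, assuming rsync is installed on both ends. `rsync_mirror` is a hypothetical helper name and `dest.server:/manyfiles` a placeholder target; the only real options used are rsync's `-a` (archive), `-z` (compress) and `-e` (remote shell).

```sh
# Hypothetical helper (sketch): mirror SRC into DEST with rsync over ssh.
# DEST can be a local path or a remote target such as dest.server:/manyfiles
# (placeholder host). Pass "yes" as the third argument to add -z.
rsync_mirror() {
  src=$1 dest=$2 compress=${3:-no}
  if [ "$compress" = yes ]; then
    # -z compresses file data in transit: worthwhile for text, logs, configs.
    rsync -a -z -e ssh "$src/" "$dest/"
  else
    # Already-compressed data (archives, images, video): skip -z to save CPU.
    rsync -a -e ssh "$src/" "$dest/"
  fi
}
```

For example, `rsync_mirror /manyfiles dest.server:/manyfiles yes` for compressible data, or the same call without `yes` otherwise. The trailing slash on the source makes rsync copy the directory's contents rather than the directory itself.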

Solution 3

Not scp directly, but an option for multi-threaded transfers (even of single files) is bbcp - https://www2.cisl.ucar.edu/resources/storage-and-file-systems/bbcp.

Use the -s option to set the number of parallel streams transferring data. It is great for high-bandwidth but high-latency connections, since with a given TCP window size, latency caps the throughput of each individual stream.

Solution 4

I was about to suggest GNU Parallel (which still requires some scripting work on your part), but then I found pscp (which is part of pssh). That may just fit your needs.
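The scripting approach described in the question (split the file list into equal parts and run 5-6 transfers at once) can be sketched in plain POSIX shell with background jobs instead of GNU Parallel or pscp. This is a hedged sketch, not a finished tool: `parallel_tar_copy` and the `RSH` override are hypothetical names, it assumes GNU find, split and tar, and each chunk is sent through its own tar-over-ssh pipe.

```sh
# Hypothetical helper (sketch): copy all regular files under SRC to DEST on
# HOST using JOBS concurrent tar-over-ssh streams, one per chunk of files.
# Assumes GNU find/split/tar, no newlines in file names, and that DEST
# already exists remotely. Directories are recreated with default modes.
# RSH (hypothetical) overrides the remote shell; it is left unquoted on
# purpose so it can carry options, e.g. RSH="ssh -c aes128-gcm@openssh.com".
parallel_tar_copy() {
  src=$1 host=$2 dest=$3 jobs=${4:-6}
  tmp=$(mktemp -d) || return 1
  # Relative paths (./dir/file) reproduce the same layout under DEST.
  (cd "$src" && find . -type f) > "$tmp/list" || return 1
  # GNU split: JOBS chunks of about the same size, never breaking a line.
  split -n "l/$jobs" "$tmp/list" "$tmp/chunk."
  for chunk in "$tmp"/chunk.*; do
    # Skip empty chunks (or an unmatched glob) when there are few files.
    [ -s "$chunk" ] || continue
    tar -C "$src" -cf - -T "$chunk" |
      ${RSH:-ssh} "$host" "tar -xf - -C '$dest'" &
  done
  # Plain `wait` always returns 0, so a failed stream is not reported here;
  # a sturdier version would record each PID and check its status.
  wait
  rm -rf "$tmp"
}
```

Usage would look like `parallel_tar_copy /manyfiles dest.server /manyfiles 6`. As the comments below point out, whether this beats a single pipelined stream depends on whether per-file latency or total bandwidth is the real bottleneck.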

caesay

Updated on September 18, 2022

Comments

-

caesay almost 2 years

Running multiple scp threads simultaneously:

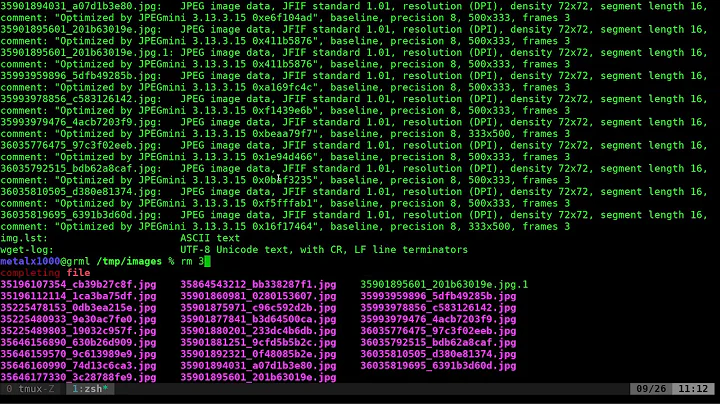

Background:

I often find myself mirroring a set of server files, and included in these server files are thousands of small 1-3 KB files. All the servers are connected to 1 Gbps ports, generally spread across a variety of data centers.

Problem:

SCP transfers these little files ONE by ONE, which takes ages, and I feel like I'm wasting the beautiful network resources I have.

Solution?:

I had an idea: create a script that divides the files into equal groups and starts 5-6 scp threads, which in theory would then finish 5-6 times faster, no? But I don't have any Linux scripting experience!

Question(s):

- Is there a better solution to the mentioned problem?

- Is there something like this that exists already?

- If not, is there someone who would give me a start, or help me out?

- If the answer to 2 or 3 is no, where would be a good place to start learning Linux scripting (bash or something else)?

-

Scott Pack about 12 years: Possible duplicate of How to copy a large number of files quickly between two servers

-

David Schwartz over 12 years: It seems pssh operates concurrently on multiple machines. I don't think it implements file-level parallelism.

-

Rilindo over 12 years: I probably should have been more specific: I meant pscp.

-

aendra almost 12 years: I just did one transfer last night with scp and am doing another similar transfer with rsync; it seems a lot faster. However, it still seems to transfer one file at a time. Any idea how to make it run multiple transfers at once (beyond --include-ing and --exclude-ing a bunch of directories via a script; see: sun3.org/archives/280)?

-

Joe over 6 years: There's no point transferring multiple files at the same time when bandwidth is the limit. I believe you wouldn't consider this command when bandwidth is abundant. Eliminating the latency cost already helps a lot when you are copying a lot of small files. Even if you can copy multiple files at the same time, limited bandwidth won't speed up your file transfer.
