Scrapy on a schedule

Solution 1

First, there is usually only one Twisted reactor running, and it is not restartable (as you've discovered). Second, blocking calls such as time.sleep(n) should be avoided and replaced with asynchronous alternatives such as twisted.internet.task.deferLater(reactor, n, ...).

Using Scrapy effectively from a Twisted project requires the scrapy.crawler.CrawlerRunner core API rather than scrapy.crawler.CrawlerProcess. The main difference between the two is that CrawlerProcess runs Twisted's reactor for you (which makes it difficult to restart the reactor), whereas CrawlerRunner relies on the developer to start the reactor. Here's what your code could look like with CrawlerRunner:

from twisted.internet import reactor
from scrapy.crawler import CrawlerRunner

from quotesbot.spiders.quotes import QuotesSpider


def run_crawl():
    """
    Run a spider within Twisted. Once it completes,
    wait 5 seconds and run another spider.
    """
    runner = CrawlerRunner({
        'USER_AGENT': 'Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)',
    })
    deferred = runner.crawl(QuotesSpider)
    # you can use reactor.callLater or task.deferLater to schedule a function;
    # the lambda discards the crawl result, which addCallback would otherwise
    # pass as the first argument to reactor.callLater
    deferred.addCallback(lambda _: reactor.callLater(5, run_crawl))
    return deferred


run_crawl()
reactor.run()  # you have to run the reactor yourself
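If the goal is a run at the same time each day rather than every 5 seconds, the fixed delay passed to reactor.callLater could be computed instead. The helper below is a rough sketch using only the standard library; the name seconds_until is hypothetical, and it assumes naive local datetimes, so it ignores time zones and daylight-saving shifts.

```python
import datetime as dt


def seconds_until(run_at, now=None):
    """Return the seconds from `now` until the next occurrence of `run_at`.

    `run_at` is a datetime.time; `now` defaults to the current local time.
    """
    if now is None:
        now = dt.datetime.now()
    target = dt.datetime.combine(now.date(), run_at)
    if target <= now:
        # Today's slot has already passed, so aim for tomorrow.
        target += dt.timedelta(days=1)
    return (target - now).total_seconds()


# Example values with a fixed "now" so the results are reproducible.
before = seconds_until(dt.time(3, 0), now=dt.datetime(2024, 1, 1, 2, 0))
after = seconds_until(dt.time(3, 0), now=dt.datetime(2024, 1, 1, 4, 0))
```

Inside run_crawl, the delay would then be something like reactor.callLater(seconds_until(dt.time(3, 0)), run_crawl), recomputed after each crawl finishes so that drift does not accumulate.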

Solution 2

You can use apscheduler

pip install apscheduler

# -*- coding: utf-8 -*-
from scrapy.crawler import CrawlerProcess
from scrapy.utils.project import get_project_settings
from apscheduler.schedulers.twisted import TwistedScheduler

from Demo.spiders.baidu import YourSpider

process = CrawlerProcess(get_project_settings())
scheduler = TwistedScheduler()
# start a new crawl of YourSpider every 10 seconds
scheduler.add_job(process.crawl, 'interval', args=[YourSpider], seconds=10)
scheduler.start()
# False is stop_after_crawl: keep the reactor running between jobs
process.start(False)

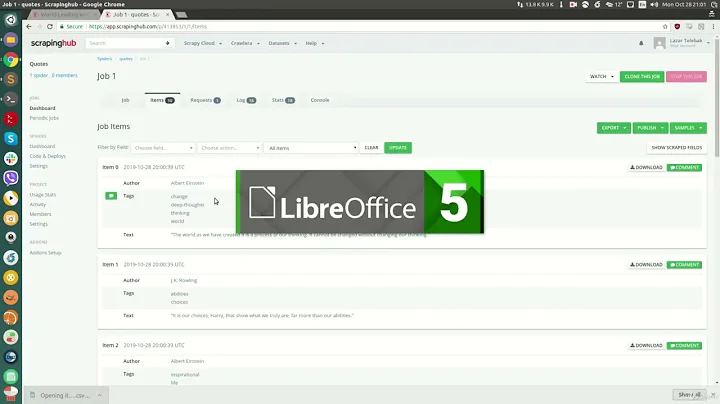

Related videos on Youtube

itzafugazi

Windows sysadmin, currently learning Python for a sports betting application I would like to write.

Updated on September 14, 2022

Comments

- itzafugazi over 1 year

Getting Scrapy to run on a schedule is driving me around the Twist(ed).

I thought the below test code would work, but I get a twisted.internet.error.ReactorNotRestartable error when the spider is triggered a second time:

from quotesbot.spiders.quotes import QuotesSpider
import schedule
import time
from scrapy.crawler import CrawlerProcess

def run_spider_script():
    process.crawl(QuotesSpider)
    process.start()

process = CrawlerProcess({
    'USER_AGENT': 'Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)',
})

schedule.every(5).seconds.do(run_spider_script)

while True:
    schedule.run_pending()
    time.sleep(1)

I'm going to guess that as part of the CrawlerProcess, the Twisted Reactor is called to start again, when that's not required and so the program crashes. Is there any way I can control this?

Also at this stage if there's an alternative way to automate a Scrapy spider to run on a schedule, I'm all ears. I tried scrapy.cmdline.execute, but couldn't get that to loop either:

from quotesbot.spiders.quotes import QuotesSpider
from scrapy import cmdline
import schedule
import time
from scrapy.crawler import CrawlerProcess

def run_spider_cmd():
    print("Running spider")
    cmdline.execute("scrapy crawl quotes".split())

process = CrawlerProcess({
    'USER_AGENT': 'Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)',
})

schedule.every(5).seconds.do(run_spider_cmd)

while True:
    schedule.run_pending()
    time.sleep(1)

EDIT

Adding code, which uses Twisted task.LoopingCall() to run a test spider every few seconds. Am I going about this completely the wrong way to schedule a spider that runs at the same time each day?

from twisted.internet import reactor
from twisted.internet import task
from scrapy.crawler import CrawlerRunner
import scrapy

class QuotesSpider(scrapy.Spider):
    name = 'quotes'
    allowed_domains = ['quotes.toscrape.com']
    start_urls = ['http://quotes.toscrape.com/']

    def parse(self, response):
        quotes = response.xpath('//div[@class="quote"]')
        for quote in quotes:
            author = quote.xpath('.//small[@class="author"]/text()').extract_first()
            text = quote.xpath('.//span[@class="text"]/text()').extract_first()
            print(author, text)

def run_crawl():
    runner = CrawlerRunner()
    runner.crawl(QuotesSpider)

l = task.LoopingCall(run_crawl)
l.start(3)
reactor.run()

- notorious.no almost 7 years

LoopingCall will work fine and is the simplest solution. You could also modify the example code (i.e. addCallback(reactor.callLater, 5, run_crawl)) and replace 5 with the number of seconds until you want to scrape next. This will give you a bit more precision than LoopingCall.

- Sy Ker over 2 years

This will not work with Django: the spider will open but not scrape or block the server's initialization.