Script to go through $PATH folders and see what executable files are available on your system

Solution 1

If what you want is a list of executable files, find is plenty:

IFS=':'

find $PATH -type f '(' -perm -u+x -o -perm -g+x -o -perm -o+x ')'

This will list the full path of every executable in your $PATH. The IFS=':' ensures that $PATH is split at colons (:), the separator for that variable.
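If you would rather not change IFS in your interactive shell, one option is to wrap the command in a function whose body runs in a subshell. The following is only a sketch: the function name `list_path_executables` and its optional argument (a PATH-like string, defaulting to `$PATH`) are made up for illustration.

```shell
# Hypothetical helper: list_path_executables [colon-separated directory list]
# The body is wrapped in ( ... ), so it runs in a subshell and the
# IFS and globbing changes never reach the calling shell.
list_path_executables() (
    IFS=':'     # split the unquoted expansion below at colons
    set -f      # disable globbing so a directory name with * or ? is not expanded
    # Errors (for example, PATH entries that do not exist) are hidden.
    find ${1-$PATH} -type f '(' -perm -u+x -o -perm -g+x -o -perm -o+x ')' 2>/dev/null
)
```

Calling `list_path_executables` with no argument searches the current `$PATH`; passing something like `"/usr/local/bin:/usr/bin"` searches only those directories.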

If you don't want the full path, but just the executable names, you might do

IFS=':'

find $PATH -type f '(' -perm -u+x -o -perm -g+x -o -perm -o+x ')' -exec basename {} \; | sort

If your find is GNU-compatible, the condition simplifies quite a bit:

IFS=':'

find $PATH -type f -executable -exec basename {} \; | sort

As @StephenHarris points out, there is a bit of an issue with this: if there are subdirectories of your $PATH, files in those subdirectories might be reported even though $PATH cannot reach them. To get around this, you would actually need a find with more options than POSIX requires. A GNU-compatible find can get around this with:

IFS=':'

find $PATH -maxdepth 1 -type f -executable -exec basename {} \; | sort

The -maxdepth 1 tells find not to enter any of these subdirectories.
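For a find without -maxdepth, an idiom that is sometimes suggested is to start the search at `dir/.` and prune everything that is not named `.`. The sketch below assumes that your find matches `-name .` against a starting point written as `dir/.`; the function name `list_path_executables_shallow` and its optional argument are hypothetical.

```shell
# Hypothetical helper: print the names of executables directly inside each
# directory of a colon-separated list (default: $PATH), without -maxdepth.
list_path_executables_shallow() (
    IFS=':'
    set -f                          # no globbing while the list is split
    for dir in ${1-$PATH}; do
        [ -d "$dir" ] || continue   # skip empty or missing entries
        # "$dir/." itself is named ".", so it is not pruned and find looks
        # inside it; every entry below it is pruned, so find never descends.
        find "$dir/." ! -name . -prune -type f \
            '(' -perm -u+x -o -perm -g+x -o -perm -o+x ')' \
            -exec basename {} \;
    done | sort
)
```

Each directory gets its own find call here, so a missing directory is skipped rather than producing an error.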

Solution 2

For one thing, $PATH gives a list of directories. If you want to check each file in your $PATH, you'll need to look at each file in each directory, not just check each item in $PATH.

Next, you are using -x to see if the file is executable, but you aren't specifying which file to check. I have written an amended version below:

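IFS=':'
for directory in $PATH; do
    # Look at every entry inside this $PATH directory
    for file in "$directory"/*; do
        # Quote "$file" so that -x always receives exactly one argument
        if [ -x "$file" ]; then
            echo "Executable File: $file"
        else
            echo "Not executable: $file"
        fi
    done
done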

Fox's answer is a much nicer solution, but I just thought you'd be interested in what was wrong with yours.
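One thing to keep in mind with the loop is that -x is also true for most subdirectories, so they get reported as executable files too. A possible variant that adds a -f test is sketched below; the function name `list_executables_loop` and its optional argument are assumptions made for the example.

```shell
# Hypothetical helper: loop over a colon-separated directory list
# (default: $PATH) and print only regular files that are executable.
list_executables_loop() (
    IFS=':'
    set -f                  # no globbing while the list is split ...
    set -- ${1-$PATH}       # ... into the positional parameters
    set +f                  # globbing back on for the /* pattern below
    for directory do
        # An empty entry is treated as the current directory here.
        for file in "${directory:-.}"/*; do
            # -f: regular file (skips subdirectories); -x: execute permission
            if [ -f "$file" ] && [ -x "$file" ]; then
                printf '%s\n' "$file"
            fi
        done
    done
)
```

Because the body runs in a subshell, neither IFS nor the positional parameters of the caller are touched.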

Solution 3

Specifically for bash, you might be able to take advantage of the compgen builtin:

compgen -c lists all available commands, builtins, functions, aliases, etc. (essentially everything that can show up if you press Tab at an empty prompt). compgen -b lists all builtins, similarly -d for directories, -f for files, -a for aliases and so on.

So you could use the output of compgen and abuse which:

$ compgen -c | xargs which -a

/bin/egrep

/bin/fgrep

/bin/grep

/bin/ls

/bin/ping

/usr/bin/time

/usr/bin/[

/bin/echo

/bin/false

/bin/kill

The first five are actually aliases shadowing commands in my case, then we have keywords and builtins, so there seems to be some order to compgen's output.
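If the aliases, keywords and builtins in that list get in the way, bash's `type -P` might be an alternative to which, since it only searches $PATH for a file of that name. This is a bash-only sketch, and the function name `list_commands_on_disk` is made up for the example.

```shell
#!/bin/bash
# Hypothetical helper (bash only): resolve every name that compgen -c
# reports to the file it corresponds to in $PATH, if there is one.
list_commands_on_disk() {
    compgen -c | sort -u | while IFS= read -r name; do
        # type -P forces a $PATH search and prints nothing for names
        # that exist only as keywords, builtins, functions or aliases.
        type -P -- "$name"
    done | sort -u
}
```

Unlike `which -a`, this prints only the first match in $PATH for each name.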


Tyler

Updated on September 18, 2022

Comments

-

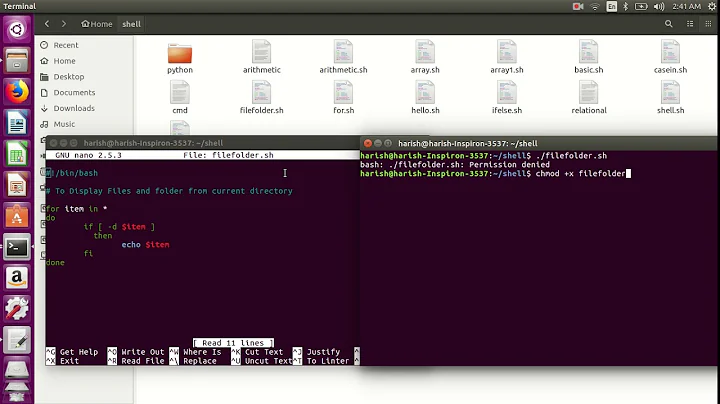

Tyler almost 2 years

Here is what I have so far:

#!/bin/bash
for file in $PATH ; do          # Scanning files in $PATH
    if [ -x ] ; then            # Check if executable
        echo "Executable File"
    else
        echo "Not executable"
    fi
done

Output is "File is executable"

I don't think it is looping through all folders correctly


Overmind Jiang about 7 years

Note that in theory, you could have a PATH=:/bin::/usr/bin: which lists the current directory, ., three times implicitly: before the first colon, between the consecutive colons, and after the trailing colon. So far, the answers would not interpolate the current directory when expanding PATH. It's up to you whether you regard that as a problem or not. Fixing it is decidedly non-trivial.
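To at least see which entries are implicit, something like the following might work. It is a sketch that assumes POSIX-style field splitting as done by bash or dash (other shells may split differently), and the function name `path_dirs` is invented for the example.

```shell
# Hypothetical helper: print one directory per line for a PATH-like
# string (default: $PATH), with "." standing in for each empty entry.
path_dirs() (
    # Append a colon inside a variable first: only the result of an
    # expansion is split, and the extra colon lets a trailing empty
    # entry survive the splitting.
    p=${1-$PATH}:
    IFS=':'
    set -f                  # no globbing while $p is split
    for dir in $p; do
        printf '%s\n' "${dir:-.}"
    done
)
```

Under bash, `path_dirs ':/bin::/usr/bin:'` should print five lines: `.`, `/bin`, `.`, `/usr/bin` and `.`.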

-

-

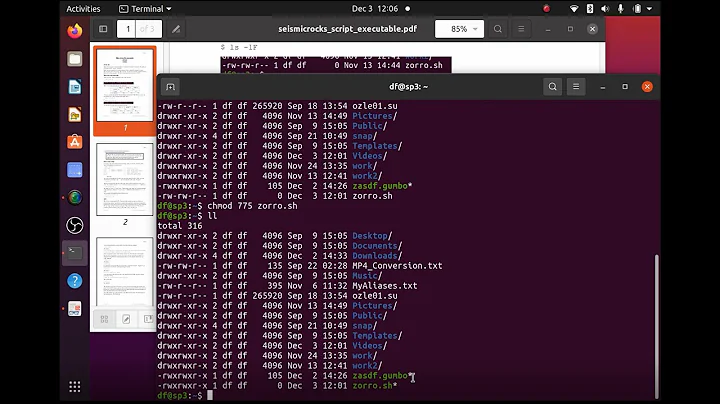

Fox about 7 years

Environment variables don't affect the parent shell, so there should be no need to save and restore IFS

zondo about 7 years

@Kamaraj: You're right. My code originally used $(ls $directory), but I forgot to change everything when I used $directory/*. Thanks.

Tyler about 7 years

Ok awesome, thank you. However, I have 2 questions: 1: Why is the field separator needed? What purpose does it serve? 2: So are "directory" and "file" in the for loops just global variables, and since we are defining the directory it will check for any file? I guess what I am confused about is how "file" and "directory" work, since they aren't predefined. Thanks.


Fox about 7 years

@Tyler PATH looks like /usr/bin:/bin or similar. The field separator causes the shell to split it into two parts: /usr/bin and /bin. directory is then (yes) a variable that refers to each of these parts in turn. Similarly, file is a variable that points to each file in turn in the currently-being-processed directory.

Wildcard about 7 years

@Tyler, file and directory are arbitrarily chosen variable names. You could just as easily do (after setting IFS): for d in $PATH; do for f in "$d"/*; do [ -x "$f" ] && echo "$f"; done; done

Stephen Harris about 7 years

This may report on subdirectory names that exist inside PATH directories; you may need a -f test as well.

Fox about 7 years

@StephenHarris Thanks for that! Added a note with -maxdepth 1 to fix this, along with a note that there is not a POSIX-compatible way to emulate this (that I know of)