Search output of AWK in another file

Solution 1

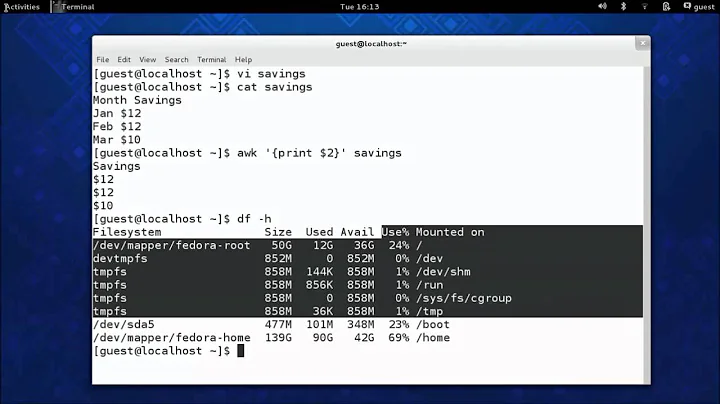

Can be achieved easily with awk as follows:

awk 'NR==FNR{inFileA[$1]; next} ($1 in inFileA)' fileA fileB > write_to_fileC

Result:

seg1 one

seg2 two

seg3 three

Here, awk first reads fileA and stores every value of column 1 as a key in an array named inFileA. It then reads fileB and, whenever the first column of a line matches a key saved from fileA, prints the entire line of fileB.
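To make the two passes concrete, here is a minimal sketch that recreates the sample files from the question in a scratch directory and runs the same awk logic. The scratch directory (workdir) and the array name seen are illustrative choices, not part of the original answer.

```shell
# Recreate the question's sample data in a temporary directory (illustrative path).
workdir=$(mktemp -d)
printf 'seg1 rec1\nseg2 rec2\nseg3 rec3\n' > "$workdir/fileA"
printf 'seg1 one\nseg2 two\nseg3 three\nseg4 four\nseg5 five\n' > "$workdir/fileB"

# NR==FNR is true only while awk reads the first file (fileA):
#   store column 1 as an array key and skip to the next line.
# For the second file (fileB), the bare condition "$1 in seen"
#   prints every line whose column 1 was stored.
awk 'NR==FNR { seen[$1]; next } $1 in seen' "$workdir/fileA" "$workdir/fileB" > "$workdir/fileC"

cat "$workdir/fileC"
```

With the sample data this prints the seg1, seg2 and seg3 lines of fileB. Note that if fileA is empty, NR==FNR also holds while reading fileB, so nothing is printed.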

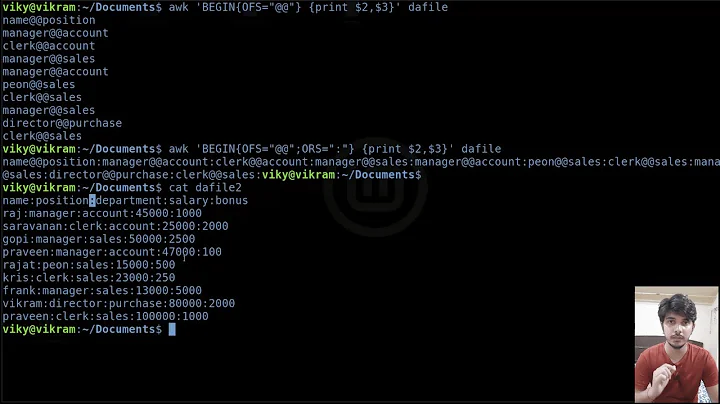

Solution 2

If the columns to be compared are sorted, you can use join:

join -o 2.1,2.2 file1 file2

join matches lines of the two input files on a common sorted field (the first column by default) and prints them. -o 2.1,2.2 restricts the output to the first and second columns of the second input file.
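If the inputs are not already sorted, they can be sorted first. The following is a sketch under that assumption, using deliberately unsorted copies of the sample data; the scratch directory and the .sorted file names are made up for the example.

```shell
# Sample data, deliberately out of order (illustrative scratch directory).
workdir=$(mktemp -d)
printf 'seg3 rec3\nseg1 rec1\nseg2 rec2\n' > "$workdir/fileA"
printf 'seg5 five\nseg1 one\nseg3 three\nseg2 two\nseg4 four\n' > "$workdir/fileB"

# join requires both inputs to be sorted on the join field (field 1 by default).
sort -k1,1 "$workdir/fileA" > "$workdir/fileA.sorted"
sort -k1,1 "$workdir/fileB" > "$workdir/fileB.sorted"

# -o 2.1,2.2 prints only fields 1 and 2 of the second file for each matched key.
join -o 2.1,2.2 "$workdir/fileA.sorted" "$workdir/fileB.sorted" > "$workdir/fileC"

cat "$workdir/fileC"
```

The output comes out in sorted key order rather than in the original order of fileB, which may or may not matter for your data.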

Solution 3

You can use the following one-liner:

cut -f1 fileA | grep -f - fileB > fileC

- the cut command will extract the first column of fileA (assuming tab separation; use -d to specify something else)
- the grep command takes the output of cut and searches fileB for all strings
- the output will be written to fileC
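The sample data in the question looks space-separated, and plain grep -f treats each key as a regular expression that may match inside a longer word. A hedged variant of the one-liner that addresses both points might look like this; the extra lines in fileB (seg10, fooseg1) are invented here to show the difference.

```shell
# Space-separated sample data, plus two near-miss lines (invented for illustration).
workdir=$(mktemp -d)
printf 'seg1 rec1\nseg2 rec2\n' > "$workdir/fileA"
printf 'seg1 one\nseg2 two\nseg10 ten\nfooseg1 other\n' > "$workdir/fileB"

# cut -d ' ' : use a space as the field delimiter instead of the default tab.
# grep -F    : treat each key as a fixed string, not a regular expression.
# grep -w    : only match keys that appear as whole words.
# grep -f -  : read the list of keys from standard input.
cut -d ' ' -f1 "$workdir/fileA" | grep -wFf - "$workdir/fileB" > "$workdir/fileC"

cat "$workdir/fileC"
```

This keeps the seg1 and seg2 lines and drops seg10 and fooseg1. It still searches the whole line rather than only column 1, so a key that appears as a word in a later column would also match.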

Solution 4

You have already received some excellent answers. Just to add to the mix, here's a Perl approach:

$ perl -ane '$i ? $k{$F[0]} && print : { $k{$F[0]}++ }; $i++ if eof' fileA fileB

seg1 one

seg2 two

seg3 three

And a golfed version of αғsнιη's answer:

$ awk 'NR==FNR ? a[$1] : $1 in a' fileA fileB

seg1 one

seg2 two

seg3 three

And here's a kinda convoluted grep solution:

$ grep -Ff <(grep -oP '^\S+' fileA) fileB

seg1 one

seg2 two

seg3 three

Solution 5

An attempt with a bash script. (Remember to make it executable.)

fileA and fileB should exist in the same folder as the script.

This is a general script that works for any two files passed as arguments and writes the matching lines to <fa>_<fb>_match.txt.

To use it, run ./script_name.sh fileA fileB

#!/bin/bash

fa="$1" # first file, whose first column holds the search keys

fb="$2" # second file, containing the raw data to be searched

# A file named <fa>_<fb>_match.txt will be generated.

myarr=($(awk 'NR>1 {print $1}' "$fa")) # NR>1 makes awk skip the first row.

for index in "${!myarr[@]}"; do

#echo $index/${#myarr[@]}

#echo "${myarr[index]}"

text="${myarr[index]}"

grep -w -F -- "$text" "$fb" >> "${fa}_${fb}_match.txt"

done
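As suggested in the comments, the array is not strictly needed: the keys can be piped straight into a while read loop. The following is only a sketch of that idea. The function name match_keys, the header row in the sample fileA, and the scratch directory are assumptions made for the example.

```shell
# match_keys FILE_A FILE_B (hypothetical helper, not from the original answer):
# print every line of FILE_B containing a column-1 key of FILE_A as a whole word.
match_keys() {
    fa=$1
    fb=$2
    # NR>1 skips the first row of FILE_A, which is assumed to be a header.
    awk 'NR>1 { print $1 }' "$fa" | while IFS= read -r key; do
        grep -w -F -- "$key" "$fb"
    done
}

# Sample data with a header row in fileA (invented for illustration).
workdir=$(mktemp -d)
printf 'id value\nseg1 rec1\nseg2 rec2\n' > "$workdir/fileA"
printf 'seg1 one\nseg2 two\nseg3 three\n' > "$workdir/fileB"

match_keys "$workdir/fileA" "$workdir/fileB" > "$workdir/match.txt"
cat "$workdir/match.txt"
```

This still runs grep once per key, so for large inputs the single-pass awk approach from Solution 1 is likely to be much faster.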


ASAD

Updated on September 18, 2022

Comments

-

ASAD over 1 year

I have two files, fileA and fileB.

I have to extract column 1 from fileA with awk '{print $1}'; the output is then searched for in fileB, and the matched records are saved into a new file, fileC. In simple words:

fileA:
seg1 rec1
seg2 rec2
seg3 rec3

I need to retrieve column 1 by using the awk command, and this column 1 is searched for in fileB to retrieve the records:

fileB:
seg1 one
seg2 two
seg3 three
seg4 four
seg5 five

From fileA, column 1 data is extracted, this data is used to search in fileB, and the matched records are saved to a test file. My output should be like this:

fileC:
seg1 one
seg2 two
seg3 three

-

terdon over 7 years

Note that this assumes the fields are tab-separated.

-

terdon over 7 years

This will also match substrings. For example, if fileA contains fooseg1 and file2 contains seg1, it will be considered a match.

-

Wayne_Yux over 7 years

@terdon yes, I know. I mentioned this in the first bullet point ;-)

-

terdon over 7 years

Argh. So you did. Sorry!

-

terdon over 7 years

You could fix that with grep's -w option. You could also do the whole thing in one step, there's no need for the array: awk 'NR>1 {print $1}' "$fa" | while read index; do .... Oh, and you should always quote your variables. That said, using the shell for this sort of thing is really not a good idea. It is hard to write, easy to get wrong and will always be very slow.

-

ankit7540 over 7 years

I am relatively new to shell scripting, and as mentioned by the links, I will study and improve my style. Thanks for the links. Really helpful!

-

terdon over 7 years

You're very welcome. We're all here to learn!