sudo apt-get update gets Connection failed error but can curl to mirror

Solution 1

Firstly I'd like to thank @mchid for adding a lot of valuable information.

As it turns out this was a firewall issue, but one not under my control.

Initially I was only using curl to test connections. When I tried curl www.google.com it worked fine: I got HTML and confirmed it was from Google. When I tried curl http://speedtest.ftp.otenet.gr/files/test1Mb.db it appeared to work, i.e. no errors, and a file was created locally.

However, when I tried wget http://speedtest.ftp.otenet.gr/files/test1Mb.db I got an ERROR 503: Service Unavailable. message. I then tried tweaking HTTP headers etc. with no luck. It then dawned on me that curl might not actually be working, so I checked the downloaded files and, lo and behold, they were all 11 KB in size and contained HTML. That HTML was our intranet's blocked-URL message. Hitting a web endpoint would work, but any file download was being blocked.
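A minimal sketch of how the silent failure could be surfaced with curl itself, assuming the blocking proxy answers curl with the same non-2xx status that wget reported. By default curl saves whatever body the server sends and exits 0, even for an HTTP error page; printing the status code with -w makes the problem visible. The helper names is_http_ok and fetch_checked are hypothetical, used only for illustration.

```shell
#!/bin/sh
# is_http_ok CODE: hypothetical helper, succeeds only for 2xx status codes.
is_http_ok() {
    case "$1" in
        2[0-9][0-9]) return 0 ;;
        *) return 1 ;;
    esac
}

# fetch_checked URL FILE: download URL to FILE and report the HTTP status.
fetch_checked() {
    # -s hides the progress meter, -o names the output file,
    # -w prints the HTTP status code once the transfer ends.
    status=$(curl -s -o "$2" -w '%{http_code}' "$1")
    if is_http_ok "$status"; then
        echo "OK ($status): saved to $2"
    else
        echo "HTTP $status: $2 probably holds an error page, not the file" >&2
        return 1
    fi
}

# Example (needs network access):
# fetch_checked http://speedtest.ftp.otenet.gr/files/test1Mb.db test1Mb.db
```

A shorter alternative is curl --fail, which makes curl exit non-zero for HTTP responses of 400 and above instead of saving the error page.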

So in my case this is all a result of using an ExpressRoute to connect an Azure VNet to on-prem network and the restrictions our IT group placed around that.

So the morals of this story are:

- After a week of research: if you have a similar issue, 98% of the time it's some kind of firewall issue, 1% of the time it's DNS, and the remaining 1% calls for nuking it from orbit.

- curl may lie to you; use wget.

- Just because the Azure connectivity test tool says you can access a URI/port doesn't mean you actually can for real-world usage.

- Test by downloading actual files with wget, not just by hitting endpoints.

- Make sure the test files you download are valid. If the file size isn't what you expect, inspect the contents.

- Talk to as many local IT people as you can. In my case I had to log a support ticket pretty high up in our org before anyone even knew about the restrictions.
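The last few checks can be sketched as a small script. This is only an illustration under stated assumptions: looks_like_html and check_download are hypothetical helper names, the HTML test is a rough heuristic on the first 512 bytes, and the expected size is something you have to know in advance for your own test file.

```shell
#!/bin/sh
# looks_like_html FILE: succeeds if the start of FILE resembles an HTML page.
looks_like_html() {
    head -c 512 "$1" | LC_ALL=C grep -qiE '<!doctype html|<html'
}

# check_download FILE EXPECTED_BYTES: hypothetical helper combining both checks.
check_download() {
    actual=$(wc -c < "$1" | tr -d ' ')
    if looks_like_html "$1"; then
        echo "$1 looks like an HTML page (possibly a block message)" >&2
        return 1
    fi
    if [ "$actual" -ne "$2" ]; then
        echo "$1 is $actual bytes, expected $2" >&2
        return 1
    fi
    echo "$1 looks plausible ($actual bytes)"
}

# Example: check_download test1Mb.db <expected size in bytes>
```

In the situation described above, the first check would have flagged the 11 KB files immediately, since they contained the intranet's block page rather than the requested data.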

Solution 2

You can use apt-fast to install packages instead of apt or apt-get. apt-fast is a wrapper script which uses the aria2c command to download packages from the mirrors.

First, make sure the following dependencies are installed before you begin:

libc-ares2

libc6

libgcc1

libgmp10

libgnutls30

libnettle6

libsqlite3-0

libstdc++6

libxml2

zlib1g

Please run the following command to list the installed packages:

for i in libc-ares2 libc6 libgcc1 libgmp10 libgnutls30 libnettle6 libsqlite3-0 libstdc++6 libxml2 zlib1g; do dpkg -l | grep "^ii[[:space:]]*$i:"; done | awk '{print $2}'

If any of the dependencies is missing from the output, do not proceed!
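As an alternative sketch of the same check, the loop below asks dpkg-query about each package and prints only the ones that are not installed, which can be easier to read than comparing two lists by eye. The function name list_missing is hypothetical; the package list is the one given above.

```shell
#!/bin/sh
# list_missing PKG...: print each package dpkg does not report as installed.
list_missing() {
    for pkg in "$@"; do
        # ${db:Status-Abbrev} begins with "ii" for a fully installed package;
        # for unknown packages dpkg-query prints nothing on stdout.
        if ! dpkg-query -W -f='${db:Status-Abbrev}\n' "$pkg" 2>/dev/null \
                | grep -q '^ii'; then
            echo "$pkg"
        fi
    done
}

missing=$(list_missing libc-ares2 libc6 libgcc1 libgmp10 libgnutls30 \
    libnettle6 libsqlite3-0 libstdc++6 libxml2 zlib1g)

if [ -n "$missing" ]; then
    echo "Missing dependencies, do not proceed:"
    echo "$missing"
else
    echo "All dependencies are installed."
fi
```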

Next, to install aria2 please run the following commands:

curl -L -O http://mirrors.kernel.org/ubuntu/pool/universe/a/aria2/aria2_1.33.1-1_amd64.deb

sudo dpkg -i aria2_1.33.1-1_amd64.deb
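Since curl can silently save an error page instead of the package, it may be worth confirming that the downloaded file really is a Debian archive before handing it to dpkg -i. A .deb file is an ar archive, so it starts with the magic string "!<arch>". The sketch below checks just that; is_deb_archive is a hypothetical helper name, and the check says nothing about whether the package contents are intact.

```shell
#!/bin/sh
# is_deb_archive FILE: succeeds if FILE starts with the ar magic "!<arch>".
is_deb_archive() {
    [ "$(head -c 7 "$1" 2>/dev/null)" = '!<arch>' ]
}

deb=aria2_1.33.1-1_amd64.deb
if [ -f "$deb" ]; then
    if is_deb_archive "$deb"; then
        echo "$deb has the expected archive header"
    else
        echo "$deb is not a .deb archive; inspect its contents" >&2
    fi
fi
```

For a more thorough look, dpkg-deb --info on the file prints the package metadata and fails if the archive cannot be read.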

Finally, to install apt-fast, run the following commands:

curl -L -O https://launchpad.net/~apt-fast/+archive/ubuntu/stable/+files/apt-fast_1.9.8-1~ubuntu18.04.1_all.deb

sudo dpkg -i apt-fast_1.9.8-1~ubuntu18.04.1_all.deb

Follow the on-screen instructions to finish the installation.

The commands for apt-fast are the same commands used for apt-get:

sudo apt-fast update

sudo apt-fast upgrade

sudo apt-fast install <package name>

And if you normally use sudo apt full-upgrade or sudo apt dist-upgrade, use the following command:

sudo apt-fast dist-upgrade

For more information on apt-fast see the following links:

Also, here is a link to the download page for aria2:

Please post any errors. Thanks!


Geordie

Updated on September 18, 2022

Comments

-

Geordie almost 2 years

I have an Ubuntu 18.04.2 LTS VM running on Azure without a public IP due to security restrictions. I can use curl to download files over HTTP, though FTP doesn't work.

These are the errors I'm getting:

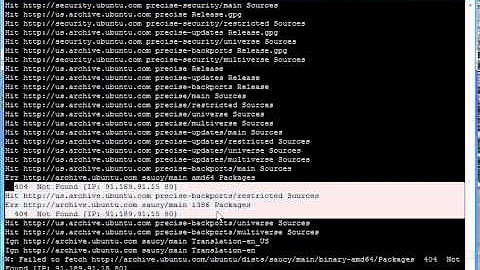

sudo apt-get update
Err:1 http://mirror.cs.unm.edu/archive bionic InRelease
  Connection failed [IP: 64.106.20.76 80]
Err:2 http://mirror.cs.unm.edu/archive bionic-updates InRelease
  Connection failed [IP: 64.106.20.76 80]
Err:3 http://mirror.cs.unm.edu/archive bionic-backports InRelease
  Connection failed [IP: 64.106.20.76 80]
Err:4 http://mirror.cs.unm.edu/archive bionic-security InRelease
  Connection failed [IP: 64.106.20.76 80]
Reading package lists... Done
W: Failed to fetch http://mirror.cs.unm.edu/archive/dists/bionic/InRelease Connection failed [IP: 64.106.20.76 80]
W: Failed to fetch http://mirror.cs.unm.edu/archive/dists/bionic-updates/InRelease Connection failed [IP: 64.106.20.76 80]
W: Failed to fetch http://mirror.cs.unm.edu/archive/dists/bionic-backports/InRelease Connection failed [IP: 64.106.20.76 80]
W: Failed to fetch http://mirror.cs.unm.edu/archive/dists/bionic-security/InRelease Connection failed [IP: 64.106.20.76 80]
W: Some index files failed to download. They have been ignored, or old ones used instead.

I picked http://mirror.cs.unm.edu since, according to here, it only supports HTTP, which in theory should rule out the FTP problem.

I can, however, access this mirror with curl fine:

curl -O http://mirror.cs.unm.edu/archive/dists
  % Total    % Received % Xferd  Average Speed   Time    Time     Time  Current
                                 Dload  Upload   Total   Spent    Left  Speed
100 10616  100 10616    0     0  61011      0 --:--:-- --:--:-- --:--:-- 61011

I've tried several mirrors with no luck. AFAIK there are no firewall, proxy or antivirus settings interfering with updating. All outgoing ports are open. I also tried all the suggestions from this question.

Edit: Regarding DNS settings, as this VM is connected to an intranet with an ExpressRoute there's some kind of magic happening.

The first couple of lines of /etc/resolv.conf read:

# This file is managed by man:systemd-resolved(8). Do not edit.
#
# This is a dynamic resolv.conf file for connecting local clients to the
# internal DNS stub resolver of systemd-resolved. This file lists all
# configured search domains.
#
# Run "systemd-resolve --status" to see details about the uplink DNS servers
# currently in use.
#
# Third party programs must not access this file directly, but only through the
# symlink at /etc/resolv.conf. To manage man:resolv.conf(5) in a different way,
# replace this symlink by a static file or a different symlink.
#
# See man:systemd-resolved.service(8) for details about the supported modes of
# operation for /etc/resolv.conf.
nameserver 127.0.0.53
options edns0
search reddog.microsoft.com

How can I further debug this problem?

-

mchid almost 5 years

Also, please try using the aria2c command instead of curl and tell me if this works. If aria2c works, you can use apt-fast instead of apt. The package name is aria2 but the command is aria2c.

Geordie almost 5 years

Yup, same error. I also tried archive.ubuntu.com/ubuntu and the original Azure mirror. Is there some IP address validation etc. that occurs when Ubuntu is attempting to fetch packages?

-

mchid almost 5 years

I don't know of any address validation. I do know there is package verification, but that is not the error you are getting here.

Geordie almost 5 years

Is there a good resource for manually installing aria2? The problem also stops me getting any packages onto the VM. I tried downloading the package with curl but bzip2 says it's not a valid bz2 file.

-

Geordie almost 5 years

Added more info in the main post re DNS.

-

mchid almost 5 years

Okay, I have a workaround answer for you below. Just make sure that the dependencies are installed before installing the downloaded deb of aria2. If the dependencies are not installed, please let me know and maybe we can figure something else out.

-


mchid almost 5 years

If you would like to uninstall apt-fast, use the following command: dpkg -P apt-fast

Geordie almost 5 years

When I try to list the dependencies I get "E: No packages found"

-

mchid almost 5 years

@Geordie Thanks, I have updated the command to list the dependencies.