Tuning Linux + HAProxy

Have you tried a more reliable test tool than AB (ApacheBench)? You may well find that AB itself is hitting a ceiling rather than HAProxy. Consider Apache JMeter or a proper stateful load-testing application.

Keep-alives on Apache are probably masking or skewing the AB results, giving you the discrepancy you see.

You should post the output of netstat taken during a load test so we can see which connection states are in use and tune the settings accordingly.
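As a rough sketch of how to summarize that output, the following Python snippet tallies connections per TCP state from `netstat -ant` style text. The sample text is made up for illustration, and the parsing assumes the usual Linux column layout, with the state in the sixth column.

```python
from collections import Counter

# Made-up sample in the usual Linux `netstat -ant` layout (illustration only).
SAMPLE = """\
Active Internet connections (servers and established)
Proto Recv-Q Send-Q Local Address           Foreign Address         State
tcp        0      0 0.0.0.0:80              0.0.0.0:*               LISTEN
tcp        0      0 10.0.0.1:80             10.0.0.2:51234          TIME_WAIT
tcp        0      0 10.0.0.1:80             10.0.0.2:51235          TIME_WAIT
tcp        0      0 10.0.0.1:80             10.0.0.2:51236          ESTABLISHED
"""


def count_tcp_states(netstat_output: str) -> Counter:
    """Count connections per TCP state in `netstat -ant` style output."""
    states = Counter()
    for line in netstat_output.splitlines():
        fields = line.split()
        # Data rows start with the protocol (tcp/tcp6); the state is column 6.
        if len(fields) >= 6 and fields[0].startswith("tcp"):
            states[fields[5]] += 1
    return states


if __name__ == "__main__":
    for state, count in count_tcp_states(SAMPLE).most_common():
        print(f"{state:<12} {count}")
```

In practice you would feed it the real output captured while the benchmark is running. A large TIME_WAIT count relative to ESTABLISHED suggests that connections are being opened and closed for every request.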

Nevertheless, you should be able to increase performance slightly by adding the following options to your defaults section:

option tcp-smart-accept

option tcp-smart-connect

There is a really good discussion that mirrors some of your concerns here: http://www.mail-archive.com/[email protected]/msg07156.html

It is a bit too tricky to remotely diagnose. But if Apache is capable of hitting those levels of reqs/s it is unlikely to be a kernel level or TCP/IP stack setting. So your focus would be better served looking at your HAProxy configuration.

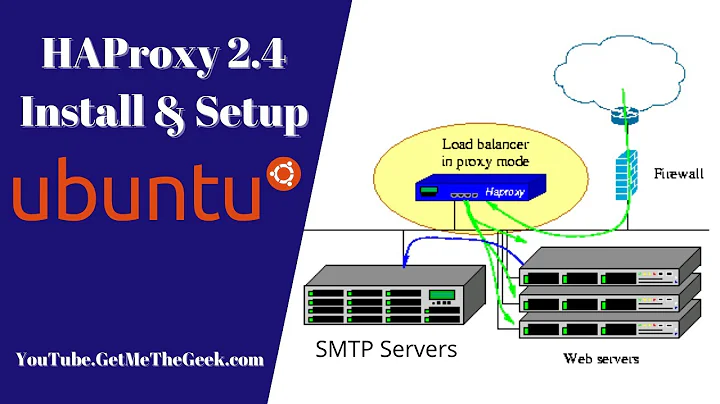

Related videos on Youtube

react

Updated on September 18, 2022

Comments

-

react over 1 year

I'm currently rolling out HAProxy on CentOS 6, which will send requests to some Apache HTTPD servers, and I'm having issues with performance. I've spent the last couple of days googling and still can't seem to get past 10k connections/sec consistently when benchmarking (sometimes I do get 30k/sec, though).

I've pinned the IRQs of the TX/RX queues for both the internal and external NICs to separate CPU cores and made sure HAProxy is pinned to its own core.

I've also made the following adjustments to sysctl.conf:

    # Max open file descriptors
    fs.file-max = 331287
    # TCP Tuning
    net.ipv4.tcp_tw_reuse = 1
    net.ipv4.ip_local_port_range = 1024 65023
    net.ipv4.tcp_max_syn_backlog = 10240
    net.ipv4.tcp_max_tw_buckets = 400000
    net.ipv4.tcp_max_orphans = 60000
    net.ipv4.tcp_synack_retries = 3
    net.core.somaxconn = 40000
    net.ipv4.tcp_rmem = 4096 8192 16384
    net.ipv4.tcp_wmem = 4096 8192 16384
    net.ipv4.tcp_mem = 65536 98304 131072
    net.core.netdev_max_backlog = 40000
    net.ipv4.tcp_tw_reuse = 1

If I use AB to hit a webserver directly I easily get 30k/s connections. If I stop the webservers and use AB to hit HAProxy then I get 30k/s connections, but obviously that's useless.
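For a sense of scale, here is a back-of-the-envelope sketch of what the ip_local_port_range value in the settings above implies. It assumes Linux's 60-second TIME_WAIT period and that no TIME_WAIT socket is reused, which is the worst case. With tcp_tw_reuse = 1 the kernel may reuse such sockets for new outgoing connections, so treat the result as a rough lower bound and not a measured limit. The function names are hypothetical.

```python
# Linux keeps a closed socket in TIME_WAIT for 60 seconds (fixed in the kernel).
TIME_WAIT_SECONDS = 60


def ephemeral_ports(low: int, high: int) -> int:
    """Number of local ports in an inclusive ip_local_port_range."""
    return high - low + 1


def worst_case_new_connections_per_second(low: int, high: int,
                                          time_wait: int = TIME_WAIT_SECONDS) -> float:
    """Sustained outgoing connections/sec to ONE destination ip:port if every
    local port had to sit out a full TIME_WAIT period before being used again.
    Assumes no TIME_WAIT reuse, so tcp_tw_reuse can lift this figure."""
    return ephemeral_ports(low, high) / time_wait


if __name__ == "__main__":
    low, high = 1024, 65023  # values from the sysctl settings above
    print("ports available:", ephemeral_ports(low, high))
    print("worst-case conn/s per destination:",
          round(worst_case_new_connections_per_second(low, high)))
```

The range 1024 to 65023 gives 64000 local ports, which works out to roughly 1,067 new connections per second per destination address and port when nothing is reused. The limit applies per destination, so spreading traffic over several backend servers raises it accordingly.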

I've also disabled iptables for now since I read that nf_conntrack can slow everything down, no change. I've also disabled the irqbalance service.

The fact that I can hit each individual device with 30k/s makes me believe the tuning of the servers is OK and that it must be some HAProxy config?

Here's the config, which I've built from reading tuning articles, etc.: http://pastebin.com/zsCyAtgU

The server is a dual Xeon CPU E5-2620 (6 cores) with 32GB of RAM, running CentOS 6.2 x64. The private and public interfaces are on separate NICs.

Anyone have any ideas? Thanks.

-

Khaled almost 12 years: Did you try using the option nbproc? You can specify the number of processes to be forked. For example, you can fork 4 processes if you have 4 CPU cores. You may get higher throughput.

-

react almost 12 years: No, I haven't, but it's my understanding that I shouldn't have to? Also, I believe I lose the ability to have stats, etc. if I fork multiple processes. The CPU that the process is tied to is at 95% when I'm benchmarking; I forgot to mention that previously.

-

Kyle Brandt almost 12 years: @react: What flags did you make haproxy with?

-

react almost 12 years: @KyleBrandt TARGET=linux26 CPU=x86_64 USE_PCRE=1

-

react almost 12 years: Forgot to mention I have keep-alive turned off on both HAProxy and Apache HTTPD. I agree that AB probably isn't the best, but I'm more concerned about the variance in the results I'm getting. Netstat basically shows 22244 connections in TIME_WAIT when I run the following AB command: ab -n 100000 -c 100 -k host

I added those options to my HAProxy config but can't see a noticeable difference. Thanks anyway.

-

Ben Lessani almost 12 years: Is it a VPS you are using or a dedicated machine?

-

react almost 12 years: A dedicated machine.

-

Ben Lessani almost 12 years: Well, it's a bit too tricky to diagnose remotely. But if Apache is capable of hitting those levels of reqs/s, it's unlikely to be a kernel-level or TCP/IP stack setting. So your focus would be better served looking at your HAProxy configuration.

-

react almost 12 years: Actually, I think I'm seeing the expected performance. The CPU that HAProxy is bound to is hitting 100% usage, and I found a posting from the author where he says something along the lines of "you're getting half the performance because you're now going through a 2nd device". It turns out I was getting 30k/s from HAProxy only when the backend servers were being marked as down and HAProxy was just returning 503s really quickly.
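One way to catch this kind of false positive earlier is to look at how many responses were errors, not just at the request rate. The sketch below pulls the "Complete requests", "Non-2xx responses" and "Requests per second" lines out of an AB report and estimates the rate of successful requests. The sample report is made up for illustration, and the helper name `summarize_ab` is hypothetical.

```python
import re

# Made-up excerpt of an `ab` report (illustration only).
SAMPLE_REPORT = """\
Complete requests:      100000
Failed requests:        0
Non-2xx responses:      100000
Requests per second:    30000.00 [#/sec] (mean)
"""


def summarize_ab(report: str) -> dict:
    """Extract request counts from an ab report and estimate the 2xx rate."""
    def grab(pattern, cast, default=None):
        match = re.search(pattern, report, re.MULTILINE)
        return cast(match.group(1)) if match else default

    complete = grab(r"^Complete requests:\s+(\d+)", int)
    rps = grab(r"^Requests per second:\s+([\d.]+)", float)
    # ab only prints this line when at least one response was not 2xx.
    non_2xx = grab(r"^Non-2xx responses:\s+(\d+)", int, 0)
    if complete is None or rps is None:
        raise ValueError("not a recognizable ab report")

    ok_fraction = (complete - non_2xx) / complete if complete else 0.0
    return {
        "complete": complete,
        "non_2xx": non_2xx,
        "requests_per_second": rps,
        "ok_fraction": ok_fraction,
        "ok_requests_per_second": rps * ok_fraction,
    }


if __name__ == "__main__":
    for key, value in summarize_ab(SAMPLE_REPORT).items():
        print(f"{key}: {value}")
```

If the successful rate is near zero while the raw rate looks impressive, the benchmark is most likely measuring how fast the proxy can return errors.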