Update a Deployment image in Kubernetes

Solution 1

When you are not changing the container image name or tag, you can simply scale your application to 0 and back to its original size with something like:

kubectl scale --replicas=0 deployment application

kubectl scale --replicas=1 deployment application

As already mentioned in the comments, this requires imagePullPolicy: Always in your container configuration.
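If the Deployment does not set that pull policy yet, one way to add it without hand-editing the manifest is a strategic merge patch. This is a minimal sketch, assuming the container is named app-container (the name used in the set image command of this answer) and the Deployment is named application. Note that changing the pod template this way triggers a rollout by itself.

```shell
#!/usr/bin/env bash
# Sketch: set imagePullPolicy to Always on one container of a Deployment.
# "application" and "app-container" are example names; substitute your own.
set_pull_policy_always() {
  local deployment="$1" container="$2" patch
  # Strategic merge patch (kubectl's default patch type): the containers
  # list is merged by "name", so only the matching container is changed.
  patch=$(printf '{"spec":{"template":{"spec":{"containers":[{"name":"%s","imagePullPolicy":"Always"}]}}}}' "$container")
  kubectl patch deployment "$deployment" -p "$patch"
}
```

Usage: set_pull_policy_always application app-container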

When changing the image, I found this to be the most straightforward way to update the deployment:

kubectl set image deployment/application app-container=$IMAGE

Not changing the image has the downside that you have nothing to fall back to if something goes wrong, so I would not suggest using this outside of a development environment.
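When you do change the tag, the previous revision stays in the Deployment's rollout history, which is what makes falling back possible. A minimal sketch, assuming the deployment/container names from this answer and an arbitrary 120-second timeout: set the new image, wait for the rollout, and undo it if it does not complete in time.

```shell
#!/usr/bin/env bash
# Sketch: update the image, wait for the rollout, roll back on failure.
# Deployment/container names follow this answer; the timeout is an arbitrary choice.
deploy_or_rollback() {
  local image="$1"
  kubectl set image deployment/application app-container="$image" || return 1
  if ! kubectl rollout status deployment/application --timeout=120s; then
    # The new pods did not become available in time: revert to the previous revision.
    kubectl rollout undo deployment/application
    return 1
  fi
}
```

Usage: deploy_or_rollback "$IMAGE". The function returns non-zero when it had to roll back, so a CI job can fail on it.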

Edit (small bonus): restoring the original replica count afterwards could look something like:

replica_spec=$(kubectl get deployment/application -o jsonpath='{.spec.replicas}')

kubectl scale --replicas=0 deployment application

kubectl scale --replicas=$replica_spec deployment application

Cheers

Solution 2

If you have at least version 1.15, use the following:

kubectl rollout restart deployment/deployment-name

Read more about it in the kubectl rollout restart documentation.
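Under the hood, kubectl rollout restart adds a kubectl.kubernetes.io/restartedAt timestamp annotation to the pod template. Per the documentation note quoted in the comments, any change to .spec.template triggers a normal rolling update. On an older kubectl the same effect can be sketched with a manual patch; the annotation key redeployed-at is a hypothetical name, and any key would do.

```shell
#!/usr/bin/env bash
# Sketch for kubectl older than 1.15 (no "rollout restart"):
# bump a pod-template annotation so .spec.template changes and the
# Deployment performs a rolling update. "redeployed-at" is an arbitrary key.
force_rollout() {
  local deployment="$1" now patch
  now=$(date -u +%Y-%m-%dT%H:%M:%SZ)
  patch=$(printf '{"spec":{"template":{"metadata":{"annotations":{"redeployed-at":"%s"}}}}}' "$now")
  kubectl patch deployment "$deployment" -p "$patch"
}
```

Usage: force_rollout application, then kubectl rollout status deployment/application to follow the rolling update.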


Comments


Yuval Simhon (almost 2 years ago): I'm very new to Kubernetes and am using k8s v1.4, Minikube v0.15.0 and the Spotify Maven Docker plugin.

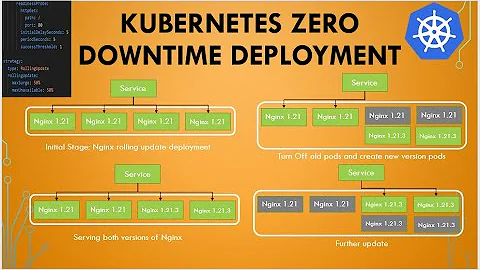

The build process of my project creates a Docker image and pushes it directly into Minikube's Docker engine. The pods are created by a Deployment I created (using a ReplicaSet), and its strategy is set to type: RollingUpdate. I saw this in the documentation:

Note: a Deployment’s rollout is triggered if and only if the Deployment’s pod template (i.e. .spec.template) is changed.

I'm looking for an easy way or workaround to automate this flow: build triggered > new Docker image pushed (without changing the version) > Deployment updates the pod > Service exposes the new pod.

Anirudh Ramanathan (over 7 years ago): If you aren't changing the image at all, there is no way to ensure that each pod gets the new image unless you set imagePullPolicy: Always, kill each pod, and let the Deployment recreate it. However, if you are creating a new Docker image every time, it would make sense to update the tag as well.

Yuval Simhon (over 7 years ago): @AnirudhRamanathan Since I'm not creating a "new" image every time, just updating the existing one, I'll go with the first approach. Is there a way to kill the old pods automatically?



Yuval Simhon (over 7 years ago): imagePullPolicy: Always does not work with local images, so for now I'm manually deleting the pods with a specific label, and the ReplicaSet then recreates them with the updated image. I'm wondering if there is a way to do this automatically.
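The manual step described here can be scripted so a build job runs it right after the image is rebuilt. A minimal sketch, assuming the pods carry a label such as app=application (hypothetical; use your Deployment's selector). Deleting all matching pods at once causes a short outage, unlike a rolling update.

```shell
#!/usr/bin/env bash
# Sketch: delete a Deployment's pods by label so its ReplicaSet recreates them.
# The new pods use the rebuilt image if it is present on the node under the same tag.
# The selector (e.g. "app=application") is a hypothetical example.
recreate_pods() {
  local selector="$1"
  kubectl delete pods -l "$selector"
}
```

Usage: recreate_pods app=application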


Yuval Simhon (over 7 years ago): Is there a way to do the same with the Kubernetes API?
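Through the API, the same label-based delete maps to a DELETE request on the pods collection with a labelSelector query parameter. A minimal sketch using curl through kubectl proxy, which listens on 127.0.0.1:8001 by default; the namespace and selector passed in are assumptions.

```shell
#!/usr/bin/env bash
# Sketch: delete all pods matching a label selector via the Kubernetes REST API.
# Assumes "kubectl proxy" is running locally on its default port 8001,
# so curl needs no extra authentication.
delete_pods_via_api() {
  local namespace="$1" selector="$2"
  # -G appends the url-encoded data as a query string; -X switches the method to DELETE.
  curl -sS -G -X DELETE \
    --data-urlencode "labelSelector=${selector}" \
    "http://127.0.0.1:8001/api/v1/namespaces/${namespace}/pods"
}
```

Usage: start kubectl proxy in another terminal, then run delete_pods_via_api default app=application.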


pagid (over 7 years ago): Hello again, see: stackoverflow.com/questions/41792851/…


B. Stucke (over 3 years ago): Won't there be downtime for your app if you scale down and then up? If so, how can I avoid it?


pagid (over 3 years ago): Yes, there will be downtime; that's an answer from 2017. There are other ways to trigger the update, e.g.

kubectl rollout restart ...

Martin Melka (over 3 years ago): In my case we don't change the image version when deploying dev/staging environments from the same branch; the tag/version is just the branch slug. That makes cleanup easier and saves space in the registry. But I'm all ears for better approaches :)


santiago arizti (about 3 years ago): I recommend using the git commit hash (or, as I do, its first 8 characters) as the image tag. That way you can find the corresponding commit very easily, and you force k8s to pull the correct version. Note: if only your application's source changes between images, the registry stores only the changed layers (roughly the size of the source), not another full copy of the image.
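A sketch of that tagging scheme, assuming a hypothetical repository name registry.example.com/app. git rev-parse --short=8 prints at least 8 characters of the commit hash, more if needed to keep it unambiguous.

```shell
#!/usr/bin/env bash
# Sketch: build an image reference tagged with the current git commit.
# The repository name passed in (e.g. registry.example.com/app) is hypothetical.
image_for_head() {
  local repo="$1" sha
  sha=$(git rev-parse --short=8 HEAD) || return 1
  echo "${repo}:${sha}"
}
```

Usage with the set image command from the first answer: kubectl set image deployment/application app-container="$(image_for_head registry.example.com/app)"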


Melroy van den Berg (over 2 years ago): For me it's actually

kubectl rollout restart deploy <name>