ValueError: non-broadcastable output operand with shape (3,1) doesn't match the broadcast shape (3,4)

Change self.synaptic_weights += adjustment to

self.synaptic_weights = self.synaptic_weights + adjustment

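self.synaptic_weights has a shape of (3,1) and adjustment has a shape of (3,4). The two shapes are broadcastable, so self.synaptic_weights + adjustment is valid and produces a new array of shape (3,4). The in-place += is different: it has to store that (3,4) result in the existing (3,1) array, and numpy cannot resize the output operand, so it raises the ValueError.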

import numpy as np

a = np.ones((3,1), dtype=int)

b = np.random.randint(1,10, (3,4))

>>> a

array([[1],

[1],

[1]])

>>> b

array([[8, 2, 5, 7],

[2, 5, 4, 8],

[7, 7, 6, 6]])

>>> a + b

array([[9, 3, 6, 8],

[3, 6, 5, 9],

[8, 8, 7, 7]])

>>> b += a

>>> b

array([[9, 3, 6, 8],

[3, 6, 5, 9],

[8, 8, 7, 7]])

>>> a

array([[1],

[1],

[1]])

>>> a += b

Traceback (most recent call last):

File "<pyshell#24>", line 1, in <module>

a += b

ValueError: non-broadcastable output operand with shape (3,1) doesn't match the broadcast shape (3,4)

The same error occurs when calling numpy.add with a as the output array:

>>> np.add(a,b, out = a)

Traceback (most recent call last):

File "<pyshell#31>", line 1, in <module>

np.add(a,b, out = a)

ValueError: non-broadcastable output operand with shape (3,1) doesn't match the broadcast shape (3,4)

>>>
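If writing into a preallocated array is still wanted, one option is to give np.add an output array that already has the broadcast shape. This is a minimal sketch, assuming NumPy 1.20 or later (for np.broadcast_shapes); the names result_shape and out are illustrative only:

```python
import numpy as np

a = np.ones((3, 1), dtype=int)
b = np.arange(12).reshape(3, 4)

# Shape that a + b will have after broadcasting.
result_shape = np.broadcast_shapes(a.shape, b.shape)

# Preallocate an output array of that shape; a itself is too small to hold it.
out = np.empty(result_shape, dtype=np.result_type(a, b))
np.add(a, b, out=out)

print(out.shape)
```

The output operand is never resized, so it has to be at least as large as the broadcast result before the call.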

Instead, a new array has to be created and the name a rebound to it:

>>> a = a + b

>>> a

array([[10, 4, 7, 9],

[ 4, 7, 6, 10],

[ 9, 9, 8, 8]])

>>>
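Rebinding makes the error go away, but it also leaves the weights with shape (3,4), which may not be what the network intends. Tracing the shapes through the train method from the question suggests that the (3,4) adjustment comes from training_set_outputs having shape (1,4). The sketch below follows that trace, assuming the targets were meant to be a (4,1) column with one value per training example; the helper adjustment_shape is hypothetical and not part of the original code:

```python
import numpy as np


def sigmoid(x):
    return 1 / (1 + np.exp(-x))


def adjustment_shape(inputs, targets, weights):
    """Return the shape of the weight adjustment for one training step."""
    output = sigmoid(np.dot(inputs, weights))   # shape (4, 1)
    error = targets - output                    # broadcasts to (4, 4) if targets is (1, 4)
    adjustment = np.dot(inputs.T, error * output * (1 - output))
    return adjustment.shape


inputs = np.array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
weights = np.zeros((3, 1))

row_targets = np.array([[0, 1, 1, 0]])   # shape (1, 4), as in the question
col_targets = row_targets.T              # shape (4, 1), the assumed intent

print(adjustment_shape(inputs, row_targets, weights))  # (3, 4)
print(adjustment_shape(inputs, col_targets, weights))  # (3, 1)
```

With column-shaped targets the adjustment matches the (3,1) weights, so the original += would work unchanged.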


dpopp783

Incoming Computer Science major in Case Western Reserve University's class of 2024.

Updated on July 18, 2022

Comments

-

dpopp783 almost 2 years

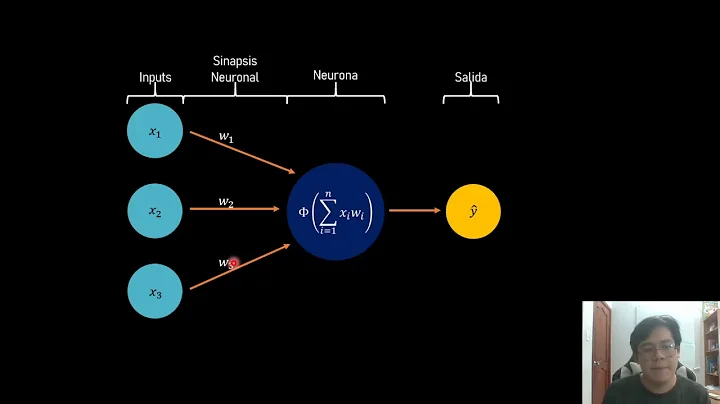

I recently started following along with Siraj Raval's Deep Learning tutorials on YouTube, but an error came up when I tried to run my code. The code is from the second episode of his series, How To Make A Neural Network. When I ran the code I got this error:

Traceback (most recent call last):
  File "C:\Users\dpopp\Documents\Machine Learning\first_neural_net.py", line 66, in <module>
    neural_network.train(training_set_inputs, training_set_outputs, 10000)
  File "C:\Users\dpopp\Documents\Machine Learning\first_neural_net.py", line 44, in train
    self.synaptic_weights += adjustment
ValueError: non-broadcastable output operand with shape (3,1) doesn't match the broadcast shape (3,4)

I checked multiple times with his code and couldn't find any differences, and even tried copying and pasting his code from the GitHub link. This is the code I have now:

    from numpy import exp, array, random, dot


    class NeuralNetwork():
        def __init__(self):
            # Seed the random number generator, so it generates the same numbers
            # every time the program runs.
            random.seed(1)

            # We model a single neuron, with 3 input connections and 1 output connection.
            # We assign random weights to a 3 x 1 matrix, with values in the range -1 to 1
            # and mean 0.
            self.synaptic_weights = 2 * random.random((3, 1)) - 1

        # The Sigmoid function, which describes an S shaped curve.
        # We pass the weighted sum of the inputs through this function to
        # normalise them between 0 and 1.
        def __sigmoid(self, x):
            return 1 / (1 + exp(-x))

        # The derivative of the Sigmoid function.
        # This is the gradient of the Sigmoid curve.
        # It indicates how confident we are about the existing weight.
        def __sigmoid_derivative(self, x):
            return x * (1 - x)

        # We train the neural network through a process of trial and error.
        # Adjusting the synaptic weights each time.
        def train(self, training_set_inputs, training_set_outputs, number_of_training_iterations):
            for iteration in range(number_of_training_iterations):
                # Pass the training set through our neural network (a single neuron).
                output = self.think(training_set_inputs)

                # Calculate the error (The difference between the desired output
                # and the predicted output).
                error = training_set_outputs - output

                # Multiply the error by the input and again by the gradient of the Sigmoid curve.
                # This means less confident weights are adjusted more.
                # This means inputs, which are zero, do not cause changes to the weights.
                adjustment = dot(training_set_inputs.T, error * self.__sigmoid_derivative(output))

                # Adjust the weights.
                self.synaptic_weights += adjustment

        # The neural network thinks.
        def think(self, inputs):
            # Pass inputs through our neural network (our single neuron).
            return self.__sigmoid(dot(inputs, self.synaptic_weights))


    if __name__ == '__main__':
        # Initialize a single neuron neural network
        neural_network = NeuralNetwork()

        print("Random starting synaptic weights:")
        print(neural_network.synaptic_weights)

        # The training set. We have 4 examples, each consisting of 3 input values
        # and 1 output value.
        training_set_inputs = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
        training_set_outputs = array([[0, 1, 1, 0]])

        # Train the neural network using a training set
        # Do it 10,000 times and make small adjustments each time
        neural_network.train(training_set_inputs, training_set_outputs, 10000)

        print("New Synaptic weights after training:")
        print(neural_network.synaptic_weights)

        # Test the neural net with a new situation
        print("Considering new situation [1, 0, 0] -> ?:")
        print(neural_network.think(array([[1, 0, 0]])))

Even after copying and pasting the same code that worked in Siraj's episode, I'm still getting the same error.

I just started looking into artificial intelligence, and I don't understand what the error means. Could someone please explain what it means and how to fix it? Thanks!

-

wwii over 6 years


Ferdz over 5 years: Welcome to Stack Overflow! Please take some time to format your answers before posting so that they are easy for everyone to read. For example, you can use backticks (`) to format inline code.

wwii over 4 years: Doing this solves the ValueError the OP was/is getting? ... OP is using the name training_set_outputs, not training_outputs.