Very low performance of RAID-6

Solution 1

Check whether your filesystem is aligned with the RAID geometry. I'm getting 320 MB/s on a RAID-6 array of 8 x 2 TB SATA drives with XFS, and I think that is limited by the 3 Gb/s SAS channel rather than by RAID-6 performance. You can get some ideas on alignment from this thread.
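As a rough sketch of what "aligned" means here: the filesystem should know the per-disk chunk size (stripe unit) and the number of data disks, so that full-stripe writes can avoid RAID-6 read-modify-write cycles. The helper below is hypothetical (not part of any tool). It assumes the controller's "stripe size" is the per-disk chunk in KiB and a 4 KiB filesystem block, and it builds the matching `mkfs.ext4 -E stride=...,stripe_width=...` and `mkfs.xfs -d su=...,sw=...` options.

```python
def raid_alignment(disks: int, parity_disks: int, chunk_kib: int, fs_block_kib: int = 4) -> dict:
    """Suggest mkfs alignment options for a parity RAID array.

    Assumes chunk_kib is the per-disk chunk (stripe unit) in KiB and that
    the filesystem uses fs_block_kib-sized blocks (4 KiB is typical).
    """
    data_disks = disks - parity_disks  # RAID-6: parity_disks = 2
    if data_disks < 1:
        raise ValueError("need at least one data disk")
    if chunk_kib % fs_block_kib:
        raise ValueError("chunk size must be a multiple of the filesystem block size")
    stride = chunk_kib // fs_block_kib   # ext4: filesystem blocks per chunk
    stripe_width = stride * data_disks   # ext4: filesystem blocks per full stripe
    return {
        "data_disks": data_disks,
        "full_stripe_kib": chunk_kib * data_disks,
        "ext4": f"mkfs.ext4 -E stride={stride},stripe_width={stripe_width}",
        "xfs": f"mkfs.xfs -d su={chunk_kib}k,sw={data_disks}",
    }


if __name__ == "__main__":
    # Example: 8-disk RAID-6 with an assumed 128 KiB chunk
    for key, value in raid_alignment(disks=8, parity_disks=2, chunk_kib=128).items():
        print(f"{key}: {value}")
```

For an 8-disk RAID-6 with a 128 KiB chunk this gives 6 data disks, a 768 KiB full stripe, `stride=32,stripe_width=192` for ext4 and `su=128k,sw=6` for XFS. Check the real chunk size in the controller configuration before using these numbers.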

Solution 2

Unfortunately you're comparing apples with oranges.

450 Mb/s ≈ 56 MB/s, which is about on par with what you're seeing in real life. Both tools are giving you the same reading, but one is in bits and the other in bytes: divide 450 by 8 to put both in the same unit.

(In your question you've got the capitalisation the other way around. I can only hope/assume that this is a typo, because if you reverse the capitalisation you get an almost perfect match.)
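The conversion this answer relies on is a plain division by 8, since a byte is 8 bits. A minimal sketch (the function name is illustrative):

```python
def megabits_to_megabytes(mbit_per_s: float) -> float:
    """Convert megabits per second (Mb/s) to megabytes per second (MB/s).

    A byte is 8 bits, so the conversion is a division by 8.
    """
    return mbit_per_s / 8


if __name__ == "__main__":
    print(megabits_to_megabytes(450))  # 56.25
```

So 450 Mb/s corresponds to 56.25 MB/s, which is the "about 56 MB/s" figure above.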

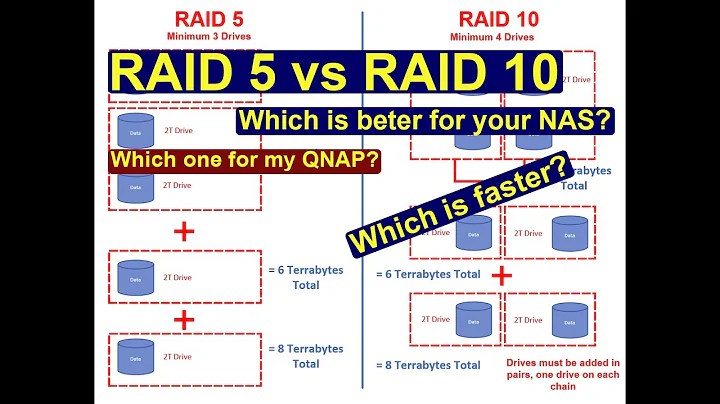


Arman

I am a researcher. We are trying to understand the influence of gas dynamics on galactic bar formation in numerical N-body simulations. My research interests are: galaxy formation, hydrodynamics, N-body interaction, AGN influence on galaxy formation, large-scale structure, parallel programming, and visualization tools.

Updated on September 18, 2022

Comments

-

Arman almost 2 years

I noticed that write speed to the RAID-6 array is very low, but when I test with hdparm the speed is reasonable.

dd gives 50 Mb/s or even less:

dd if=/dev/zero of=/store/01/test.tmp bs=1M count=10000

hdparm gives 450 MB/s:

hdparm --direct -t /dev/vg_store_01/logical_vg_store_01

Why are file writes slower than the hdparm test? Is there some kernel limit that should be tuned?

I have an Areca 1680 adapter with 16 x 1 TB SAS disks, running Scientific Linux 6.0.

EDIT

My bad, sorry: all units are in MB/s.

More on hardware:

2 Areca controllers in a dual quad-core machine, 16 GB RAM.

The firmware for the SAS backplane and the Areca controllers is up to date.

The disks are Seagate 7,200 rpm, in 2 RAID boxes of 16 x 1 TB each. Each group of 8 disks is a RAID-6, so there are 4 volumes in total, with lba=64. Two volumes are grouped by striped LVM and formatted as ext4.

The stripe size is 128.

When I format the volume, iotop shows it writing at 400 MB/s.

iostat also shows that both LVM member drives are writing at 450 MB/s.
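The disk layout described above can be checked with a little arithmetic: 2 boxes x 16 disks = 32 disks, split into 8-disk RAID-6 arrays, where each array gives up two disks' worth of capacity to parity. A sketch with a hypothetical helper name, using the drives' nominal (decimal) TB:

```python
def raid6_layout(boxes: int, disks_per_box: int, disks_per_array: int, disk_tb: float):
    """Return (number of arrays, usable TB per array, total usable TB) for RAID-6 groups.

    RAID-6 stores two disks' worth of parity per array, so an array of n disks
    provides (n - 2) disks of usable capacity.
    """
    if disks_per_array < 4:
        raise ValueError("RAID-6 needs at least 4 disks per array")
    total_disks = boxes * disks_per_box
    if total_disks % disks_per_array:
        raise ValueError("disks do not divide evenly into arrays")
    arrays = total_disks // disks_per_array
    usable_per_array = (disks_per_array - 2) * disk_tb
    return arrays, usable_per_array, arrays * usable_per_array


if __name__ == "__main__":
    # 2 boxes x 16 disks of 1 TB, grouped into 8-disk RAID-6 arrays
    print(raid6_layout(boxes=2, disks_per_box=16, disks_per_array=8, disk_tb=1.0))
```

This prints `(4, 6.0, 24.0)`: four volumes of about 6 TB usable each, 24 TB in total out of 32 TB raw.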

FINALLY WRITING at 1600 MB/s

One of the RAIDs was degrading performance because of a bad disk. Strangely, in JBOD mode that disk gave 100 MB/s with hdparm, the same as the others. After heavy I/O it started reporting Write Errors in the log files (now it has 10 of them). The RAID still was not failing or degraded.

Well, after the replacement my configuration is as follows:

- 2 x ARC1680 controllers with:
  - RAID0 with 16 x 1 TB SAS disks, stripe 128, lba64
  - RAID0 with 16 x 1 TB SAS disks, stripe 128, lba64
- Volume group with 128K stripe size
- Formatted as XFS

Direct

hdparm --direct -t /dev/vg_store01/vg_logical_store01

/dev/vg_store01/vg_logical_store01: Timing O_DIRECT disk reads: 4910 MB in 3.00 seconds = 1636.13 MB/sec

No Direct

hdparm -t /dev/vg_store01/vg_logical_store01

/dev/vg_store01/vg_logical_store01: Timing buffered disk reads: 1648 MB in 3.00 seconds = 548.94 MB/sec

**dd test, DIRECT**

dd if=/dev/zero of=/store/01/test.tmp bs=1M count=10000 oflag=direct

10000+0 records in
10000+0 records out
10485760000 bytes (10 GB) copied, 8.87402 s, 1.2 GB/s

**dd test, WITHOUT DIRECT**

dd if=/dev/zero of=/store/01/test.tmp bs=1M count=10000

10000+0 records in
10000+0 records out
10485760000 bytes (10 GB) copied, 19.1996 s, 546 MB/s
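The throughput dd prints is simply bytes copied divided by elapsed time, in decimal units (10^6 bytes per MB). A sketch that recomputes it from a dd summary line; the regular expression is an assumption about GNU dd's output format and may need adjusting for other versions or locales:

```python
import re

# Matches GNU dd's summary line, e.g.
# "10485760000 bytes (10 GB) copied, 8.87402 s, 1.2 GB/s"
# (assumed format; newer dd versions add ", 9.8 GiB" inside the parentheses).
DD_SUMMARY = re.compile(r"(\d+) bytes .*?copied, ([\d.]+) s")


def dd_throughput_mb_s(summary_line: str) -> float:
    """Return throughput in decimal MB/s (10**6 bytes) from a dd summary line."""
    match = DD_SUMMARY.search(summary_line)
    if match is None:
        raise ValueError(f"not a dd summary line: {summary_line!r}")
    nbytes = int(match.group(1))
    seconds = float(match.group(2))
    return nbytes / seconds / 1e6


if __name__ == "__main__":
    for line in (
        "10485760000 bytes (10 GB) copied, 8.87402 s, 1.2 GB/s",
        "10485760000 bytes (10 GB) copied, 19.1996 s, 546 MB/s",
    ):
        print(f"{dd_throughput_mb_s(line):.0f} MB/s")
```

Applied to the two runs above, it gives about 1182 MB/s with oflag=direct and about 546 MB/s without, consistent with the 1.2 GB/s and 546 MB/s that dd reports.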

-

Arman about 13 years: Yes, write-back cache is enabled and we have APC UPSes.

-

John about 13 years: Maybe try newer firmware, or try another Linux flavor (Scientific Linux 6.0 could be too new).

-

rthomson about 13 years: UPS != "battery on your controller"

-

poige about 13 years: Unfortunately™, you're deeply wrong. There's no reason for hdparm to speak in terms of bits at all, so it doesn't. It uses mebibytes per second, and you can check that yourself.

-

MrGigu about 13 years: @poige - you'll have to forgive me, then. I was simply working from the fact that the OP wrote Mb/s and then MB/s, which differ by a factor of 8.

-

Arman about 13 years: Sorry, my mistake: all units are in MB/s.

-

Arman about 13 years: hdparm has a --direct option, which performs direct (O_DIRECT) reads.