What could cause the file command in Linux to report a text file as binary data?

Solution 1

I found the issue using binary search to locate the problematic lines.

head -n "$(( $(wc -l < file.cpp) / 2 ))" file.cpp > a.txt

tail -n "+$(( $(wc -l < file.cpp) / 2 + 1 ))" file.cpp > b.txt

Running file against each half, and repeating the process on whichever half was still reported as data, helped me locate the offending line. I found a Control+P (^P) character embedded in it. Removing it solved the problem. I'll write myself a Perl script to search for these characters (and other extended characters) in the future.
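The halving procedure above can be automated. Here is a minimal Python sketch, not the script mentioned above. The names bisect_bad_line and looks_binary are hypothetical, and looks_binary assumes a file build that accepts -b, --mime-encoding, and "-" for standard input. It also assumes that any chunk containing the offending line is itself reported as binary.

```python
import subprocess
from typing import Callable, List, Optional


def looks_binary(lines: List[bytes]) -> bool:
    """Hypothetical predicate: ask file(1) whether this chunk looks binary.

    Assumes `file -b --mime-encoding -` is available and prints "binary"
    for input it does not consider text.
    """
    result = subprocess.run(
        ["file", "-b", "--mime-encoding", "-"],
        input=b"".join(lines),
        capture_output=True,
        check=True,
    )
    return result.stdout.strip() == b"binary"


def bisect_bad_line(
    lines: List[bytes], is_bad: Callable[[List[bytes]], bool]
) -> Optional[int]:
    """Return the zero-based index of one line that makes is_bad() true.

    Returns None if the whole input is not flagged. Assumes that a chunk
    is flagged whenever it contains an offending line.
    """
    if not lines or not is_bad(lines):
        return None
    lo, hi = 0, len(lines)  # invariant: lines[lo:hi] is flagged
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if is_bad(lines[lo:mid]):
            hi = mid  # an offender is in the first half
        else:
            lo = mid  # otherwise it must be in the second half
    return lo
```

To try it on a real file, something like bisect_bad_line(open("file.cpp", "rb").read().splitlines(keepends=True), looks_binary) should return the index of one offending line. If several lines are bad, it finds only one of them per run.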

A big thanks to everyone who provided an answer for all the tips!

Solution 2

Vim tries very hard to make sense of whatever you throw at it without complaining. This makes it a relatively poor tool to use to diagnose file's output.

Vim's "[converted]" notice indicates there was something in the file that Vim wouldn't expect to see in the text encoding suggested by your locale settings (LANG, etc.).

Others have already suggested

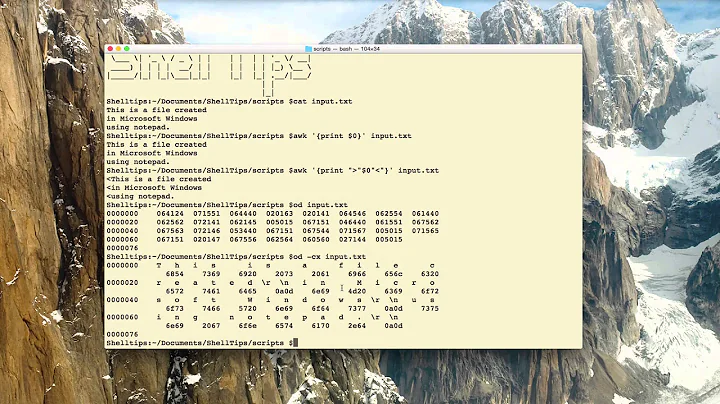

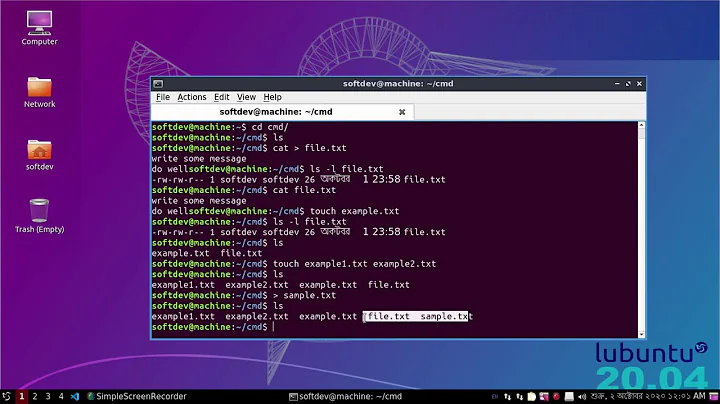

cat -vxxd

You could try grepping for non-ASCII characters.

grep -P '[\x7f-\xff]' filename
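Note that the range 0x7f-0xff does not cover low control characters such as ^P (0x10), which is what turned out to be the culprit in Solution 1. As a rough sketch, a small Python scanner can report both kinds of byte with their positions. The function name find_suspicious_bytes and the ALLOWED set are my own assumptions about what counts as "normal" text; they are not the table file itself uses.

```python
from typing import Iterator, Tuple

# Assumption: tab, LF, CR and printable ASCII are "normal"; everything
# else (other control characters, DEL, bytes >= 0x80) gets reported.
ALLOWED = frozenset({0x09, 0x0A, 0x0D}) | frozenset(range(0x20, 0x7F))


def find_suspicious_bytes(data: bytes) -> Iterator[Tuple[int, int, int]]:
    """Yield (line, column, byte_value) for each byte not in ALLOWED.

    Line and column numbers are 1-based; columns count bytes, not characters.
    """
    line, col = 1, 0
    for b in data:
        col += 1
        if b not in ALLOWED:
            yield line, col, b
        if b == 0x0A:  # newline: move to the next line
            line += 1
            col = 0
```

For example, looping over find_suspicious_bytes(open("filename", "rb").read()) and printing each tuple with the byte formatted as hex gives a list of places to inspect in an editor.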

The other possibility is non-standard line-endings for the platform (i.e. CRLF or CR) but I'd expect file to cope with that and report "DOS text file" or similar.
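To rule line endings in or out quickly, one option is to count each style directly. This is only a sketch; count_line_endings is a hypothetical helper, not part of any tool mentioned here.

```python
from typing import Dict


def count_line_endings(data: bytes) -> Dict[str, int]:
    """Count CRLF pairs, lone CRs, and lone LFs in raw file contents."""
    crlf = data.count(b"\r\n")
    cr = data.count(b"\r") - crlf  # CRs that are not part of a CRLF pair
    lf = data.count(b"\n") - crlf  # LFs that are not part of a CRLF pair
    return {"CRLF": crlf, "CR": cr, "LF": lf}
```

A file with more than one non-zero count has mixed line endings, which is common for sources that move between Windows and Linux editors.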

Solution 3

If you run file -D filename, file displays debugging information, including the tests it performs. Near the end, it will show what test was successful in determining the file type.

For a regular text file, it looks like this:

[31> 0 regex,=^package[ \t]+[0-9A-Za-z_:]+ *;,""]

1 == 0 = 0

ascmagic 1

filename.txt: ISO-8859 text, with CRLF line terminators

This tells you which test matched, and therefore what file found in the data that led it to report that type.

Jonah Bishop

Updated on September 18, 2022

Comments

-

Jonah Bishop over 1 year

I have a couple of C++ source files (one .cpp and one .h) that are being reported as type data by the file command in Linux. When I run the file -bi command against these files, I'm given this output (same output for each file):

application/octet-stream; charset=binary

Each file is clearly plain-text (I can view them in vi). What's causing file to misreport the type of these files? Could it be some sort of Unicode thing? Both of these files were created in Windows-land (using Visual Studio 2005), but they're being compiled in Linux (it's a cross-platform application).

Any ideas would be appreciated.

Update: I don't see any null characters in either file. I found some extended characters in the .cpp file (in a comment block), removed them, but file still reports the same encoding. I've tried forcing the encoding in SlickEdit, but that didn't seem to have an effect. When I open the file in vim, I see a [converted] line as soon as I open the file. Perhaps I can get vim to force the encoding?

-

Jonah Bishop about 12 years

That's an interesting tip. I've run both files through xxd, and I see no BOM in the first character position. Each file starts out with a giant comment block, so I see a bunch of slashes to start.

-

GodEater about 12 years

Care to share an excerpt?

-

Jonah Bishop about 12 years

This search resulted in a comment block containing some extended characters in my .cpp file. However, I don't see any similar characters in the .h...

-

garyjohn about 12 years

I updated my answer to include searching for nulls as Mehrdad suggested.

-

Jonah Bishop about 12 years

I don't see any null characters in either file. :(

-

Jonah Bishop about 12 years

I don't see a -D option in my file install (v5.04)...

-

garyjohn about 12 years

Try -d instead. That works with file-5.03 as installed on Fedora 11.

-

HikeMike about 12 years

Notifying @JonahBishop about garyjohn's comment. My post was written for the file included with OS X. My Debian 6 has neither -d nor -D though...

-

Jonah Bishop about 12 years

The -d flag works for me, but there's so much output I'm not sure what to look for...