What is the advantage of GCC's __builtin_expect in if else statements?

Solution 1

Imagine the assembly code that would be generated from:

if (__builtin_expect(x, 0)) {

foo();

...

} else {

bar();

...

}

I guess it should be something like:

cmp $x, 0

jne _foo

_bar:

call bar

...

jmp after_if

_foo:

call foo

...

after_if:

You can see that the instructions are arranged in such an order that the bar case precedes the foo case (as opposed to the C code). This can utilise the CPU pipeline better, since a jump thrashes the already fetched instructions.

Before the jump is executed, the instructions below it (the bar case) are pushed to the pipeline. Since the foo case is unlikely, jumping too is unlikely, hence thrashing the pipeline is unlikely.

Solution 2

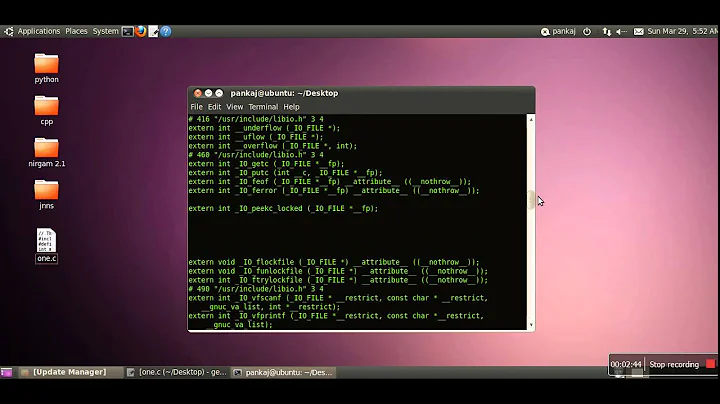

Let's decompile to see what GCC 4.8 does with it

Blagovest mentioned branch inversion to improve the pipeline, but do current compilers really do it? Let's find out!

Without __builtin_expect

#include "stdio.h"

#include "time.h"

int main() {

/* Use time to prevent it from being optimized away. */

int i = !time(NULL);

if (i)

puts("a");

return 0;

}

Compile and decompile with GCC 4.8.2 x86_64 Linux:

gcc -c -O3 -std=gnu11 main.c

objdump -dr main.o

Output:

0000000000000000 <main>:

0: 48 83 ec 08 sub $0x8,%rsp

4: 31 ff xor %edi,%edi

6: e8 00 00 00 00 callq b <main+0xb>

7: R_X86_64_PC32 time-0x4

b: 48 85 c0 test %rax,%rax

e: 75 0a jne 1a <main+0x1a>

10: bf 00 00 00 00 mov $0x0,%edi

11: R_X86_64_32 .rodata.str1.1

15: e8 00 00 00 00 callq 1a <main+0x1a>

16: R_X86_64_PC32 puts-0x4

1a: 31 c0 xor %eax,%eax

1c: 48 83 c4 08 add $0x8,%rsp

20: c3 retq

The instruction order in memory was unchanged: first the puts and then retq return.

With __builtin_expect

Now replace if (i) with:

if (__builtin_expect(i, 0))

and we get:

0000000000000000 <main>:

0: 48 83 ec 08 sub $0x8,%rsp

4: 31 ff xor %edi,%edi

6: e8 00 00 00 00 callq b <main+0xb>

7: R_X86_64_PC32 time-0x4

b: 48 85 c0 test %rax,%rax

e: 74 07 je 17 <main+0x17>

10: 31 c0 xor %eax,%eax

12: 48 83 c4 08 add $0x8,%rsp

16: c3 retq

17: bf 00 00 00 00 mov $0x0,%edi

18: R_X86_64_32 .rodata.str1.1

1c: e8 00 00 00 00 callq 21 <main+0x21>

1d: R_X86_64_PC32 puts-0x4

21: eb ed jmp 10 <main+0x10>

The puts was moved to the very end of the function, the retq return!

The new code is basically the same as:

int i = !time(NULL);

if (i)

goto puts;

ret:

return 0;

puts:

puts("a");

goto ret;

This optimization was not done with -O0.

But good luck on writing an example that runs faster with __builtin_expect than without, CPUs are really smart those days. My naive attempts are here.

C++20 [[likely]] and [[unlikely]]

C++20 has standardized those C++ built-ins: How to use C++20's likely/unlikely attribute in if-else statement They will likely (a pun!) do the same thing.

Solution 3

The idea of __builtin_expect is to tell the compiler that you'll usually find that the expression evaluates to c, so that the compiler can optimize for that case.

I'd guess that someone thought they were being clever and that they were speeding things up by doing this.

Unfortunately, unless the situation is very well understood (it's likely that they have done no such thing), it may well have made things worse. The documentation even says:

In general, you should prefer to use actual profile feedback for this (

-fprofile-arcs), as programmers are notoriously bad at predicting how their programs actually perform. However, there are applications in which this data is hard to collect.

In general, you shouldn't be using __builtin_expect unless:

- You have a very real performance issue

- You've already optimized the algorithms in the system appropriately

- You've got performance data to back up your assertion that a particular case is the most likely

Solution 4

Well, as it says in the description, the first version adds a predictive element to the construction, telling the compiler that the x == 0 branch is the more likely one - that is, it's the branch that will be taken more often by your program.

With that in mind, the compiler can optimize the conditional so that it requires the least amount of work when the expected condition holds, at the expense of maybe having to do more work in case of the unexpected condition.

Take a look at how conditionals are implemented during the compilation phase, and also in the resulting assembly, to see how one branch may be less work than the other.

However, I would only expect this optimization to have noticeable effect if the conditional in question is part of a tight inner loop that gets called a lot, since the difference in the resulting code is relatively small. And if you optimize it the wrong way round, you may well decrease your performance.

Solution 5

I don't see any of the answers addressing the question that I think you were asking, paraphrased:

Is there a more portable way of hinting branch prediction to the compiler.

The title of your question made me think of doing it this way:

if ( !x ) {} else foo();

If the compiler assumes that 'true' is more likely, it could optimize for not calling foo().

The problem here is just that you don't, in general, know what the compiler will assume -- so any code that uses this kind of technique would need to be carefully measured (and possibly monitored over time if the context changes).

Related videos on Youtube

RajSanpui

Around 9+ years experience into development C, C++, and Linux domain. Also understand Core-Java and consider it as a secondary skill. Currently, in addition to the developer responsibilities, i am also serving the role of DevOps engineer.

Updated on January 06, 2022Comments

-

RajSanpui over 2 years

RajSanpui over 2 yearsI came across a

#definein which they use__builtin_expect.The documentation says:

Built-in Function:

long __builtin_expect (long exp, long c)You may use

__builtin_expectto provide the compiler with branch prediction information. In general, you should prefer to use actual profile feedback for this (-fprofile-arcs), as programmers are notoriously bad at predicting how their programs actually perform. However, there are applications in which this data is hard to collect.The return value is the value of

exp, which should be an integral expression. The semantics of the built-in are that it is expected thatexp == c. For example:if (__builtin_expect (x, 0)) foo ();would indicate that we do not expect to call

foo, since we expectxto be zero.So why not directly use:

if (x) foo ();instead of the complicated syntax with

__builtin_expect?-

Ciro Santilli OurBigBook.com almost 9 yearspossible duplicate of likely()/unlikely() macros in the Linux kernel - how do they work? What's their benefit?

Ciro Santilli OurBigBook.com almost 9 yearspossible duplicate of likely()/unlikely() macros in the Linux kernel - how do they work? What's their benefit? -

Nawaz over 7 yearsI think your direct code should have been

Nawaz over 7 yearsI think your direct code should have beenif ( x == 0) {} else foo();.. or simplyif ( x != 0 ) foo();which is equivalent to the code from GCC documentation.

-

-

RajSanpui over 12 yearsBut at the end it is all about checking the condition by the compiler, do you mean to say that the compiler always assumes this branch and proceeds, and later if there is not a match then ? What happens? I think there is something more about this branch prediction stuff in compiler design, and how it works.

RajSanpui over 12 yearsBut at the end it is all about checking the condition by the compiler, do you mean to say that the compiler always assumes this branch and proceeds, and later if there is not a match then ? What happens? I think there is something more about this branch prediction stuff in compiler design, and how it works. -

Kerrek SB over 12 yearsThis is truly a micro-optimization. Look up how conditionals are implemented, there's a small bias towards one branch. As a hypothetical example, suppose a conditional becomes a test plus a jump in the assembly. Then the jumping branch is slower than the non-jumping one, so you'd prefer to make the expected branch the non-jumping one.

-

RajSanpui over 12 yearsYes, i think it is like that, but somewhere i see i need to understand the concept of branch prediction as you and Kerrek have said, it may flash more light in that. I think it takes place during syntatic analysis, right?

RajSanpui over 12 yearsYes, i think it is like that, but somewhere i see i need to understand the concept of branch prediction as you and Kerrek have said, it may flash more light in that. I think it takes place during syntatic analysis, right? -

endorphin over 12 years@kindsmasher1 - the idea of branch prediction is that the underlying processor compare and branch instructions are often faster in one direction than the other (for instance, not taking the branch may be quicker than taking the branch). If the processor knows what value is most likely, and therefore which half of the if is most likely to be taken, it can pick compare operations and branch instructions to maximize the speed of that most likely branch. Note that it can also do things at a higher level and re-arrange your code, if that makes the most sense.

-

RajSanpui over 12 yearsDoes it really work like that? Why the foo definition can't come first? The order of function definitions are irrelevant, as far as you have a prototype, right?

RajSanpui over 12 yearsDoes it really work like that? Why the foo definition can't come first? The order of function definitions are irrelevant, as far as you have a prototype, right? -

Blagovest Buyukliev over 12 yearsThis is not about function definitions. It is about rearranging the machine code in a way that causes a smaller probability for the CPU to fetch instructions that are not going to be executed.

-

RajSanpui over 12 yearsI better go back to my college book of

RajSanpui over 12 yearsI better go back to my college book ofcompiler design - Aho, Ullmann, Sethi:-) -

RajSanpui over 12 yearsOhh i understand. So you mean since there is a high probability for

RajSanpui over 12 yearsOhh i understand. So you mean since there is a high probability forx = 0so the bar is given first. And foo, is defined later since it's chances (rather use probability) is less, right? -

RajSanpui over 12 yearsReally it makes sense. But do you have any reference which states this is how should be the ordering of instructions, when one has greater probability than the other?

RajSanpui over 12 yearsReally it makes sense. But do you have any reference which states this is how should be the ordering of instructions, when one has greater probability than the other? -

Oliver Charlesworth over 12 years@Michael: That's not really a description of branch prediction.

-

Steve Jessop over 12 years"most programmers are BAD" or anyway no better than the compiler. Any idiot can tell that in a for loop, the continuation condition is likely to be true, but the compiler knows that too so there's no benefit telling it. If for some reason you wrote a loop that would almost always break immediately, and if you can't provide profile data to the compiler for PGO, then maybe the programmer knows something the compiler doesn't.

-

Hasturkun over 12 yearsThis may also embed hints for the CPU branch predictor, improving pipelining

-

Neowizard about 12 yearsIn some situations, it doesn't matter which branch is more likely, but rather which branch matters. If the unexpected branch leads to abort(), then likelihood doesn't matter, and the expected branch should be given performance-priority when optimizing.

-

Brent Bradburn almost 9 yearsThis may have, in fact, been exactly what the OP had originally intended to type (as indicated by the title) -- but for some reason the use of

elsewas left out of the body of the post. -

dave over 7 yearsThis also places the most likely case closer to the comparison, such that it might be on the same cache line (and the unlikely case might not be in the instruction cache at all).

-

Nawaz over 7 years@KerrekSB: I think, you got it wrong. You said "

Nawaz over 7 years@KerrekSB: I think, you got it wrong. You said "x != 0branch is the more likely one", I thinkx==0branch is more likely one, because it saysif (__builtin_expect(x, 0)) foo();.. i.e iffoo()will be executed only ifxis not0. which means theifisx!=0branch, and the implicitelseisx==0branch, which is more likely to be executed, asxis expected to be0. Note that__builtin_expectreturns the first argument passed to it. -

BeeOnRope over 7 yearsThe problem with your claim is that the optimizations the CPU can perform with respect to branch probability is pretty much limited to one: branch prediction, and this optimization happens whether you use

__builtin_expector not. On the other hand, the compiler can perform many optimizations based on the branch probability, such as organizing the code so the hot path is contiguous, moving code unlikely to be optimized further away or reducing its size, taking decisions about which branches to vectorize, better scheduling the hot path, and so on. -

BeeOnRope over 7 years... without information from the developer, it is blind and chooses a neutral strategy. If the developer is right about the probabilities (and in many cases it is trivial to understand that a branch is usually taken/not taken) - you get these benefits. If you aren't you get some penalty, but it is not somehow much larger than the benefits, and most critically, none of this somehow overrides the CPU branch prediction.

-

Hi-Angel about 7 yearsWell, actually, sometimes it's clever to use. E.g. AMD are using it in their driver. The

Hi-Angel about 7 yearsWell, actually, sometimes it's clever to use. E.g. AMD are using it in their driver. Thelinkely()/unlikely()functions you see is a macros to__builtin_expect(). The (si_)draw_vbo()is called 5k…7.5k times per second in GTAⅣ, so it's quite a thing to optimize. -

endorphin about 7 years@Hi-Angel - which is what I said - if you actually HAVE performance data and understand the situation, then sure it makes sense. And the folks doing GTA DO have the data and DO know the situation. They are BY FAR in the minority and are among the few who should be using __builtin_expect.

-

Hi-Angel about 7 yearsYeah, it's called a lot in every game. That said, the GTAⅣ measurements are actually mine, obtained with

Hi-Angel about 7 yearsYeah, it's called a lot in every game. That said, the GTAⅣ measurements are actually mine, obtained withGALLIUM_HUD=draw-callsvariable (tho with r600 driver, not radeonsi). Judging from IRC discussion, 7.5k is too much, modern games do around 4k. -

Adam Kaplan over 6 years@Nik-Lz no, the effects of that jump should be accounted for by the branch predictor. One assumption for __builtin_expect is usually that all things are not equal... there is a slow path and a fast path, and you as the programmer happen to know which path is most likely to be used.

-

Adam Kaplan over 6 yearsCheck out libdispatch's dispatch_once function, which uses __builtin_expect for a practical optimization. The slow path runs one-time-ever and exploits __builtin_expect to hint the branch predictor that the fast path should be taken. The fast path runs without using any locks at all! mikeash.com/pyblog/…

-

maxbc about 6 yearsI have doubts about this. I heard modern branch predictors will follow the most "likely" path anyway (depending on what happened at this location on previous paths), so it doesn't really matter how you arrange things (it's even the base of Spectre and Meltdown attacks).

-

Tomeamis almost 5 years@maxbc It is true that the branch predictor can learn, but during the first (couple) pass(es), it doesn't have any data to predict from. In that case, static branch prediction is used, and this construct lets the compiler generate assembly such that the static branch prediction will predict the likely branch.

-

Ruslan over 4 yearsDoesn't appear to make any difference in GCC 9.2: gcc.godbolt.org/z/GzP6cx (actually, already in 8.1)

Ruslan over 4 yearsDoesn't appear to make any difference in GCC 9.2: gcc.godbolt.org/z/GzP6cx (actually, already in 8.1) -

Andreas about 3 yearsAs for the example, I think an even more efficient implementation saving at least one instruction is placing the unexpected branch (_foo()) somewhere else completely and let it have an unconditional jump back to where the branching happened (if) or ended (if/else). This will avoid the unconditional jmp in the expected branch, and avoid dispatching any of the instructions in the unexpected branch because of dispatching multiple instructions every clock cycle. (This assuming I remember and understand PowerPC 74xx manual right.)

-

Anton Samsonov about 3 yearsBy saying "CPUs are really smart" you imply they are all using out-of-order, dynamic execution, which is not true, as long as there are other CPU architectures — in-order, explicitly scheduled at compile time.

Anton Samsonov about 3 yearsBy saying "CPUs are really smart" you imply they are all using out-of-order, dynamic execution, which is not true, as long as there are other CPU architectures — in-order, explicitly scheduled at compile time.