What is the maximum number of containers in a single-node cluster (Hadoop)?

The maximum number of containers that can run on a single NodeManager (Hadoop worker) depends on many factors, such as how much memory is assigned to the NodeManager and the application-specific requirements.

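The defaults for yarn.scheduler.*-allocation-* are 1 GB (minimum memory allocation), 8 GB (maximum memory allocation), 1 core (minimum vcores) and 32 cores (maximum vcores). Because the minimum and maximum allocations bound how small or large each container can be, they affect the number of containers per node.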
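To illustrate how the allocation limits bound the container count, here is a minimal Python sketch that counts memory only. It assumes a NodeManager offering 8192 MB to YARN (as far as we know, the default for yarn.nodemanager.resource.memory-mb); the function name `container_bounds` is hypothetical.

```python
def container_bounds(node_mem_mb, min_alloc_mb, max_alloc_mb):
    """Return (fewest, most) containers that fit on one node, by memory only.

    The fewest fit when every container requests the maximum allocation;
    the most fit when every container gets only the minimum allocation.
    """
    fewest = node_mem_mb // max_alloc_mb
    most = node_mem_mb // min_alloc_mb
    return fewest, most


# Scheduler defaults quoted above: 1 GB minimum, 8 GB maximum allocation.
# Assumption: the NodeManager offers 8192 MB to YARN.
print(container_bounds(8192, 1024, 8192))  # (1, 8)
```

Vcores, the ApplicationMaster and scheduler details are deliberately left out, so treat the result as a rough range rather than an exact count.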

So, if you have 6 GB of RAM and 4 virtual cores, the YARN configuration could look like this:

yarn.scheduler.minimum-allocation-mb: 128

yarn.scheduler.maximum-allocation-mb: 2048

yarn.scheduler.minimum-allocation-vcores: 1

yarn.scheduler.maximum-allocation-vcores: 2

yarn.nodemanager.resource.memory-mb: 4096

yarn.nodemanager.resource.cpu-vcores: 4

The above configuration tells Hadoop to use at most 4 GB of memory and 4 virtual cores on this node, and that each container can have between 128 MB and 2 GB of memory and between 1 and 2 virtual cores. With these settings, you could run up to 2 containers with maximum resources at a time (4096 MB / 2048 MB = 2, and 4 vcores / 2 vcores = 2).
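The arithmetic behind that limit can be sketched in Python. This is a simplified model, assuming every container has the same size and needs both its memory and its vcores; the function name `max_containers` is hypothetical, not a YARN API.

```python
def max_containers(node_mem_mb, node_vcores, container_mem_mb, container_vcores):
    """Containers of one fixed size that fit on a single NodeManager.

    Simplified model: a container needs both its memory and its vcores,
    so whichever resource runs out first sets the limit.
    """
    by_memory = node_mem_mb // container_mem_mb
    by_vcores = node_vcores // container_vcores
    return min(by_memory, by_vcores)


NODE_MEM_MB = 4096  # yarn.nodemanager.resource.memory-mb
NODE_VCORES = 4     # yarn.nodemanager.resource.cpu-vcores

# Largest allowed container: 2048 MB and 2 vcores
print(max_containers(NODE_MEM_MB, NODE_VCORES, 2048, 2))  # 2
# Smallest allowed container: 128 MB and 1 vcore (vcores become the limit)
print(max_containers(NODE_MEM_MB, NODE_VCORES, 128, 1))   # 4
```

If the scheduler accounts for memory only (as far as we know, the CapacityScheduler's default resource calculator behaves this way), the second case would be limited by memory alone, i.e. 4096 // 128 = 32 containers.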

Now, for the MapReduce-specific configuration:

yarn.app.mapreduce.am.resource.mb: 1024

yarn.app.mapreduce.am.command-opts: -Xmx768m

mapreduce.[map|reduce].cpu.vcores: 1

mapreduce.[map|reduce].memory.mb: 1024

mapreduce.[map|reduce].java.opts: -Xmx768m

With this configuration, you could theoretically have up to 4 mappers/reducers running simultaneously in four 1 GB containers. In practice, the MapReduce ApplicationMaster also uses a 1 GB container, so the actual number of concurrent mappers and reducers is limited to 3. You can adjust the memory limits, but finding the best values may take some experimentation.
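Accounting for the ApplicationMaster can be sketched the same way. This assumes the AM takes 1024 MB (yarn.app.mapreduce.am.resource.mb above) and, as an assumption not stated in the configuration, 1 vcore; `concurrent_tasks` is a hypothetical helper.

```python
def concurrent_tasks(node_mem_mb, node_vcores, task_mem_mb, task_vcores,
                     am_mem_mb=0, am_vcores=0):
    """Map/reduce containers that fit next to an optional ApplicationMaster."""
    free_mem = node_mem_mb - am_mem_mb
    free_vcores = node_vcores - am_vcores
    return min(free_mem // task_mem_mb, free_vcores // task_vcores)


# Node: 4096 MB and 4 vcores; tasks: 1024 MB and 1 vcore each
print(concurrent_tasks(4096, 4, 1024, 1))  # 4 (theoretical, no AM)
# ApplicationMaster: 1024 MB (yarn.app.mapreduce.am.resource.mb);
# assumption: it also takes 1 vcore
print(concurrent_tasks(4096, 4, 1024, 1, am_mem_mb=1024, am_vcores=1))  # 3
```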

As a rule of thumb, limit the JVM heap size (-Xmx) to about 75% of the container's memory, leaving room for the JVM's non-heap memory so things run more smoothly (for example, -Xmx768m for a 1024 MB container).
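The 75% rule can be turned into a small helper that derives the -Xmx value from the container size. This is a sketch; the `heap_opts` name and the integer rounding down are illustrative choices, not a Hadoop API.

```python
def heap_opts(container_mb, heap_percent=75):
    """Build a -Xmx flag that uses heap_percent of the container's memory."""
    heap_mb = container_mb * heap_percent // 100  # integer MB, rounded down
    return f"-Xmx{heap_mb}m"


print(heap_opts(1024))  # -Xmx768m, matching the map/reduce and AM settings above
```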

You can also influence memory per container through the yarn.scheduler.minimum-allocation-mb property, which sets the smallest amount of memory YARN allocates to a container.
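As we understand it, schedulers such as the CapacityScheduler round each memory request up to a multiple of yarn.scheduler.minimum-allocation-mb and reject requests above the maximum allocation; the exact rules depend on the scheduler and Hadoop version, so treat the sketch below as a model of that assumption. It shows how a smaller minimum allocation lets more containers of the same request fit on a 4096 MB node. `normalize_request` is a hypothetical name.

```python
def normalize_request(request_mb, min_alloc_mb, max_alloc_mb):
    """Round a memory request up to a multiple of the minimum allocation.

    Modelled assumption: requests above the maximum allocation are rejected.
    """
    if request_mb > max_alloc_mb:
        raise ValueError(f"{request_mb} MB exceeds maximum allocation {max_alloc_mb} MB")
    steps = -(-max(request_mb, min_alloc_mb) // min_alloc_mb)  # ceiling division
    return steps * min_alloc_mb


NODE_MEM_MB = 4096
for min_alloc in (1024, 128):
    granted = normalize_request(600, min_alloc, 2048)
    print(min_alloc, granted, NODE_MEM_MB // granted)
# prints "1024 1024 4": a 600 MB request is granted 1024 MB, so 4 fit
# prints "128 640 6":   the same request is granted 640 MB, so 6 fit
```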

For more detailed configuration of production systems, use this document from Hortonworks as a reference.

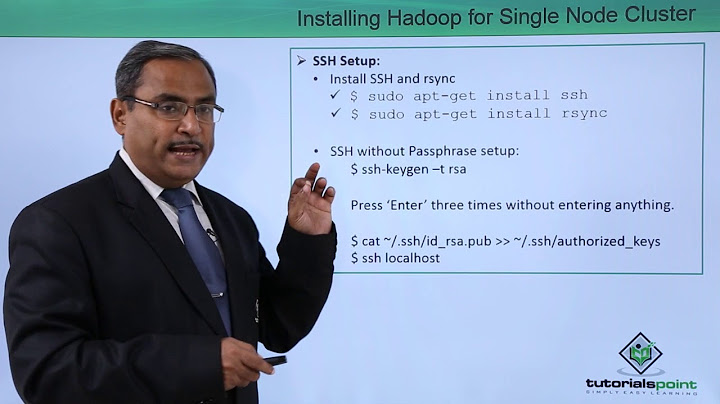

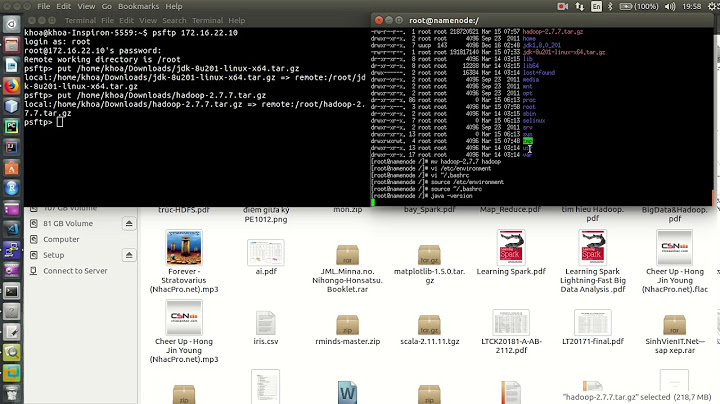

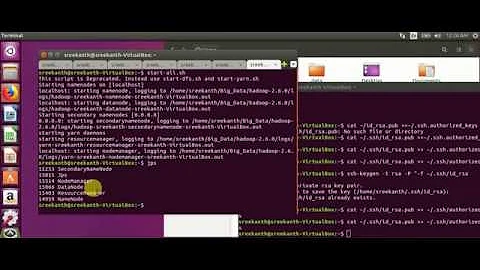


Andoy Abarquez

Java Web Developer, Odoo Functional Consultant & Developer

Updated on October 24, 2020

Comments

-


Andoy Abarquez over 3 years: I am new to Hadoop and not yet familiar with its configuration.

I just want to ask: what is the maximum number of containers per node?

I am using a single-node cluster (a laptop with 6 GB of RAM).

Below is my mapred and YARN configuration:

**mapred-site.xml**

- map-mb: 4096, opts: -Xmx3072m
- reduce-mb: 8192, opts: -Xmx6144m

**yarn-site.xml**

- resource memory-mb: 40GB
- min allocation-mb: 1GB

The above setup can only run 4 to 5 jobs, with a maximum of 8 containers.
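For these settings, a memory-only ceiling can be computed with simple division. This sketch assumes 40 GB means 40960 MB and ignores vcores, the ApplicationMaster and every other limit, so it is only an upper bound. Note also that 40 GB is far more than the laptop's 6 GB of physical RAM; as far as we know, YARN schedules against the configured value, not the physical memory. `memory_ceiling` is a hypothetical helper.

```python
def memory_ceiling(node_mem_mb, container_mb):
    """Upper bound on same-sized containers, counting memory only."""
    return node_mem_mb // container_mb


NODE_MEM_MB = 40 * 1024  # yarn.nodemanager.resource.memory-mb: 40 GB

print(memory_ceiling(NODE_MEM_MB, 4096))  # 10 map containers
print(memory_ceiling(NODE_MEM_MB, 8192))  # 5 reduce containers
```

The observed maximum of 8 containers is below the map-only ceiling, so this memory arithmetic alone does not explain it.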

-

Tariq about 9 years: My Hadoop 2.5.2 cluster has one master node with 8 GB RAM and 8 vCPU cores, and 10 slave nodes with 2 GB RAM and 1 vCPU core each. Five input splits are created for the MapReduce application, but only one container runs, on a single slave node. What configuration will enable my application to use all (or the maximum number of) slave nodes? I aim to run my application with 50 slave nodes.