Convert hex-encoded String to String

Solution 1

You want to use the hex encoded data as an AES key, but the data is not a valid UTF-8 sequence. You could interpret it as a string in ISO Latin encoding, but the AES(key: String, ...) initializer converts the string back to its UTF-8 representation, i.e. you'll get different key data from what you started with.

Therefore you should not convert it to a string at all. Use the

extension Data {
    init?(fromHexEncodedString string: String)
}

initializer from hex/binary string conversion in Swift to convert the hex encoded string to Data and then pass that as an array to the AES(key: Array<UInt8>, ...) initializer:

let hexkey = "dcb04a9e103a5cd8b53763051cef09bc66abe029fdebae5e1d417e2ffc2a07a4"

let key = Array(Data(fromHexEncodedString: hexkey)!)

let encrypted = try AES(key: key, ....)
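To see why the detour through a string changes the key, here is a minimal sketch using only Foundation. It takes the first four bytes of the key above, reads them as ISO Latin 1, and re-encodes the result as UTF-8. The variable names are illustrative and not part of CryptoSwift.

```swift
import Foundation

// First four bytes of the key from the question.
let keyBytes: [UInt8] = [0xdc, 0xb0, 0x4a, 0x9e]

// Interpret the bytes as ISO Latin 1; every byte becomes one character.
// Force-unwrapped for brevity in this sketch.
let asLatin1 = String(data: Data(keyBytes), encoding: .isoLatin1)!

// Re-encoding as UTF-8 needs two bytes for every value above 0x7f,
// so the result no longer matches the original key bytes.
let utf8Bytes = Array(asLatin1.utf8)

print(keyBytes.count)   // 4
print(utf8Bytes.count)  // 7
```

Three of the four bytes are above 0x7f, so the UTF-8 form grows from 4 to 7 bytes, and the key derived from the string is a different key.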

Solution 2

Your code doesn't do what you think it does. This line:

if let data = hexString.data(using: .utf8){

means "encode these characters into UTF-8." That means that "01" doesn't encode to 0x01 (1), it encodes to 0x30 0x31 ("0" "1"). There's no "hex" in there anywhere.

This line:

return String.init(data:data, encoding: .utf8)

just takes the encoded UTF-8 data, interprets it as UTF-8, and returns it. These two methods are symmetrical, so you should expect this whole function to return whatever it was handed.
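A small sketch of that round trip, assuming only Foundation. The last step, parsing the text with radix 16, is added for contrast and is not part of the code from the question.

```swift
import Foundation

let hexString = "01"

// Encoding as UTF-8 yields the character codes of "0" and "1".
let utf8Data = hexString.data(using: .utf8)!
print(Array(utf8Data))            // [48, 49], i.e. 0x30 0x31

// Decoding the same data as UTF-8 returns the original text.
let roundTripped = String(data: utf8Data, encoding: .utf8)
print(roundTripped == hexString)  // true

// Parsing the text as a base-16 number is what gives the byte 0x01.
let parsedByte = UInt8(hexString, radix: 16)
print(parsedByte == 1)            // true
```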

Pulling together Martin and Larme's comments into one place here. This appears to be encoded in Latin-1. (This is a really awkward way to encode this data, but if it's what you're looking for, I think that's the encoding.)

import Foundation

extension Data {

    // From http://stackoverflow.com/a/40278391:
    init?(fromHexEncodedString string: String) {

        // Convert 0 ... 9, a ... f, A ...F to their decimal value,
        // return nil for all other input characters
        func decodeNibble(u: UInt16) -> UInt8? {
            switch(u) {
            case 0x30 ... 0x39:
                return UInt8(u - 0x30)
            case 0x41 ... 0x46:
                return UInt8(u - 0x41 + 10)
            case 0x61 ... 0x66:
                return UInt8(u - 0x61 + 10)
            default:
                return nil
            }
        }

        self.init(capacity: string.utf16.count/2)
        var even = true
        var byte: UInt8 = 0
        for c in string.utf16 {
            guard let val = decodeNibble(u: c) else { return nil }
            if even {
                byte = val << 4
            } else {
                byte += val
                self.append(byte)
            }
            even = !even
        }
        guard even else { return nil }
    }
}

let d = Data(fromHexEncodedString: "dcb04a9e103a5cd8b53763051cef09bc66abe029fdebae5e1d417e2ffc2a07a4")!

let s = String(data: d, encoding: .isoLatin1)
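As a rough check of the Latin-1 interpretation, the sketch below decodes a few bytes and encodes them again. It writes out the first four bytes of the key directly instead of using the hex initializer, so that it stays self-contained. The names are illustrative.

```swift
import Foundation

// First four bytes of the key, written out directly.
let bytes: [UInt8] = [0xdc, 0xb0, 0x4a, 0x9e]

// The initializer returns an optional; force-unwrapped for brevity in this sketch.
let latin1String = String(data: Data(bytes), encoding: .isoLatin1)!

// Each Unicode scalar value equals the byte it came from.
let scalarValues = latin1String.unicodeScalars.map { $0.value }
print(scalarValues)         // [220, 176, 74, 158]

// Encoding with the same encoding recovers the original bytes.
let bytesAgain = Array(latin1String.data(using: .isoLatin1)!)
print(bytesAgain == bytes)  // true
```

The bytes survive only because the same encoding is used in both directions. Handing the string to an API that re-encodes it as UTF-8 would not give back the original bytes.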

Solution 3

There is still a way to convert the key from hex to a readable string by adding the extension below:

extension String {

    func hexToString() -> String {
        var finalString = ""
        let chars = Array(self)
        // Walk the hex digits in pairs; a trailing unpaired digit is ignored
        for count in stride(from: 0, to: chars.count - 1, by: 2) {
            let firstDigit = Int("\(chars[count])", radix: 16) ?? 0
            let lastDigit = Int("\(chars[count + 1])", radix: 16) ?? 0
            let decimal = firstDigit * 16 + lastDigit
            // Map the byte to the Unicode scalar with the same value (ISO Latin 1);
            // this avoids a crash when the formatted string is not exactly one character
            finalString.append(Character(UnicodeScalar(UInt8(decimal))))
        }
        return finalString
    }

    func base64Decoded() -> String? {
        guard let data = Data(base64Encoded: self) else { return nil }
        return String(data: data, encoding: .init(rawValue: 0))
    }
}

Example of use:

let hexToString = secretKey.hexToString()

let base64ReadableKey = hexToString.base64Decoded() ?? ""

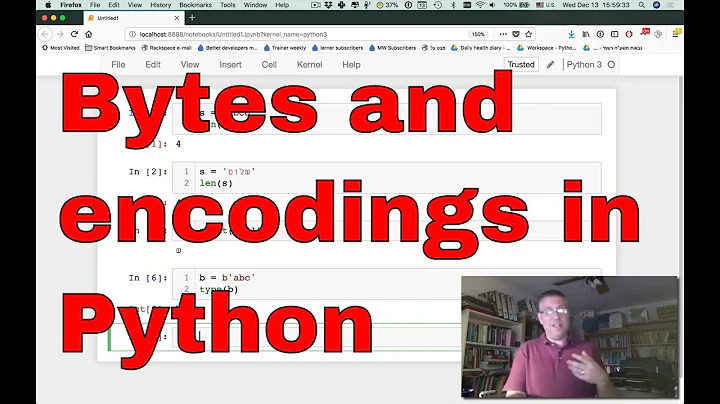

Chanchal Raj

Updated on May 25, 2022

Comments

Chanchal Raj almost 2 years

I want to convert the following hex-encoded String in Swift 3:

dcb04a9e103a5cd8b53763051cef09bc66abe029fdebae5e1d417e2ffc2a07a4

to its equivalent String:

Ü°J:\ص7cï ¼f«à)ýë®^A~/ü*¤

The following websites do the job just fine:

http://codebeautify.org/hex-string-converter

http://string-functions.com/hex-string.aspx

But I am unable to do the same in Swift 3. The following code doesn't do the job either:

func convertHexStringToNormalString(hexString:String)->String!{
    if let data = hexString.data(using: .utf8){
        return String.init(data:data, encoding: .utf8)
    }else{
        return nil
    }
}

Martin R over 7 years

See stackoverflow.com/a/40278391/1187415 for a method to create Data from a hex-encoded string.

Martin R over 7 years

But your data does not look like a valid string in any known encoding ...

Larme over 7 years

Transform, as suggested by Martin R, the hex representation that is a String into its equivalent in (NS)Data. And use NSASCIIStringEncoding, not NSUTF8StringEncoding. In Objective-C I get the correct result Ü°J:\ص7cï ¼f«à)ýë®^A~/ü*¤ with ASCII, but null with UTF8.

Martin R over 7 years

Why do you want to store that binary data as a string at all?

Martin R over 7 years

@Larme: That works, but NSASCIIStringEncoding is documented as a "strict 7-bit encoding", so one might not rely on it.

Larme over 7 years

@MartinR True, but if the hex is a representation of the ASCII conversion one, it should work. It's up to the dev to use the correct and most suitable encoding for its own use.

Rob Napier over 7 years

I believe the "correct" (it's whacky, but seems to be what is desired) encoding here is .isoLatin1 rather than .ascii.

Chanchal Raj over 7 years

Thanks @Larme and @MartinR for guiding me in the right direction. I converted the hex String to Data Martin's way and converted the Data to String, and thankfully it's not nil this time, but I got this result in Swift: Optional("Ü°J\u{10}:\\ص7c\u{05}\u{1C}ï\t¼f«à)ýë®^\u{1D}A~/ü*\u{07}¤")

Chanchal Raj over 7 years

@MartinR, why am I converting it to String? It is because my PHP developer wants me to send param values encrypted with AES. And the AES key he has provided me is hex-encoded. For me to use CryptoSwift, I need to decode this hex key first... :/

Martin R over 7 years

Unless I am mistaken, you can pass the AES key as a binary blob as well: github.com/krzyzanowskim/CryptoSwift#aes-advanced-usage. And if you pass it as a string then the string will be converted to its UTF-8 representation, not ISO Latin 1.

Chanchal Raj over 7 years

@MartinR, you are right, there are two ways. To make a binary blob, what should I do? I think use your method to create Data first, then merely convert it to [UInt8] by let binaryArray = [UInt8](data), right?

Chanchal Raj over 7 years

Thanks Rob, for the input. I am wondering why Swift prints the converted string as: Optional("Ü°J\u{10}:\\ص7c\u{05}\u{1C}ï\t¼f«à)ýë®^\u{1D}A~/ü*\u{07}¤") instead of the desired: Ü°J:\ص7cï ¼f«à)ýë®^A~/ü*¤

Chanchal Raj over 7 years

Thanks Martin, you have been a lot of help. Can I mark both of the answers as correct?

Martin R over 7 years

@ChanchalRaj: Because you did not unwrap the optional. Optional strings use a slightly different printing method which escapes control characters.

Rob over 7 years

@ChanchalRaj - I actually think you should accept this answer because this gets to the heart of your question, namely that you should not be building a String object at all, but rather use this [UInt8] rendition of the AES methods.