Hadoop on Local FileSystem

Remove the fs.default.name property from your mapred-site.xml file. It belongs only in core-site.xml.
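For illustration, here is the mapred-site.xml from the question with only the fs.default.name property taken out. This is a sketch based on the posted configuration; the JobTracker address and task limits are simply carried over unchanged.

```xml
<?xml version="1.0"?>
<!-- mapred-site.xml: fs.default.name removed; it is set only in core-site.xml -->
<configuration>
  <property>
    <name>mapred.job.tracker</name>
    <value>localhost:54311</value>
  </property>
  <property>
    <name>mapred.tasktracker.map.tasks.maximum</name>
    <value>1</value>
  </property>
  <property>
    <name>mapred.tasktracker.reduce.tasks.maximum</name>
    <value>1</value>
  </property>
</configuration>
```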

To run against your local file system in a pseudo-distributed setup, use what's usually called local mode: set fs.default.name in core-site.xml to file:/// (you currently have it set to hdfs://localhost:54310).
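A minimal sketch of core-site.xml for this setup, assuming you keep the hadoop.tmp.dir path from the question and change nothing else:

```xml
<?xml version="1.0"?>
<!-- core-site.xml: the only place fs.default.name should be defined -->
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/abimanyu/temp</value>
  </property>
  <property>
    <!-- Use the local disk as the default file system instead of HDFS -->
    <name>fs.default.name</name>
    <value>file:///</value>
  </property>
</configuration>
```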

The stack trace you are seeing comes from the Secondary NameNode (2NN) as it starts up: it tries to derive the NameNode's host:port from file:///, which has none. You don't need the 2NN in local mode, because there is no fsimage or edits file for it to work against.

Fix your core-site.xml and mapred-site.xml, stop all Hadoop daemons, and then start only the MapReduce daemons (JobTracker and TaskTracker).


Learner

Updated on June 05, 2022


Learner almost 2 years

Learner almost 2 yearsI'm running Hadoop on a pseudo-distributed. I want to read and write from Local filesystem by Abstracting the HDFS for my job. Am using the

file:///parameter. I followed this link.This is the file contents of

core-site.xml,<configuration> <property> <name>hadoop.tmp.dir</name> <value> /home/abimanyu/temp</value> </property> <property> <name>fs.default.name</name> <value>hdfs://localhost:54310</value> </property> </configuration>This is the file contents of

mapred-site.xml,<configuration> <property> <name>mapred.job.tracker</name> <value>localhost:54311</value> </property> <property> <name>fs.default.name</name> <value>file:///</value> </property> <property> <name>mapred.tasktracker.map.tasks.maximum</name> <value>1</value> </property> <property> <name>mapred.tasktracker.reduce.tasks.maximum</name> <value>1</value> </property> </configuration>This is the file contents of

hdfs-site.xml,<configuration> <property> <name>dfs.replication</name> <value>1</value> </property> </configuration>This is the error I get when I try to start the demons(using start-dfs or start-all),

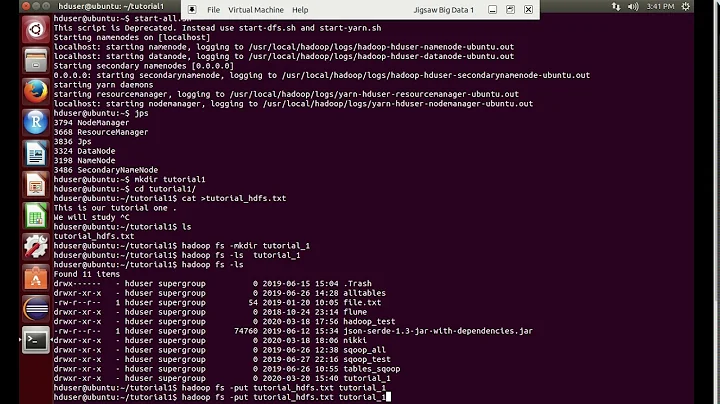

localhost: Exception in thread "main" java.lang.IllegalArgumentException: Does not contain a valid host:port authority: file:/// localhost: at org.apache.hadoop.net.NetUtils.createSocketAddr(NetUtils.java:164) localhost: at org.apache.hadoop.hdfs.server.namenode.NameNode.getAddress(NameNode.java:212) localhost: at org.apache.hadoop.hdfs.server.namenode.NameNode.getAddress(NameNode.java:244) localhost: at org.apache.hadoop.hdfs.server.namenode.NameNode.getServiceAddress(NameNode.java:236) localhost: at org.apache.hadoop.hdfs.server.namenode.SecondaryNameNode.initialize(SecondaryNameNode.java:194) localhost: at org.apache.hadoop.hdfs.server.namenode.SecondaryNameNode.<init>(SecondaryNameNode.java:150) localhost: at org.apache.hadoop.hdfs.server.namenode.SecondaryNameNode.main(SecondaryNameNode.java:676)What is strange to me is that this reading from local file system works completely fine in

hadoop-0.20.2but not inhadoop-1.2.1. Has anything changed from initial release to the later version ? Let me know how to read from Local File system for a Hadoop JAR.