How can I count the number of different characters in a file?

Solution 1

The following should work:

$ sed 's/\(.\)/\1\n/g' text.txt | sort | uniq -c

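First, sed inserts a newline after every character, putting each character on its own line. Then sort groups identical characters together. Finally, uniq -c collapses each group into a single line, prefixed with the number of occurrences of that character. Note that \n in the replacement is a GNU sed feature; other sed implementations may need a literal newline there.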

To sort the list by frequency, pipe this all into sort -nr.
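For readers who want to see what each stage of that pipeline does, here is a minimal Python sketch of the same three steps (sort, collapse runs, sort by count). The function name count_like_sort_uniq is made up for illustration and is not part of the answer above.

```python
from itertools import groupby


def count_like_sort_uniq(text):
    """Mimic `sort | uniq -c | sort -nr` on the characters of `text`.

    Hypothetical helper, named here only for illustration.
    """
    # "sort": bring identical characters next to each other.
    ordered = sorted(text)
    # "uniq -c": collapse each run of identical characters into (count, char).
    runs = [(len(list(group)), char) for char, group in groupby(ordered)]
    # "sort -nr": highest count first; ties keep their sorted-character order
    # because Python's sort is stable, even with reverse=True.
    return sorted(runs, key=lambda run: run[0], reverse=True)


if __name__ == "__main__":
    for count, char in count_like_sort_uniq("banana\n"):
        print(f"{count:7d} {char!r}")
```

Like the shell pipeline, this sorts every character of the input, so the cost grows with the size of the file rather than with the number of distinct characters.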

Solution 2

Steven's solution is a good, simple one. It does not perform well on very large files (files that don't fit comfortably in about half your RAM) because of the sorting step. Here's an awk version that counts in an array instead. It's also a little more complicated because it tries to do the right thing for a few special characters (newlines, ', \, :).

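awk '
  # count every character of the line, plus one record separator (newline)
  {for (i=1; i<=length; i++) ++c[substr($0,i,1)]; ++c[RS]}
  # escape the characters that would confuse the output or the sort below
  function chr (x) {return x=="\n" ? "\\n" : x==":" ? "\\072" :
                           x=="\\" || x=="'\''" ? "\\" x : x}
  END {for (x in c) printf "'\''%s'\'': %d\n", chr(x), c[x]}
' | sort -t : -k 2nr | sed 's/\\072/:/'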

Here's a Perl solution on the same principle. Perl has the advantage of being able to sort internally. Also this will correctly not count an extra newline if the file does not end in a newline character.

perl -ne '
  ++$c{$_} foreach split //;
  END { printf "'\''%s'\'': %d\n", /[\\'\'']/ ? "\\$_" : /./ ? $_ : "\\n", $c{$_}
        foreach (sort {$c{$b} <=> $c{$a}} keys %c) }'
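The principle shared by the awk and Perl versions (tally into a hash table, then sort only the distinct characters) can be sketched in Python as well. This is an illustration under assumptions, not a port of either script: the names count_chars and report are invented here, and the output uses Python's repr quoting, which differs slightly from the escaping above (an apostrophe is shown as "'").

```python
from collections import Counter
import io


def count_chars(lines):
    """Tally every character from an iterable of text lines.

    Hypothetical helper: `lines` can be an open text file or any
    iterable of strings.
    """
    counts = Counter()
    for line in lines:
        counts.update(line)  # one hash-table increment per character
    return counts


def report(counts):
    """Format the tally as 'char': count lines, most frequent first."""
    # Only the distinct characters get sorted here, not the whole input.
    return [f"{char!r}: {n}" for char, n in counts.most_common()]


if __name__ == "__main__":
    # Sample input whose last line has no trailing newline.
    sample = io.StringIO("peanut butter\nand jelly")
    for entry in report(count_chars(sample)):
        print(entry)
```

Because the lines are counted exactly as read, a file that does not end in a newline does not get an extra newline added to its tally.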

Solution 3

Simple and relatively performant:

fold -c1 testfile.txt | sort | uniq -c

This tells fold to wrap (i.e. insert a newline) after every character.

How tested:

- a 128MB all-ASCII file

  - Created by find . -type f -name '*.[hc]' -exec cat {} >> /tmp/big.txt \; in a few codebases.

- workstation-class machine (real iron, not VM)

- environment variable LC_ALL=C

Runtimes in descending order:

- Steven's sed|sort|uniq solution (https://unix.stackexchange.com/a/5011/427210): 102.5 sec

- my fold|sort|uniq solution: 59.3 sec

- my fold|sort|uniq solution, with --buffer-size=12G option given to sort: 38.9 sec

- my fold|sort|uniq solution, with --buffer-size=12G and --stable options given to sort: 37.9 sec

- Giles's perl solution (https://unix.stackexchange.com/a/5013/427210): 34.0 sec

  - Winner! Like they say, the fastest sort is not having to sort. :-)

Solution 4

More obvious solution that I use to count occurrences of characters in a file:

cat filename | grep -o . | sort | uniq -c | sort -bnr

cat pipes the file to grep -o ., which prints every character on its own line (newlines themselves are not matched). sort then groups identical characters together, uniq -c collapses each group and prefixes it with the number of occurrences, and sort -bnr sorts the result again, by count, in descending order.

With a file that contains the text "Peanut butter and jelly caused the elderly lady to think about her past."

Output:

13

9 e

7 d

5 s

5 a

4 o

4 h

... and more

The first line is the number of space characters in the file; you can filter those out, if you like, by passing the input through tr -d " " first.

Solution 5

A slow but relatively memory-friendly version, using Ruby. It needs about a dozen MB of RAM, regardless of input size.

# count.rb
ARGF.
  each_char.
  each_with_object({}) {|e,a| a[e] ||= 0; a[e] += 1}.
  each {|i| puts i.join("\t")}

ruby count.rb < input.txt

t 20721

d 20628

S 20844

k 20930

h 20783

... etc
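The same bounded-memory idea can be sketched in Python by reading the input in fixed-size chunks instead of line by line, so that even a file without any newlines never has to be held in memory at once. This is an assumption-laden illustration: the name count_chars_chunked and the default chunk size are arbitrary choices made here, and no claim is made about how its speed or memory use compares with the Ruby script.

```python
from collections import Counter
import io


def count_chars_chunked(stream, chunk_size=64 * 1024):
    """Count characters from a text stream, holding one chunk at a time.

    Hypothetical helper: `stream` is any text-mode file-like object.
    """
    counts = Counter()
    while True:
        chunk = stream.read(chunk_size)  # at most chunk_size characters
        if not chunk:                    # empty string means end of input
            break
        counts.update(chunk)
    return counts


if __name__ == "__main__":
    sample = io.StringIO("hello world\n" * 3)
    # Tab-separated output, in first-seen order.
    for char, n in count_chars_chunked(sample, chunk_size=4).items():
        print(f"{char}\t{n}")
```

Because the stream is opened in text mode, read() returns whole characters, so a multi-byte character is never split across two chunks.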

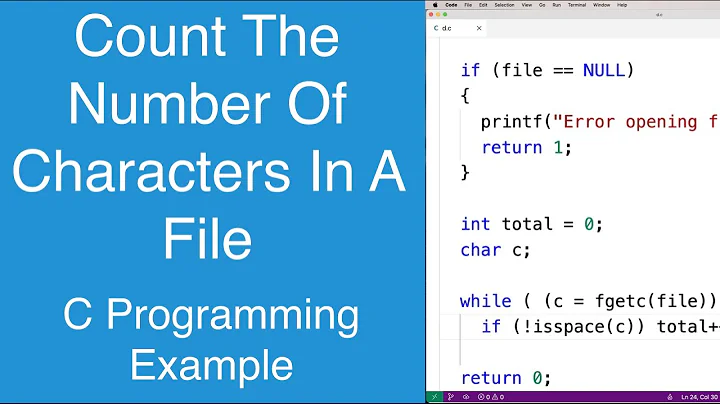

Related videos on Youtube

Mnementh

Updated on September 17, 2022

Comments

-

Mnementh over 1 year

I need a program that outputs the number of occurrences of each different character in a file. Example:

> stats testfile
' ': 207
'e': 186
'n': 102

Is there any tool that does this?

-

Sparr over 13 years

+1 for not doing that horrible sort

-

mb21 over 10 years

On sed for Mac OS X it's

sed 's/\(.\)/\1\'$'\n/g' text.txt

-

bitinerant over 4 years

Very nice, but unfortunately it does not work correctly if the text contains Unicode (utf8) characters. There may be a way to make sed do this, but Jacob Vlijm's Python solution worked well for me.