How to remove previous RAID configuration in CentOS for re-install

Solution 1

The problem was with the CentOS Anaconda installer. The Ubuntu installer had no problem seeing the individual drives, but even a full Ubuntu install on the drives did not clear out the RAID metadata. What ended up working was starting the CentOS installer using

linux text nodmraid

That let the installer run without checking for existing RAID configurations, and the partitioning went through.

Solution 2

This is an old thread, but it ranks high on Google, so many people read it and it needs to be updated.

The "correct" way would be to use mdadm with --zero-superblock.

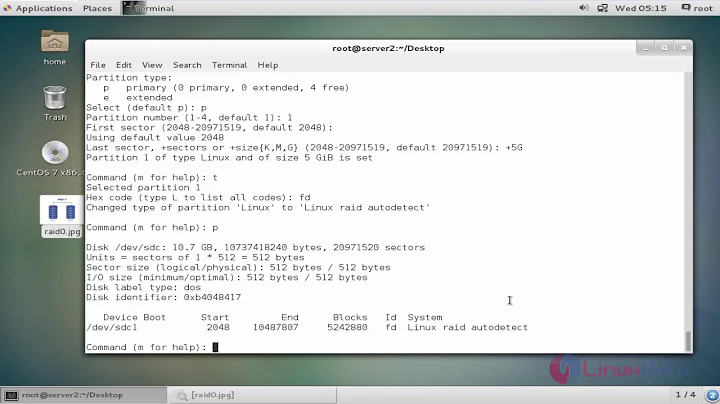

## If the device is being reused or re-purposed from an existing array, erase any old RAID configuration information:

mdadm --zero-superblock /dev/<drive>

## or if a particular partition on a drive is to be deleted:

mdadm --zero-superblock /dev/<partition>

man mdadm

--zero-superblock

If the device contains a valid md superblock, the block is overwritten with zeros.

With --force the block where the superblock would be is overwritten even if it doesn't appear to be valid.
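As a minimal sketch of how this might be applied to several former array members, the helper below only prints the commands rather than running them, so they can be reviewed first. The function name plan_zero_superblock and the device names are hypothetical, and it assumes any array using the devices has already been stopped (for example with mdadm --stop on the relevant md device).

```shell
#!/bin/sh
# plan_zero_superblock DEVICE...
# Hypothetical helper: for each device, print an `mdadm --examine` command
# (to show whether an md superblock is present) followed by the
# `mdadm --zero-superblock` command that would erase it.
# Nothing is executed here; the output is only a plan.
plan_zero_superblock() {
    for dev in "$@"; do
        printf 'mdadm --examine %s\n' "$dev"
        printf 'mdadm --zero-superblock %s\n' "$dev"
    done
}

# Example with placeholder partitions from an old RAID1 pair.
plan_zero_superblock /dev/sda1 /dev/sdb1
```

Once the printed plan looks right, the commands can be run as root. They are destructive for the RAID metadata, so it is worth double-checking the device names first.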

The dd method with bs=<block size> also works, but be careful: not all superblocks are written at the beginning of the disk; some are written at the end.

Update: prefer gdisk for wiping over any other method.

# wipe any GPT data or MBR data

gdisk /dev/sdc

x = extra functionality

z = zap GPT data structures (+ MBR also after)
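For scripted use, the same zap can be done non-interactively with sgdisk, the command-line companion to gdisk from the GPT fdisk project. The sketch below assumes sgdisk is installed; zap_plan is a hypothetical helper that only prints the commands so that nothing is destroyed by accident.

```shell
#!/bin/sh
# zap_plan DISK...
# Hypothetical helper: print one `sgdisk --zap-all` command per disk.
# --zap-all destroys both the GPT and the MBR data structures, which
# corresponds to the x -> z sequence in interactive gdisk.
zap_plan() {
    for disk in "$@"; do
        printf 'sgdisk --zap-all %s\n' "$disk"
    done
}

# Example: show the command for the disk used above.
zap_plan /dev/sdc
```

Run the printed command as root only after confirming that the disk name is the one you intend to wipe.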


Solution 3

For me, the fastest (in other words, the easiest to remember) way to fix this is to boot into rescue mode and overwrite the first 50 KiB of the disk with dd:

dd if=/dev/zero of=/dev/sda bs=512 count=100

should do the trick. This overwrites the MBR, the partition table, and any RAID metadata stored at the start of the disk.

Solution 4

You can also use wipefs to "wipe a signature from a device".

To remove all signatures from /dev/sdb:

wipefs -a /dev/sdb

To list what it finds without removing anything, run it without the -a option:

wipefs /dev/sdb

See man wipefs for details.

Solution 5

I ran into this as well. Version 0.90 metadata puts the software RAID info at the end of the disk. You may want to use dd to zero out the last few MB instead.
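One way to work out the dd arguments for this is sketched below. The helper tail_wipe_args is hypothetical; it assumes the disk size is given in 512-byte sectors, which is what blockdev --getsz reports, and it only prints the seek and count values instead of writing anything.

```shell
#!/bin/sh
# tail_wipe_args SECTORS MIB
# Hypothetical helper: given a disk size in 512-byte sectors and a number
# of MiB to clear, print the dd seek/count arguments (for bs=512) that
# cover the final MIB mebibytes of the disk.
tail_wipe_args() {
    sectors=$1
    mib=$2
    count=$((mib * 2048))          # 2048 sectors of 512 bytes = 1 MiB
    if [ "$count" -gt "$sectors" ]; then
        count=$sectors             # disk smaller than MIB: cover all of it
    fi
    seek=$((sectors - count))      # first sector of the region to zero
    printf 'seek=%s count=%s\n' "$seek" "$count"
}

# Example: a 1 GiB disk has 2097152 sectors; cover its last 4 MiB.
tail_wipe_args 2097152 4

# On a real system (as root, destructive) the values would be used like:
#   dd if=/dev/zero of=/dev/sdX bs=512 $(tail_wipe_args "$(blockdev --getsz /dev/sdX)" 4)
```

Here /dev/sdX is a placeholder. Printing the arguments first makes it easier to sanity-check the offset before anything is overwritten.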


John P

Updated on September 17, 2022

Comments

-

John P over 1 year

I have a server that was previously set up with software RAID1 under CentOS 5.5 (/dev/sda and sdb). I added two additional drives to the server and was attempting to re-install CentOS. The CentOS installer sees the two new drives fine (sdc and sdd); however, it does not see the two original drives, sda and sdb, as individual drives. Instead it only shows Drive /dev/mapper/pdc_... (Model: Linux device-mapper). Basically, what I need to do is strip all RAID configuration off these drives and allow the installer to see them as individual physical disks.

I've tried pulling all the drives except one of the original ones, installing a minimal CentOS, and running dmraid -r -E, but it still sees the old RAID partition. None of the CentOS install options (remove previous partitions, etc.) seem to work.

-

John P over 13 years

That did not seem to work. The CentOS installer still sees mapper/ddf1_rootfs (Linux device-mapper). Booting from an Ubuntu live CD, I see the disks correctly, and I overwrote them with /dev/zero, but the CentOS installer still sees the old device-mapper.

-

FooBee over 13 years

Hmmh, this never failed for me. I don't know what RHEL/CentOS does differently... Maybe you could try to manually create a new partition table with a dummy partition and file system on it and hope the installer allows you to delete them.