How to set cpu limit for given process permanently. Cpulimit and nice don't work

In the absence of requested details ...

Here is how I use cgroups on Ubuntu.

Throughout this post, you will need to change the variable "$USER" to the user running the process.

I have included memory limits as well, since that is bound to be a frequently asked question; if you do not need them, leave them out.

1) Install cgroup-bin

sudo apt-get install cgroup-bin

2) Reboot. The cgroup hierarchy is now mounted at /sys/fs/cgroup
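If you want to confirm that the controllers are available after the reboot, something like the following may help. It is only a sketch: controller_enabled is a hypothetical helper name, and it assumes the kernel exposes the usual /proc/cgroups table (subsystem name in column 1, enabled flag in column 4).

```shell
#!/bin/sh
# Hypothetical helper: succeed if the named controller is listed as enabled.
# The optional second argument defaults to /proc/cgroups; it exists only so
# the check can be pointed at another file.
controller_enabled() {
    awk -v name="$1" '$1 == name && $4 == 1 { found = 1 } END { exit !found }' \
        "${2:-/proc/cgroups}" 2>/dev/null
}

for ctrl in cpu memory; do
    if controller_enabled "$ctrl"; then
        echo "$ctrl controller is enabled"
    else
        echo "$ctrl controller is not enabled (or /proc/cgroups is missing)"
    fi
done
```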

3) Make a cgroup for your user (the owner of the process)

# Change $USER to the system user running your process.

sudo cgcreate -a $USER -g memory,cpu:$USER

4) Your user can then manage resources. By default a group gets 1024 cpu units (shares), so a value of 100 limits it to about 10 % cpu. Memory limits are given in bytes.

# About 10 % cpu

echo 100 > /sys/fs/cgroup/cpu/$USER/cpu.shares

# 10 MB

echo 10000000 > /sys/fs/cgroup/memory/$USER/memory.limit_in_bytes
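For other percentages, the arithmetic from step 4 can be sketched as a small function. shares_for_percent is a hypothetical name, and it follows the same assumption as above: 1024 shares count as 100 %. As noted further down in the comments, shares are a relative weight, so the limit only bites while other processes are competing for the cpu.

```shell
#!/bin/sh
# Hypothetical helper: convert a percentage of the default 1024 shares
# into a value for cpu.shares (integer arithmetic, rounded down).
shares_for_percent() {
    echo $(( 1024 * $1 / 100 ))
}

shares_for_percent 10   # prints 102
```

The result can be written to cpu.shares the same way as the echo 100 line in step 4.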

5) Start your process (change exec to cgexec)

# -g specifies the control group to run the process in

# Limit cpu

cgexec -g cpu:$USER command <options> &

# Limit cpu and memory

cgexec -g memory,cpu:$USER command <options> &
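If the command is started from a script, it can be handy to fail with a clear message when cgexec or the group is missing. Below is a rough sketch; run_limited is a hypothetical wrapper, and it assumes the cpu hierarchy lives under /sys/fs/cgroup/cpu as described in step 2.

```shell
#!/bin/sh
# Hypothetical wrapper: run_limited GROUP COMMAND [ARGS...]
# Runs COMMAND in the cpu cgroup GROUP, or explains why it cannot.
run_limited() {
    group=$1
    shift
    if ! command -v cgexec >/dev/null 2>&1; then
        echo "cgexec not found; install cgroup-bin first" >&2
        return 127
    fi
    # Assumption: the cpu controller is mounted at /sys/fs/cgroup/cpu
    if [ ! -d "/sys/fs/cgroup/cpu/$group" ]; then
        echo "cgroup cpu:$group does not exist; create it with cgcreate" >&2
        return 1
    fi
    cgexec -g "cpu:$group" "$@"
}
```

Usage would be along the lines of run_limited bodhi sleep 100 &, with bodhi replaced by your own group.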

Configuration

Assuming cgroups are working for you ;)

Edit /etc/cgconfig.conf and add your custom cgroup.

# Graphical

gksudo gedit /etc/cgconfig.conf

# Command line

sudo -e /etc/cgconfig.conf

Add in your cgroup. Again change $USER to the user name owning the process.

group $USER {
# Specify which users can admin (set limits) the group
    perm {
        admin {
            uid = $USER;
        }
# Specify which users can add tasks to this group
        task {
            uid = $USER;
        }
    }
# Set the cpu and memory limits for this group
    cpu {
        cpu.shares = 100;
    }
    memory {
        memory.limit_in_bytes = 10000000;
    }
}

You can also specify groups with gid = $GROUP; /etc/cgconfig.conf is well commented.
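Since $USER has to be replaced by hand in the file, one option is to let the shell print the stanza with the real values filled in and then paste it into /etc/cgconfig.conf. This is only a sketch: cgconfig_stanza is a hypothetical helper, and bodhi, 100 and 10000000 are just the example values used in this post.

```shell
#!/bin/sh
# Hypothetical helper: print a cgconfig.conf group stanza.
# Arguments: user name, cpu.shares value, memory limit in bytes.
cgconfig_stanza() {
    user=$1
    shares=$2
    mem_bytes=$3
    cat <<EOF
group $user {
    perm {
        admin {
            uid = $user;
        }
        task {
            uid = $user;
        }
    }
    cpu {
        cpu.shares = $shares;
    }
    memory {
        memory.limit_in_bytes = $mem_bytes;
    }
}
EOF
}

cgconfig_stanza bodhi 100 10000000
```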

Now again run your process with cgexec -g cpu:$USER command <options>

You can see your process (by PID) in /sys/fs/cgroup/cpu/$USER/tasks

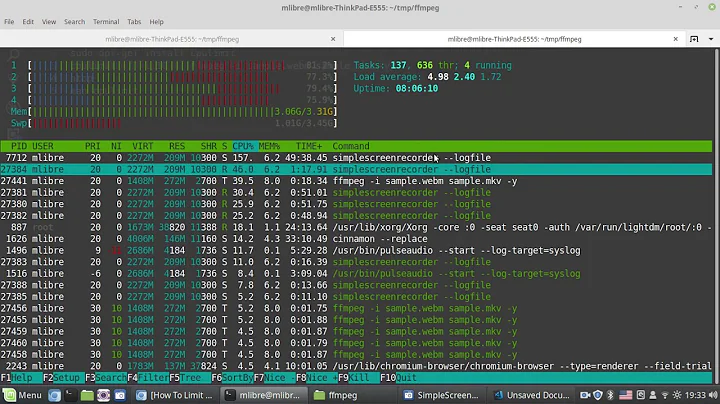

Example

bodhi@ufbt:~$ cgexec -g cpu:bodhi sleep 100 &

[1] 1499

bodhi@ufbt:~$ cat /sys/fs/cgroup/cpu/bodhi/tasks

1499
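The same check can be scripted. The sketch below uses a hypothetical helper, pid_in_tasks, which simply looks for an exact PID line in whatever tasks file you point it at.

```shell
#!/bin/sh
# Hypothetical helper: pid_in_tasks TASKS_FILE PID
# Succeeds only if PID appears as a whole line in TASKS_FILE.
pid_in_tasks() {
    tasks_file=$1
    pid=$2
    grep -qxF -- "$pid" "$tasks_file"
}

# Example use (needs the bodhi group from above, so it is left commented out):
# cgexec -g cpu:bodhi sleep 100 &
# pid_in_tasks /sys/fs/cgroup/cpu/bodhi/tasks "$!" && echo "limited"
```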

For additional information see: http://docs.redhat.com/docs/en-US/Red_Hat_Enterprise_Linux/6/html/Resource_Management_Guide/


Rafal

Updated on September 18, 2022

Comments

-

Rafal almost 2 years

There are many similar questions but none of them help me with my problem.

I have Ubuntu 11.10 (the desktop version) on a server, with a website running on it. There is one process that is very important but does not have to run at high priority. I want to permanently limit cpu usage for that process, not for a user. The process is started by exec (the PHP function, not a system process).

So I see 2 options: 1. Add some kind of limit every time the function is executed. 2. Limit cpu usage permanently for this process.

I have tried to use "nice" and cpulimit, but nothing seems to work. Nice doesn't have any effect, and cpulimit (with -e) says: "no target process found".

I am quite a beginner, so please assume I know almost nothing.

-

Panther over 12 years: Can you please tell us what you have tried? cgroups can do this for you.

Mingliang Liu over 10 years: As to the configuration, you can run cgsnapshot -s to retrieve the information without writing the configuration file manually, the latter of which is not recommended.

Ken Sharp about 9 years: Do you want to actually throttle the process, i.e. make sure that it never exceeds a set amount of time?

MSH about 7 years: I used this method and it worked for me: ubuntuforums.org/showthread.php?t=992706

-

-

Rafal over 12 years: I managed to finish part 1. It's working, but you should add that the process is limited only while some other process needs cpu/memory. It's not obvious, and it took me a long time to figure out (as I am a beginner). Do I understand correctly that "Configuration" is for saving the settings even after a reboot?

-

Panther over 12 years: Glad you got it sorted. There is a bit of a learning curve =) Yes, configuration is to keep the settings across booting.

Rafal over 12 years: When I use cgroup from the command line it works fine. But when I execute the same command from the exec function in php, <?php exec("cgexec -g cpu:GroupHere NameOfProcess InputFile OutputFile"); it doesn't work. The only difference is that from the command line I use files which are in the folder I'm in, and from php I use the long path to those files. Any idea what the problem could be? I use the same user in both cases.

-

Flimm almost 11 years: @Rafal: I don't think your first comment is correct; memory should be limited even if no other processes are taking much memory up.

-

Rafal over 10 years: @Flimm Only 2 processes were running when I was testing this solution: apache and the process that should be limited. When no apache connection was up, or there was only one connection to apache, the limited process took 100% of the cpu. It got limited only once several connections to apache were up (I did this by refreshing the website a lot from a web browser). So maybe it was a case of no other process running.

-

Diego over 8 years: For those looking to set a hard limit on CPU usage, even when no other process is running, look at the cpu.cfs_quota_us parameter (see manual).
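To illustrate the hard limit mentioned in the last comment: cpu.cfs_quota_us is the run time allowed per cpu.cfs_period_us, both in microseconds, so the quota for a given percentage of one cpu can be worked out as below. This is a sketch, not a tested recipe; quota_for_percent is a hypothetical helper, and the 100000 default assumes the usual 100 ms period.

```shell
#!/bin/sh
# Hypothetical helper: quota_for_percent PERCENT [PERIOD_US]
# Prints the cpu.cfs_quota_us value for PERCENT of one cpu.
# Assumption: the period is 100000 microseconds unless given.
quota_for_percent() {
    pct=$1
    period_us=${2:-100000}
    echo $(( period_us * pct / 100 ))
}

quota_for_percent 10   # prints 10000
```

The value would then go into /sys/fs/cgroup/cpu/$USER/cpu.cfs_quota_us, the same way cpu.shares is written in step 4.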