LVM logical volumes fail to activate on boot after 11.04 to 11.10 live upgrade

Solution 1

The machine gradually became worse and worse as updates were installed. It was rebooted this morning and didn't boot back up - /home wouldn't activate properly at all, and that was followed by dbus errors and so on.

9 hours later, I've done a full reinstall of 11.10 onto those same partitions and they now work fine. Very odd, but this appears to have been the solution.

Thanks ppetraki, I agree with your points on performance and will look at this when the machine is replaced.

Solution 2

Nothing in the logs stands out as an error or warning. However, I did notice that you've created 3 MDs, all using the same backing store, with essentially 3 different filesystems (ext3, ext4, reiserfs, and maybe raw VMware too?). I wonder if all the competing I/O writeback strategies, combined with multiple locks and queues on the same backing store, are crowding out your attempts to use the adjacent MDs under certain conditions?

This would blowback on the filesystem as a failed journal or writeback and manifest in LVM as a failure to assemble or activate the mapping.

Ideally, your setup should look like this:

sda [sda1 {SPAN entire disk - 1-2%}]

sdb [sdb1 {SPAN entire disk - 1-2%}]

md1 (RAID1) [sda1 sdb1]

vg [md1]

You can boot directly from a root LVM volume backed by an MD; I do this myself. The reason you don't span the entire disk is that if you ever get bad blocks and use the vendor's low-level repair tool, the tool can sometimes run out of free blocks and commandeer blocks that are in use. That changes the net size of the disk, breaking your partition table and certainly MD in the process. See MDADM Superblock Recovery.
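The layout above could be built roughly as follows. This is only a sketch: `plan_raid1_lvm` is a made-up helper that prints the commands instead of running them, and the msdos label, the 98% end point, and the names md1 and vg are assumptions taken from the diagram rather than anything tested on the machine in question.

```shell
#!/bin/sh
# Print (do not run) the commands for the suggested layout:
# one partition per disk, one RAID1 array, one volume group.
# plan_raid1_lvm is a hypothetical helper; the msdos label, the 98%
# end point, and the names md1 and vg are assumptions for illustration.
plan_raid1_lvm() {
    disk_a=$1
    disk_b=$2
    for disk in "$disk_a" "$disk_b"; do
        echo "parted -s $disk mklabel msdos"
        # Stop at 98% so the last ~2% of the disk stays unpartitioned.
        echo "parted -s $disk mkpart primary 1MiB 98%"
        # Mark partition 1 as a RAID member.
        echo "parted -s $disk set 1 raid on"
    done
    # Mirror the two partitions, then put a single VG on the mirror.
    echo "mdadm --create /dev/md1 --level=1 --raid-devices=2 ${disk_a}1 ${disk_b}1"
    echo "pvcreate /dev/md1"
    echo "vgcreate vg /dev/md1"
}

# Example: review the plan before running anything by hand.
# plan_raid1_lvm /dev/sda /dev/sdb
```

Printing the plan rather than executing it is deliberate, since every one of these commands destroys existing data on the named disks.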

If you still have problems with one MD, instead of 3, then either one of your filesystems or VMware is being naughty and someone's starving out your siblings, or there's a real problem with your backing store which makes everyone else a victim.

nOw2

Updated on September 18, 2022

Comments

-

nOw2 almost 2 years

I have upgraded my server from 11.04 to 11.10 (64 bit) using

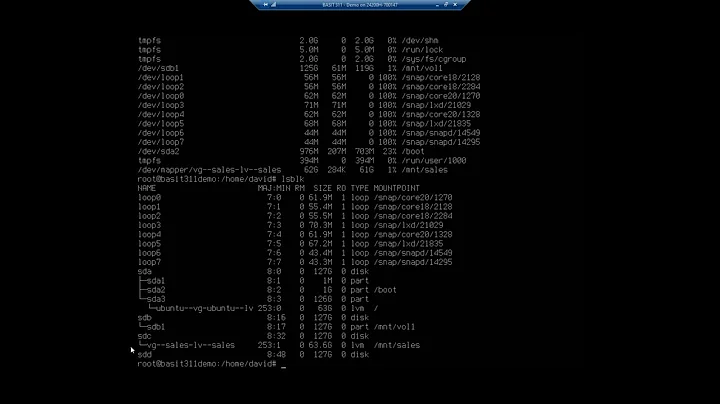

sudo do-release-upgradeThe machine now halts during boot because it can't find certain logical volumes in /mnt. When this happens, I hit "m" to drop down to a root shell, and I see the following (forgive me for inaccuracies, I'm recreating this):

$ lvs
  LV    VG Attr   LSize   Origin Snap% Move Log Copy% Convert
  audio vg -wi--- 372.53g
  home  vg -wi-ao 186.26g
  swap  vg -wi-ao   3.72g

The corresponding block devices in /dev are missing for "audio".

If I run:

$ vgchange -a y
$ lvs
  LV    VG Attr   LSize   Origin Snap% Move Log Copy% Convert
  audio vg -wi-ao 372.53g
  home  vg -wi-ao 186.26g
  swap  vg -wi-ao   3.72g

Then all LVs are activated and the system continues to boot perfectly after exiting from the root maintenance shell.

What is going on, and how would I set the LVs to always be active on boot up?
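For anyone landing in the same maintenance shell, the inactive LVs can be picked out of the Attr column before running vgchange. A minimal sketch, assuming the `lvs --noheadings -o lv_name,lv_attr` report format, where the fifth Attr character is `a` for an active LV; the function name `inactive_lvs` is made up for illustration:

```shell
#!/bin/sh
# Print the name of every LV whose Attr field lacks the "active" flag.
# Input (stdin): lines of "<lv_name> <lv_attr>", as produced by
#   lvs --noheadings -o lv_name,lv_attr
# The fifth Attr character is the state; "a" means active.
# inactive_lvs is a hypothetical helper name, not part of LVM.
inactive_lvs() {
    awk 'NF >= 2 && substr($2, 5, 1) != "a" { print $1 }'
}

# Hypothetical usage at the maintenance shell (needs root):
#   lvs --noheadings -o lv_name,lv_attr | inactive_lvs
#   vgchange -a y vg
```

This only reports which LVs were skipped and activates them by hand; it does not explain why they were skipped at boot.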

Update to answer questions raised:

There is one volume group:

# vgs
  VG #PV #LV #SN Attr   VSize VFree
  vg   1   6   0 wz--n- 1.68t    0
# pvs
  PV       VG Fmt  Attr PSize PFree
  /dev/md2 vg lvm2 a-   1.68t    0

Over a RAID1 MD array consisting of a matched pair of SATA hard disks:

# cat /proc/mdstat
Personalities : [linear] [multipath] [raid0] [raid1] [raid6] [raid5] [raid4] [raid10]
md2 : active raid1 sda3[0] sdb3[1]
      1806932928 blocks [2/2] [UU]
md1 : active raid1 sda2[0] sdb2[1]
      146484160 blocks [2/2] [UU]
md3 : active raid1 sda4[0] sdb4[1]
      95168 blocks [2/2] [UU]
unused devices: <none>

So:

# mount
/dev/md1 on / type ext4 (rw,errors=remount-ro)
proc on /proc type proc (rw,noexec,nosuid,nodev)
sysfs on /sys type sysfs (rw,noexec,nosuid,nodev)
fusectl on /sys/fs/fuse/connections type fusectl (rw)
none on /sys/kernel/debug type debugfs (rw)
none on /sys/kernel/security type securityfs (rw)
udev on /dev type devtmpfs (rw,mode=0755)
devpts on /dev/pts type devpts (rw,noexec,nosuid,gid=5,mode=0620)
tmpfs on /run type tmpfs (rw,noexec,nosuid,size=10%,mode=0755)
none on /run/lock type tmpfs (rw,noexec,nosuid,nodev,size=5242880)
none on /run/shm type tmpfs (rw,nosuid,nodev)
/dev/md3 on /boot type ext3 (rw)
/dev/mapper/vg-home on /home type reiserfs (rw)
/dev/mapper/vg-audio on /mnt/audio type reiserfs (rw)
rpc_pipefs on /var/lib/nfs/rpc_pipefs type rpc_pipefs (rw)
nfsd on /proc/fs/nfsd type nfsd (rw)

Update with full lvdisplay - as suspected the partitions that work are first in the list. Can't see anything odd myself. I've included the full list here - this is all my LVM partitions.

This is from the running machine. If output from the broken state is useful, that'll need some time to obtain.

# lvdisplay
  --- Logical volume ---
  LV Name                /dev/vg/swap
  VG Name                vg
  LV UUID                INuOTR-gwB8-Z0RW-lGHM-qtRF-Xc7D-Bv43ah
  LV Write Access        read/write
  LV Status              available
  # open                 2
  LV Size                3.72 GiB
  Current LE             953
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:0

  --- Logical volume ---
  LV Name                /dev/vg/home
  VG Name                vg
  LV UUID                7L34YS-Neh0-V5OL-bFfd-TmO4-8CkV-GwXuRL
  LV Write Access        read/write
  LV Status              available
  # open                 2
  LV Size                186.26 GiB
  Current LE             47683
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:1

  --- Logical volume ---
  LV Name                /dev/vg/audio
  VG Name                vg
  LV UUID                AX1ZG5-vwyk-mYVl-DBHt-Rgp2-DSwg-oDZlbS
  LV Write Access        read/write
  LV Status              available
  # open                 2
  LV Size                372.53 GiB
  Current LE             95367
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:2

  --- Logical volume ---
  LV Name                /dev/vg/vmware
  VG Name                vg
  LV UUID                bj0m1h-jndV-GWU8-aePm-gaoo-Q0pE-cWhWj2
  LV Write Access        read/write
  LV Status              available
  # open                 2
  LV Size                372.53 GiB
  Current LE             95367
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:3

  --- Logical volume ---
  LV Name                /dev/vg/backup
  VG Name                vg
  LV UUID                PHDnjD-8uT8-yHB2-8SBW-d7E1-1Zws-Qx0Tp8
  LV Write Access        read/write
  LV Status              available
  # open                 1
  LV Size                93.13 GiB
  Current LE             23841
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:4

  --- Logical volume ---
  LV Name                /dev/vg/download
  VG Name                vg
  LV UUID                64Your-pvNG-7EvG-exns-eK9A-vMDD-eozIBM
  LV Write Access        read/write
  LV Status              available
  # open                 2
  LV Size                695.05 GiB
  Current LE             177934
  Segments               2
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:5

Update 2012/01/28: a reboot has given an opportunity to look at the machine in its broken state.

I don't know if this is relevant, but although the machine was shut down cleanly, the file systems were not clean on starting up again.

# lvs
  LV       VG Attr   LSize   Origin Snap% Move Log Copy% Convert
  audio    vg -wi--- 372.53g
  backup   vg -wi---  93.13g
  download vg -wi--- 695.05g
  home     vg -wi-ao 186.26g
  swap     vg -wi-ao   3.72g
  vmware   vg -wi--- 372.53g

Although perhaps of interest is (note download):

# lvs --segments
  LV       VG Attr   #Str Type   SSize
  audio    vg -wi---    1 linear 372.53g
  backup   vg -wi---    1 linear  93.13g
  download vg -wi---    1 linear 508.79g
  download vg -wi---    1 linear 186.26g
  home     vg -wi-ao    1 linear 186.26g
  swap     vg -wi-ao    1 linear   3.72g
  vmware   vg -wi---    1 linear 372.53g

More:

# lvdisplay
  --- Logical volume ---
  LV Name                /dev/vg/swap
  VG Name                vg
  LV UUID                INuOTR-gwB8-Z0RW-lGHM-qtRF-Xc7D-Bv43ah
  LV Write Access        read/write
  LV Status              available
  # open                 2
  LV Size                3.72 GiB
  Current LE             953
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:0

  --- Logical volume ---
  LV Name                /dev/vg/home
  VG Name                vg
  LV UUID                7L34YS-Neh0-V5OL-bFfd-TmO4-8CkV-GwXuRL
  LV Write Access        read/write
  LV Status              available
  # open                 2
  LV Size                186.26 GiB
  Current LE             47683
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     256
  Block device           252:1

  --- Logical volume ---
  LV Name                /dev/vg/audio
  VG Name                vg
  LV UUID                AX1ZG5-vwyk-mYVl-DBHt-Rgp2-DSwg-oDZlbS
  LV Write Access        read/write
  LV Status              NOT available
  LV Size                372.53 GiB
  Current LE             95367
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto

  --- Logical volume ---
  LV Name                /dev/vg/vmware
  VG Name                vg
  LV UUID                bj0m1h-jndV-GWU8-aePm-gaoo-Q0pE-cWhWj2
  LV Write Access        read/write
  LV Status              NOT available
  LV Size                372.53 GiB
  Current LE             95367
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto

  --- Logical volume ---
  LV Name                /dev/vg/backup
  VG Name                vg
  LV UUID                PHDnjD-8uT8-yHB2-8SBW-d7E1-1Zws-Qx0Tp8
  LV Write Access        read/write
  LV Status              NOT available
  LV Size                93.13 GiB
  Current LE             23841
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto

  --- Logical volume ---
  LV Name                /dev/vg/download
  VG Name                vg
  LV UUID                64Your-pvNG-7EvG-exns-eK9A-vMDD-eozIBM
  LV Write Access        read/write
  LV Status              NOT available
  LV Size                695.05 GiB
  Current LE             177934
  Segments               2
  Allocation             inherit
  Read ahead sectors     auto

# vgs
  VG #PV #LV #SN Attr   VSize VFree
  vg   1   6   0 wz--n- 1.68t    0
# pvs
  PV       VG Fmt  Attr PSize PFree
  /dev/md2 vg lvm2 a-   1.68t    0

From dmesg:

[ 0.908322] ata3.00: ATA-8: ST32000542AS, CC34, max UDMA/133
[ 0.908325] ata3.00: 3907029168 sectors, multi 16: LBA48 NCQ (depth 0/32)
[ 0.908536] ata3.01: ATA-8: ST32000542AS, CC34, max UDMA/133
[ 0.908538] ata3.01: 3907029168 sectors, multi 16: LBA48 NCQ (depth 0/32)
[ 0.924307] ata3.00: configured for UDMA/133
[ 0.940315] ata3.01: configured for UDMA/133
[ 0.940408] scsi 2:0:0:0: Direct-Access ATA ST32000542AS CC34 PQ: 0 ANSI: 5
[ 0.940503] sd 2:0:0:0: [sda] 3907029168 512-byte logical blocks: (2.00 TB/1.81 TiB)
[ 0.940541] sd 2:0:0:0: Attached scsi generic sg0 type 0
[ 0.940544] sd 2:0:0:0: [sda] Write Protect is off
[ 0.940546] sd 2:0:0:0: [sda] Mode Sense: 00 3a 00 00
[ 0.940564] sd 2:0:0:0: [sda] Write cache: enabled, read cache: enabled, doesn't support DPO or FUA
[ 0.940611] scsi 2:0:1:0: Direct-Access ATA ST32000542AS CC34 PQ: 0 ANSI: 5
[ 0.940699] sd 2:0:1:0: Attached scsi generic sg1 type 0
[ 0.940728] sd 2:0:1:0: [sdb] 3907029168 512-byte logical blocks: (2.00 TB/1.81 TiB)
[ 0.945319] sd 2:0:1:0: [sdb] Write Protect is off
[ 0.945322] sd 2:0:1:0: [sdb] Mode Sense: 00 3a 00 00
[ 0.945660] sd 2:0:1:0: [sdb] Write cache: enabled, read cache: enabled, doesn't support DPO or FUA
[ 0.993794] sda: sda1 sda2 sda3 sda4
[ 1.023974] sd 2:0:0:0: [sda] Attached SCSI disk
[ 1.024277] sdb: sdb1 sdb2 sdb3 sdb4
[ 1.024529] sd 2:0:1:0: [sdb] Attached SCSI disk
[ 1.537688] md: bind<sdb3>
[ 1.538922] bio: create slab <bio-1> at 1
[ 1.538983] md/raid1:md2: active with 2 out of 2 mirrors
[ 1.539005] md2: detected capacity change from 0 to 1850299318272
[ 1.540678] md2: unknown partition table
[ 1.540851] md: bind<sdb4>
[ 1.542231] md/raid1:md3: active with 2 out of 2 mirrors
[ 1.542245] md3: detected capacity change from 0 to 97452032
[ 1.543867] md: bind<sdb2>
[ 1.544680] md3: unknown partition table
[ 1.545627] md/raid1:md1: active with 2 out of 2 mirrors
[ 1.545642] md1: detected capacity change from 0 to 149999779840
[ 1.556008] generic_sse: 9824.000 MB/sec
[ 1.556010] xor: using function: generic_sse (9824.000 MB/sec)
[ 1.556721] md: raid6 personality registered for level 6
[ 1.556723] md: raid5 personality registered for level 5
[ 1.556724] md: raid4 personality registered for level 4
[ 1.560491] md: raid10 personality registered for level 10
[ 1.571416] md1: unknown partition table
[ 1.935835] EXT4-fs (md1): INFO: recovery required on readonly filesystem
[ 1.935838] EXT4-fs (md1): write access will be enabled during recovery
[ 2.901833] EXT4-fs (md1): orphan cleanup on readonly fs
[ 2.901840] EXT4-fs (md1): ext4_orphan_cleanup: deleting unreferenced inode 4981215
[ 2.901904] EXT4-fs (md1): ext4_orphan_cleanup: deleting unreferenced inode 8127848
[ 2.901944] EXT4-fs (md1): 2 orphan inodes deleted
[ 2.901946] EXT4-fs (md1): recovery complete
[ 3.343830] EXT4-fs (md1): mounted filesystem with ordered data mode. Opts: (null)
[ 64.851211] Adding 3903484k swap on /dev/mapper/vg-swap. Priority:-1 extents:1 across:3903484k
[ 67.600045] EXT4-fs (md1): re-mounted. Opts: errors=remount-ro
[ 68.459775] EXT3-fs: barriers not enabled
[ 68.460520] kjournald starting. Commit interval 5 seconds
[ 68.461183] EXT3-fs (md3): using internal journal
[ 68.461187] EXT3-fs (md3): mounted filesystem with ordered data mode
[ 130.280048] REISERFS (device dm-1): found reiserfs format "3.6" with standard journal
[ 130.280060] REISERFS (device dm-1): using ordered data mode
[ 130.284596] REISERFS (device dm-1): journal params: device dm-1, size 8192, journal first block 18, max trans len 1024, max batch 900, max commit age 30, max trans age 30
[ 130.284918] REISERFS (device dm-1): checking transaction log (dm-1)
[ 130.450867] REISERFS (device dm-1): Using r5 hash to sort names

-

ppetraki over 12 years

We need to know more about the storage that's actually part of the LVM. Are you using MD as well? Is the VG a pool of disks or just one basic hard disk? Thanks.

-

nOw2 over 12 years

I've added some additional information. It seems odd to me that the /home and swap LVs are set to active automatically, but the rest are not (there are three others in addition to the example 'audio'). I'm sure there is something simple I've missed. I wonder how many others have seen the same issue after an upgrade, as my install isn't anything special.

-

nOw2 over 12 years

I've added information from the broken state in case anything stands out.

-

ppetraki over 12 years

Very well, could you please vote and checkbox the appropriate responses so we can close out this issue? Thanks.

-

nOw2 over 12 years

My reputation here isn't high enough to give you a vote up :-) but I've accepted my own solution as the answer. Just happy that the machine is working now, though I'll certainly think twice about using do-release-upgrade again!