Maximum number of files in one ext3 directory while still getting acceptable performance?

Solution 1

Provided your distro supports the dir_index feature, you can easily have 200,000 files in a single directory. I'd keep it at about 25,000 though, just to be safe. Without dir_index, try to keep it at 5,000.

Solution 2

Be VERY careful how you select the directory split. "a/b/c" sounds like a recipe for disaster to me...

Do not just blindly go making a structure several directories deep, say 100 entries in the first level, 100 entries in the second level, 100 entries in the third. I've been there, done that, got the jacket, and had to restructure it when performance went in the crapper with a few million files. :-)

We have a client that did the "multiple directories" layout and ended up putting just one to five files per directory, and this was killing them. It took 3 to 6 hours to do a "du" in this directory structure. The savior here was an SSD: they were unwilling to rewrite this part of their application, and an SSD took this du time down from hours to minutes.

The problem is that each level of directory lookups takes seeks, and seeks are extremely expensive. The size of the directory is also a factor, so having it be smaller rather than larger is a big win.

To answer your question about how many files per directory: I've heard 1,000 talked about as "optimum", but performance at 10,000 seems to be fine.

So, what I'd recommend is one level of directories, each directory name being 2 characters long, made up of upper and lowercase letters and the digits, for around 3,800 directories in the top level (62 × 62 = 3,844). You can then hold 14M files with those sub-directories containing 3,800 files each, or a bit under 1,000 files per sub-directory (about 780) for 3M files.
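
A minimal sketch of such a one-level, two-character layout in Python. The answer does not say how the two characters are chosen, so picking them from a hash of the file name is an assumption here, and `shard_name` and `shard_path` are hypothetical helper names:

```python
import hashlib
import string
from pathlib import Path

# 26 lowercase + 26 uppercase + 10 digits = 62 symbols, so 62 * 62 = 3844 buckets.
ALPHABET = string.ascii_letters + string.digits
BUCKETS = len(ALPHABET) ** 2


def shard_name(filename: str) -> str:
    """Map a file name to a stable two-character bucket name (assumed scheme)."""
    digest = hashlib.sha256(filename.encode("utf-8")).digest()
    n = int.from_bytes(digest[:8], "big") % BUCKETS
    return ALPHABET[n // len(ALPHABET)] + ALPHABET[n % len(ALPHABET)]


def shard_path(root: str, filename: str) -> Path:
    """Return root/<bucket>/<filename>, exactly one directory level deep."""
    return Path(root) / shard_name(filename) / filename


if __name__ == "__main__":
    print(BUCKETS)                        # number of top-level directories
    print(3_000_000 // BUCKETS)           # average files per bucket for 3M files
    print(shard_path("data", "abc.ext"))  # where abc.ext would live
```

Hashing spreads files evenly regardless of how the names are distributed. Taking the first two characters of the name would be simpler, but it can leave some buckets far fuller than others.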

I have done a change like this for another client, and it made a huge difference.

Solution 3

I would suggest you try testing various directory sizes with a benchmarking tool such as postmark, because there are a lot of variables like cache size (both in the OS and in the disk subsystem) that depend on your particular environment.
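
If postmark is not at hand, a much cruder stand-in can give a first impression. The sketch below is not postmark and measures far less: it only times creating, listing, and stat-ing empty files in one directory. `time_directory` and its `base_dir` parameter are hypothetical names. Point `base_dir` at the ext3 filesystem you care about, since the default temporary directory may live on a different filesystem, and keep in mind that a warm cache will flatter the numbers.

```python
import os
import tempfile
import time
from typing import Optional


def time_directory(n_files: int, base_dir: Optional[str] = None) -> dict:
    """Create n_files empty files in a fresh directory and time basic operations."""
    with tempfile.TemporaryDirectory(dir=base_dir) as d:
        t0 = time.perf_counter()
        for i in range(n_files):
            # Opening in "w" mode creates an empty file.
            with open(os.path.join(d, f"f{i:08d}.dat"), "w"):
                pass
        t1 = time.perf_counter()
        names = os.listdir(d)
        t2 = time.perf_counter()
        for name in names:
            os.stat(os.path.join(d, name))
        t3 = time.perf_counter()
        return {
            "files": len(names),
            "create_s": t1 - t0,
            "list_s": t2 - t1,
            "stat_s": t3 - t2,
        }


if __name__ == "__main__":
    for n in (1_000, 10_000, 100_000):
        print(time_directory(n))
```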

My personal rule of thumb is to aim for a directory size of <= 20k files, although I've seen relatively decent performance with up to 100k files/directory.

Solution 4

I have all files go into folders like:

uploads/[date]/[hour]/yo.png

and don't have any performance problems.
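
A small sketch of how such a path could be built in Python. The exact date and hour formats are not given in the answer, so `YYYY-MM-DD` and a two-digit 24-hour value are assumptions, and `upload_path` is a hypothetical helper:

```python
from datetime import datetime
from pathlib import Path


def upload_path(root: str, filename: str, when: datetime) -> Path:
    """Build root/<date>/<hour>/<filename> for the given timestamp."""
    date_part = when.strftime("%Y-%m-%d")  # assumed date format
    hour_part = when.strftime("%H")        # assumed hour format, 00 to 23
    return Path(root) / date_part / hour_part / filename


if __name__ == "__main__":
    print(upload_path("uploads", "yo.png", datetime(2022, 9, 17, 14, 30)))
```

With this layout the number of files per directory depends on the upload rate per hour, so it only stays small if that rate does.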

Solution 5

I can confirm, on a pretty powerful server with plenty of memory under a decent load, that 70,000 files can cause all sorts of havoc. I went to remove a cache folder with 70k files in it, and it caused Apache to start spawning new instances until it maxed out at 255 and the system used all free memory (16 GB, although the virtual instance may have had less). Either way, keeping it under 25,000 is probably a very prudent move.

knorv

Updated on September 17, 2022

Comments

knorv over 1 year

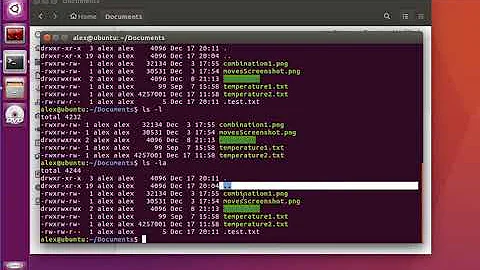

I have an application writing to an ext3 directory which over time has grown to roughly three million files. Needless to say, reading the file listing of this directory is unbearably slow.

I don't blame ext3. The proper solution would have been to let the application code write to sub-directories such as ./a/b/c/abc.ext rather than using only ./abc.ext.

I'm changing to such a sub-directory structure and my question is simply: roughly how many files should I expect to store in one ext3 directory while still getting acceptable performance? What's your experience?

Or in other words: assuming that I need to store three million files in the structure, how many levels deep should the ./a/b/c/abc.ext structure be?

Obviously this is a question that cannot be answered exactly, but I'm looking for a ballpark estimate.
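
For a back-of-the-envelope feel, the depth can be estimated from the total file count, a target number of files per directory, and the number of sub-directories per level. The sketch below assumes files spread perfectly evenly, which real names rarely do. `levels_needed` is a hypothetical helper, and the fan-out of 26 (one lowercase letter per level, as the ./a/b/c/ example suggests) is an assumption.

```python
def levels_needed(total_files: int, files_per_dir: int, fanout: int) -> int:
    """Smallest number of directory levels so each leaf directory holds at most
    files_per_dir files, assuming a perfectly even spread."""
    if fanout < 2 or files_per_dir < 1:
        raise ValueError("fanout must be >= 2 and files_per_dir >= 1")
    levels = 0
    leaves = 1  # with zero levels, everything sits in a single directory
    while leaves * files_per_dir < total_files:
        leaves *= fanout  # each extra level multiplies the leaf directories
        levels += 1
    return levels


if __name__ == "__main__":
    # Three million files, single-letter directory names (fan-out 26).
    print(levels_needed(3_000_000, 1_000, 26))
    print(levels_needed(3_000_000, 10_000, 26))
```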

Cascabel, about 14 years ago: And how many files do you get per hour?
