Good filesystem for 20TB hardware-controlled RAID5 array?

Solution 1

Of the two you've listed (ext3 and XFS), I'd be tempted to go with XFS. Both have broadly similar capabilities, and you don't sound like you'll be pushing them particularly hard. But given that ext3 can only really grow to a 32TB maximum and you already want 20TB on day one, I'd go with XFS simply because it allows for larger and smoother growth if you ever go past that 32TB limit.

Solution 2

Given that ext3 and ext4 are still limited to 16TB volumes (a limit of 2^32 4KiB blocks for ext2/3, and while ext4 has a higher limit in theory e2fsprogs doesn't support it), I'd say that your only real option for a widely used, stable filesystem is XFS.
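The 16TB figure follows directly from the block arithmetic. A minimal sketch, assuming 32-bit block numbers as described above (the function name `max_fs_tib` is made up for illustration):

```shell
#!/bin/sh
# Maximum filesystem size in TiB for a given block size in bytes,
# assuming block numbers are 32 bits wide (2^32 addressable blocks).
max_fs_tib() {
    block_size=$1
    echo $(( (1 << 32) * block_size / (1 << 40) ))
}

max_fs_tib 4096   # 4KiB blocks: prints 16
max_fs_tib 8192   # 8KiB blocks, if the kernel accepted them: prints 32
```

The second call is where the 32TB number quoted elsewhere in this thread comes from; whether 8KiB blocks are actually usable is a separate question.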

EDIT: Adding note around why ext3 will not work at all, even if you change the blocksize.

    $ head -n2 /etc/motd
    Linux uranium 2.6.35-24-generic #42-Ubuntu SMP Thu Dec 2 02:41:37 UTC 2010 x86_64 GNU/Linux
    Ubuntu 10.10

    $ mkfs.ext3 -b 8192 ./8KiBfs
    Warning: blocksize 8192 not usable on most systems.
    mke2fs 1.41.12 (17-May-2010)
    mkfs.ext3: 8192-byte blocks too big for system (max 4096)
    Proceed anyway? (y,n) y
    Warning: 8192-byte blocks too big for system (max 4096), forced to continue
    ./8KiBfs is not a block special device.
    Proceed anyway? (y,n) y
    Filesystem label=
    OS type: Linux
    Block size=8192 (log=3)
    Fragment size=8192 (log=3)
    Stride=0 blocks, Stripe width=0 blocks
    16384 inodes, 16384 blocks
    819 blocks (5.00%) reserved for the super user
    First data block=0
    Maximum filesystem blocks=33550336
    1 block group
    65528 blocks per group, 65528 fragments per group
    16384 inodes per group
    Writing inode tables: done
    Creating journal (1024 blocks): done
    Writing superblocks and filesystem accounting information: done
    This filesystem will be automatically checked every 25 mounts or
    180 days, whichever comes first. Use tune2fs -c or -i to override.

    $ sudo mount ./8KiBfs /mnt/8KiBfs/ -o loop
    mount: wrong fs type, bad option, bad superblock on /dev/loop0,
    missing codepage or helper program, or other error
    In some cases useful info is found in syslog - try
    dmesg | tail or so

    $ dmesg | tail -n 1
    [1396759.041587] EXT3-fs (loop0): error: bad blocksize 8192

I'm not trying to flog a dead horse here, but the people insisting that ext3 will let you build a 32TiB fs using 8KiB blocks have clearly never tried it. I'm open to suggestions about how to make it work, but on the face of it, it just won't work. OK, my test filesystem was only 128MB, but I can't use 8KiB blocks even for that - it's an architecture limitation.
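The limitation can be checked before running mkfs at all. A rough sketch, assuming (as the dmesg error above suggests) that the kernel refuses to mount an ext3 filesystem whose block size exceeds the system page size; `block_size_ok` is a hypothetical helper, not part of e2fsprogs:

```shell
#!/bin/sh
# Succeeds if the block size ($1, bytes) does not exceed the page size ($2, bytes).
block_size_ok() {
    [ "$1" -le "$2" ]
}

page_size=$(getconf PAGESIZE)   # 4096 on x86 and x86_64
for bs in 4096 8192; do
    if block_size_ok "$bs" "$page_size"; then
        echo "${bs}: fits within the ${page_size}-byte page size"
    else
        echo "${bs}: larger than the ${page_size}-byte page size, expect a mount failure"
    fi
done
```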

I don't have ready access to a > 16TiB block device to demonstrate the limitations in ext4, but I can probably arrange that next week.

XFS is definitely the best option given what we currently know.

Solution 3

Typically, filesystem performance differs when you have 1) very small files, 2) very many files, or 3) very deep directory trees. Since none of these criteria applies here, I expect you are not going to see a significant performance difference between filesystems. I would choose ext3 in order to be as mainstream as possible.

Solution 4

I'm using XFS for a similar configuration (12 x 2TB in RAID-6) and it performs very well, but you could also go with EXT4 or JFS. I would not recommend EXT3, though: it is outdated and rather inefficient on multi-core systems and when deleting many files. In either case, make sure your FS is aligned with the dimensions of your RAID array (i.e. stripe size and stripe width). Here is my reply in another thread with a sample configuration for XFS.
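As a rough illustration of that alignment for XFS: `mkfs.xfs` accepts the stripe unit and stripe width through `-d su=...,sw=...`, where `sw` counts the data-bearing disks. The sketch below only prints the command. The 64KiB chunk size and `/dev/sdX` are placeholders, and `xfs_geometry` is a made-up helper, so check your controller's actual stripe size before using anything like it.

```shell
#!/bin/sh
# Print the su/sw options for an array.
#   $1 = total disks
#   $2 = parity disks (1 for RAID-5, 2 for RAID-6)
#   $3 = per-disk chunk (stripe unit) size in KiB
xfs_geometry() {
    echo "su=${3}k,sw=$(( $1 - $2 ))"
}

# 12 x 2TB in RAID-6 with an assumed 64KiB chunk: 10 data disks
echo "mkfs.xfs -d $(xfs_geometry 12 2 64) /dev/sdX"
```

For a RAID-5 array, pass 1 as the parity count so that `sw` is the number of disks minus one.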

Solution 5

XFS, EXT4, JFS. Btrfs (for the really brave) :-)

ensnare

Updated on September 18, 2022

Comments

-

ensnare, over 1 year ago: What's the best file system for Ubuntu 10.10 assuming the average filesize is 8-30GB with no more than 1-2 users accessing the array at any given time?

-

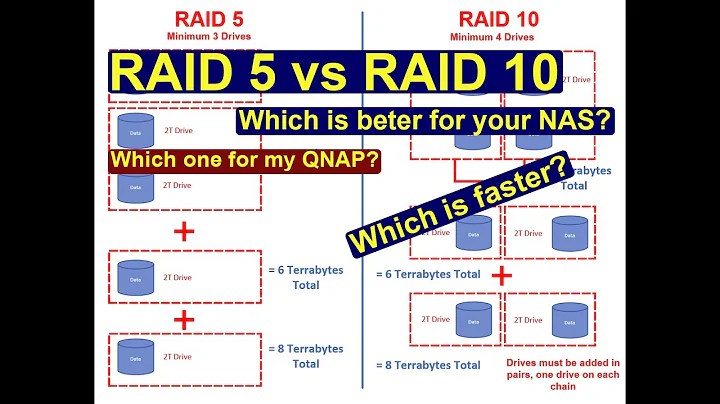

Hecter, almost 13 years ago: I would not trust RAID5 to rebuild reliably with a volume of that size. RAID6 or RAID10 would be safer.

-

Daniel Lawson, about 13 years ago: ext3 is (apparently) currently limited to 16TB volumes, which may make it unsuitable for the OP.

-

poige, about 13 years ago: That's wrong. EXT4 has a 16TB limit on file size, not on volume size. Check it out.

-

Daniel Lawson, about 13 years ago: Check my links. e2fsprogs can't create a volume greater than 16TB. If you've done otherwise yourself then I stand corrected of course :)

-

Rob Moir, about 13 years ago: I have to agree with this, +1. I always think 'act for today, plan for tomorrow' with IT deployments; the requirements only ever seem to go up.

-

Bittrance, about 13 years ago: That depends on block size, does it not? If you set a block size of 8k, you should get 8192 * 2^32 = 32TB.

-

Marcin, about 13 years ago: If the size limit stems from block size and number of blocks, why not up the size of blocks? Unless your data set largely consists of <4k files, this should not be a problem.

-

poige, about 13 years ago: XFS is really slow on meta-data ops.

-

Daniel Lawson, about 13 years ago: According to all the docs, and the last time I tried it (CentOS 5.3, I believe), that's just not possible on x86/x86_64 systems. The docs say it will work on Alpha only. It's to do with the system page size, and x86_64 still has 4KiB pages.

-

Daniel Lawson, about 13 years ago: You can't increase the blocksize past 4KiB, unless you're on an Alpha.