multiprocessing vs multithreading vs asyncio in Python 3

Solution 1

They are intended for (slightly) different purposes and requirements. CPython (the typical, mainline Python implementation) still has the global interpreter lock (GIL), so a multi-threaded application (a standard way to implement parallel processing nowadays) cannot execute Python bytecode on several cores at once and is therefore suboptimal for CPU-bound work. That's why multiprocessing may be preferred over threading. But not every problem can be split effectively into [almost independent] pieces, so heavy interprocess communication may be needed. That's why multiprocessing is not preferred over threading in general.

asyncio (this technique is not unique to Python; other languages and frameworks also have it, e.g. Boost.ASIO) is a method for efficiently handling many I/O operations from many simultaneous sources without the need for parallel code execution. So it's a solution (a good one indeed!) for a particular task, not for parallel processing in general.

Solution 2

TL;DR

Making the Right Choice:

We have walked through the most popular forms of concurrency. But the question remains: when should you choose which one? It really depends on the use case. From my experience (and reading), I tend to follow this pseudo code:

    if io_bound:
        if io_very_slow:
            print("Use Asyncio")
        else:
            print("Use Threads")
    else:
        print("Multi Processing")

- CPU Bound => Multi Processing

- I/O Bound, Fast I/O, Limited Number of Connections => Multi Threading

- I/O Bound, Slow I/O, Many connections => Asyncio
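The rule of thumb above can be written as a small function. This is only a sketch: the function name `choose_concurrency` and the returned strings are assumptions made for illustration, while `io_bound` and `io_very_slow` are the flags from the pseudo code.

```python
def choose_concurrency(io_bound: bool, io_very_slow: bool = False) -> str:
    """Return the suggested concurrency model for a workload (rule of thumb only)."""
    if io_bound:
        # Slow I/O with many connections favors cooperative multitasking.
        if io_very_slow:
            return "asyncio"
        # Fast I/O with a limited number of connections.
        return "threading"
    # CPU-bound work needs separate processes to use several cores in CPython.
    return "multiprocessing"


if __name__ == "__main__":
    print(choose_concurrency(io_bound=True, io_very_slow=True))
    print(choose_concurrency(io_bound=True))
    print(choose_concurrency(io_bound=False))
```

Whether I/O counts as "very slow" is a judgment call for your own workload; the function only encodes the decision order.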

[NOTE]:

- If you have a method with a long wait (e.g. one containing a sleep or lazy I/O), the best choice is the asyncio, Twisted or Tornado approach (coroutine methods), which achieves concurrency within a single thread.

- asyncio works on Python 3.4 and later.

- Tornado and Twisted have been available since Python 2.7.

- uvloop is an ultra fast asyncio event loop (uvloop makes asyncio 2-4x faster).

[UPDATE (2019)]:

Solution 3

In multiprocessing you leverage multiple CPUs to distribute your calculations. Since each of the CPUs runs in parallel, you're effectively able to run multiple tasks simultaneously. You would want to use multiprocessing for CPU-bound tasks. An example would be calculating the sum of all elements of a huge list. If your machine has 8 cores, you can "cut" the list into 8 smaller lists, calculate the sum of each of those lists on a separate core, and then just add up those numbers. In the ideal case you'll get close to an 8x speedup by doing that; in practice the overhead of starting processes and moving data between them reduces the gain.
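A minimal sketch of the "cut the list into pieces" idea, assuming the standard-library `ProcessPoolExecutor`. The helper names `split_into_chunks` and `parallel_sum` are made up for this illustration and are not part of any library.

```python
from concurrent.futures import ProcessPoolExecutor


def split_into_chunks(data, n_chunks):
    """Split `data` into at most `n_chunks` contiguous slices."""
    size = max(1, -(-len(data) // n_chunks))  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


def parallel_sum(data, workers=8):
    """Sum `data` by summing each chunk in a separate worker process."""
    chunks = split_into_chunks(data, workers)
    with ProcessPoolExecutor(max_workers=workers) as executor:
        # The built-in `sum` is picklable, so it can be sent to the workers.
        partial_sums = executor.map(sum, chunks)
        return sum(partial_sums)


if __name__ == "__main__":
    numbers = list(range(1_000_000))
    print(parallel_sum(numbers) == sum(numbers))
```

For something as cheap as adding integers, copying the chunks to the worker processes may cost more than the summation itself, so treat this as a demonstration of the pattern rather than a guaranteed speedup.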

In (multi)threading you don't need multiple CPUs. Imagine a program that sends lots of HTTP requests to the web. If you used a single-threaded program, it would stop the execution (block) at each request, wait for a response, and then continue once it had received the response. The problem here is that your CPU isn't really doing work while waiting for some external server to do the job; it could have actually done some useful work in the meantime! The fix is to use threads: you can create many of them, each responsible for requesting some content from the web. The nice thing about threads is that, even if they run on one CPU, the operating system from time to time "freezes" the execution of one thread and jumps to executing another one (this is called context switching, and it happens constantly at non-deterministic intervals). So if your task is I/O bound, use threading.
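The following sketch illustrates the idea without touching the network: `fake_request` is a hypothetical stand-in for an HTTP call and simply sleeps, which is how a thread behaves while it waits on I/O. The names `fake_request` and `fetch_all` are assumptions for this example.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_request(url, delay=0.2):
    """Stand-in for an HTTP request: the thread just waits, as it would on I/O."""
    time.sleep(delay)  # sleeping releases the GIL, like blocking network I/O
    return f"response from {url}"


def fetch_all(urls, max_workers=10):
    """Run `fake_request` for every URL in a pool of threads, keeping input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        return list(executor.map(fake_request, urls))


if __name__ == "__main__":
    urls = [f"https://example.com/{i}" for i in range(10)]
    start = time.perf_counter()
    responses = fetch_all(urls)
    elapsed = time.perf_counter() - start
    print(len(responses), f"responses in {elapsed:.2f}s")
```

Because the ten waits overlap, the total time should be much closer to one delay than to ten of them, although the exact figure depends on the machine.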

asyncio is essentially threading where it is not the operating system but you, as a programmer (or actually your application), who decides where and when the context switch happens. In Python you use the await keyword to suspend the execution of your coroutine (defined using the async keyword).
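A small sketch of that explicit switch point. The coroutine name `worker` and the shared `log` list are assumptions for illustration; `asyncio.sleep(0)` is used only to hand control back to the event loop once.

```python
import asyncio


async def worker(name, log):
    log.append(f"{name} start")
    # `await` is the explicit point where this coroutine gives up control.
    await asyncio.sleep(0)
    log.append(f"{name} end")


async def main():
    log = []
    # Both coroutines run in one thread; they interleave only at `await`.
    await asyncio.gather(worker("A", log), worker("B", log))
    return log


if __name__ == "__main__":
    print(asyncio.run(main()))
```

Both workers log their "start" line before either logs "end", because each one yields at its `await` and the event loop then runs the other.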

Solution 4

This is the basic idea:

    Is it IO-BOUND?  -----------> USE asyncio
    IS IT CPU-HEAVY? -----------> USE multiprocessing
    ELSE?            -----------> USE threading

So basically stick to threading unless you have IO/CPU problems.

Solution 5

Many of the answers suggest how to choose only 1 option, but why not be able to use all 3? In this answer I explain how you can use asyncio to combine all 3 forms of concurrency, and how to easily swap between them later if need be.

The short answer

Many developers who are new to concurrency in Python end up using multiprocessing.Process and threading.Thread. However, these are low-level APIs that have been unified by the high-level API of the concurrent.futures module. Furthermore, spawning processes and threads has overhead, such as requiring more memory, a problem which plagued one of the examples shown below. To an extent, concurrent.futures manages this for you: instead of letting you spawn a thousand processes and crash your computer, it spawns only a few and re-uses them each time one finishes.

These high-level APIs are provided through concurrent.futures.Executor, which is implemented by concurrent.futures.ProcessPoolExecutor and concurrent.futures.ThreadPoolExecutor. In most cases, you should use these over multiprocessing.Process and threading.Thread, because with concurrent.futures it's easier to change from one to the other in the future, and you don't have to learn the detailed differences of each.
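As a rough illustration of how little changes when you swap executors, here is a sketch in which only the executor class differs between the two calls. The helpers `square` and `run_all` are hypothetical names introduced for this example.

```python
from concurrent.futures import Executor, ProcessPoolExecutor, ThreadPoolExecutor
from typing import Callable, Iterable, List, Type


def square(x: int) -> int:
    """Top-level function so that it can be pickled for worker processes."""
    return x * x


def run_all(executor_cls: Type[Executor], func: Callable, items: Iterable) -> List:
    """Apply `func` to every item using whichever executor class is given."""
    with executor_cls() as executor:
        return list(executor.map(func, items))


if __name__ == "__main__":
    # Same call, different executor: threads first, then processes.
    print(run_all(ThreadPoolExecutor, square, range(5)))
    print(run_all(ProcessPoolExecutor, square, range(5)))
```

The process-based call is kept under the `__main__` guard because worker processes may re-import the main module on some platforms.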

Since these share a unified interface, you'll also find that code using multiprocessing or threading will often use concurrent.futures. asyncio is no exception to this, and provides a way to use it via the following code:

    import asyncio
    from concurrent.futures import Executor
    from functools import partial
    from typing import Any, Callable, Optional, TypeVar

    T = TypeVar("T")

    async def run_in_executor(
        executor: Optional[Executor],
        func: Callable[..., T],
        /,
        *args: Any,
        **kwargs: Any,
    ) -> T:
        """
        Run `func(*args, **kwargs)` asynchronously, using an executor.

        If the executor is None, use the default ThreadPoolExecutor.
        """
        return await asyncio.get_running_loop().run_in_executor(
            executor,
            partial(func, *args, **kwargs),
        )

    # Example usage for running `print` in a thread.
    async def main():
        await run_in_executor(None, print, "O" * 100_000)

    asyncio.run(main())

In fact it turns out that using threading with asyncio was so common that in Python 3.9 they added asyncio.to_thread(func, *args, **kwargs) to shorten it for the default ThreadPoolExecutor.
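A short sketch of `asyncio.to_thread` (Python 3.9+), assuming a hypothetical blocking function `blocking_io` that stands in for any call that would otherwise stall the event loop.

```python
import asyncio
import time


def blocking_io(seconds: float) -> str:
    """Hypothetical blocking call, e.g. a synchronous library function."""
    time.sleep(seconds)
    return f"slept {seconds}s"


async def main() -> list:
    # Each call runs in the default ThreadPoolExecutor, so the waits overlap.
    return await asyncio.gather(
        asyncio.to_thread(blocking_io, 0.1),
        asyncio.to_thread(blocking_io, 0.2),
    )


if __name__ == "__main__":
    print(asyncio.run(main()))
```

`asyncio.gather` returns the results in the order the awaitables were passed, regardless of which thread finishes first.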

The long answer

Are there any disadvantages to this approach?

Yes. With asyncio, the biggest disadvantage is that asynchronous functions aren't the same as synchronous functions. This can trip up new users of asyncio a lot and cause a lot of rework to be done if you didn't start programming with asyncio in mind from the beginning.
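To make the difference concrete, here is a minimal sketch with two made-up functions, `add_sync` and `add_async`. Calling the asynchronous one does not run it; it only creates a coroutine object that an event loop has to execute.

```python
import asyncio
import inspect


def add_sync(a, b):
    return a + b


async def add_async(a, b):
    return a + b


def demo():
    sync_result = add_sync(1, 2)              # runs immediately
    coro = add_async(1, 2)                    # nothing has run yet
    is_coroutine = inspect.iscoroutine(coro)  # it is only a coroutine object
    async_result = asyncio.run(coro)          # an event loop actually executes it
    return sync_result, is_coroutine, async_result


if __name__ == "__main__":
    print(demo())
```

This is why converting one function to `async` tends to ripple outward: every caller must either `await` it from another coroutine or hand it to an event loop.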

Another disadvantage is that users of your code will also be forced to use asyncio. All of this necessary rework will often leave first-time asyncio users with a really sour taste in their mouth.

Are there any non-performance advantages to this?

Yes. Similar to how using concurrent.futures is advantageous over threading.Thread and multiprocessing.Process for its unified interface, this approach can be considered a further abstraction from an Executor to an asynchronous function. You can start off using asyncio, and if you later find that part of your code needs threading or multiprocessing, you can use asyncio.to_thread or run_in_executor. Likewise, you may later discover that an asynchronous version of what you're trying to run with threading already exists, so you can easily step back from using threading and switch to asyncio instead.

Are there any performance advantages to this?

Yes... and no. Ultimately it depends on the task. In some cases, it may not help (though it likely does not hurt), while in other cases it may help a lot. The rest of this answer provides some explanations as to why using asyncio to run an Executor may be advantageous.

- Combining multiple executors and other asynchronous code

asyncio essentially provides significantly more control over concurrency, at the cost of requiring you to take more of that control yourself. If you want to simultaneously run some code using a ThreadPoolExecutor alongside some other code using a ProcessPoolExecutor, this is not so easy to manage with synchronous code, but it is very easy with asyncio.

    import asyncio
    from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor

    async def with_processing():
        with ProcessPoolExecutor() as executor:
            tasks = [...]
            for task in asyncio.as_completed(tasks):
                result = await task
                ...

    async def with_threading():
        with ThreadPoolExecutor() as executor:
            tasks = [...]
            for task in asyncio.as_completed(tasks):
                result = await task
                ...

    async def main():
        await asyncio.gather(with_processing(), with_threading())

    asyncio.run(main())

How does this work? Essentially asyncio asks the executors to run their functions. Then, while an executor is running, asyncio will go run other code. For example, the ProcessPoolExecutor starts a bunch of processes, and then while waiting for those processes to finish, the ThreadPoolExecutor starts a bunch of threads. asyncio will then check in on these executors and collect their results when they are done. Furthermore, if you have other code using asyncio, you can run them while waiting for the processes and threads to finish.
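For readers who want something they can run, here is one possible filled-in variant of the pattern. It is a sketch under assumptions, not the author's code: the task functions `square` and `shout` and the helper `run_on` are invented for illustration, and the executors are passed in as parameters so that either kind can be used.

```python
import asyncio
from concurrent.futures import Executor, ProcessPoolExecutor, ThreadPoolExecutor


def square(x):
    """Hypothetical CPU-style task (top-level so it can be pickled)."""
    return x * x


def shout(text):
    """Hypothetical I/O-style task."""
    return text.upper()


async def run_on(executor: Executor, func, items):
    """Submit func(item) for every item to `executor` and await all results."""
    loop = asyncio.get_running_loop()
    futures = [loop.run_in_executor(executor, func, item) for item in items]
    return await asyncio.gather(*futures)


async def main(cpu_executor: Executor, io_executor: Executor):
    # Both batches are in flight at the same time; the event loop collects them.
    return await asyncio.gather(
        run_on(cpu_executor, square, [1, 2, 3]),
        run_on(io_executor, shout, ["a", "b"]),
    )


if __name__ == "__main__":
    with ProcessPoolExecutor() as processes, ThreadPoolExecutor() as threads:
        print(asyncio.run(main(processes, threads)))
```

Passing the executors in, rather than creating them inside each coroutine, also makes it easy to try the same code with two thread pools first and switch one of them to processes later.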

- Narrowing in on which sections of code need executors

It is not common to have many executors in your code, but a common problem I have seen when people use threads/processes is that they shove the entirety of their code into a thread/process and expect it to work. For example, I once saw the following code (approximately):

    from concurrent.futures import ThreadPoolExecutor
    import requests

    def get_data(url):
        return requests.get(url).json()["data"]

    urls = [...]

    with ThreadPoolExecutor() as executor:
        for data in executor.map(get_data, urls):
            print(data)

The funny thing about this piece of code is that it was slower with concurrency than without. Why? Because the resulting json was large, and having many threads consume a huge amount of memory was disastrous. Luckily the solution was simple:

    from concurrent.futures import ThreadPoolExecutor
    import requests

    urls = [...]

    with ThreadPoolExecutor() as executor:
        for response in executor.map(requests.get, urls):
            print(response.json()["data"])

Now only one json is decoded into memory at a time, and everything is fine.

The lesson here?

You shouldn't try to just slap all of your code into threads/processes. Instead, focus on which part of the code actually needs concurrency.

But what if get_data was not a function as simple as this case? What if we had to apply the executor somewhere deep in the middle of the function? This is where asyncio comes in:

    import asyncio
    import requests

    async def get_data(url):
        # A lot of code.
        ...
        # The specific part that needs threading.
        response = await asyncio.to_thread(requests.get, url, some_other_params)
        # A lot of code.
        ...
        return data

    urls = [...]

    async def main():
        tasks = [get_data(url) for url in urls]
        for task in asyncio.as_completed(tasks):
            data = await task
            print(data)

    asyncio.run(main())

Attempting the same with concurrent.futures is by no means pretty. You could use things such as callbacks, queues, etc., but it would be significantly harder to manage than basic asyncio code.

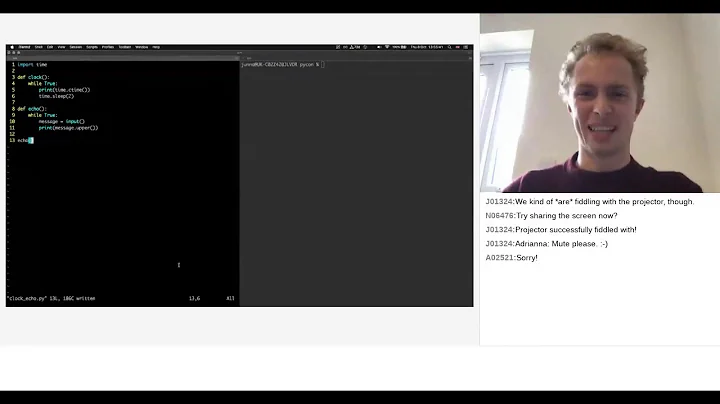

Related videos on Youtube

user3654650

Updated on April 12, 2022

Comments

-

user3654650 about 2 years

I found that in Python 3.4 there are a few different libraries for multiprocessing/threading: multiprocessing vs threading vs asyncio.

But I don't know which one to use or which is the "recommended one". Do they do the same thing, or are they different? If so, which one is used for what? I want to write a program that uses the multiple cores in my computer. But I don't know which library I should learn.

-

Martin Thoma about 6 years

"Maybe I’m too stupid for AsyncIO" helps

-

-

sargas over 8 years

Noting that while all three may not achieve parallelism, they are all capable of doing concurrent (non-blocking) tasks.

-

mingchau almost 5 years

So if I have a list of urls to request, it's better to use Asyncio?

-

Benyamin Jafari - aGn almost 5 years

@mingchau, Yes, but keep in mind that you can benefit from asyncio only when you use awaitable functions. The requests library is not awaitable; instead you can use something such as the aiohttp library or async-request, etc.

-

qrtLs over 4 years

Please expand on slowIO and fastIO: when to go multithread and when asyncio?

-

Benyamin Jafari - aGn over 4 years

@qrtLs When you have a SlowIO, AsyncIO is very helpful and more efficient.

-

variable over 4 years

Please can you advise what exactly is io_very_slow?

-

variable over 4 years

What is an example of io_very_slow?

-

Benyamin Jafari - aGn over 4 years

@variable I/O bound means your program spends most of its time talking to a slow device, like a network connection, a hard drive, a printer, or an event loop with a sleep time. So in blocking mode, you could choose between threading or asyncio, and if your bounding section is very slow, cooperative multitasking (asyncio) is a better choice (i.e. avoiding resource starvation, dead-locks, and race conditions)

-

aspiring1 over 3 years

If I have multiple threads and then I start getting the responses faster - and after the responses my work is more CPU bound - would my process use the multiple cores? That is, would it freeze threads instead of also using the multiple cores?

-

Tomasz Bartkowiak over 3 years

Not sure if I understood the question. Is it about whether you should use multiple cores when responses become faster? If that's the case - it depends how fast the responses are and how much time you really spend waiting for them vs. using CPU. If you're spending the majority of time doing CPU-intensive tasks then it'd be beneficial to distribute over multiple cores (if possible). And if the question is whether the system would spontaneously switch to parallel processing after "realizing" its job is CPU-bound - I don't think so - usually you need to tell it explicitly to do so.

-

aspiring1 over 3 years

I was thinking of a chatbot application, in which the chatbot messages from users are sent to the server and the responses are sent back by the server using a POST request. Do you think this is more of a CPU intensive task, since the response sent & received can be json? I was doubtful - what would happen if the user takes time to type his response, is this an example of slow I/O? (user sending response late)

-

Talal Zahid almost 3 years

@TomaszBartkowiak Hi, I have a question: So I have a realtime facial-recognition model that takes in input from a webcam and shows whether a user is present or not. There is an obvious lag because all the frames are not processed in real-time as the processing rate is slower. Can you tell me if multi-threading can help me here if I create like 10 threads to process 10 frames rather than processing those 10 frames on one thread? And just to clarify, by processing I mean, there is a trained model on keras that takes in an image frame as an input and outputs if a person is detected or not.

-

Tomasz Bartkowiak almost 3 years

@TalalZahid your task seems to be CPU bound - it's only the machine (CPU) that performs inference (detection), as opposed to waiting for IO or someone else to do some part of the work (e.g. calling an external API). So it would not make sense to do multithreading. If processing a given frame takes a considerable amount of time (does it?) and each frame is independent then you might consider distributing detection across separate machines/cores.

-

Arkyo over 2 years

I like how you mention that developers control the context switch in async but the OS controls it in threading

-

EralpB over 2 years

what is the 3rd problem you could have?

-

Farshid Ashouri over 2 years

@EralpB Not io or CPU bound, like a thread worker doing simple calculation or reading chunks of data locally or from a fast local database. Or just sleeping and watching something. Basically, most problems fall into this category unless you have a networking application or a heavy calculation.

-

TheLogicGuy over 2 years

Regarding threading, why is it good for lots of HTTP requests? i.e. I have 3 requests and 3 threads, each time the CPU polls one of the threads and makes a little progress in getting the data; how is that better than getting all the data at once from each request one by one? I can rephrase the question: when a sleeping thread sends a web request, does it get an answer even if the CPU runs another thread? If so, how is that possible?

-

Tomasz Bartkowiak over 2 years

@TheLogicGuy Once the request is sent, without threading the (sequential) program freezes until it gets a response from an external server (note that this includes: time for the network to send the request out, time for the external server to compute and time for the network to deliver back the response). During that time you could have switched to another thread so that your CPU isn't idle. Re "does he get answer even if CPU runs another thread" - the thread (after "waking up") might be e.g. checking some message queue for a response to a request it had sent before yielding control back.

-

TheLogicGuy over 2 years

@TomaszBartkowiak so if I understand, this message queue that manages the responses must be on a different process so when each thread wakes up it checks if its request was finished in that queue that was managed by someone else that was awake all that time?

-

Tomasz Bartkowiak over 2 years

Typically yes, but I presume there could be other ways that don't require a queue running in a separate process, e.g. some dedicated shared memory buffer that could be written to by some other process via IPC. In some other cases you can have e.g. a distributed queue (e.g. kafka) which not only runs in a separate process but in a different container (or a different virtual machine).

-

Simply Beautiful Art over 2 years

A whole package isn't super necessary for this, you can see my answer on how to do most of this using normal asyncio and concurrent.futures.ProcessPoolExecutor. A notable difference is that aiomultiprocessing works on coroutines, which means it likely spawns many event loops instead of using one unified event loop (as seen from the source code), for better or worse.

-

Christo Goosen over 2 years

Of course a library is not necessary. But the point of the library is multiple event loops. This was built at Facebook in a situation where they wanted to use every available CPU for a python based object/file store. Think django spawning multiple subprocesses with uwsgi where each has multiple threads.

-

Christo Goosen over 2 years

Also the library removes some boilerplate code, simplifies it for the developer.

-

Simply Beautiful Art over 2 years

Thanks for explaining the difference, I think I now have a better understanding of its purpose. Rather than really being for computationally expensive tasks, as you might normally think for multiprocessing, where it actually shines is in running multiple event loops. That is to say, this is the option to go to if you find the event loop for asyncio itself to have become the bottleneck, such as due to a sheer number of clients on a server.

-

Christo Goosen over 2 years

Pleasure. Yeah I happened to watch a youtube video where the author described its use. Was very insightful as it explained the purpose well. Definitely not a magic bullet and probably not the use case for everyone. Would perhaps be at the core of web server or low level network application. Basically just churn through as many requests as CPUs and the multiple event loops can handle. youtube.com/watch?v=0kXaLh8Fz3k

-

Zac Wrangler almost 2 years

Can you elaborate on the reason why using requests.get instead of get_data would avoid unloading json objects into memory? They are both functions and, in order to return, requests.get also seems to need to unload the object into memory.

-

Simply Beautiful Art almost 2 years

@ZacWrangler There are two significant components to the process here: requests.get(...) and .json()["data"]. One performs an API request, the other loads the desired data into memory. Applying threading to the API request may result in a significant performance improvement because your computer isn't doing any work for it, it's just waiting for stuff to get downloaded. Applying threading to the .json()["data"] may (and likely will) result in multiple .json() calls starting at the same time, eventually followed by ["data"], perhaps after ALL of the .json() calls have run.

-

Simply Beautiful Art almost 2 years

(cont.) In the latter case, this could cause a significant amount of memory to get loaded in at once (size of the .json() times the number of threads), which can be catastrophic for performance. With asyncio, you can easily cherry-pick what code gets run with threading and what code doesn't, allowing you to choose not to run .json()["data"] with threading and instead only load them one at a time.

-

CAIO WANDERLEY almost 2 years

Well, if you are doing any type of calculation then this is a CPU bound problem so you should use multiprocessing, and if it is simple you may not need any concurrency solution. In the case of reading data locally or from a database, well, this is an IO bound problem, so either threading or asyncio could help you. The main difference between the two is that in asyncio you have more control than in threading, and threading has an initialization cost for your program, so if you plan to use a lot of threads maybe asyncio will suit you better. I don't think that we have another type of problem besides those

-

hadriel almost 2 years

As always, there are of course exceptions to such rules. One example is if you need to run a non-trivial subprocess. The subprocess module and its Popen class use a busy-loop while waiting for the subprocess to complete, while asyncio.create_subprocess_exec() and friends use the loop's poller. So asyncio can be better for such use-cases too.

-

Arjuna Deva almost 2 years

I am a bit lost with the concept of IO in threading. What are examples of IO problems?