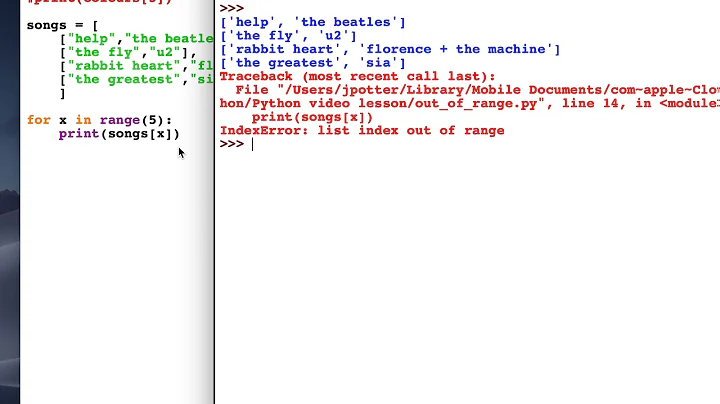

pytorch embedding index out of range

Solution 1

Found the answer here https://discuss.pytorch.org/t/embeddings-index-out-of-range-error/12582

I was converting words to indexes, but I had built the indexes from the total number of words rather than from `vocab_size`, which covers only a smaller set of the most frequent words. As a result, some indexes were larger than the embedding table.
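A minimal sketch of one way to keep every index below `vocab_size`: keep only the most frequent words and map everything else to an unknown-word index. The `<pad>` and `<unk>` tokens and the helper names `build_vocab` and `encode` are assumptions for illustration, not taken from the tutorial.

```python
from collections import Counter

PAD, UNK = "<pad>", "<unk>"  # hypothetical special tokens


def build_vocab(sentences, vocab_size):
    """Map the most frequent words to indices 0..vocab_size-1."""
    counts = Counter(word for sent in sentences for word in sent)
    # Two slots are reserved for the special tokens.
    most_common = [w for w, _ in counts.most_common(vocab_size - 2)]
    return {w: i for i, w in enumerate([PAD, UNK] + most_common)}


def encode(sentence, vocab):
    """Replace words outside the vocabulary with the UNK index."""
    return [vocab.get(w, vocab[UNK]) for w in sentence]


sentences = [["the", "cat", "sat"], ["the", "dog", "sat"], ["a", "bird", "flew"]]
vocab = build_vocab(sentences, vocab_size=4)
encoded = [encode(s, vocab) for s in sentences]
```

With this scheme no encoded index can exceed `len(vocab) - 1`, so an embedding layer created with `len(vocab)` rows can look up every one of them.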

Solution 2

You've got some things wrong. Please correct those and re-run your code:

- `params['vocab_size']` is the total number of unique tokens, so it should be `len(vocab)` in the tutorial.
- `params['embedding_dim']` can be `50` or `100` or whatever you choose. Most folks would use something in the range `[50, 1000]`, both extremes inclusive. The widely used pretrained Word2Vec vectors and the largest GloVe vectors are `300`-dimensional.
- `self.embedding()` accepts an arbitrary batch size, so that doesn't matter. BTW, in the tutorial the comments such as `# dim: batch_size x batch_max_len x embedding_dim` indicate the shape of the output tensor of that specific operation, not of the inputs.
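The points above can be sketched as follows. The toy `vocab` dictionary and the two batches are made up for illustration; only `nn.Embedding` and the `params` keys come from the question.

```python
import torch
import torch.nn as nn

# Toy vocabulary (an assumption for this sketch): token -> index.
vocab = {"<pad>": 0, "<unk>": 1, "the": 2, "cat": 3, "sat": 4}
params = {"vocab_size": len(vocab), "embedding_dim": 50}

# The table has one row per unique token, so valid indices are 0..len(vocab)-1.
embedding = nn.Embedding(params["vocab_size"], params["embedding_dim"])

# Two batches with different batch_size and batch_max_len.
batch_a = torch.tensor([[2, 3, 4], [2, 4, 0]])  # 2 x 3
batch_b = torch.tensor([[2, 3, 4, 0, 0]])       # 1 x 5

out_a = embedding(batch_a)  # batch_size x batch_max_len x embedding_dim
out_b = embedding(batch_b)
```

Both calls succeed because the layer only constrains the index values, not the shape of the index tensor.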


gary69

Updated on June 23, 2022

Comments

-

gary69 almost 2 years

I'm following this tutorial here https://cs230-stanford.github.io/pytorch-nlp.html. In there a neural model is created, using `nn.Module`, with an embedding layer, which is initialized here:

    self.embedding = nn.Embedding(params['vocab_size'], params['embedding_dim'])

`vocab_size` is the total number of training samples, which is 4000. `embedding_dim` is 50. The relevant piece of the `forward` method is below:

    def forward(self, s):
        # apply the embedding layer that maps each token to its embedding
        s = self.embedding(s)   # dim: batch_size x batch_max_len x embedding_dim

I get this exception when passing a batch to the model like so:

    model(train_batch)

`train_batch` is a numpy array of dimension `batch_size` x `batch_max_len`. Each sample is a sentence, and each sentence is padded so that it has the length of the longest sentence in the batch.

    File "/Users/liam_adams/Documents/cs512/research_project/custom/model.py", line 34, in forward
      s = self.embedding(s) # dim: batch_size x batch_max_len x embedding_dim
    File "/Users/liam_adams/Documents/cs512/venv_research/lib/python3.7/site-packages/torch/nn/modules/module.py", line 493, in __call__
      result = self.forward(*input, **kwargs)
    File "/Users/liam_adams/Documents/cs512/venv_research/lib/python3.7/site-packages/torch/nn/modules/sparse.py", line 117, in forward
      self.norm_type, self.scale_grad_by_freq, self.sparse)
    File "/Users/liam_adams/Documents/cs512/venv_research/lib/python3.7/site-packages/torch/nn/functional.py", line 1506, in embedding
      return torch.embedding(weight, input, padding_idx, scale_grad_by_freq, sparse)
    RuntimeError: index out of range at ../aten/src/TH/generic/THTensorEvenMoreMath.cpp:193

Is the problem here that the embedding is initialized with different dimensions than those of my batch array? My `batch_size` will be constant but `batch_max_len` will change with every batch. This is how it's done in the tutorial.

-

gary69 almost 5 years

Thank you, the problem was with my word indexes being greater than my `vocab_size`.

-

Yingqiang Gao over 3 years

I'm running into the same problem, but I didn't change the dictionary at all. How is it that your word indexes are bigger than the vocab size?
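One way to find out whether the indexes really exceed the vocab size is to compare the largest index in a batch with the number of rows in the embedding table before calling the layer. This is only a sketch: `check_indices` is a hypothetical helper, and the example tensors are made up.

```python
import torch
import torch.nn as nn


def check_indices(batch, embedding):
    """Raise a readable error if any index falls outside the embedding table."""
    lo, hi = int(batch.min()), int(batch.max())
    if lo < 0 or hi >= embedding.num_embeddings:
        raise ValueError(
            f"indices span [{lo}, {hi}] but the embedding only has rows "
            f"0..{embedding.num_embeddings - 1}"
        )


embedding = nn.Embedding(num_embeddings=5, embedding_dim=50)

good_batch = torch.tensor([[0, 1, 4]])
check_indices(good_batch, embedding)  # passes silently

bad_batch = torch.tensor([[0, 1, 5]])  # 5 is one past the last valid row
try:
    check_indices(bad_batch, embedding)
    error_message = None
except ValueError as exc:
    error_message = str(exc)
```

If the check fails, the usual suspects are a vocabulary built from a different word list than the one used to encode the data, or special tokens (padding, unknown word) that were added after `vocab_size` was computed.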