Resizing a LUKS encrypted volume

LUKS doesn't actually store the size of the device; it simply discovers it when the volume is opened. LUKS therefore only needs attention if the volume is not closed and reopened during the process (e.g. during an online grow). In that case the size of the open volume needs to be rediscovered.

To shrink your volume, use the following process:

- Unmount the filesystem with `umount`
- Resize the filesystem with `resize2fs`
- Close the LUKS volume with `cryptsetup luksClose`
- Resize the LV with `lvreduce` or `lvresize`
- Open the LUKS volume with `cryptsetup luksOpen`
- Mount the filesystem with `mount`
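As a rough sketch, the shrink sequence might look like the script below. The device names (`/dev/moab/backup`, the `backup` mapping, the `/srv/backup` mount point) come from the question. The sizes (`90G`, `100G`), the `run` helper and the `DRY_RUN` variable are assumptions made for illustration. The script only prints the commands unless `DRY_RUN=0` is set. The `e2fsck -f` step is included because `resize2fs` normally insists on a forced check before resizing an unmounted filesystem.

```shell
#!/bin/sh
# Sketch of the offline shrink sequence; nothing is executed unless DRY_RUN=0.
# Sizes are illustrative assumptions: shrink the filesystem to 90G first,
# reduce the LV to 100G, then let the filesystem grow back to fill it.
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

shrink_plan() {
    run umount /srv/backup
    run e2fsck -f /dev/mapper/backup            # forced check before an offline resize
    run resize2fs /dev/mapper/backup 90G        # shrink well below the target size
    run cryptsetup luksClose backup
    run lvreduce -L 100G /dev/moab/backup
    run cryptsetup luksOpen /dev/moab/backup backup
    run resize2fs /dev/mapper/backup            # no size given: grow to fill the device
    run mount /dev/mapper/backup /srv/backup
}

shrink_plan
```

Reviewing the printed plan before running it with `DRY_RUN=0` is a cheap safeguard, since a wrong size passed to `lvreduce` can destroy data.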

You could also omit the `luksClose` and `luksOpen` steps, and use `cryptsetup resize` after resizing the LV. Also remember that LUKS uses some extra space to store its metadata, so the LV needs to be slightly bigger than the filesystem. I usually shrink the filesystem to significantly less than the target size, and then grow it again after resizing the LV.
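To put a number on the metadata overhead, here is a small arithmetic sketch. It uses the `offset: 3072 sectors` value from the `cryptsetup status` output in the question and the 4096-byte block size from `tune2fs -l`. The 100 GiB target is the size asked about in the question. The variable names are made up for this example, and sectors are assumed to be 512 bytes, the unit `cryptsetup status` reports in.

```shell
#!/bin/sh
# How much of a 100 GiB LV is left for the filesystem after the LUKS header?
SECTOR_BYTES=512             # cryptsetup status reports 512-byte sectors
LUKS_OFFSET_SECTORS=3072     # "offset" from cryptsetup status in the question
FS_BLOCK_BYTES=4096          # "Block size" from tune2fs -l in the question
TARGET_LV_GIB=100            # desired LV size

lv_sectors=$(( TARGET_LV_GIB * 1024 * 1024 * 1024 / SECTOR_BYTES ))
payload_sectors=$(( lv_sectors - LUKS_OFFSET_SECTORS ))
max_fs_blocks=$(( payload_sectors * SECTOR_BYTES / FS_BLOCK_BYTES ))
header_kib=$(( LUKS_OFFSET_SECTORS * SECTOR_BYTES / 1024 ))

echo "LUKS header overhead: ${header_kib} KiB"
echo "Payload sectors: ${payload_sectors}"
echo "Largest filesystem: ${max_fs_blocks} blocks of ${FS_BLOCK_BYTES} bytes"
```

The same arithmetic on the current 500 GiB LV gives 1048572928 payload sectors and 131071616 filesystem blocks, which matches the `size` and `Block count` values shown in the question. The overhead here is only 1536 KiB, so shrinking the filesystem to a few GiB under the target leaves far more margin than is strictly needed.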

If you were growing the filesystem and wanted to do it online, you would use the following process:

- Resize the LV with `lvextend` or `lvresize`
- Update the size of the open LUKS volume with `cryptsetup resize`
- Grow the filesystem with `resize2fs`
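The online grow can be sketched the same way. The device and mapping names come from the question. The `+100G` increment, the `run` helper and the `DRY_RUN` variable are illustrative assumptions. The script only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch of the online grow sequence; the filesystem stays mounted throughout.
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

grow_plan() {
    run lvextend -L +100G /dev/moab/backup   # assumed increment; adjust as needed
    run cryptsetup resize backup             # no --size given: use the whole underlying device
    run resize2fs /dev/mapper/backup         # no size given: grow to fill the mapping
}

grow_plan
```

Without an explicit size, both `cryptsetup resize` and `resize2fs` expand to whatever the layer beneath them now provides, so the only number that has to be chosen is the one given to `lvextend`.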


Comments


mgorven over 1 year

I have a 500GiB ext4 filesystem on top of LUKS on top of an LVM LV. I want to resize the LV to 100GiB. I know how to resize ext4 on top of an LVM LV, but how do I deal with the LUKS volume?

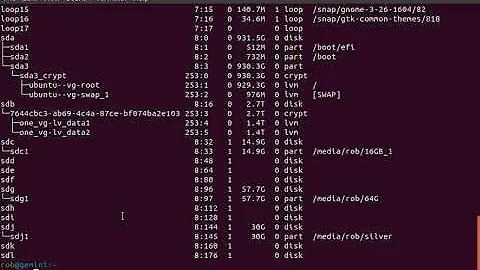

    mgorven@moab:~% sudo lvdisplay /dev/moab/backup
      --- Logical volume ---
      LV Name                /dev/moab/backup
      VG Name                moab
      LV UUID                nQ3z1J-Pemd-uTEB-fazN-yEux-nOxP-QQair5
      LV Write Access        read/write
      LV Status              available
      # open                 1
      LV Size                500.00 GiB
      Current LE             128000
      Segments               1
      Allocation             inherit
      Read ahead sectors     auto
      - currently set to     2048
      Block device           252:3

    mgorven@moab:~% sudo cryptsetup status backup
    /dev/mapper/backup is active and is in use.
      type:    LUKS1
      cipher:  aes-cbc-essiv:sha256
      keysize: 256 bits
      device:  /dev/mapper/moab-backup
      offset:  3072 sectors
      size:    1048572928 sectors
      mode:    read/write

    mgorven@moab:~% sudo tune2fs -l /dev/mapper/backup
    tune2fs 1.42 (29-Nov-2011)
    Filesystem volume name:   backup
    Last mounted on:          /srv/backup
    Filesystem UUID:          63877e0e-0549-4c73-8535-b7a81eb363ed
    Filesystem magic number:  0xEF53
    Filesystem revision #:    1 (dynamic)
    Filesystem features:      has_journal ext_attr resize_inode dir_index filetype extent flex_bg sparse_super large_file huge_file uninit_bg dir_nlink extra_isize
    Filesystem flags:         signed_directory_hash
    Default mount options:    (none)
    Filesystem state:         clean with errors
    Errors behavior:          Continue
    Filesystem OS type:       Linux
    Inode count:              32768000
    Block count:              131071616
    Reserved block count:     0
    Free blocks:              112894078
    Free inodes:              32044830
    First block:              0
    Block size:               4096
    Fragment size:            4096
    Reserved GDT blocks:      992
    Blocks per group:         32768
    Fragments per group:      32768
    Inodes per group:         8192
    Inode blocks per group:   512
    RAID stride:              128
    RAID stripe width:        128
    Flex block group size:    16
    Filesystem created:       Sun Mar 11 19:24:53 2012
    Last mount time:          Sat May 19 13:29:27 2012
    Last write time:          Fri Jun 1 11:07:22 2012
    Mount count:              0
    Maximum mount count:      100
    Last checked:             Fri Jun 1 11:03:50 2012
    Check interval:           31104000 (12 months)
    Next check after:         Mon May 27 11:03:50 2013
    Lifetime writes:          118 GB
    Reserved blocks uid:      0 (user root)
    Reserved blocks gid:      0 (group root)
    First inode:              11
    Inode size:               256
    Required extra isize:     28
    Desired extra isize:      28
    Journal inode:            8
    Default directory hash:   half_md4
    Directory Hash Seed:      383bcbc5-fde9-4720-b98e-2d6224713ecf
    Journal backup:           inode blocks

Johnny Utahh about 4 years

Quite helpful. Does anyone have experience shrinking or growing a LUKS "partition" within a loop device (a "filesystem contained within a file," as I understand it) instead of an LVM, created in a fashion similar to this procedure?