Sluggish performance on NTFS drive with large number of files

The server did not have enough memory. Instead of caching NTFS metafile data in memory, every file access required multiple disk reads. As usual, the issue is obvious once you see it. Let me share what clouded my perspective:

The server showed 2 GB of memory available in both Task Manager and RamMap. So either Windows decided that the available memory was not enough to hold a meaningful part of the metafile data, or some internal restriction prevents the last bit of memory from being used for metafile data.

After the RAM upgrade, Task Manager did not show more memory being used. However, RamMap reported multiple GB of metafile data being held as standby data. Apparently, the standby data can have a substantial impact.

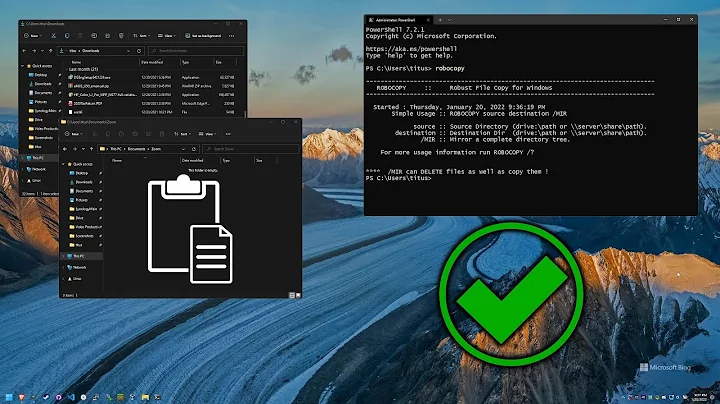

Tools used for the analysis:

- `fsutil fsinfo ntfsinfo driveletter:` to show NTFS MFT size (or NTFSInfo)

- RamMap to show memory allocation

- Process Monitor to show that every file read is preceded by about 4 read operations to drive:\$Mft and drive:\$Directory. Although I could not find an exact definition of $Directory, it seems to be related to the MFT as well.
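To compare the MFT size with installed memory, the `fsutil` output can be read programmatically. The following is a minimal sketch, not a definitive parser: it assumes the output contains a `Mft Valid Data Length` line with a hexadecimal byte count, and it returns `None` if the line is missing or uses a different format, which may be the case on other Windows versions. The function name `mft_size_bytes` and the `SAMPLE` text are hypothetical and not taken from the server discussed here.

```python
import re


def mft_size_bytes(fsutil_output):
    """Return the MFT size in bytes from `fsutil fsinfo ntfsinfo` text, or None.

    Assumption: the size appears as 'Mft Valid Data Length : 0x...'.
    Any other value format is ignored rather than guessed at.
    """
    for line in fsutil_output.splitlines():
        name, sep, value = line.partition(":")
        if sep and name.strip().lower() == "mft valid data length":
            value = value.strip()
            if re.fullmatch(r"0x[0-9a-fA-F]+", value):
                return int(value, 16)
    return None


# Hypothetical sample text, made up for illustration only.
SAMPLE = """\
Bytes Per Cluster :               4096
Bytes Per FileRecord Segment    : 1024
Mft Valid Data Length :           0x0000000220000000
"""

size = mft_size_bytes(SAMPLE)
if size is not None:
    print(f"MFT size: {size / 2**30:.1f} GiB")
```

On a real system the text would come from running `fsutil fsinfo ntfsinfo` with the drive letter in an elevated prompt and passing its output to the function.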


Paul B.

Updated on September 18, 2022


Paul B. almost 2 years

I am looking at this setup:

- Windows Server 2012

- 1 TB NTFS drive, 4 KB clusters, ~90% full

- ~10M files stored in 10,000 folders = ~1,000 files/folder

- Files are mostly quite small (< 50 KB)

- Virtual drive hosted on disk array

When an application accesses files stored in random folders, it takes 60-100 ms to read each file. A test tool suggests that the delay occurs when opening the file. Reading the data then takes only a fraction of that time.

In summary, this means that reading 50 files can easily take 3-4 seconds, which is much more than expected. Writing is done in batches, so write performance is not an issue here.
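The split between open time and read time can be measured with a few lines of code. This is a minimal sketch and not the test tool mentioned above; the function name `time_open_and_read` is hypothetical. It assumes that the file system's metadata lookup is paid during `open`, so a slow open with a fast read points at metadata rather than file data.

```python
import sys
import time


def time_open_and_read(path):
    """Return (open_seconds, read_seconds, byte_count) for one file."""
    t0 = time.perf_counter()
    f = open(path, "rb")  # path resolution and metadata lookup are expected here
    t1 = time.perf_counter()
    try:
        data = f.read()   # transfer of the file contents
    finally:
        f.close()
    t2 = time.perf_counter()
    return t1 - t0, t2 - t1, len(data)


if __name__ == "__main__":
    # Usage: python timing.py <file> [<file> ...]
    for path in sys.argv[1:]:
        open_s, read_s, size = time_open_and_read(path)
        print(f"{path}: open {open_s * 1000:.2f} ms, "
              f"read {read_s * 1000:.2f} ms, {size} bytes")
```

Running it twice on the same file gives a rough cold versus cached comparison, since the second run is likely to be served from memory.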

I already followed advice on SO and SF to arrive at these numbers.

- Using folders to reduce number of files per folder (Storing a million images in the filesystem)

- Run `contig` to defragment folders and files (https://stackoverflow.com/a/291292/1059776)

- 8.3 names and last access time disabled (Configuring NTFS file system for performance)

What to do about the read times?

- Consider 60-100 ms per file to be ok (it isn't, is it?)

- Any ideas how the setup can be improved?

- Are there low-level monitoring tools that can tell what exactly the time is spent on?

UPDATE

As mentioned in the comments, the system runs Symantec Endpoint Protection. However, disabling it does not change the read times.

PerfMon measures 10-20 ms per read. This would mean that any file read takes ~6 I/O read operations, right? Would this be MFT lookup and ACL checks?

The MFT is ~8.5 GB, which is more than the server's main memory.
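The estimate of ~6 reads per file can be checked with simple arithmetic on the measured ranges. The sketch below is illustrative only: the function names are hypothetical, the 1,024-byte MFT record size is the common NTFS default and an assumption for this volume, and 8.5 GB is treated as GiB.

```python
def io_count_range(total_ms, per_io_ms):
    """Estimate (low, mid, high) I/O counts per file access.

    total_ms and per_io_ms are (min, max) tuples in milliseconds.
    """
    low = total_ms[0] / per_io_ms[1]    # fastest access, slowest I/O
    high = total_ms[1] / per_io_ms[0]   # slowest access, fastest I/O
    mid = (sum(total_ms) / 2) / (sum(per_io_ms) / 2)
    return low, mid, high


def mft_record_count(mft_bytes, record_bytes=1024):
    """Number of MFT records that fit, assuming fixed-size records."""
    return mft_bytes // record_bytes


low, mid, high = io_count_range((60, 100), (10, 20))
print(f"reads per file access: {low:.0f} to {high:.0f}, midpoint {mid:.1f}")

records = mft_record_count(int(8.5 * 2**30))
print(f"MFT records at 1 KB each: about {records / 1e6:.1f} million")
```

The midpoint of roughly 5 reads is in line with the ~6 estimated above, and the record count of roughly 9 million is in line with the ~10M files on the drive.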

- Tomas Dabasinskas, about 8 years: To rule something out, would you mind sharing a screenshot of RAMMap?

- Paul B., about 8 years: Do you mean the File Summary table? Now that you mention it, I see a SYMEFA.DB file with 900 MB in memory, which reminds me that Symantec Endpoint Protection is installed on the system. Maybe that's the culprit? I'll try to find out more.

- Tomas Dabasinskas, about 8 years: Actually, I was more interested in Metafile usage.

- Paul B., about 8 years: Ok, got it. Metafile shows 250 MB total, 40 active, 210 standby. Does that seem normal or not?

- Tomas Dabasinskas, about 8 years: Yes, it seems so.

- shodanshok, about 8 years: A question: if the same file is opened twice in a row, is the second open faster? If so, how much faster?


- Paul B., about 8 years: The second read is around 500x faster than the first. Looks like the data comes directly from a cache.

- Paul B., about 8 years: @TomasDabasinskas - getting back to Metafile usage: if the amount kept in memory is much smaller than the actual MFT, can I assume that multiple MFT reads are required before accessing a file? This might explain the issue. Do you happen to know if adding RAM will make Windows cache the MFT? Currently 1 GB is unused. But maybe Windows determines that it cannot fit the entire MFT and therefore doesn't cache anything?

- jarvis, about 8 years: Reads will take time if there are a lot of files in a folder. The number of files matters much more than the size of each file. If it's possible, you can break them down further into more sub-folders with fewer files each. That should increase read speeds.


- phuclv, almost 6 years: Since you have mainly small files, a 4 KB cluster size will waste a lot of space, on average 2 KB/50 KB = 4%. Moreover, you're 90% full, which means Windows already has to write into the space reserved for the MFT (12.5% by default), which is not a good sign. Reducing the cluster size will give you a few percent of free space back for the MFT.


- D-Klotz, almost 8 years: So increasing physical memory did improve the response times? You did not configure any registry setting?

- Paul B., almost 8 years: Yes. I had previously played around with registry settings. But in the end no change was needed after adding memory.

- phuclv, almost 6 years: Standby memory consists of memory regions that are ready for programs to use. Since they're not used yet, the OS uses them as a cache. Once any program needs that memory, it is released immediately.
