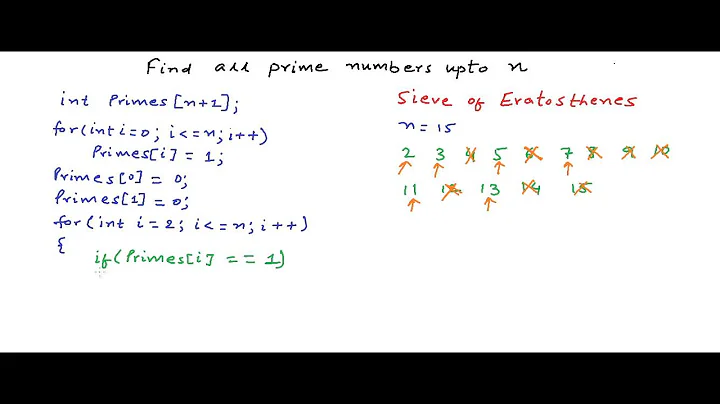

Time complexity of Sieve of Eratosthenes algorithm

Solution 1

Your n/2 + n/3 + n/5 + … n/97 is not O(n), because the number of terms is not constant. [Edit after your edit: O(n2) is too loose an upper bound.] A loose upper-bound is n(1+1/2+1/3+1/4+1/5+1/6+…1/n) (sum of reciprocals of all numbers up to n), which is O(n log n): see Harmonic number. A more proper upper-bound is n(1/2 + 1/3 + 1/5 + 1/7 + …), that is sum of reciprocals of primes up to n, which is O(n log log n). (See here or here.)

The "find the next prime number" bit is only O(n) overall, amortized — you will move ahead to find the next number only n times in total, not per step. So this whole part of the algorithm takes only O(n).

So using these two you get an upper bound of O(n log log n) + O(n) = O(n log log n) arithmetic operations. If you count bit operations, since you're dealing with numbers up to n, they have about log n bits, which is where the factor of log n comes in, giving O(n log n log log n) bit operations.

Solution 2

That the complexity includes the loglogn term tells me that there is a sqrt(n) somewhere.

Keep in mind that when you find a prime number P while sieving, you don't start crossing off numbers at your current position + P; you actually start crossing off numbers at P^2. All multiples of P less than P^2 will have been crossed off by previous prime numbers.

Solution 3

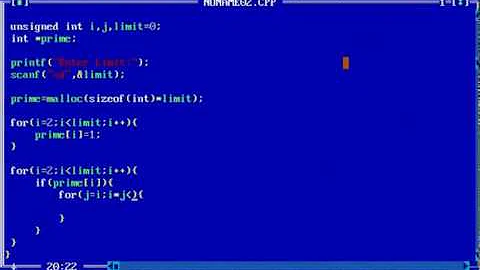

- The inner loop does

n/isteps, whereiis prime => the whole complexity issum(n/i) = n * sum(1/i). According to prime harmonic series, thesum (1/i)whereiis prime islog (log n). In total,O(n*log(log n)). - I think the upper loop can be optimized by replacing

nwithsqrt(n)so overall time complexity willO(sqrt(n)loglog(n)):

void isPrime(int n){

int prime[n],i,j,count1=0;

for(i=0; i < n; i++){

prime[i] = 1;

}

prime[0] = prime[1] = 0;

for(i=2; i <= n; i++){

if(prime[i] == 1){

printf("%d ",i);

for(j=2; (i*j) <= n; j++)

prime[i*j] = 0;

}

}

}

Solution 4

int n = 100;

int[] arr = new int[n+1];

for(int i=2;i<Math.sqrt(n)+1;i++) {

if(arr[i] == 0) {

int maxJ = (n/i) + 1;

for(int j=2;j<maxJ;j++)

{

arr[i*j]= 1;

}

}

}

for(int i=2;i<=n;i++) {

if(arr[i]==0) {

System.out.println(i);

}

}

For all i>2, Ti = sqrt(i) * (n/i) => Tk = sqrt(k) * (n/k) => Tk = n/sqrt(k)

Loop stops when k=sqrt(n) => n[ 1/sqrt(2) + 1/sqrt(3) + ...] = n * log(log(n)) => O(nloglogn)

Related videos on Youtube

Lazer

Updated on June 06, 2021Comments

-

Lazer almost 3 years

From Wikipedia:

The complexity of the algorithm is

O(n(logn)(loglogn))bit operations.How do you arrive at that?

That the complexity includes the

loglognterm tells me that there is asqrt(n)somewhere.

Suppose I am running the sieve on the first 100 numbers (

n = 100), assuming that marking the numbers as composite takes constant time (array implementation), the number of times we usemark_composite()would be something liken/2 + n/3 + n/5 + n/7 + ... + n/97 = O(n^2)And to find the next prime number (for example to jump to

7after crossing out all the numbers that are multiples of5), the number of operations would beO(n).So, the complexity would be

O(n^3). Do you agree?-

R. Martinho Fernandes about 14 yearsI don't know about the rest (too mathy for my too sleepy brain right now), but the square root stems from the fact that if a number has no divisors less that its square root, it is prime. Also, I just learned that loglog(n) means there's a square root. Nice.

R. Martinho Fernandes about 14 yearsI don't know about the rest (too mathy for my too sleepy brain right now), but the square root stems from the fact that if a number has no divisors less that its square root, it is prime. Also, I just learned that loglog(n) means there's a square root. Nice. -

sidgeon smythe about 14 yearsHow does the loglog(n) being there mean there is a sqrt(n) somewhere? (@Martinho: Why do you say you "just learned this"?) The actual analysis does not involve any square roots!

-

-

Will Ness about 11 yearsthis statement is true in itself, but has no bearing on the quoted statement which itself has no merit. Whether we start from

Will Ness about 11 yearsthis statement is true in itself, but has no bearing on the quoted statement which itself has no merit. Whether we start fromporp^2, the complexity is the same (with direct access arrays).SUM (1/p) {p<N} ~ log (log N)is the reason. -

crisron about 8 yearsFor one part of the problem, you are considering the asymptotic complexity. For the other part, you are considering amortized compexity. I'm confused.

crisron about 8 yearsFor one part of the problem, you are considering the asymptotic complexity. For the other part, you are considering amortized compexity. I'm confused. -

sidgeon smythe about 8 years@crisron What is the problem? It's not the case that "asymptotic complexity" and "amortized complexity" are two different kinds of the same thing. Amortization is just a technique for more carefully counting something, which can happen to be the asymptotic complexity.

-

crisron about 8 yearsAll this while I used to think of them as different. Thanks for clarifying it.

crisron about 8 yearsAll this while I used to think of them as different. Thanks for clarifying it. -

a_123 about 8 years@ShreevatsaR Why do we calculate the sum of harmonic series upto n terms. Shouldn't we calculate just upto sqrt(n) terms? Giving the answer as theta of n(loglogsqrt(n)) arithmetic operations? Also, wikipedia says that the space complexity is O(n). Shouldn't that be theta of n because we need an array of n elements in any case?

-

sidgeon smythe about 8 years@s_123 Yes you can calculate just up to √n terms, but it doesn't make a difference in the asymptotic analysis (or even a significant practical difference in the running time), because log(√x) = (1/2)log x for any x. So Θ(n log log √n) = Θ(n log log n). To your other question, yes the space complexity is Θ(n), which is also O(n): it is conventional to use O() to indicate that you're specifying the upper bound, instead of saying Θ() to indicate that it's the lower bound as well (especially when the lower bound is obvious, as it is here).

-

a_123 about 8 years@ShreevatsaR Thanks :)

-

a_123 about 8 years@ShreevatsaR Oh..how do you type the notation for theta here?

-

sidgeon smythe about 8 years@s_123 :-) Θ is a Unicode character; I just copy-pasted it from somewhere else but you can also probably find a keyboard layout with which you can input this character. Also you may find my answer here helpful: What is the difference between O, Ω, and Θ?

-

Bananach almost 6 years@ShreevatsaR Shouldn't every single sieving step be bounded by $\sqrt(n)$ so that you actually can replace the main $n$ by $\sqrt{n}$? You only need to check the multiples until you reach $\sqrt(n)$

-

sidgeon smythe almost 6 years@Bananach You can stop at √n (instead of at n), but it doesn't make a difference: the running time will be (n/2 + n/3 + ... + n/P) arithmetic operations where P is the largest prime less than √n (instead of n), but that sum is still asymptotic to n log log n, just as if you stopped at n. (See the comment above from "Mar 25 '16 at 21:57".)

-

Bananach almost 6 years@ShreevatsaR Why is the numerator n though? For each prime below or equal to P, I need to cross out the multiples that are below P, so I would have said the running time is (P/2+P/3+...+P/P)=P log log P

-

sidgeon smythe almost 6 years@Bananach Then you'll only find the primes up to P, not up to N. E.g. consider N = 1000, so that P=31. Also consider the fact that 893 = 19×47 is not prime. Now, if you don't cross out all multiples of 19 up to 893 or higher, you have no way of knowing that 893 is not prime.

-

Bananach almost 6 years@ShreevatsaR of course, thanks for letting me dig out this old thread for such a stupid question

-

sidgeon smythe almost 6 years@Bananach Not stupid at all, I get confused from time to time too :-)

-

Will Ness almost 6 yearswrong. we mark all the way to the N: N/2 + N/3 + N/5 + N/7 + N/11 + ... = N (1/2 + 1/3 + 1/5 + 1/7 + 1/11 + ...) ~ N log log (sqrt N) ~ N log log N.

Will Ness almost 6 yearswrong. we mark all the way to the N: N/2 + N/3 + N/5 + N/7 + N/11 + ... = N (1/2 + 1/3 + 1/5 + 1/7 + 1/11 + ...) ~ N log log (sqrt N) ~ N log log N. -

Will Ness almost 6 yearsno, replacing n with sqrt(n) makes it ~ n log log (sqrt n) which is still ~ n log log n. and

Will Ness almost 6 yearsno, replacing n with sqrt(n) makes it ~ n log log (sqrt n) which is still ~ n log log n. andisprimeis absolutely the wrong name to use there. -

Jack O'Connor over 2 yearsThe optimization in this answer helped me fix a "time limit exceeded" error on leetcode :)