Gevent monkeypatching breaking multiprocessing

Solution 1

Use monkey.patch_all(thread=False, socket=False).

I have run into the same issue in a similar situation and tracked this down to line 115 in gevent/monkey.py under the patch_socket() function: _socket.socket = socket.socket. Commenting this line out prevents the breakage.

This is where gevent replaces the stdlib socket library with its own. multiprocessing.connection uses the socket library quite extensively and apparently does not tolerate this change.

Specifically, you will see this in any scenario where a module you import performs a gevent.monkey.patch_all() call without setting socket=False. In my case it was grequests that did this, and I had to override the patching of the socket module to fix this error.
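A minimal sketch of this workaround, assuming gevent is installed. The helper name patch_without_socket is hypothetical, and the fallback for a missing gevent is only there so the snippet runs anywhere.

```python
import importlib.util
import socket


def patch_without_socket():
    """Apply gevent's patches but leave the thread and socket modules alone.

    Hypothetical helper. Returns True if gevent was found and patched,
    False if gevent is not installed.
    """
    if importlib.util.find_spec("gevent") is None:
        return False
    from gevent import monkey

    # thread=False and socket=False are the flags suggested above.
    monkey.patch_all(thread=False, socket=False)
    return True


patched = patch_without_socket()

# socket.socket should still be the stdlib class, so multiprocessing.connection
# keeps working with ordinary blocking sockets.
print(patched, socket.socket.__module__)
```

The trade-off is that socket I/O in this process is no longer cooperative, so this only helps if the process does not rely on gevent for its own network requests.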

Solution 2

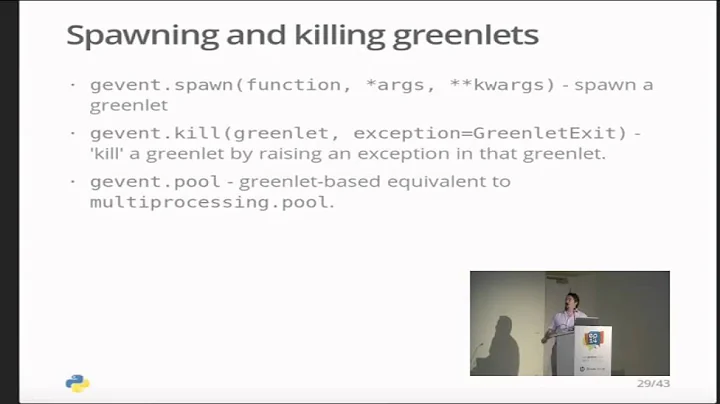

Using multiprocessing together with gevent is unfortunately known to cause problems. Your rationale, however, is reasonable ("a lot of network activity, but also a lot of CPU activity"). If you like, have a look at http://gehrcke.de/gipc. gipc is designed primarily for your use case. With gipc, you can easily spawn a few fully gevent-aware child processes and let them cooperatively talk to each other and/or with the parent through pipes.

If you have specific questions, you're welcome to get back to me.
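A rough sketch of what that could look like, assuming gevent and gipc are installed. It follows the gipc.pipe() / gipc.start_process() pattern from the gipc documentation, but the function names (cpu_work, feed_jobs, child, main) are made up for illustration and it has not been checked against a particular gipc version.

```python
def cpu_work(n):
    """Stand-in for the CPU-heavy part: sum of squares below n."""
    return sum(i * i for i in range(n))


def feed_jobs(writer, jobs):
    """Send each job through the pipe, then a None sentinel to stop the child."""
    for job in jobs:
        writer.put(job)
    writer.put(None)


def child(reader):
    """Runs in the child process: read jobs until the None sentinel arrives."""
    while True:
        n = reader.get()
        if n is None:
            break
        print(n, cpu_work(n))


def main():
    # Imported lazily so this module loads even without gevent/gipc installed.
    import gevent
    import gipc

    with gipc.pipe() as (reader, writer):
        # The read end is handed over to the child process.
        proc = gipc.start_process(target=child, args=(reader,))
        # The parent feeds jobs from a greenlet, so other greenlets keep running.
        feeder = gevent.spawn(feed_jobs, writer, [10, 100, 1000])
        feeder.join()
        proc.join()
```

Call main() from an if __name__ == "__main__": guard. Each child could additionally run its own gevent pool for the network-bound part.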

Solution 3

If you use the original multiprocessing.Queue, your code will work normally, even with a monkey-patched socket.

import multiprocessing

from gevent import monkey

monkey.patch_all(thread=False)

q = multiprocessing.Queue()
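The likely reason this works is that multiprocessing.Queue is built on a pipe, whereas manager.Queue() is a proxy that talks to a separate manager process through multiprocessing.connection. A minimal sketch of handing work to a child process this way, assuming gevent is installed; worker and main are hypothetical names.

```python
import multiprocessing


def worker(q_in, q_out):
    """Child process: double each number until the None sentinel arrives."""
    for item in iter(q_in.get, None):
        q_out.put(item * 2)


def main():
    # Assumes gevent is installed; imported here so the module loads without it.
    from gevent import monkey

    monkey.patch_all(thread=False)

    # Plain pipe-based queues instead of manager.Queue().
    q_in = multiprocessing.Queue()
    q_out = multiprocessing.Queue()

    proc = multiprocessing.Process(target=worker, args=(q_in, q_out))
    proc.start()

    for n in (1, 2, 3):
        q_in.put(n)
    q_in.put(None)  # tell the worker to stop

    results = [q_out.get() for _ in range(3)]
    proc.join()
    return results
```

Call main() from an if __name__ == "__main__": guard, which multiprocessing needs on platforms that do not fork.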

Solution 4

Your provided code works for me on Windows 7.

EDIT:

Removed previous answer, because I've tried your code on Ubuntu 11.10 VPS, and I'm getting the same error.

Looks like Eventlet has this issue too.

Solution 5

Wrote a replacement Nose Multiprocess plugin - this one should play well with all kinds of crazy Gevent-based patching.

https://pypi.python.org/pypi/nose-gevented-multiprocess/

https://github.com/dvdotsenko/nose_gevent_multiprocess

- Switches from multiprocess.fork to plain subprocess.popen for worker processes (fixes module-level erroneously shared objects issues for me)
- Switched from multiprocess.Queue to JSON-RPC over HTTP for master-to-clients RPC
  - This can now theoretically allow tests to be distributed to multiple machines
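To illustrate the general idea (this is not the plugin's actual code), here is a small sketch that starts a worker with subprocess.Popen and exchanges one JSON message with it. For brevity it uses the worker's stdin/stdout pipes rather than JSON-RPC over HTTP; WORKER_SOURCE and run_job are hypothetical names.

```python
import json
import subprocess
import sys

# Source of the worker program. It runs in a fresh interpreter, so it shares
# no module-level state with the parent (unlike a forked child).
WORKER_SOURCE = """
import json, sys
job = json.load(sys.stdin)
json.dump({"id": job["id"], "result": sum(job["numbers"])}, sys.stdout)
"""


def run_job(job):
    """Start one worker with subprocess.Popen and exchange a JSON message."""
    proc = subprocess.Popen(
        [sys.executable, "-c", WORKER_SOURCE],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        text=True,
    )
    # Send the job as JSON on stdin and read the JSON reply from stdout.
    out, _ = proc.communicate(json.dumps(job))
    if proc.returncode != 0:
        raise RuntimeError("worker exited with code %d" % proc.returncode)
    return json.loads(out)
```

For example, run_job({"id": 1, "numbers": [1, 2, 3]}) returns {"id": 1, "result": 6}. Because the messages are plain JSON, swapping the pipes for HTTP is what would allow workers to live on other machines.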


user964375

Updated on June 04, 2022Comments

-

user964375 almost 2 years

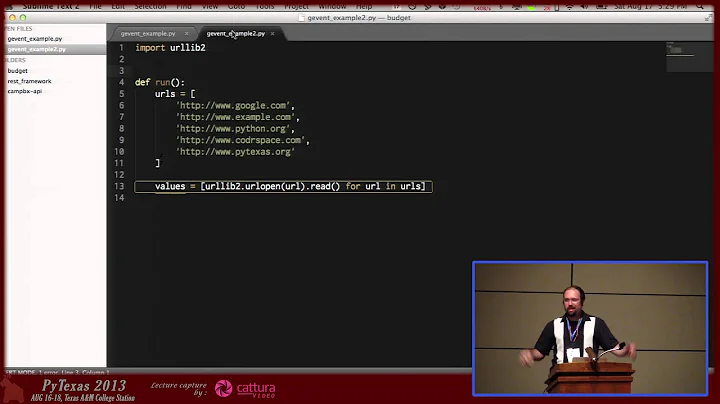

I am attempting to use multiprocessing's pool to run a group of processes, each of which will run a gevent pool of greenlets. The reason for this is that there is a lot of network activity, but also a lot of CPU activity, so to maximise my bandwidth and all of my CPU cores, I need multiple processes AND gevent's async monkey patching. I am using multiprocessing's manager to create a queue which the processes will access to get data to process.

Here is a simplified fragment of the code:

import multiprocessing
from gevent import monkey
monkey.patch_all(thread=False)
manager = multiprocessing.Manager()
q = manager.Queue()

Here is the exception it produces:

Traceback (most recent call last):
  File "multimonkeytest.py", line 7, in <module>
    q = manager.Queue()
  File "/usr/local/Cellar/python/2.7.2/Frameworks/Python.framework/Versions/2.7/lib/python2.7/multiprocessing/managers.py", line 667, in temp
    token, exp = self._create(typeid, *args, **kwds)
  File "/usr/local/Cellar/python/2.7.2/Frameworks/Python.framework/Versions/2.7/lib/python2.7/multiprocessing/managers.py", line 565, in _create
    conn = self._Client(self._address, authkey=self._authkey)
  File "/usr/local/Cellar/python/2.7.2/Frameworks/Python.framework/Versions/2.7/lib/python2.7/multiprocessing/connection.py", line 175, in Client
    answer_challenge(c, authkey)
  File "/usr/local/Cellar/python/2.7.2/Frameworks/Python.framework/Versions/2.7/lib/python2.7/multiprocessing/connection.py", line 409, in answer_challenge
    message = connection.recv_bytes(256) # reject large message
IOError: [Errno 35] Resource temporarily unavailable

I believe this must be due to some difference between the behaviour of the normal socket module and gevent's socket module.

If I monkeypatch within the subprocess, the queue is created successfully, but when the subprocess tries to get() from the queue, a very similar exception occurs. The socket does need to be monkeypatched because the subprocesses make large numbers of network requests.

My version of gevent, which I believe is the latest:

>>> gevent.version_info
(1, 0, 0, 'alpha', 3)

Any ideas?

jfs about 11 years

related: bugs.python.org/issue6056

user964375 over 12 years

The error is occurring without actually doing any requests, but I have also tested with a local gevent bottle.py powered server. I am getting the traceback on both OS X Lion and Ubuntu 11.04 VPS, both with Python 2.7. What OS / Python / Gevent are you using?

reclosedev over 12 years

@user964375, Hm, on Ubuntu 11.10 (VPS) I'm getting the same error. No errors on Win7.

Martin over 12 years

Tested on Windows XP, and the code breaks after some time even without the gevent part. This seems to be an issue in the multiprocessing module.

Nisan.H about 11 years

I personally think it's the thoughtless monkey patching that's problematic. Anything that uses gevent should come with a very large warning sign about what it's going to break. The average programmer who hasn't yet encountered this particular quirk of gevent-multiprocessing incompatibility would (rightfully) assume that gevent doesn't break stdlib features. It's not exactly obvious that gevent doesn't patch the IPC functions (which is doable) when it patches socket.

ddotsenko over 10 years

I tried the gipc approach. Since it lacks a Queue object, an alternative implementation using pipes was too convoluted. See the nose-gevented-multiprocess module instead.

Ishan Bhatt almost 7 years

Can I use the requests library with gevent without patching the socket?