How to mark one of the RAID1 disks as a spare? (mdadm)

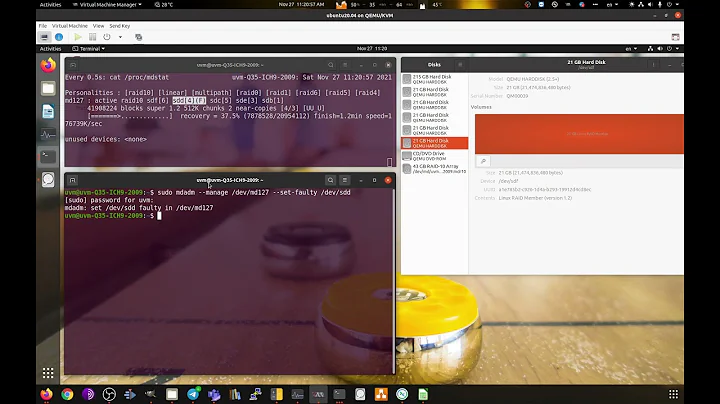

You can check the current state of the array with cat /proc/mdstat; all the status output in this example comes from there.

So let's assume we have md127 with 3 disks in a RAID1. Here they're just partitions of one disk, but that doesn't matter:

md127 : active raid1 vdb3[2] vdb2[1] vdb1[0]

102272 blocks super 1.2 [3/3] [UUU]
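If you'd rather not eyeball the output each time, the two bracketed fields can be pulled out with a bit of awk. This is only a rough sketch: md_summary is a made-up helper name (not part of mdadm), and it assumes the usual /proc/mdstat layout where the "blocks" line follows the array's header line.

```shell
# Print "<array> <configured>/<working> <map>" for one md array.
# Usage: md_summary md127 [mdstat-file]   (the file defaults to /proc/mdstat)
md_summary() {
    awk -v a="$1" '
        $1 == a && $2 == ":" { found = 1; next }   # header line of the array we want
        found && /blocks/ {
            counts = $(NF-1)                       # e.g. "[3/3]"
            map = $NF                              # e.g. "[UUU]"
            gsub(/\[|\]/, "", counts)              # drop the brackets around the counts
            print a, counts, map
            exit
        }
    ' "${2:-/proc/mdstat}"
}
```

For the array above, md_summary md127 should print the array name followed by 3/3 and [UUU].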

We need to offline one of the disks before we can remove it:

$ sudo mdadm --manage /dev/md127 --fail /dev/vdb2

mdadm: set /dev/vdb2 faulty in /dev/md127

And the status now shows it as failed (F), with only 2 of the 3 members up:

md127 : active raid1 vdb3[2] vdb2[1](F) vdb1[0]

102272 blocks super 1.2 [3/2] [U_U]

We can now remove this disk:

$ sudo mdadm --manage /dev/md127 --remove /dev/vdb2

mdadm: hot removed /dev/vdb2 from /dev/md127

md127 : active raid1 vdb3[2] vdb1[0]

102272 blocks super 1.2 [3/2] [U_U]

The array still expects 3 devices ([3/2]), so now shrink it to 2:

$ sudo mdadm --grow /dev/md127 --raid-devices=2

raid_disks for /dev/md127 set to 2

unfreeze

At this point we have successfully reduced the array down to 2 disks:

md127 : active raid1 vdb3[2] vdb1[0]

102272 blocks super 1.2 [2/2] [UU]

So now the disk we removed can be added back, and it joins as a hotspare:

$ sudo mdadm -a /dev/md127 /dev/vdb2

mdadm: added /dev/vdb2

md127 : active raid1 vdb2[3](S) vdb3[2] vdb1[0]

102272 blocks super 1.2 [2/2] [UU]

The (S) shows it's a hotspare.
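The four steps so far (fail, remove, shrink, add back) can be wrapped up roughly like this. Treat it as a sketch, not a tested tool: demote_to_spare and the RUN variable are names I made up, and by default it only prints the commands so you can check them before running anything as root.

```shell
# Run a command when RUN=1, otherwise just print it (dry run).
run() {
    if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

# Demote one active RAID1 member to a hotspare.
# Usage: demote_to_spare <md device> <member> <number of active devices to keep>
demote_to_spare() {
    md=$1; disk=$2; active=$3
    run mdadm --manage "$md" --fail "$disk" &&              # mark the member faulty
    run mdadm --manage "$md" --remove "$disk" &&            # take it out of the array
    run mdadm --grow "$md" --raid-devices="$active" &&      # shrink the active set
    run mdadm --manage "$md" --add "$disk"                  # add it back; it joins as a spare
}
```

Calling demote_to_spare /dev/md127 /dev/vdb2 2 prints the same four mdadm commands used above; with RUN=1 in the environment it executes them instead, stopping at the first one that fails.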

We can verify this works as expected by failing an existing disk and noticing a rebuild takes place on the spare:

$ sudo mdadm --manage /dev/md127 --fail /dev/vdb1

mdadm: set /dev/vdb1 faulty in /dev/md127

md127 : active raid1 vdb2[3] vdb3[2] vdb1[0](F)

102272 blocks super 1.2 [2/1] [_U]

[=======>.............] recovery = 37.5% (38400/102272) finish=0.0min speed=38400K/sec

vdb2 is no longer marked (S) because it's no longer a hotspare: it has been promoted to an active member and is being rebuilt.
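On a real disk the rebuild takes a while, so it can help to watch the progress line. A small sketch that reads the percentage out of /proc/mdstat; md_recovery is a hypothetical helper name, and it assumes the progress line has the "recovery = NN%" shape shown above.

```shell
# Print the recovery percentage of an array, or nothing if no rebuild is running.
# Usage: md_recovery md127 [mdstat-file]   (the file defaults to /proc/mdstat)
md_recovery() {
    awk -v a="$1" '
        /^md/ { in_array = ($1 == a) }         # remember which array block we are in
        in_array && /recovery =/ {
            for (i = 1; i <= NF; i++)
                if ($i == "recovery") {        # fields are: recovery, =, 37.5%
                    print $(i+2)
                    exit
                }
        }
    ' "${2:-/proc/mdstat}"
}
```

If you only want to block until the rebuild is done, mdadm --wait /dev/md127 should do that without any parsing.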

After the failed disk (vdb1) has been removed and added back, the same way as vdb2 earlier, it is now the one marked as the hotspare:

md127 : active raid1 vdb1[4](S) vdb2[3] vdb3[2]

102272 blocks super 1.2 [2/2] [UU]
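To check which members are currently spares without reading the line by hand, you can filter on the (S) marker. Again just a sketch; md_spares is a made-up name and it relies on the name[N](S) format shown in the output above.

```shell
# List the members of an array that /proc/mdstat marks with (S).
# Usage: md_spares md127 [mdstat-file]   (the file defaults to /proc/mdstat)
md_spares() {
    awk -v a="$1" '
        $1 == a && $2 == ":" {
            for (i = 3; i <= NF; i++)
                if ($i ~ /\(S\)$/) {
                    name = $i
                    sub(/\[.*/, "", name)      # strip the "[4](S)" part, keep the device name
                    print name
                }
        }
    ' "${2:-/proc/mdstat}"
}
```

For the final state above, md_spares md127 should print vdb1.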


Infogeek

Updated on September 18, 2022

Comments

-

Infogeek over 1 year

I have a healthy and working software based RAID1 using 3 HDDs as active on my Debian machine.

I want to mark one of the disks as a spare so it ends up being 2 active + 1 spare.

Things like:

mdadm --manage --raid-devices=2 --spare-devices=1 /dev/md0

and similar just fail, either saying that one of the options is not supported in the current mode or simply failing:

Billy@localhost~#: mdadm -G --raid-devices=2 /dev/md0

mdadm: failed to set raid disks

unfreeze

or

Billy@localhost~#: mdadm --manage --raid-devices=2 --spare-devices=1 /dev/md0

mdadm: :option --raid-devices not valid in manage mode

or similar. I have no idea, man. Please help?

-

Infogeek over 7 years

Thank you sir. You are the man. I find it odd that you HAVE TO fail it first and there's no "switch" parameter or something. By the way, do you also think running 3 HDDs as active in RAID1 is probably a waste? Just want your opinion on whether I've done the right thing.

-

Stephen Harris over 7 years

In a RAID-1 with 3 disks I would keep all three active, rather than using a hotspare, so that if there's a failure you still have resiliency and don't need to wait for a rebuild. 2 active and 1 hotspare seems an odd configuration.
