KeyError: The tensor variable refers to a tensor which does not exist

For graph.get_tensor_by_name("prediction:0") to work, you must have given the tensor that name when you created it. This is how you can name it:

prediction = tf.nn.softmax(tf.matmul(last,weight)+bias, name="prediction")

If you have already trained the model and can't rename the tensor, you can still get that tensor by its default name as in,

y_pred = graph.get_tensor_by_name("Reshape_1:0")

If Reshape_1 is not the actual name of the tensor, you'll have to look at the names in the graph and figure it out.

You can inspect that with

for op in graph.get_operations():
    print(op.name)
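The loop above prints operation names, while get_tensor_by_name expects a tensor name of the form "<op_name>:<output_index>". As a rough sketch of how you might narrow down the right name, the plain-Python helper below splits a requested tensor name and searches a list of operation names for likely matches. The helper names and the sample operation names are hypothetical and are not part of TensorFlow or of the asker's graph.

```python
def split_tensor_name(tensor_name):
    """Split '<op_name>:<output_index>' into (op_name, output_index)."""
    op_name, sep, index = tensor_name.rpartition(":")
    if not sep or not op_name or not index.isdigit():
        raise ValueError(
            "expected '<op_name>:<output_index>', got %r" % tensor_name
        )
    return op_name, int(index)


def candidate_tensor_names(op_names, wanted):
    """Return tensor names whose op name equals or contains the wanted op name."""
    wanted_op, index = split_tensor_name(wanted)
    if wanted_op in op_names:
        # Exact match: the requested name is usable as it is.
        return [wanted]
    needle = wanted_op.lower()
    # Otherwise fall back to a case-insensitive substring search.
    return ["%s:%d" % (name, index) for name in op_names if needle in name.lower()]


# Hypothetical operation names, standing in for the output of the loop above.
op_names = ["Placeholder", "rnn/transpose_1", "MatMul", "add", "Softmax"]

print(candidate_tensor_names(op_names, "prediction:0"))
print(candidate_tensor_names(op_names, "softmax:0"))
```

With real data you would build op_names from [op.name for op in graph.get_operations()]. An empty result for "prediction:0" corresponds to the KeyError case: no operation with that name was ever created in the graph.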

Comments

-

Madhi almost 2 years

Using LSTMCell, I trained a model to do text generation. I started the TensorFlow session and initialized all the TensorFlow variables using tf.global_variables_initializer().

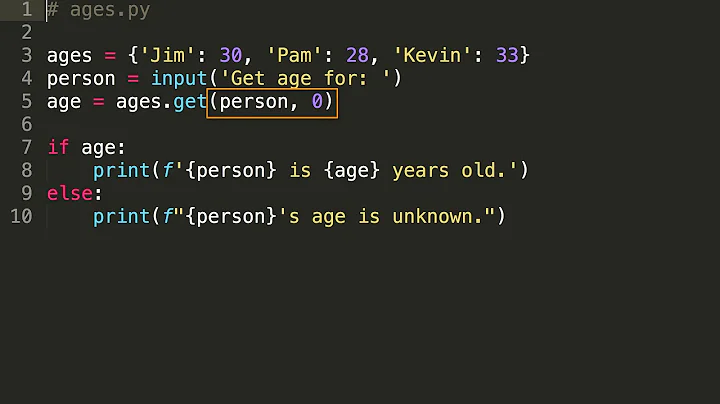

import tensorflow as tf

sess = tf.Session()
# code blocks
run_init_op = tf.global_variables_initializer()
sess.run(run_init_op)
saver = tf.train.Saver()
# variable that makes the prediction
prediction = tf.nn.softmax(tf.matmul(last, weight) + bias)
# feed the input data into the model and train it
# save the TensorFlow model
save_path = saver.save(sess, '/tmp/text_generate_trained_model.ckpt')
print("Model saved in the path : {}".format(save_path))

The model was trained and its session was saved. Link to review the whole code: lstm_rnn.py

Now I loaded the stored model and tried to generate text for the document. I restored the model with the following code:

tf.reset_default_graph()
imported_meta = tf.train.import_meta_graph('text_generate_trained_model.ckpt.meta')
with tf.Session() as sess:
    imported_meta.restore(sess, tf.train.latest_checkpoint('./'))
    # access the default graph which we restored
    graph = tf.get_default_graph()
    # op that can be processed to get the output
    # last is the tensor that is the prediction of the network
    y_pred = graph.get_tensor_by_name("prediction:0")
    # generate characters
    for i in range(500):
        x = np.reshape(pattern, (1, len(pattern), 1))
        x = x / float(n_vocab)
        prediction = sess.run(y_pred, feed_dict=x)
        index = np.argmax(prediction)
        result = int_to_char[index]
        seq_in = [int_to_char[value] for value in pattern]
        sys.stdout.write(result)
        pattern.append(index)
        pattern = pattern[1:len(pattern)]
    print("\n Done...!")
    sess.close()

I found out that the prediction variable does not exist in the graph.

KeyError: "The name 'prediction:0' refers to a Tensor which does not exist. The operation, 'prediction', does not exist in the graph."

Full code is available here text_generation.py

Though I saved all the TensorFlow variables, the prediction tensor is not saved in the TensorFlow computation graph. What is wrong in my lstm_rnn.py file?

Thanks!

-

ab123 almost 6 years

I have similar problem, but when I use your suggestion and name my layer as

prediction = tf.layers.dense(net, 8, name='prediction_output')

I get operations named like

prediction_output/bias
prediction_output/bias/read
prediction_output/bias/Initializer/zeros
prediction_output/bias/Assign
prediction_output/kernel/Regularizer/l2_regularizer/scale

but not any name prediction_output, which is why I get the error

KeyError: "The name 'prediction_output:0' refers to a Tensor which does not exist. The operation, 'prediction_output', does not exist in the graph."

How can I resolve this?

-

Effective_cellist almost 6 years

The layer in my answer is tf.nn.softmax, which doesn't have parameters like bias or weights. On the other hand, you are using tf.layers.dense, which has those parameters, hence you'll have the bias and weights named after the name you assign to the layer. If you want your output in prediction_output, you can leave the dense layer unnamed, and then pass its output to an identity function with that name.

prediction = tf.layers.dense(net, 8)
final_output = tf.identity(prediction, name='prediction_output')