MySQL: Pacemaker cannot start the failed master as a new slave?

Eureka!

Both of us forgot a very very important log file, it's... /var/log/mysqld.log:

socket: '/var/lib/mysql/mysql.sock' port: 3306 MySQL Community Server (GPL) by Atomicorp

[Note] Slave SQL thread initialized, starting replication in log 'mysql-bin.000082' at position 58569, relay log './mysqld-relay-bin.000002' position: 58715

[Note] Slave I/O thread: connected to master '[email protected]:3306',replication started in log 'mysql-bin.000082' at position 58569

[Warning] Aborted connection 10 to db: 'unconnected' user: 'test_user' host: 'localhost' (init_connect command failed)

[Warning] The MySQL server is running with the --read-only option so it cannot execute this statement

[Note] /usr/libexec/mysqld: Normal shutdown

As you can guess, I tracked the user activity by combining the binlog and init-connect:

init_connect = "INSERT INTO audit.accesslog (connect_time, user_host, connection_id) VALUES (NOW(), CURRENT_USER(), CONNECTION_ID());"

but serving-6192 is set read-only when starting as a slave, and then when Pacemaker perform monitor operation with test_user:

# Check for test table

ocf_run -q $MYSQL $MYSQL_OPTIONS_TEST \

-e "SELECT COUNT(*) FROM $OCF_RESKEY_test_table"

init_connect command failed with the above error:

The MySQL server is running with the

--read-onlyoption so it cannot execute this statement

The solution is I should set the init_connect option to the empty string before initializing the monitor action (don't forget to turn it back when promoting a node to become a master)

To anyone who are using event scheduler: also note that you must turn it on when promoting a slave to become a master:

set_event_scheduler() {

local es_val

if ocf_is_true $1; then

es_val="on"

else

es_val="off"

fi

ocf_run $MYSQL $MYSQL_OPTIONS_REPL \

-e "SET GLOBAL event_scheduler=${es_val}"

}

get_event_scheduler() {

# Check if event-scheduler is set

local event_scheduler_state

event_scheduler_state=`$MYSQL $MYSQL_OPTIONS_REPL \

-e "SHOW VARIABLES" | grep event_scheduler | awk '{print $2}'`

if [ "$event_scheduler_state" = "ON" ]; then

return 0

else

return 1

fi

}

mysql_promote() {

local master_info

if ( ! mysql_status err ); then

return $OCF_NOT_RUNNING

fi

ocf_run $MYSQL $MYSQL_OPTIONS_REPL \

-e "STOP SLAVE"

# Set Master Info in CIB, cluster level attribute

update_data_master_status

master_info="$(get_local_ip)|$(get_master_status File)|$(get_master_status Position)"

${CRM_ATTR_REPL_INFO} -v "$master_info"

rm -f $tmpfile

set_read_only off || return $OCF_ERR_GENERIC

set_event_scheduler on || return $OCF_ERR_GENERIC

Also don't forget to turn it off when demoting:

'pre-demote')

# Is the notification for our set

notify_resource=`echo $OCF_RESKEY_CRM_meta_notify_demote_resource|cut -d: -f1`

my_resource=`echo $OCF_RESOURCE_INSTANCE|cut -d: -f1`

if [ $notify_resource != ${my_resource} ]; then

ocf_log debug "Notification is not for us"

return $OCF_SUCCESS

fi

demote_host=`echo $OCF_RESKEY_CRM_meta_notify_demote_uname|tr -d " "`

if [ $demote_host = ${HOSTNAME} ]; then

ocf_log info "post-demote notification for $demote_host"

set_read_only on

set_event_scheduler off

Cheers,

Related videos on Youtube

Greg Petersen

Updated on September 18, 2022Comments

-

Greg Petersen almost 2 years

- pacemaker-1.0.12-1

- corosync-1.2.7-1.1

I'm going to setup failover for MySQL replication (1 master and 1 slave) follow this guide: https://github.com/jayjanssen/Percona-Pacemaker-Resource-Agents/blob/master/doc/PRM-setup-guide.rst

Here're the output of

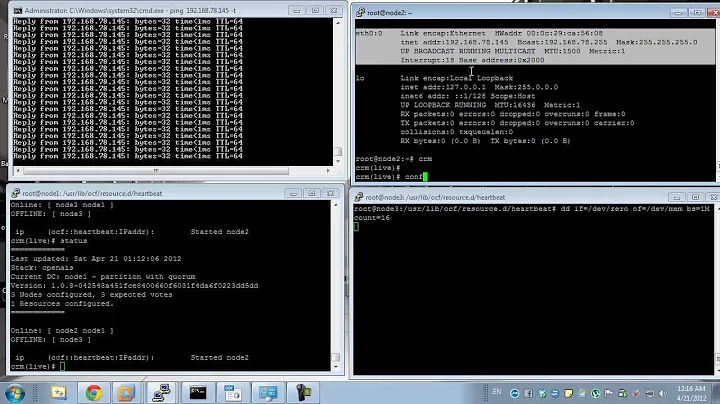

crm configure show:node serving-6192 \ attributes p_mysql_mysql_master_IP="192.168.6.192" node svr184R-638.localdomain \ attributes p_mysql_mysql_master_IP="192.168.6.38" primitive p_mysql ocf:percona:mysql \ params config="/etc/my.cnf" pid="/var/run/mysqld/mysqld.pid" socket="/var/lib/mysql/mysql.sock" replication_user="repl" replication_passwd="x" test_user="test_user" test_passwd="x" \ op monitor interval="5s" role="Master" OCF_CHECK_LEVEL="1" \ op monitor interval="2s" role="Slave" timeout="30s" OCF_CHECK_LEVEL="1" \ op start interval="0" timeout="120s" \ op stop interval="0" timeout="120s" primitive writer_vip ocf:heartbeat:IPaddr2 \ params ip="192.168.6.8" cidr_netmask="32" \ op monitor interval="10s" \ meta is-managed="true" ms ms_MySQL p_mysql \ meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true" globally-unique="false" target-role="Master" is-managed="true" colocation writer_vip_on_master inf: writer_vip ms_MySQL:Master order ms_MySQL_promote_before_vip inf: ms_MySQL:promote writer_vip:start property $id="cib-bootstrap-options" \ dc-version="1.0.12-unknown" \ cluster-infrastructure="openais" \ expected-quorum-votes="2" \ no-quorum-policy="ignore" \ stonith-enabled="false" \ last-lrm-refresh="1341801689" property $id="mysql_replication" \ p_mysql_REPL_INFO="192.168.6.192|mysql-bin.000006|338"crm_mon:Last updated: Mon Jul 9 10:30:01 2012 Stack: openais Current DC: serving-6192 - partition with quorum Version: 1.0.12-unknown 2 Nodes configured, 2 expected votes 2 Resources configured. ============ Online: [ serving-6192 svr184R-638.localdomain ] Master/Slave Set: ms_MySQL Masters: [ serving-6192 ] Slaves: [ svr184R-638.localdomain ] writer_vip (ocf::heartbeat:IPaddr2): Started serving-6192Editing

/etc/my.cnfon the serving-6192 of wrong syntax to test failover and it's working fine:- svr184R-638.localdomain being promoted to become the master

- writer_vip switch to svr184R-638.localdomain

Current state:

Last updated: Mon Jul 9 10:35:57 2012 Stack: openais Current DC: serving-6192 - partition with quorum Version: 1.0.12-unknown 2 Nodes configured, 2 expected votes 2 Resources configured. ============ Online: [ serving-6192 svr184R-638.localdomain ] Master/Slave Set: ms_MySQL Masters: [ svr184R-638.localdomain ] Stopped: [ p_mysql:0 ] writer_vip (ocf::heartbeat:IPaddr2): Started svr184R-638.localdomain Failed actions: p_mysql:0_monitor_5000 (node=serving-6192, call=15, rc=7, status=complete): not running p_mysql:0_demote_0 (node=serving-6192, call=22, rc=7, status=complete): not running p_mysql:0_start_0 (node=serving-6192, call=26, rc=-2, status=Timed Out): unknown exec errorRemove the wrong syntax from

/etc/my.cnfon serving-6192, and restartcorosync, what I would like to see is serving-6192 being started as a new slave but it doesn't:Failed actions: p_mysql:0_start_0 (node=serving-6192, call=4, rc=1, status=complete): unknown errorHere're snippet of the logs which I'm suspecting:

Jul 09 10:46:32 serving-6192 lrmd: [7321]: info: rsc:p_mysql:0:4: start Jul 09 10:46:32 serving-6192 lrmd: [7321]: info: RA output: (p_mysql:0:start:stderr) Error performing operation: The object/attribute does not exist Jul 09 10:46:32 serving-6192 crm_attribute: [7420]: info: Invoked: /usr/sbin/crm_attribute -N serving-6192 -l reboot --name readable -v 0/var/log/cluster/corosync.log: http://fpaste.org/AyOZ/The strange thing is I can starting it manually:

export OCF_ROOT=/usr/lib/ocf export OCF_RESKEY_config="/etc/my.cnf" export OCF_RESKEY_pid="/var/run/mysqld/mysqld.pid" export OCF_RESKEY_socket="/var/lib/mysql/mysql.sock" export OCF_RESKEY_replication_user="repl" export OCF_RESKEY_replication_passwd="x" export OCF_RESKEY_test_user="test_user" export OCF_RESKEY_test_passwd="x"sh -x /usr/lib/ocf/resource.d/percona/mysql start: http://fpaste.org/RVGh/Did I make something wrong?

Reply to @Patrick Fri Jul 13 10:22:10 ICT 2012:

I'm not sure why its failing as your log doesnt contain any messages from the resource script (the ocf_log commands)

I take it all from

/var/log/cluster/corosync.log. Have you got any reason in your mind?/etc/corosync/corosync.confcompatibility: whitetank totem { version: 2 secauth: off threads: 0 interface { member { memberaddr: 192.168.6.192 } member { memberaddr: 192.168.6.38 } ringnumber: 0 bindnetaddr: 192.168.6.0 mcastaddr: 226.94.1.1 mcastport: 5405 } } logging { fileline: off to_stderr: yes to_logfile: yes to_syslog: yes logfile: /var/log/cluster/corosync.log debug: on timestamp: on logger_subsys { subsys: AMF debug: off } } amf { mode: disabled }Also the reason the script works when you run it manually is because you're not setting the variables which tells the script its a master/slave resource. So when it runs, the script thinks its just a single standalone instance.

Thanks. I've appended the following variables to my

~/.bash_profile:export OCF_RESKEY_CRM_meta_clone_max="2" export OCF_RESKEY_CRM_meta_role="Slave"Make it take effect

. ~/.bash_profileand manually start mysql resource:sh -x /usr/lib/ocf/resource.d/percona/mysql start: http://fpaste.org/EMwa/and it works fine:

mysql> show slave status\G *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 192.168.6.38 Master_User: repl Master_Port: 3306 Connect_Retry: 60 Master_Log_File: mysql-bin.000072 Read_Master_Log_Pos: 1428602 Relay_Log_File: mysqld-relay-bin.000006 Relay_Log_Pos: 39370 Relay_Master_Log_File: mysql-bin.000072 Slave_IO_Running: Yes Slave_SQL_Running: Yes Replicate_Do_DB: Replicate_Ignore_DB: Replicate_Do_Table: Replicate_Ignore_Table: Replicate_Wild_Do_Table: Replicate_Wild_Ignore_Table: Last_Errno: 0 Last_Error: Skip_Counter: 0 Exec_Master_Log_Pos: 1428602 Relay_Log_Space: 39527 Until_Condition: None Until_Log_File: Until_Log_Pos: 0 Master_SSL_Allowed: No Master_SSL_CA_File: Master_SSL_CA_Path: Master_SSL_Cert: Master_SSL_Cipher: Master_SSL_Key: Seconds_Behind_Master: 0 Master_SSL_Verify_Server_Cert: No Last_IO_Errno: 0 Last_IO_Error: Last_SQL_Errno: 0 Last_SQL_Error: Replicate_Ignore_Server_Ids: Master_Server_Id: 123 1 row in set (0.00 sec)

Stop MySQL, turn on the debug, restart corosync, and here're the logs: http://fpaste.org/mZzS/

As you can see, nothing but 'unknown error':

1. Jul 13 10:48:06 serving-6192 crmd: [3341]: debug: get_xpath_object: No match for //cib_update_result//diff-added//crm_config in /notify/cib_update_result/diff 2. Jul 13 10:48:06 serving-6192 lrmd: [3338]: WARN: Managed p_mysql:1:start process 3416 exited with return code 1. 3. Jul 13 10:48:06 serving-6192 crmd: [3341]: info: process_lrm_event: LRM operation p_mysql:1_start_0 (call=4, rc=1, cib-update=10, confirmed=true) unknown errorAny thoughts?

UPDATE Sat Jul 14 17:16:03 ICT 2012:

@Patrick: thank you for your tips!

The environment variables that Pacemaker uses as follow: http://fpaste.org/92yN/

As I suspected when chatting with you, the node

serving-6192was started with theOCF_RESKEY_CRM_meta_master_max=1, therefore, due to the following code:/usr/lib/ocf/resource.d/percona/mysql:if ocf_is_ms; then mysql_extra_params="--skip-slave-start" fi/usr/lib/ocf//lib/heartbeat/ocf-shellfuncs:ocf_is_ms() { [ ! -z "${OCF_RESKEY_CRM_meta_master_max}" ] && [ "${OCF_RESKEY_CRM_meta_master_max}" -gt 0 ] }the extra param

--skip-slave-startis included:ps -ef | grep mysqlroot 18215 1 0 17:12 pts/4 00:00:00 /bin/sh /usr/bin/mysqld_safe --defaults-file=/etc/my.cnf --pid-file=/var/run/mysqld/mysqld.pid --socket=/var/lib/mysql/mysql.sock --datadir=/var/lib/mysql --user=mysql --skip-slave-startmysql 19025 18215 1 17:12 pts/4 00:00:14 /usr/libexec/mysqld --defaults-file=/etc/my.cnf --basedir=/usr --datadir=/var/lib/mysql --plugin-dir=/usr/lib64/mysql/plugin --user=mysql --skip-slave-start --log-error=/var/log/mysqld.log --open-files-limit=8192 --pid-file=/var/run/mysqld/mysqld.pid --socket=/var/lib/mysql/mysql.sock --port=3306but SQL thread is still running:

Slave_IO_Running: Yes Slave_SQL_Running: Yesand replication is working fine.

IFS=$'\n' ENV=( $(cat /tmp/16374.env) ); env -i - "${ENV[@]}" sh -x /usr/lib/ocf/resource.d/percona/mysql start: http://fpaste.org/x7xE/I am hitting my head against the wall (: -> |

-

phemmer almost 12 yearsI'm not sure why its failing as your log doesnt contain any messages from the resource script (the ocf_log commands). However the

crm_attributeerror is because the script is broken and is setting a non-existent attribute (it's non-fatal though). Also the reason the script works when you run it manually is because you're not setting the variables which tells the script its a master/slave resource. So when it runs, the script thinks its just a single standalone instance. And since this works, that indicates the error is likely when it tries to go into slave mode. -

Greg Petersen almost 12 yearsupdated my question.

-

phemmer almost 12 yearsI'm not sure why it's not logging. Not saying you did something wrong, just makes no sense. The next step I'd take is to fully duplicate the environment. Edit

/usr/lib/ocf/resource.d/percona/mysqland addenv > /tmp/$$.env. Then have the script fail again, and in the log look forWARN: Managed p_mysql:1:start process XXXX exited with return code 1. Then doIFS=$'\n' ENV=( $(cat /tmp/XXXX.env) ); env -i - "${ENV[@]}" sh -x /usr/lib/ocf/resource.d/percona/mysql start(replacing XXXX with the pid from the log). This will give you the exact same environment the script runs with. -

Greg Petersen almost 12 years@Patrick: updated again.

-

Beekhof almost 12 yearsDo not, under any circumstances, define OCF_RESKEY_ variables in your shell's profile. These are set for you when you configure properties for the resource in the cluster configuration. That you need to set them indicates your config is broken.